NOTE: many of the URLs referenced in this newsletter are no longer active.

At a ceremony on 20 October, Paul Williams welcomed the latest addition to the computing facilities run by DCI for the research community. The three-processor Fujitsu VPP 300 system, procured by CLRC on behalf of the Natural Environment Research Council (NERC), will more than double the vector computing power available to NERC's research community. Its major applications will be atmospheric, ocean and climate systems modelling, and it will also contribute to work in solar-terrestrial physics, mineral physics and seismology. David Drewry, NERC's Director of Science and Technology, looked forward to the results of the work it would make possible, stressing that oceanographic and atmospheric models could now be integrated to capture their combined effects on climate and the wider environment.

Prof Alan O'Neill (Reading) and Dr David Webb (Southampton) described how the additional computing power would benefit their own research areas. Prof O'Neill drew attention to the much greater understanding now emerging of the processes involved in global events such as the current El Niño episode, and of its effect on the climate of more than half of the globe.

The online Tcl/Tk Cookbook, developed by DCI in collaboration with IRMCS, Cranfield University, has been a major success with users worldwide. Tcl (Tool Command Language) is a scripting language with programming features; together with Tk, its associated toolkit, it provides a powerful tool with which even novices can develop simple window-based applications of their own.

Take-up has exceeded our expectations, and this success is reflected in the Cookbook's inclusion in selective Tcl/Tk resource indices, including at least one commercial web resource index. In recognition of this, we were invited to include an award logo in the forthcoming update and in future releases.

The Cookbook tutorial introduces the basics of Tcl/Tk programming through extensively annotated examples, which aim to give an appreciation of Tcl/Tk and of some extensions, such as integrating application code written in FORTRAN and C. In short, the goal of the Cookbook is to provide a suite of simple, commented examples so that novice users can learn quickly by finding examples similar to their own problem. It is aimed largely at readers who are new to developing toolkit-based applications and at those who need to develop simple interfaces in a relatively short time. Its popularity suggests that this goal has been achieved, and has given the authors the incentive to update the material for compatibility with the latest release of Tcl/Tk and to add examples on the object-oriented paradigm and the Common Gateway Interface (CGI).

RAL's contribution to the development of the first version of the Cookbook was supported by the Advisory Group on Computer Graphics (AGOCG), an initiative funded by the Higher Education Funding Councils and the Research Councils to provide a national focus within the UK higher education community on all aspects of visual information processing.

Users interested in reading or using the Cookbook should visit: www.dci.clrc.ac.uk/Activity.asp?TclTk

When shift work ceased and computing moved away from mainframes to a client/server regime, we looked for a way to help staff detect faults automatically as they occurred and to point accurately to the faulty item. We learned that CERN staff were developing a system monitoring package that produces a hierarchical picture of all defined equipment and its underlying services, so we adopted a flexible, two-pronged approach to automation: the CERN package SURE (StatUs Report Environment), interfaced to a Computer Associates product called AUTOMATE/XC.

SURE monitors the various hardware and software components of all the equipment known to it and reports any failures to AUTOMATE. AUTOMATE provides a rule-based system in which predefined conditions are checked and appropriate actions taken. It can perform some low-level error correction and, if necessary, call out Operations staff via a bleeper system during the silent hours. SURE works by listening to the monitored systems, which can either report particular error conditions (eg filesystem full) or simply send a heartbeat to say that they are still alive. SURE reacts both to alarms and to the absence of a heartbeat. Every alarm raised has an associated description file that defines the problem and gives hints towards a solution.
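The heartbeat-and-alarm scheme described above can be illustrated with a short Python sketch. It is not SURE or AUTOMATE code: the `Monitor` class, the `RULES` table, the condition strings and the timeout value are all hypothetical, chosen only to show how explicit error reports and missing heartbeats can both raise alarms that a rule table then maps either to an automatic fix or to a call-out.

```python
from dataclasses import dataclass, field

# Hypothetical rule table in the spirit of AUTOMATE: conditions with an
# automatic corrective action are handled directly; anything else is
# escalated to a call-out of Operations staff.
RULES = {
    "filesystem full": "purge scratch files",   # hypothetical corrective action
}
CALL_OUT = "page operator"


@dataclass
class Monitor:
    """Toy SURE-style listener (hypothetical; not the real SURE package)."""
    heartbeat_timeout: float                    # seconds allowed between heartbeats (assumed)
    last_seen: dict = field(default_factory=dict)
    alarms: list = field(default_factory=list)

    def heartbeat(self, system: str, now: float) -> None:
        """Record that `system` reported it is still alive at time `now`."""
        self.last_seen[system] = now

    def report_error(self, system: str, condition: str) -> None:
        """Record an explicit error report such as 'filesystem full'."""
        self.alarms.append((system, condition))

    def check(self, now: float) -> None:
        """Raise a 'no heartbeat' alarm for each system that has gone silent."""
        for system, seen in self.last_seen.items():
            alarm = (system, "no heartbeat")
            if now - seen > self.heartbeat_timeout and alarm not in self.alarms:
                self.alarms.append(alarm)


def dispatch(alarms):
    """Map each alarm to an action: an automatic fix if a rule exists, else a call-out."""
    return [(system, RULES.get(condition, CALL_OUT)) for system, condition in alarms]
```

A real deployment would also attach the per-alarm description file mentioned above; that is omitted here.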

A summary of this information is available to users on the web at www.cc.rl.ac.uk/cgi-bin/dci/sure. It shows current problems and their status, and whether the affected equipment is covered by a 24-hour agreement. If it is, users can expect Operations staff to be on site and attempting to restore the service within 2 hours of the failure. If further help is required from staff who are not on 24-hour call-out, users will be informed through this web page, which platform managers can also update to keep users informed of progress.

Operations staff on call who have been alerted by AUTOMATE can dial in remotely to carry out further diagnostics or to check the status of equipment. Experience has shown, however, that in most cases when an operator receives such a call, 'human intervention' is needed because all possible automatic corrective actions have already failed.

As part of the Daresbury Laboratory Open Days, we (the Advanced Interactive Systems Group at RAL) were challenged to demonstrate Virtual Reality (VR) to the VIPs, schoolchildren and general visitors attending.

The best-known aspect of virtual reality is the presentation of pictures in 3D, which requires two simultaneous streams of images, one for the left eye and one for the right. These streams can be presented to the viewer in a number of ways; for the Daresbury exhibition we used the same method as in the VR Centre at RAL: polarising filter glasses, with a differently polarised filter for each eye.

First we captured the images from the main VR computer at RAL onto two Betacam tapes and, after a lot of editing, had two tapes (left eye and right eye), each holding five sequences of about 4 minutes.

The sequences showed the layout and proposed assembly sequence for the Atlas End Cap Toroid (part of the Atlas experiment on the LHC at CERN), an animated view around and inside the MAPS instrument due to be built in the ISIS experimental hall at RAL, a mystery tour around the VisLab, and a demonstration, from Nic Harrison and Phil Lindan at Daresbury, of the power of VR to help visualise atomic activity in the interaction of water with titanium dioxide.

For the exhibition we built a fairground booth, with the screen where the coconuts would normally be and a shelf concealing the video projectors that threw the left- and right-eye images onto the special silver screen.

We were astonished by the interest shown in the booth. We had assumed that most of the younger schoolchildren would, at best, stay interested for part of one sequence; in fact many stayed for a full 22-minute cycle. The official photographer had no difficulty getting shots of a row of faces wearing the bright yellow polarising glasses, and at one point we had a full line of Mayors, all in their mayoral chains: definitely one for the scrap-book. Not captured on film were the many children reaching out to grasp the atoms in the titanium dioxide sequence, so close did the atoms seem to them.

We are now looking at alternative ways of achieving a more permanent display (using disks with MPEG sequences instead of tapes). For the RAL Open Days in 1998 we will be able to use the VR computers to give interactive demonstrations in a new VR Centre, so that new applications can be shown live rather than from tape. We are also looking at ways of producing a portable version of the Daresbury demonstration.

Many parallel machines currently in use are based on a Multiple Instruction Multiple Data (MIMD) form of parallelism in which the memory of the machine is distributed over a network of processors. As a consequence, the program and its associated data must be distributed between these processors. In finite volume and finite element methods this raises the problem of how to distribute large unstructured grids and meshes initially, and how to redistribute them subsequently.

The goal of partitioning methods is to minimise the cost of the communication needed during a parallel solution while keeping the computational load on each processor roughly equal. In many problems, load balance corresponds simply to distributing the grid points or finite elements equally between the processors, and the communication cost to the number of nodes on the sub-domain interfaces.
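The two quantities in the paragraph above can be made concrete with a small Python sketch. It assumes a mesh represented as a graph (a dict mapping each node to the set of its neighbours) and a partition given as a dict from node to sub-domain number; these representations and the function names are illustrative assumptions, not RALPAR's interface. Load balance is measured as the ratio of the largest sub-domain to the ideal size, and communication cost both as the number of interface nodes and as the edge cut.

```python
from collections import Counter


def load_imbalance(part, nparts):
    """Largest sub-domain size divided by the ideal (average) size; 1.0 means perfect balance."""
    sizes = Counter(part.values())
    ideal = len(part) / nparts
    return max(sizes[p] for p in range(nparts)) / ideal


def interface_nodes(adj, part):
    """Nodes with at least one neighbour in a different sub-domain."""
    return {v for v, nbrs in adj.items() if any(part[u] != part[v] for u in nbrs)}


def edge_cut(adj, part):
    """Number of edges whose two end nodes lie in different sub-domains."""
    # Each undirected edge appears twice in the adjacency dict, hence the halving.
    return sum(1 for v, nbrs in adj.items() for u in nbrs if part[u] != part[v]) // 2
```

For a chain of six nodes split three and three, the imbalance is 1.0, the interface consists of the two nodes either side of the split, and the edge cut is 1.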

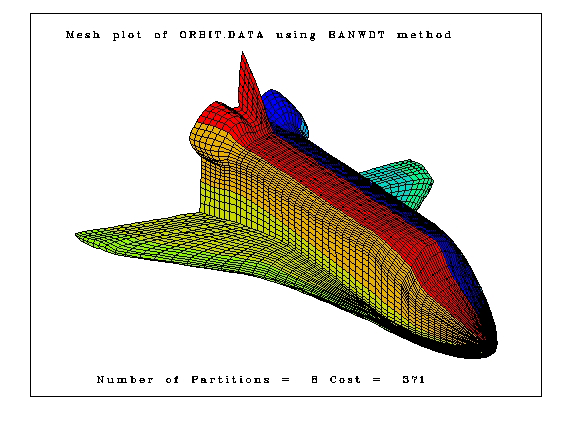

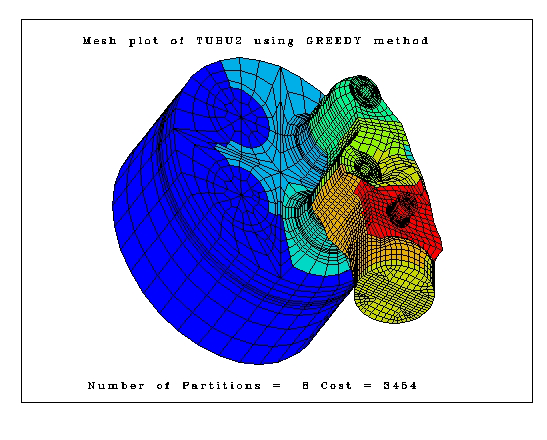

The Particle Partitioning Methods for Unstructured Meshes (PPMUM) project, undertaken by DCI's Mathematical Software Group at RAL and funded by EPSRC, has been developing and implementing mesh partitioning algorithms, which are included in the RALPAR program. PPMUM has collected a large number of single-level and multilevel methods and compared them using simple computational models of parallel applications.

When comparing the efficiency of a parallel computation with the equivalent serial method, it is only fair to include all the preprocessing costs of setting up the parallel version. The best partitioning methods, such as spectral bisection, are very expensive for large meshes, and their preprocessing cost can cancel out the gain from reduced communication time.

Multilevel partitioning methods greatly reduce the time required to split up the mesh while still giving results of similar quality to spectral bisection. They do this by condensing the mesh through the amalgamation of neighbouring elements: if each element in a million-element mesh is merged with a neighbour, the result is a new mesh of half a million elements. This process is repeated until the mesh is small enough to be partitioned quickly, even with spectral bisection. The partition of the smallest mesh is then transferred back up through the levels to the full mesh. To get good results, the partition boundaries must be refined at each level, but this can be done relatively cheaply. RALPAR allows multilevel partitioning to be used in conjunction with a range of methods for splitting the smallest graph.
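The coarsen, partition and project cycle described above can be sketched in a few dozen lines of Python. This is a minimal illustration, not RALPAR's implementation: it works on a graph given as an adjacency dict, coarsens by greedily merging each vertex with one unmatched neighbour while summing vertex weights, bisects the coarsest graph by simple breadth-first growth (standing in for spectral bisection), and omits the per-level boundary refinement that the text notes is needed for good results. All function names are hypothetical.

```python
from collections import deque


def coarsen(adj, weight):
    """Merge each vertex with its first unmatched neighbour (greedy matching).

    Returns the coarse adjacency, coarse vertex weights and the fine-to-coarse map.
    """
    fine_to_coarse = {}
    next_id = 0
    for v in sorted(adj):
        if v in fine_to_coarse:
            continue
        fine_to_coarse[v] = next_id
        for u in sorted(adj[v]):
            if u not in fine_to_coarse:
                fine_to_coarse[u] = next_id     # v and u become one coarse vertex
                break
        next_id += 1
    coarse = {c: set() for c in range(next_id)}
    cweight = {c: 0 for c in range(next_id)}
    for v, nbrs in adj.items():
        cv = fine_to_coarse[v]
        cweight[cv] += weight[v]                # coarse weight = number of fine vertices merged
        for u in nbrs:
            cu = fine_to_coarse[u]
            if cu != cv:
                coarse[cv].add(cu)
    return coarse, cweight, fine_to_coarse


def grow_bisection(adj, weight):
    """Stand-in for spectral bisection: breadth-first growth until half the weight is taken."""
    total = sum(weight.values())
    order, seen = [], set()
    for start in sorted(adj):                   # outer loop copes with disconnected graphs
        if start in seen:
            continue
        seen.add(start)
        queue = deque([start])
        while queue:
            v = queue.popleft()
            order.append(v)
            for u in sorted(adj[v]):
                if u not in seen:
                    seen.add(u)
                    queue.append(u)
    part, taken = {}, 0
    for v in order:
        part[v] = 0 if taken < total / 2 else 1
        taken += weight[v]
    return part


def multilevel_bisect(adj, min_size=4):
    """Coarsen until small, bisect the coarsest graph, then project back level by level."""
    weight = {v: 1 for v in adj}
    levels = []
    while len(adj) > min_size:
        coarse, cweight, mapping = coarsen(adj, weight)
        if len(coarse) == len(adj):             # nothing could be merged; stop coarsening
            break
        levels.append(mapping)
        adj, weight = coarse, cweight
    part = grow_bisection(adj, weight)
    for mapping in reversed(levels):
        # Project: each fine vertex inherits its coarse vertex's sub-domain.
        # (The boundary refinement a real partitioner performs here is omitted.)
        part = {v: part[c] for v, c in mapping.items()}
    return part
```

Carrying the vertex weights through the levels is what keeps the final partition balanced: each coarse vertex counts for all the fine vertices merged into it.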

The results of these comparisons will be published over the next few months.

Figure 1 illustrates the partitioning produced by RALPAR on a mixed finite element mesh of 26571 nodes and 23446 elements, divided into eight sub-domains using Farhat's greedy method. Figure 2 shows the partitioning of a surface mesh on a space orbiter containing 6344 nodes and 6171 quadrilateral patches.
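Greedy methods of this kind grow sub-domains one at a time. The Python sketch below is a simplified, vertex-based variant written for illustration only; it is neither Farhat's original element-based algorithm nor RALPAR's code. Each sub-domain is seeded at the unassigned vertex with the fewest unassigned neighbours (which tends to lie on the edge of the region still to be partitioned) and grown breadth-first until it holds its share of the vertices. The adjacency-dict representation and the function name are assumptions.

```python
from collections import deque


def greedy_partition(adj, nparts):
    """Grow sub-domains one at a time, breadth-first, to about len(adj)/nparts vertices each.

    Simplified, vertex-based illustration of greedy graph growing (hypothetical helper).
    """
    n = len(adj)
    part = {}
    for p in range(nparts):
        # Cumulative targets keep the sub-domain sizes nearly equal;
        # the last sub-domain takes everything that remains.
        if p == nparts - 1:
            target = n - len(part)
        else:
            target = round((p + 1) * n / nparts) - len(part)
        size = 0
        while size < target:
            remaining = [v for v in adj if v not in part]
            # Seed at the unassigned vertex with fewest unassigned neighbours (ties: lowest id).
            start = min(remaining, key=lambda v: (sum(u not in part for u in adj[v]), v))
            queue, queued = deque([start]), {start}
            # If this front runs dry before the target (disconnected remainder),
            # the outer loop reseeds from another unassigned vertex.
            while queue and size < target:
                v = queue.popleft()
                part[v] = p
                size += 1
                for u in sorted(adj[v]):
                    if u not in part and u not in queued:
                        queued.add(u)
                        queue.append(u)
    return part
```

A method like this needs only one breadth-first sweep per sub-domain, so it is cheap, although it makes no direct attempt to minimise the interface size.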

The author would be very interested in any comments on the software and its methods.

The RALPAR software is available to UK research groups through the Mathematical Software Group's WWW pages at www.dci.clrc.ac.uk/Group.asp?DCICSEMSW

Formal methods of software development offer the opportunity to improve software quality, but their uptake has been hindered by the diversity of competing methods. The Vienna Development Method (VDM) and the B Method are two formal methods currently used in industry and supported by mature commercial tools. The methods are essentially similar, but the tools for VDM and B differ significantly in their support for static analysis, proof, animation and code generation. This complementary functionality could benefit users in both communities, but because of the differences between the methods, interoperation is not currently possible.

SPECTRUM brings together DCI's Well Founded Systems Unit and suppliers and users of VDM and B toolkits to investigate the feasibility of integrating support for the two methods. Such integration would allow a design expressed in one notation to switch to the other for a time, exploiting the strengths of each method in different phases of the design process. Furthermore, the facilities of both tools would be available from either notation.

The integration approach adopted in SPECTRUM centres on identifying the commonalities between the VDM and B notations by developing translations between them. From this common core emerge techniques for modularisation and proof construction that support common analysis and automation tasks.

The user partners in SPECTRUM represent a cross-section of safety-critical applications in avionics, terrestrial transportation, satellite control and nuclear power. Initial case studies in these application areas show the potential of using the combination of methods within a commercial software development process. Advantages in terms of development cost and product quality will be gained from using the SPECTRUM technology.

The tool suppliers are assessing the commercial case for the provision of the required functionality. The long term aim of the SPECTRUM project is to integrate the two toolkits so that the benefits of both can be gained from either.

For further information, please contact Juan Bicarregui or visit www.dci.clrc.ac.uk/Activity.asp?SPECTRUM

This is a final reminder of the 1997 Machine Evaluation Workshop, which will be held on 11-12 November at Daresbury Laboratory as part of EPSRC's Distributed Computing Support Programme. The Workshop is now established as the leading national event dedicated to distributed high-performance scientific computing. Its principal objective is to encourage close contact between the research communities of the Mathematics, Chemistry, Physics and Materials grant lines of EPSRC and the major vendors of workstations, software and peripherals.

You are invited to attend this year's workshop, which will follow a format similar to last year's. About a dozen major vendors will give 30-minute presentations on topics such as hardware, compilers, graphics, storage and networking. In past years the audience has much appreciated the technical content of these talks.

A very important component of the workshop is the exhibition, together with the availability of systems for benchmarking and evaluation. Vendors usually give delegates Internet access to the systems, and the opportunity to evaluate products helps grant holders make their decisions. We hope to make loaned systems available from 3 November onwards.

Please contact Robert Allen or Martyn Guest; for further information or registration, please contact David Emerson.

Our WWW page will also carry up-to-date information; see: www.dl.ac.uk/TCSC/DisCo/Events/workshop