NOTE: many of the URLs referenced in this newsletter are no longer active.

I would like to let you know about two "forthcoming events". The first is that CCLRC will welcome a new Chairman and CEO from 1 April 1998. He is Dr A R C (Bert) Westwood FEng FInstP, a materials scientist who has had a distinguished scientific and managerial career in the USA with the Martin Marietta Corporation, now Lockheed Martin, and was most recently Vice President Research and Exploratory Technology at the Sandia National Laboratories in Albuquerque, New Mexico. He is the winner of many prestigious medals and awards. Secondly, CLRC will be holding its Open Days at its Rutherford Appleton Laboratory site between 26 June and 1 July. We hope that many of you will be able to call in to see some of the work of the Central Laboratory.

For information on the RAL Open Days see: http://www.cclrc.ac.uk/Opendays98/

The superscalar computing service that CLRC provides to EPSRC has been a great success and since the award of large amounts of computing resource to the UK Computational Chemistry Working Party (UKCCWP - ATLAS issue 7) it has been clear that the existing six processor DEC AlphaServer 8400 would soon become overloaded.

In November 1997 a decision was made to upgrade the 8400 by the addition of a second 8400 with four CPUs in a closely coupled configuration using Digital's TruCluster software. This route has the advantage of being very cost effective, since an older Digital 7000 chassis in the Atlas building was no longer required and could be upgraded to an 8400.

In the period since the original 8400 was bought the Alpha processor technology has advanced considerably and the new machine (known as Magellan to partner the older Columbus) has 625MHz processors compared with the original 300MHz parts. The increase in application performance is substantial but somewhat less than the factor of 2.08 from the clock speeds since the memory bandwidth does not increase in the same ratio. Typically code sections such as matrix multiplies and FFTs gain almost in the ratio of the clock speeds while an "average" ratio is around 1.5 - 1.7.
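The gap between the clock ratio and the observed gain can be illustrated with a simple Amdahl-style model. This is only a sketch under a stated assumption that is not in the article: a fraction of the original run time scales with the clock speed, while the remainder (taken here to be memory bound) does not speed up at all. The function names are hypothetical.

```python
def clock_ratio(new_mhz, old_mhz):
    """Ratio of the new clock speed to the old one."""
    return new_mhz / old_mhz


def modelled_speedup(clock_bound_fraction, ratio):
    """Speedup when only `clock_bound_fraction` of the old run time
    shrinks by `ratio`; the rest is assumed not to improve (assumption)."""
    return 1.0 / ((1.0 - clock_bound_fraction) + clock_bound_fraction / ratio)


def implied_clock_bound_fraction(observed_speedup, ratio):
    """Invert the model: the fraction of old run time that must have been
    clock bound to give `observed_speedup`."""
    return (1.0 - 1.0 / observed_speedup) / (1.0 - 1.0 / ratio)


if __name__ == "__main__":
    r = clock_ratio(625, 300)  # the 625MHz and 300MHz parts quoted above
    print(f"clock ratio: {r:.2f}")
    for s in (1.5, 1.7):
        f = implied_clock_bound_fraction(s, r)
        print(f"observed speedup {s}: about {f:.0%} of run time clock bound")
```

Under this model, a code that is entirely clock bound gains the full clock ratio, which is consistent with the remark that matrix multiplies and FFTs gain almost in the ratio of the clock speeds.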

Columbus and Magellan are connected with Digital's "memory channel", which runs at 100 MBytes/sec in each direction and allows very efficient sharing of machine resources. The present configuration gives Columbus and Magellan a set of shared home filesystems while each has its own temporary filespace for maximum performance. Message passing parallel programs using MPI or PVM protocols can run very well across the memory channel, giving the Columbus/Magellan system an impressive peak performance of 8.6 Gflops, greater than either the 32 processor J90 (6.4 Gflops) or the 3 processor NERC Fujitsu VPP300 (6.6 Gflops).
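The quoted peak figure can be reproduced from the processor counts and clock speeds given earlier. The sketch below assumes that each Alpha processor can complete two floating point operations per clock cycle; that value is an assumption introduced here, not a figure from the article, and the names are hypothetical.

```python
# Assumption (not stated in the article): 2 floating point operations
# per clock cycle per processor.
FLOPS_PER_CYCLE = 2


def peak_mflops(cpus, clock_mhz, flops_per_cycle=FLOPS_PER_CYCLE):
    """Theoretical peak in Mflops: processors x clock (MHz) x flops per cycle."""
    return cpus * clock_mhz * flops_per_cycle


columbus = peak_mflops(cpus=6, clock_mhz=300)   # original six processor 8400
magellan = peak_mflops(cpus=4, clock_mhz=625)   # new four processor 8400
combined_gflops = (columbus + magellan) / 1000

if __name__ == "__main__":
    print(f"Columbus: {columbus / 1000} Gflops")
    print(f"Magellan: {magellan / 1000} Gflops")
    print(f"Combined: {combined_gflops} Gflops")  # 8.6 under the assumption above
```

A peak figure of this kind is an upper bound; sustained application performance depends on memory bandwidth and on how well a job spreads across both machines.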

Together with the addition of Magellan to the service, the memory on Columbus has been increased to 4 GBytes. Magellan, currently at 2 GBytes, will hopefully expand to 4 GBytes in the second quarter of 1998.

The user perspective of the superscalar service is very little changed. Users still log in to Columbus and see their normal filestore. New batch queues give access to the additional CPU power of Magellan, and a four CPU parallel job stream will be available on Magellan to maximise the single job performance for the more demanding applications. The extra processing throughput will go towards doubling the allocation to the UKCCWP, and new EPSRC grant applications to use the additional capabilities of Columbus/Magellan are welcome.

See http://wwwhpc.rl.ac.uk/Columbus for further grant information.

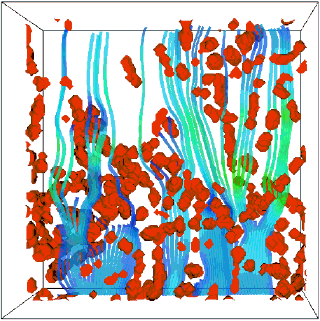

Imagine immersing yourself in a three-dimensional visual representation of your data. For the first time, you are able to study at close quarters effects which could hold the key to a new understanding of the behaviour you are modelling. This is the capability which the VIVRE project will realise.

VIVRE is a new project involving the Advanced Interactive Systems Group in DCI which is supported by the EU as part of its High Performance Computing and Networking Programme. The project will combine the presentational power of today's best data visualization software with the real time immersive interaction provided by a state-of-the-art virtual reality system. Together these techniques have the potential to revolutionise the way we explore data to gain new insights into a wide range of industrial and business processes. By placing the user "inside" their data, subtle features can be observed and new relationships previously hidden in the data can be revealed.

The project will demonstrate commercial benefits by applying these developments to a range of business applications proposed by the partners. These include:

The partners in VIVRE (and their home countries) are Air Liquide (F), Bergen Software Services (N), British Nuclear Fuels (UK), CLRC Rutherford Appleton Laboratory (UK), Labein (E), NAG (UK), Tessella Support Services (UK), Tethys (F) and Unilever (UK). Tessella is the project co-ordinator. Division (UK) is also contributing as the supplier of dV/Reality, the virtual reality system being used by the project. The project will focus on visualization systems already being used by the project partners, namely AVS and IRIS Explorer, the latter marketed by NAG. VIVRE is one of the projects managed by the ESCALATE Technology Transfer Node at the Southampton Parallel Applications Centre.

RAL are the principal technology developers within the project with responsibility for the design and implementation of the integrated software framework which brings together AVS, IRIS Explorer and dV/Reality into a real time, immersive data visualization environment. This approach makes maximum use of the existing investment in visualization software and expertise by the user partners. The intention is to make the VIVRE environment available on both Silicon Graphics and PC/NT systems. The software requirement specification has been derived from a comprehensive set of user requirements provided by the industrial partners. The resulting system will be evaluated using application problems such as those listed above and the results widely disseminated through Europe to provide support to others wishing to exploit this technique.

For more information see: http://www.dci.clrc.ac.uk/Activity.asp?VIVRE, http://www.tessella.co.uk/vivre/Page_001.htm, http://www.pac.soton.ac.uk/projects/escalate/escalate.html

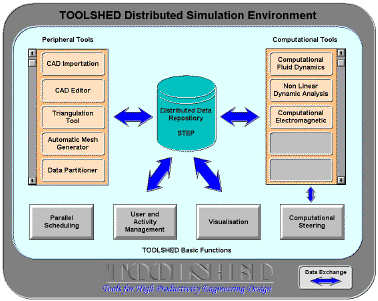

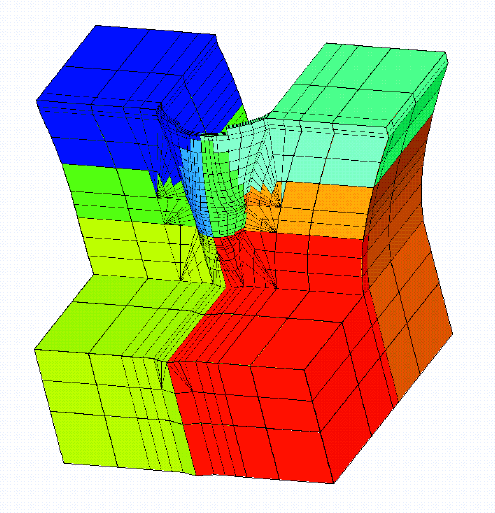

The availability of High Performance Computing (HPC) Technology is making simulation available as a predictive tool in the engineering design cycle. Such simulations can help design complex components such as combustion chambers, turbomachines, large civil engineering structures, aircraft, cars and trains through computational fluid dynamics, computational electromagnetics or computational structural and vibrational analysis.

The Toolshed project seeks to address the reasons why in the past industrial users were deterred from using High Performance Computing tools during the design process. These reasons are:

The Toolshed project is developing a highly productive interactive open environment supporting High Performance Computing simulation applied to engineering problems. Toolshed combines recent results in HPC for the development of practical design tools in a common framework which has been designed to enable new software packages to be integrated flexibly and with low effort (Figure 1).

This is achieved by making use of standards in distributed computing and in data management such as STEP (Standard for Exchange of Product Data) and the data modelling language Express. The usefulness of the environment is being demonstrated in the project by using three examples of industrial design:

Toolshed is a collaborative project with partners from five European countries, supported by the EC. The partners (with country) are Aerospatiale (F), Bertin (F), CISE (I), ENEL (I), Numeca (B), PAC (UK), Ruston Diesels (UK), SINTEF (N) and RAL (UK).

The contribution of DCI is building on experience acquired during earlier projects like IDENTIFY and Midas and consists of:

For more details of the Toolshed project, please see our web pages at: http://www.dci.clrc.ac.uk/Activity.asp?TOOLSHED

Support for UK users of High Performance Computers (HPC) is one of the major activities of the Central Laboratory of the Research Councils (CLRC) through DCI. Over the past decade HPC has demonstrated the ability to model and predict accurately a wide range of physical properties and phenomena. Many of these have had an important impact in contributing to wealth creation and improving the quality of life through developing new products and processes with greater efficacy, efficiency or reduced harmful side effects, and contributing to our ability to understand and describe the world around us.

The tide in computational science and engineering now is to study more complex and larger systems and to increase the realism and detail of the modelling. In their report, Research Requirements for High Performance Computing (SERC, September 1992), a scientific working group chaired by Prof C R A Catlow of the Royal Institution of Great Britain presented a scientific case for future supercomputing requirements across many of the most exciting and urgent areas of relevance to the missions of the UK Research Councils. The report highlighted a number of fields at the forefront of science in which computational work would play a central role. It was noted that to make progress in these most computationally demanding and challenging problems, increases of two to three orders of magnitude in computing resources such as processing power, memory and storage rates and volume would be required. The report led ultimately to the procurement of a Cray Research Inc T3D system which went into operation at the University of Edinburgh in the summer of 1994.

The High Performance Computing Initiative (HPCI) was set up in November 1994 to support the efficient and effective exploitation of the T3D, and future generations of systems, by consortia working in the "frontier" areas of computational research.

The Cray T3D, now comprising 512 processors and a total of 32 GBytes of memory, represented a very significant increase in computing power, allowing simulations to move forward on a number of fronts. The HPCI Centre at CLRC Daresbury Laboratory, with assistance from the other Centres at the Universities of Edinburgh and Southampton, organised the first National HPCI Conference to assess the success of this service and indicate some scientific requirements which may be met in the coming 3 years.

The High Performance Computing Initiative Conference took place at the Manchester Conference Centre Renold's complex on the UMIST campus on 12-14 January 1998. 140 people attended, including representatives of most of the companies active in HPC who, with NERC and EPSRC, all provided generous sponsorship enabling the event to take place.

The ambitious programme had 8 plenary talks and 40 speakers presenting submitted talks in parallel sessions and a further poster session which eventually had some 15 papers. Thus we were able to have information about all the HPCI-supported consortia and other relevant UK projects.

The conference was also timely because the procurement procedure, HPC'97, for the next UK national supercomputer facility is currently nearing completion. CLRC's involvement in a strong consortium together with IBM and Fujitsu (the GIF Consortium) bidding via the Private Finance Initiative (PFI) was made public at the HPCI Conference.

All presentations from the Conference are to be included in a book published by the Plenum Publishing Company, which should appear this summer. This will act as a record of the scientific achievements of three years' use of the Cray T3D at EPCC and also indicate some scientific requirements which may be met in the coming three years.

For the full version of this article see: http://www.dci.clrc.ac.uk/Publications/ATLAS/Issue11/hpc1.html. For more information on the project see: http://www.computer50.org. For more information about HPCI see http://www.dci.clrc.ac.uk/Activity.asp?HPCI.

W3C-LA Technical Workshop Series: The Web of the Future

A one day technical workshop is planned at CLRC, Rutherford Appleton Laboratory (RAL) on Monday, 27 April 1998. This workshop has been designed to highlight the new tools and techniques that will make up the "Web of the Future".

Further details can be obtained from the W3C Office at RAL (e-mail w3c-ral@inf.rl.ac.uk subject: w3cla workshop 27/4/98).