Paul Nelson from the Atlas Computer Laboratory attended the Conference and presented two papers.

The goal this year was to ensure that the effort which each member put forth in support of UAIDE had a positive result. This required that every operation performed at the national, regional, and local level be reviewed. This review indicated that certain action must be taken concerning our legal structure, organization structure, publications, local chapters, regional meetings, and the annual meeting. This goal brought about significant individual effort and tremendous team effort, and helped to identify the problem UAIDE faces in the future.

UAIDE was incorporated in the State of California as a non-profit organization with a non-exempt tax status. This incorporation provides the officers of UAIDE with legal protection in those areas where they have to enter into contractual agreements. It provides DatagraphiX with a better legal environment for subsidizing UAIDE's effort, and finally provides a definite procedure for disposal of material assigned to UAIDE by its users (UAIDE Library).

Several changes were made to the organizational structure of UAIDE to make it more functional. The first major change was the separation of the combined Secretary-Treasurer office into two distinct offices and responsibilities. This has been extremely effective in providing a much more balanced work load for the two offices. A corresponding secretary position was also established and is filled by appointment by the President. This addition allows the secretary to function as an officer of the organization rather than spending an inordinate amount of time doing clerical work. This has proven effective in getting more timely minutes and newsletter publication. Finally, three distinct special interest groups (Scientific, Business, and Animation) were established in place of the standing committees: Hardware, Software, Advanced Design, Application, Business Systems, and Computer Animation. This change was intended to help the user identify himself with a specific area of effort. It also helped us to better organize the annual meeting and to add depth of participation to the organization.

The format and publication date of the UAIDE newsletter were modified. The format was changed to one using excerpts from the minutes of board meetings, DatagraphiX news, Publications concerning COM, and specific messages from the UAIDE President. This change was well received. Publication date was set at a bi-monthly cycle.

An attempt was made to have the proceedings available at the annual meeting. This failed, although a target date of January 1, 1970 has been set for publication, which would be a significant improvement.

Two UAIDE local chapters are now active. One is in the Los Angeles area and the other in Michigan. Commitments have been made for the development of local chapters in the Washington, D. C., New York City, and Chicago areas. It is felt that only through local chapters will UAIDE be able to have the input of those who are closest to doing actual application work using DatagraphiX COM equipment.

A business oriented regional meeting was held in Washington, D. C. with an attendance of 100 users. This consisted entirely of paper presentations for an entire day and was well received by all attendees. Almost all of the papers given were presented again at the annual meeting and will be published in the proceedings of the Annual Meeting. Two scientific regional meetings were held: one in Washington, D. C. and the other in the L. A. area. Attendance was approximately 30 at both.

Jim Stubbs, UAIDE DatagraphiX representative, spent considerable time convincing DatagraphiX senior management of the potential of UAIDE. This effort resulted in a mandate from Don Mitchell to his staff to use UAIDE wherever possible to ensure that DatagraphiX goals were in tune with user needs.

The effort of Gary Clickard, UAIDE board member from Ford Motor Co., in preparing a three year plan for the expansion of UAIDE activity to meet the expanding needs of DatagraphiX and its users will serve as an excellent guideline for the coming year.

John Logan, UAIDE Executive Secretary, through his efforts in preparing a handbook for his position, the user catalog, and the annual meeting material, plus his responsibility for proceedings publication, has worked harder than any other individual in the organization to ensure UAIDE's goals are reached.

The biggest problem that lies ahead is avoiding the annual update of the objectives of the organization and concentrating on achieving the objectives of UAIDE. The minutes of the past years indicate that a large percentage of time has been spent trying to figure out what UAIDE was supposed to be doing rather than actually achieving a specific end product.

Jim Splear

UAIDE President - 1970

Two items concerning the financial organization of UAIDE may be of interest to the readers:

Attached is a report summarizing the income and disbursement transactions during the year 1970. The reports of both the UAIDE Group and UAIDE are included.

D. T. Rumford, Treasurer

| | UAIDE Group, 1 January - 2 June 1970, Total ($) | UAIDE (as incorporated), 3 June - 31 December 1970, Total ($) |
|---|---|---|
| Cash (beginning of period) | 6960.08 | 6313.01 |
| Receipts | | |
| DatagraphiX | 6000.00 | 3000.00 |
| Proceedings | 90.26 | 463.00 |
| Other Publications | 8.00 | 32.00 |
| UAIDE Annual Meeting | 0.00 | 4930.00 |
| Other Registrations | 490.00 | 1055.00 |
| Total Receipts | 6588.26 | 9480.00 |
| Disbursements | | |
| Telephone | 1482.49 | 1276.13 |
| Postage and Office Supplies | 15.00 | 108.40 |
| Travel | 4062.71 | 4752.85 |
| Board Meals | 346.86 | 0.00 |
| Clerical Support | 405.08 | 1059.47 |
| Chapter Promotion | 153.20 | 550.00 |
| Regional Meeting Promotion | 624.86 | 2104.64 |
| UAM Expenses | 20.13 | 4230.26 |
| Miscellaneous | 125.00 | 490.71 |
| Total Disbursements | 7235.33 | 14572.46 |
| Cash (end of period) | 6313.01 | 1220.55 |
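The statement can be cross-checked mechanically: for each period, closing cash should equal opening cash plus total receipts minus total disbursements. The following is a minimal sketch of that check, not part of the Treasurer's report. The variable and function names are illustrative, and the Chapter Promotion entry printed as "153,20" is assumed to mean 153.20.

```python
from decimal import Decimal as D

# Figures transcribed from the 1970 statement.
# Each tuple is (UAIDE Group, UAIDE as incorporated).
opening_cash = (D("6960.08"), D("6313.01"))

receipts = {
    "DatagraphiX": (D("6000.00"), D("3000.00")),
    "Proceedings": (D("90.26"), D("463.00")),
    "Other Publications": (D("8.00"), D("32.00")),
    "UAIDE Annual Meeting": (D("0.00"), D("4930.00")),
    "Other Registrations": (D("490.00"), D("1055.00")),
}

disbursements = {
    "Telephone": (D("1482.49"), D("1276.13")),
    "Postage and Office Supplies": (D("15.00"), D("108.40")),
    "Travel": (D("4062.71"), D("4752.85")),
    "Board Meals": (D("346.86"), D("0.00")),
    "Clerical Support": (D("405.08"), D("1059.47")),
    # Assumption: the source prints "153,20"; read here as 153.20.
    "Chapter Promotion": (D("153.20"), D("550.00")),
    "Regional Meeting Promotion": (D("624.86"), D("2104.64")),
    "UAM Expenses": (D("20.13"), D("4230.26")),
    "Miscellaneous": (D("125.00"), D("490.71")),
}


def column_total(items, col):
    """Sum one period's column (0 or 1) across all line items."""
    return sum((amounts[col] for amounts in items.values()), D("0"))


def closing_cash(col):
    """Opening cash plus receipts minus disbursements for one period."""
    return (opening_cash[col]
            + column_total(receipts, col)
            - column_total(disbursements, col))


for col, label in enumerate(("UAIDE Group", "UAIDE (as incorporated)")):
    print(label,
          column_total(receipts, col),
          column_total(disbursements, col),
          closing_cash(col))
```

Decimal arithmetic is used so that the cent-level totals compare exactly. Under these readings, the closing cash of the first period matches the opening cash of the second.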

The objectives of the Business Systems Committee for calendar year 1970 were as follows:

With the above goals established, here is what was accomplished during calendar year 1970.

The following objectives have been established for the Business Systems Committee in Calendar year 1971.

The Southern California Local Chapter was conceived and started in 1970. It was an exciting year for UAIDE in the Local Chapter. The dinner meetings are monthly and have had an average attendance of 25. The membership includes 25 members from 11 different companies.

The officers of the Local Chapter are:

President Don Stanley Lockheed-California Company, Burbank

Treasurer Hans Lindblom Naval Weapons Laboratory, China Lake

Program Chairman George Baum McDonnell-Douglas, Huntington Beach

Jim Tsukida Pacific Missile Range, Point Mugu

Secretary Steve Popelka DatagraphiX, Santa Monica

Gary Haas DatagraphiX, Santa Monica

Arrangements Jeannine Lamar Rand Corporation, Santa Monica

One of the events of 1970 was the establishment of an official address for UAIDE. All of the officers, board members, committee chairmen, and representatives of UAIDE can be addressed by name or title through the following address:

UAIDE P. O. Box 2449 San Diego, Calif. 92112

Mr Doug Woodham Wally Taber Productions 111 Continental Avenue Suite #309 Dallas, Texas 75207 (214) 748-6392

Mr Rod Keitz K & H Productions 3601 Oak Grove Dallas, Texas 75204 (214) 526-5268

Mr Ed Edwards Battelle Memorial Institute 505 King Avenue Columbus, Ohio 43201 (614) 299-3151 X3388

Mr James M. Tsukida Scientific Data Analysis and Processing Department Code 3432 Pacific Missile Range Pt. Mugu, California 93041

Mrs Evelyn Clickard 8221 Clippert Taylor, Michigan 48180 (313) 291-8554

Mr George A. Baum McDonnell Douglas Bldg. 35-25 3855 Lakewood Blvd. Long Beach, California 90801 (213) 593-2096

Mr John L. Ferber Dept. of Health, Education & Welfare 5906 Bryn Mawr Road College Park, Maryland 20740 (202) 963-5695

Mr Don Kennedy NASA Goddard Information Processing Code 656 Greenbelt, Maryland 20771 (301) 982-6346

Mr Jim Splear General Motors Corp. - Research Labs 12 Mile and Mound Road Warren, Michigan 48092 (313) 575-3004

Mr Roger Nagel University of Maryland Computer Science Center College Park, Maryland 20783 (301) 454-4527

Mr Franklin Gracer IBM Research P. O. Box 218 Yorktown Hts., New York 10598 (914) 945-2937

Mr Sherrill Martin Kaye Instruments, Inc. 737 Concord Avenue Cambridge, Ma. 02138 (617) 868-7080

Mr Tom Doran Army Material Command Building T-7 Arlington, Virginia (202) OX5-5631

Mr Tink Henry Oldsmobile Division of G.M. Data Processing Department Lansing, Michigan 48921 (517) 373-4910

Mr Henry Dolecki Bank of the Commonwealth P. O. Box 2401 Detroit, Michigan 48231 (313) 965-8800 Ext. 8716

Mr Don Stanley Dept. 8031, Bldg. 67, Plant A1 Lockheed Burbank P. O. Box 551 Burbank, California 91503 (213) 847-7748

Representative to UAIDE Mr James Stubbs

UAIDE Executive Secretary Mr John Logan

Business Systems Mr Harley Brown

Mr Mark Woods

Scientific Systems Mr Rod Johnson

Computer Animation Mr Paul Ressler

Mr Robert Foster

Publicity Chairman Mr Howard Bernstein

All DatagraphiX personnel may be reached at the following address:

Stromberg DatagraphiX, Inc. P. O. Box 2449 San Diego, California 92112 (714) 298-8331

The 1971 UAIDE Annual Meeting will be held October 25-29, 1971 at:

The Biltmore Hotel 515 S. Olive St Los Angeles, Calif. 90013

The Program Chairman is:

Mrs Gina Robinson K & H Productions 3601 Oak Grove Dallas, Texas 75204

For information concerning the meeting write:

Program Chairman UAIDE P. O. Box 2449 San Diego, Calif. 92112

It is indeed a pleasure to be talking to a group of the Users of Automatic Information Display Equipment - in a field in which I have had a strong interest over the years. Today my topic is not, however, information displays - but the organization of a vendor for effective user group communication.

Taking a highly simplified view of the problem, I contend that an effective user group will:

Now for the vendor to effectively work with a user's group, he must:

I believe, that the objective that each member of a user's group should have is:

As you may remember, in the early days user groups were a group of people banded together to help each other. Today the groups are more sophisticated and more diverse. In the beginning every attendee's experience was different from the other man's, and every man could learn from others. Another way to say that is - all were beginners and knew very little to start. Today's users have greatly different objectives. Of course there still is the basic drive to solve problems together. However, it is shaded at times by the placing of too much emphasis on convincing the vendor he should solve a problem - rather than the members of the user's group sitting down and carefully thinking through the problem. Pressure tactics pressed on vendors at meetings I view as a non-constructive use of a user's group. An ideal user's group, in my opinion, is one with a focus on mutual problem solving - not on the solving of problems by the vendor. In my opinion, an effective user's group must be based on sound technical work by the members. It will do us no good to ask DatagraphiX to tune their hardware to higher accuracy than the state of the art. It has been designed to operate and be priced with a certain reliability and certain accuracy. Any push to achieve higher accuracy by pressure tactics is, in my opinion, futile.

Does that mean we can't ask for improvements from the vendor? Not at all. It means that we ask for improvements that, as a group of users, we can clearly demonstrate as being feasible. And this can only happen by continuity of work by small task forces working, not at the large annual meetings, but working for weeks together to cleanly and precisely formulate problem solutions.

The preceding holds equally for both software and hardware. The responsibility for technical feasibility of user's group requests should lie in the user's group.

Now suppose a group of users do have a very well thought out, very feasible way to solve a technical problem. Is that enough for the vendor to be convinced and to proceed on the project? No. A user's group, I believe, should try to analyze the benefits to the vendor and show the economic gains he should expect from the project: the increased sales, the decreased maintenance, and the new market potential. Remember one of the objectives of a user's group is the mutual solving of problems - and that should include the vendor's problems of being profitable.

This brings me to my third point. While vendors are corporations, they are also groups of people, with all the problems of people motivation. Remember the computer system we are asking to be improved, and the hardware for which we are requesting changes, were probably designed by the man we are trying to convince to accept the suggested improvements.

Therefore, to be effective we must sell an idea to the vendor. We must convince him that the project is technically feasible and economically worthwhile for him.

Now suppose the user group does do a thorough job of planning, does indeed show technically and economically feasible potential, will the vendor respond - and indeed how should he respond?

I believe the vendor wants to serve. His livelihood depends on it.

Is this an impossible task for a user's group or a vendor? I don't believe so. By their very nature the people at user's group meetings are solution oriented. They want problems solved. I feel that a user's group environment which encourages aggressive, continuous problem identification and a strong task force-type approach to problem solving can motivate a vendor. He can be motivated by good technical and economic arguments for your ideas.

In my presentation I have essentially placed the challenge of effective vendor responsiveness to a user's group on the user, not on the vendor. My reason is simple. By choice we selected the vendor for the product we are using. We are the ones that identify the problems the user group thinks are important. And we have the freedom of changing vendors. If the vendor does not think the problems are significant, and hence is not responsive - we can change vendors and user's groups. We do not have to beat our heads against the wall.

Summary of talk given by Dr. Howard J. Teas at UAIDE Luncheon, 10/21/70, Carillon Hotel, Miami Beach, Florida

Southeast Florida is in the fortunate position of receiving fresh air from over the Atlantic Ocean during a major part of the year. Miami is near the top of the clean air list of major cities in the United States, primarily because of its geography and meteorology. Even though we have open trash burning, smoky incinerators and the expected jet and automobile exhausts, we do not have a critical air pollution problem.

Many of the waterways of South Florida are freshwater canals that originate in Lake Okeechobee or the Everglades. In the native state these canals, sometimes called rivers or creeks, are often crystal clear. Because of the need to control the intrusion of salt water near the coast, the levels of these canals are regulated by water control dams close to where they empty into the bays. As a consequence of the water control structures our canals have little flow except at the time of rain and are in fact stagnant for considerable periods of time. It is the waters of these clear canals that serve in South Florida as depositories for sewage plant effluents, industrial wastes, septic tank seepage and agricultural runoff.

Sewage is the major pollution problem of South Florida. Somewhat more than half of the people of Dade County, the county in which Miami is located, have their waste lines connected to septic tanks. These pose a health hazard because of the very porous underlying limestone and the nature of the fresh water aquifer which is utilized for drinking water. Less than half of the people of Dade County are served by approximately 100 sewage plants. It has been estimated by our pollution control people that about nine out of ten of these sewage plants fail to meet the legally required 90% removal of organic matter from their effluent either occasionally or in some cases continuously. As a consequence of the inadequate treatment of sewage and the nature of South Florida's waterways, extensive banks of sewage sludge as deep as five feet have formed in some canals near the outfalls of sewage plants. The coliform bacteria counts in many of these canals within the urbanized part of their length make the water unfit for fishing, swimming or water contact sports. Indeed, in many cases the odor and appearance of the canals is so unaesthetic as to make their use for contact sports unlikely.

A well run sewage treatment plant gives rise to little or no odor in adjacent areas. However, it is readily understandable that a plant which is doing a grossly inadequate job of sewage treatment may cause odor problems. One plant in Dade County had so many complaints from its neighbors that the operator began spraying perfume into the air in an attempt to mask the odor.

The clear canals that slowly flow from the Everglades and which become the recipients of nitrate and phosphates from septic tank seepage, sewage plant effluent and suburban yard fertilizer runoff are on occasion the sites of fish kills. As the water in these canals is enriched by nitrate, phosphates, and other mineral nutrients and organic materials, it becomes a rich medium for the growth of algae. Algae, like other green plants, release oxygen into the water as they carry out photosynthesis in sunlight. At night algae, like other living organisms, require oxygen for their metabolism. The water of canals that have had nutrients added and have developed heavy algae growth may retain enough dissolved oxygen at night for the fish. However, at high levels of nitrification, with the consequent algal growths or blooms, several consecutive days of cloudy weather may spell trouble for the canal's fish. On cloudy days the algae may not produce enough oxygenation of the water to last overnight and as a consequence the concentration of dissolved oxygen at night may fall to such low levels that fish are asphyxiated.

The canals in the agricultural areas receive large amounts of nutrients in the form of fertilizer runoff during the winter vegetable growing season. The blooms of algae that follow such nutrient additions have been responsible for fish kills during cloudy weather in some of the agricultural area canals where there is no contamination with sewage plant effluent. Clearly, excessive nutrients in our waterways are a problem, irrespective of whether the source of nutrients is sewage plant effluent, fertilizer runoff or septic tank seepage.

Not all sewage in South Florida finds its way, with or without treatment, into the canals or septic tanks. Several communities in the area dispose of their sewage by pipelines into the ocean. The great majority of the approximately three hundred thousand people of Miami Beach are served by such an outfall that is located 7,000 feet out into the ocean, only five blocks north of the Carillon Hotel. At the point where the 30 million gallons a day of raw sewage come boiling out of the pipe there is a large murky area that is locally called the Rose Bowl. Flocks of seagulls can often be seen near the Rose Bowl and it is an area that attracts both fish and fishermen in great numbers. The problem with ocean outfalls is one of health: the ocean currents shift and sometimes carry the sewage to shore, contaminating bathing beaches. Also, contamination might be carried to people by birds or fish.

South Florida's air is not too polluted, but the majority of the freshwater canals and some of its bay and ocean beaches are unfit for swimming because of sewage pollution.

As you are aware, South Florida is an area attuned to tourists. Florida is reported to be the first state in tourist income, and within Florida the southeastern coast is the greatest focus of tourism. In addition to the stream of visitors, the area is growing very rapidly in permanent resident population. The influx of tourists and new citizens overtaxes many public facilities and services, including sewers and transportation. The rapid and generally unplanned development that is taking place poses a serious threat to the future of South Florida as a desirable place to live as well as for tourists to visit. Creeping peoplitis is my term for these problems associated with too rapid growth. Creeping peoplitis is the disease syndrome that characterizes the new high rises with only enough space between them for parking lots; it gives us real estate developments that don't even provide solutions for their own sewage, transportation and recreational area problems. Creeping peoplitis is responsible for filling the mangrove swamps and installing concrete seawalls where larvae of shrimp and game fish should be spending their childhood days.

The reaction of those of us in South Florida concerned with environmental problems has been mostly negative; that is, we are active against things that threaten the environment. We fight creeping peoplitis issue by issue. We spend our time being negative, trying to stop things like a poorly located jetport, hold up a large real estate development that would fill the mangrove area, and stop new developments that have no plan for sewage treatment other than to further overload inadequate existing facilities. My hope for South Florida is that by winning negative issues we will gain enough support to force regional land use planning before, rather than in response to, the pressure of developers. Then we may have a partial cure for creeping peoplitis.

Business groups using microfilm to update data in information search and retrieval systems might anticipate that computer animation is a natural extension of a medium with which they are already involved. That is true only in the sense that the output is still on film and that viewing the film can provide business information; the questions the animated film can answer are of a different nature and the personnel interested in that information are usually different.

It is assumed here that the potential business user has a general familiarity with computer animation to the extent that he has seen computer generated films. Contact with the periphery of the field can lead one to the conclusion that the thrust of animation efforts is in the areas of a) education and research, b) engineering simulation displays, c) art, d) image quality technique development (halftones, shading, color), and e) software development for powerful passive and interactive graphic systems. Few of the efforts seem connected with potential business uses of animation.

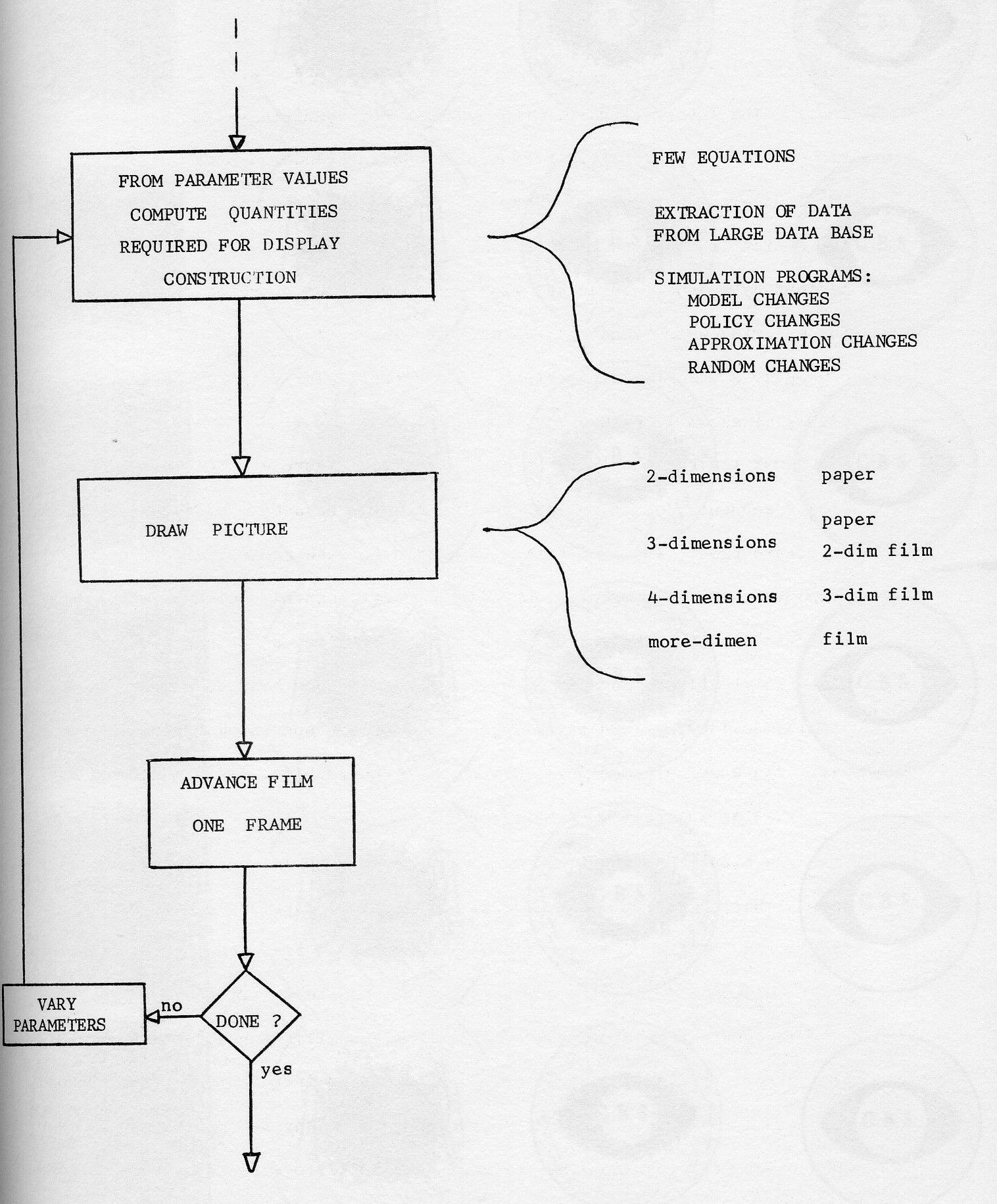

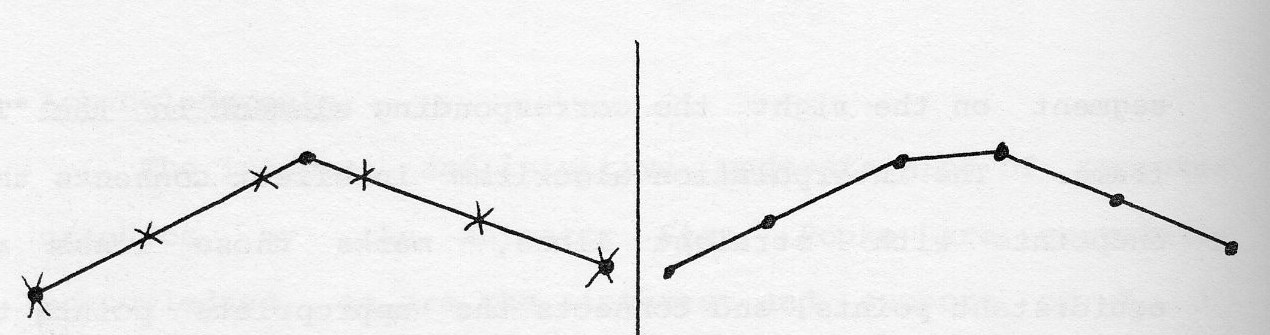

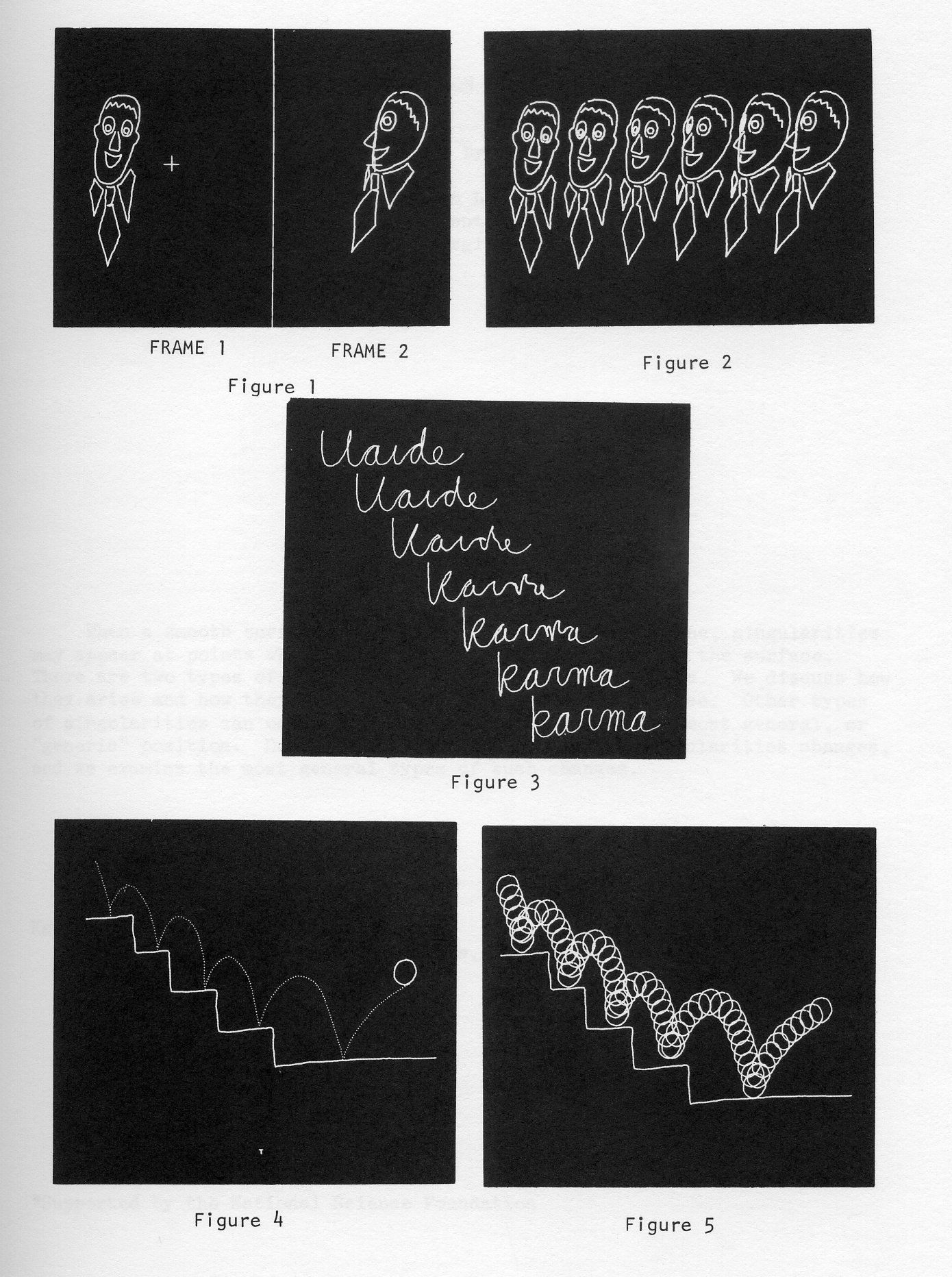

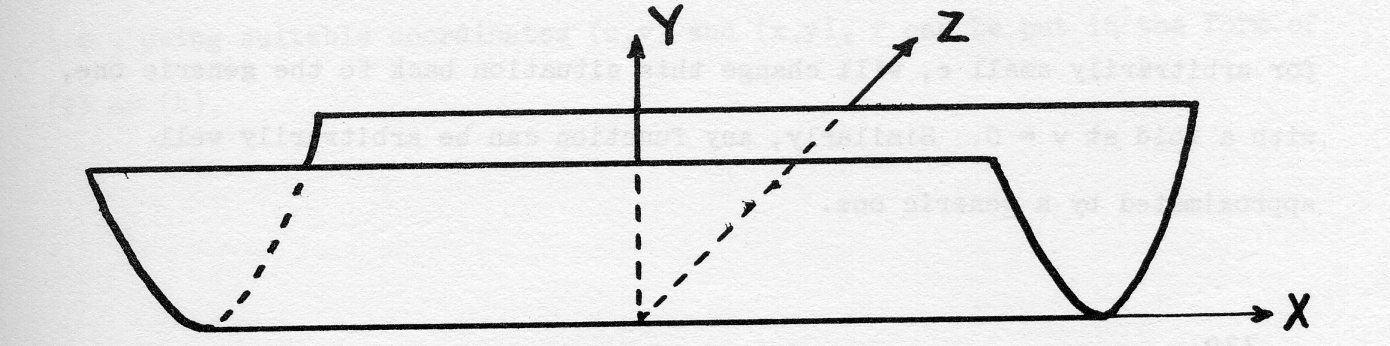

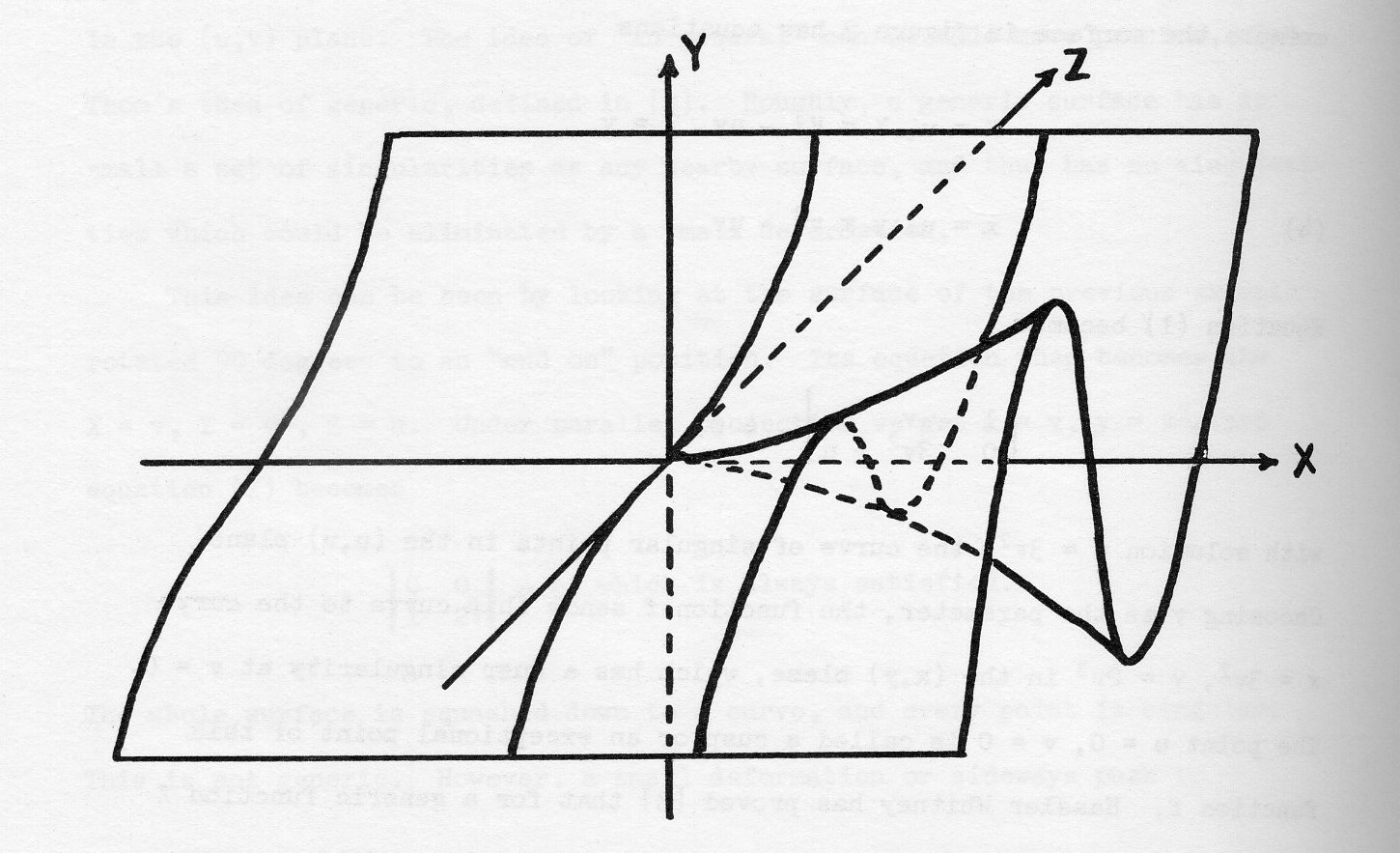

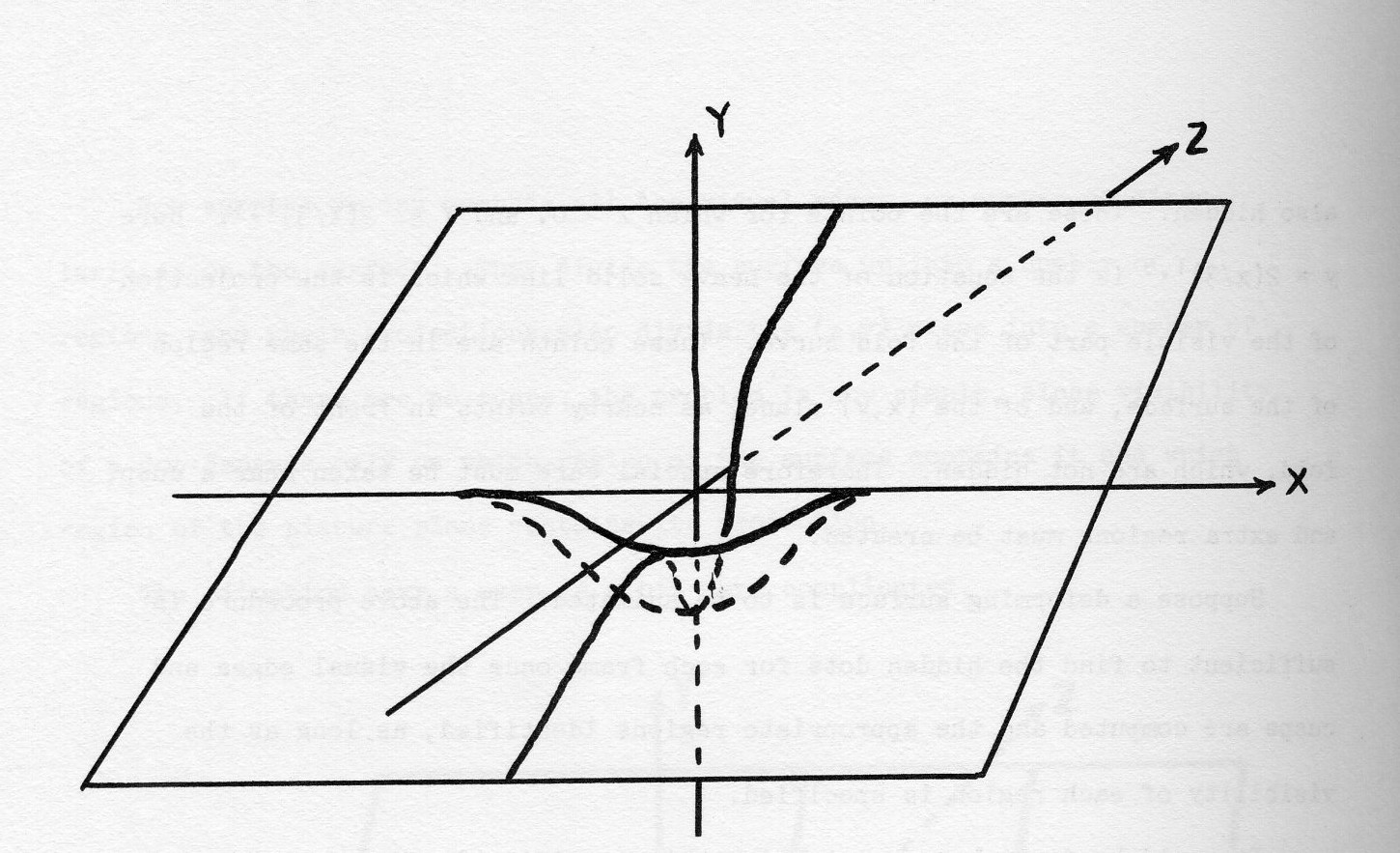

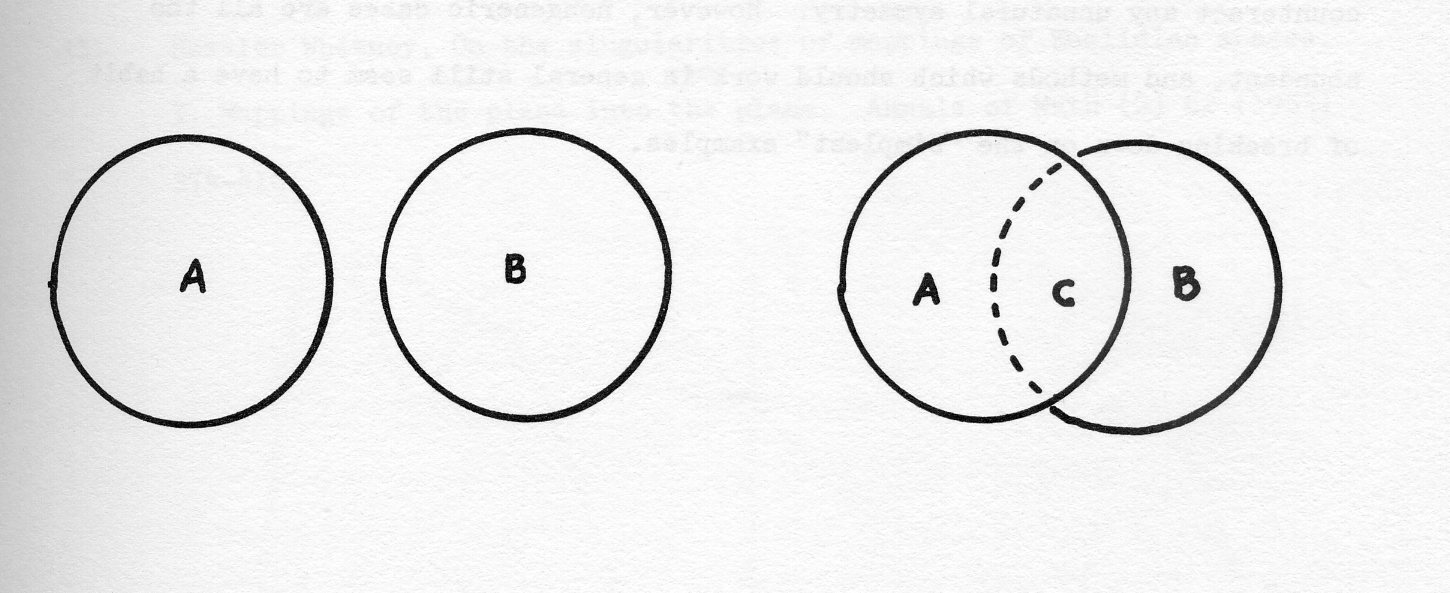

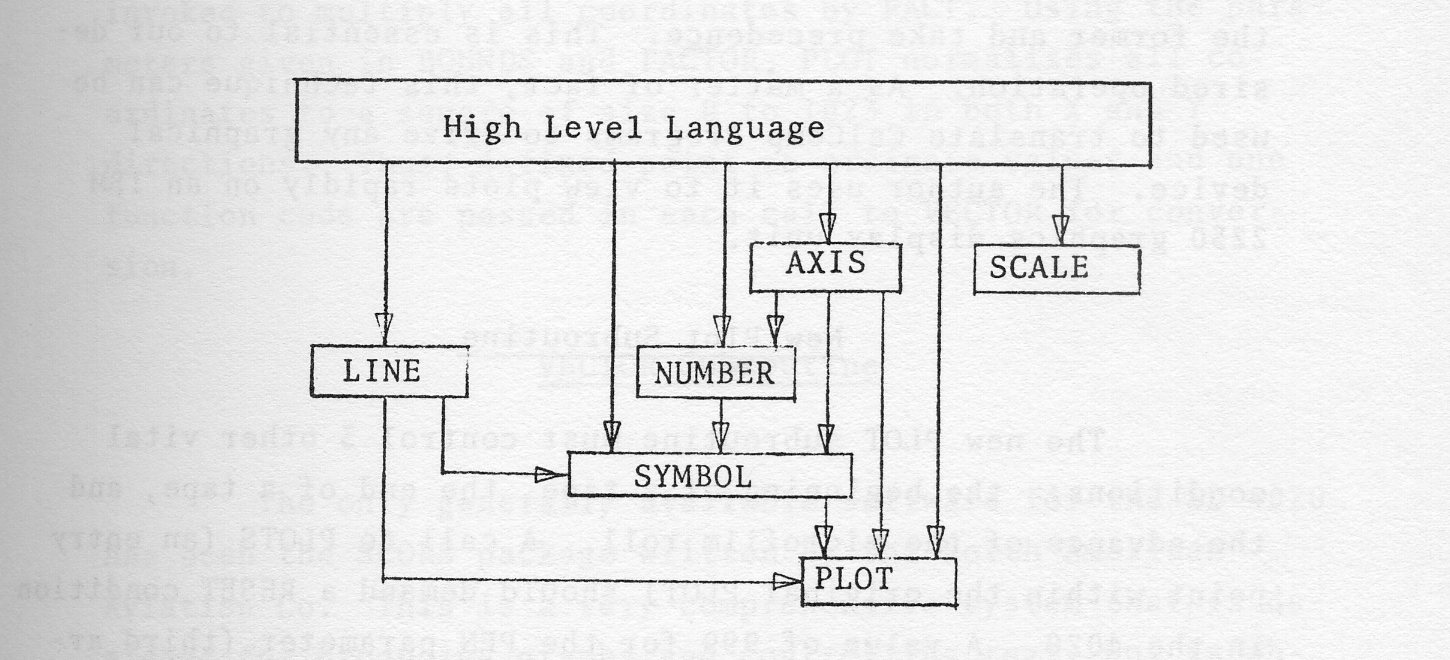

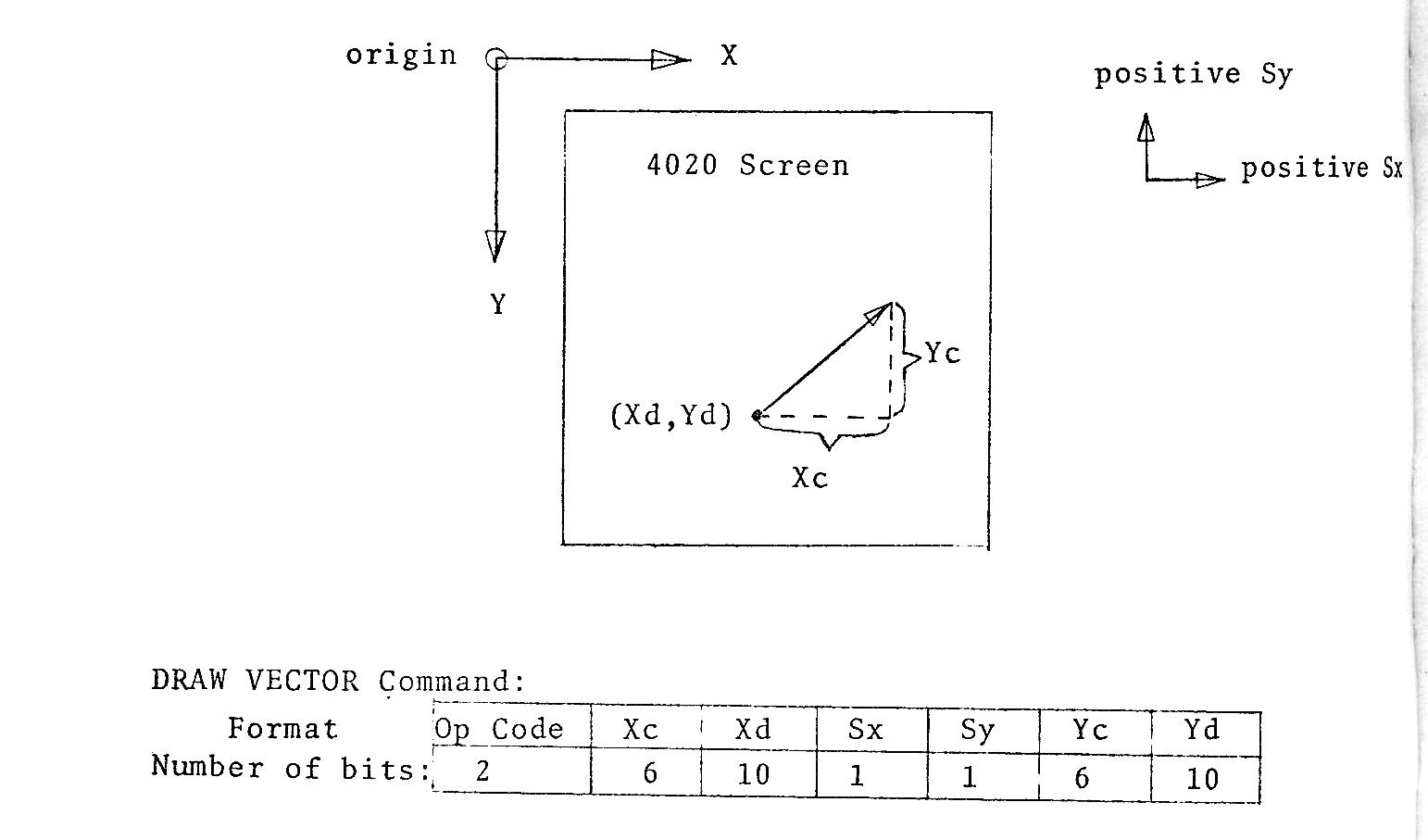

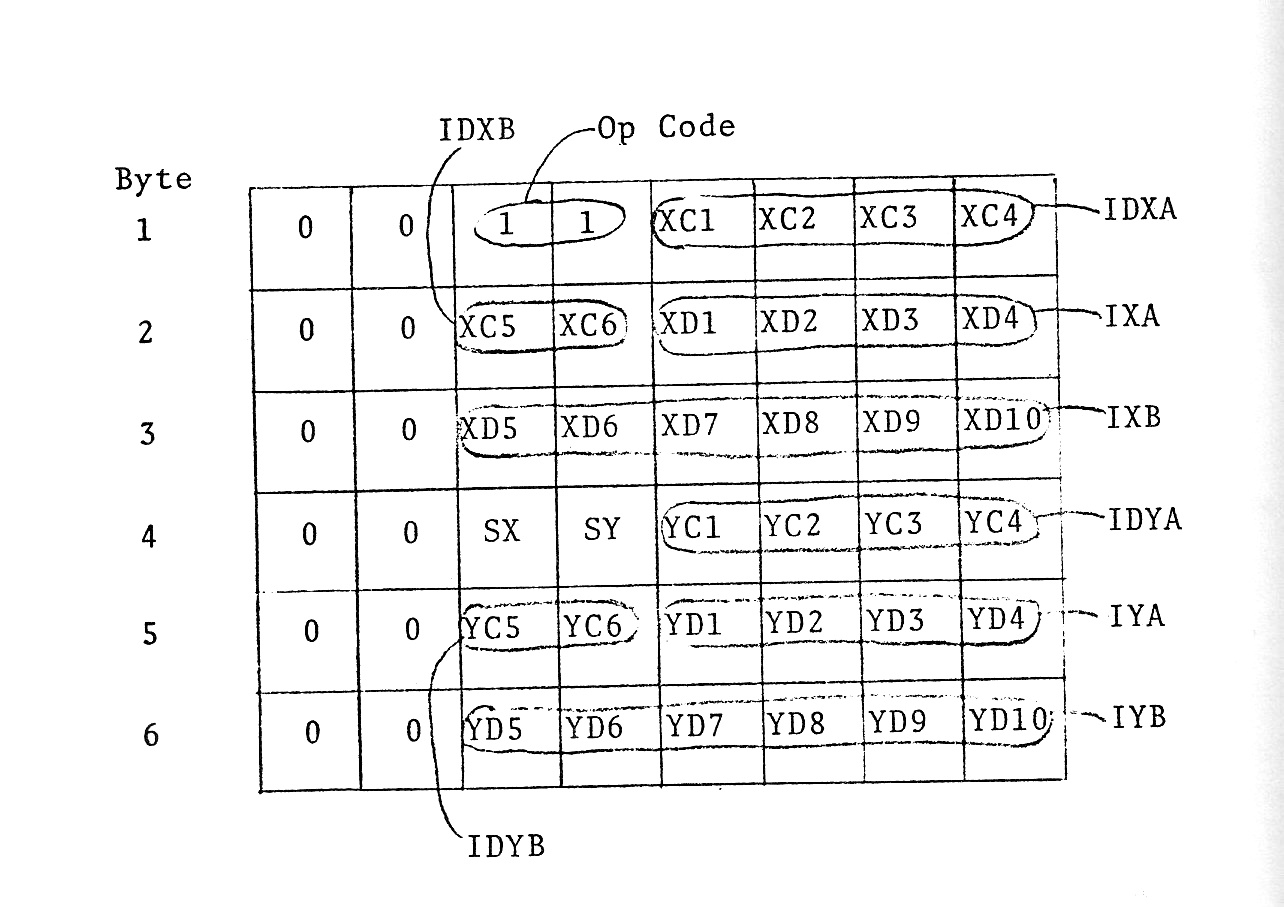

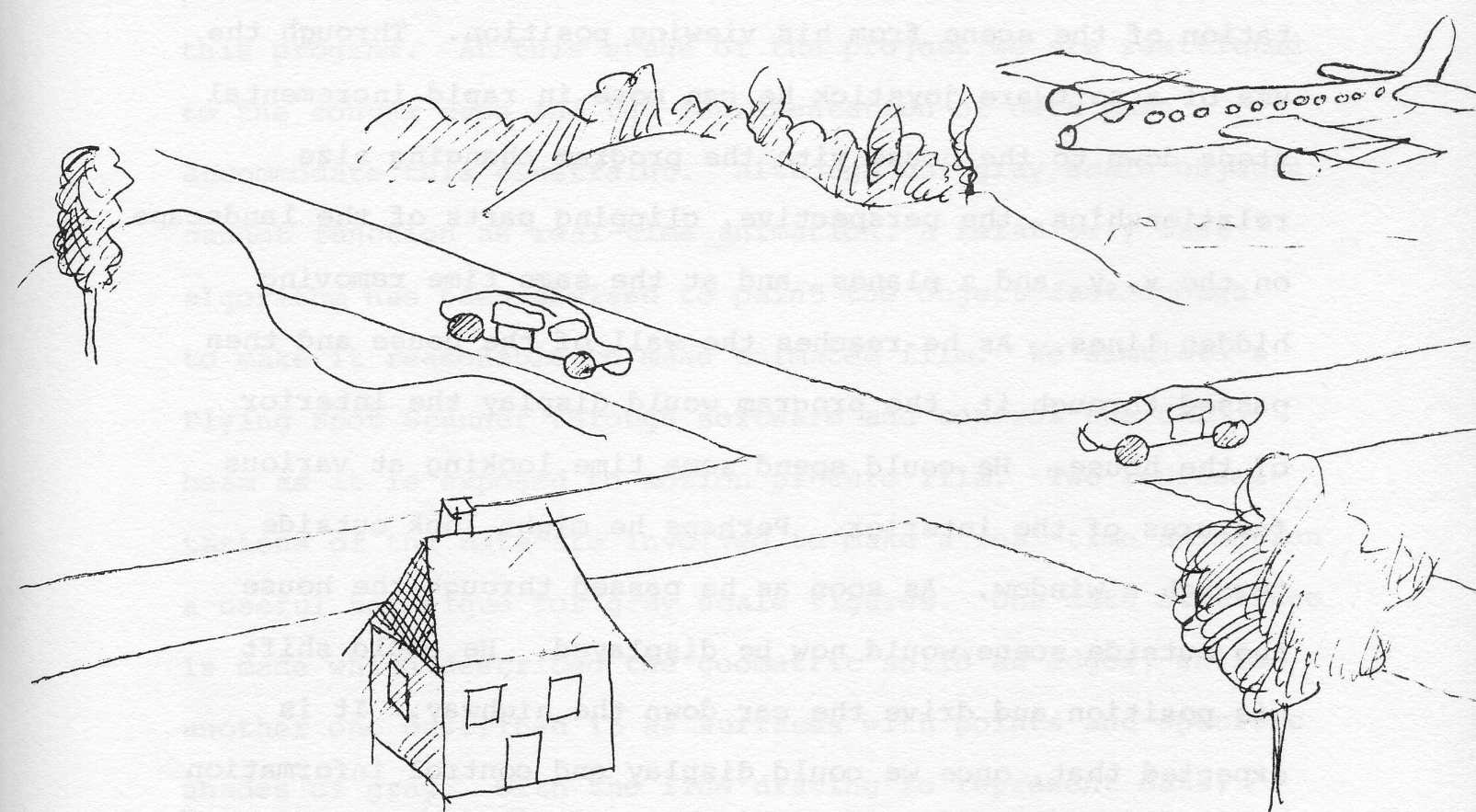

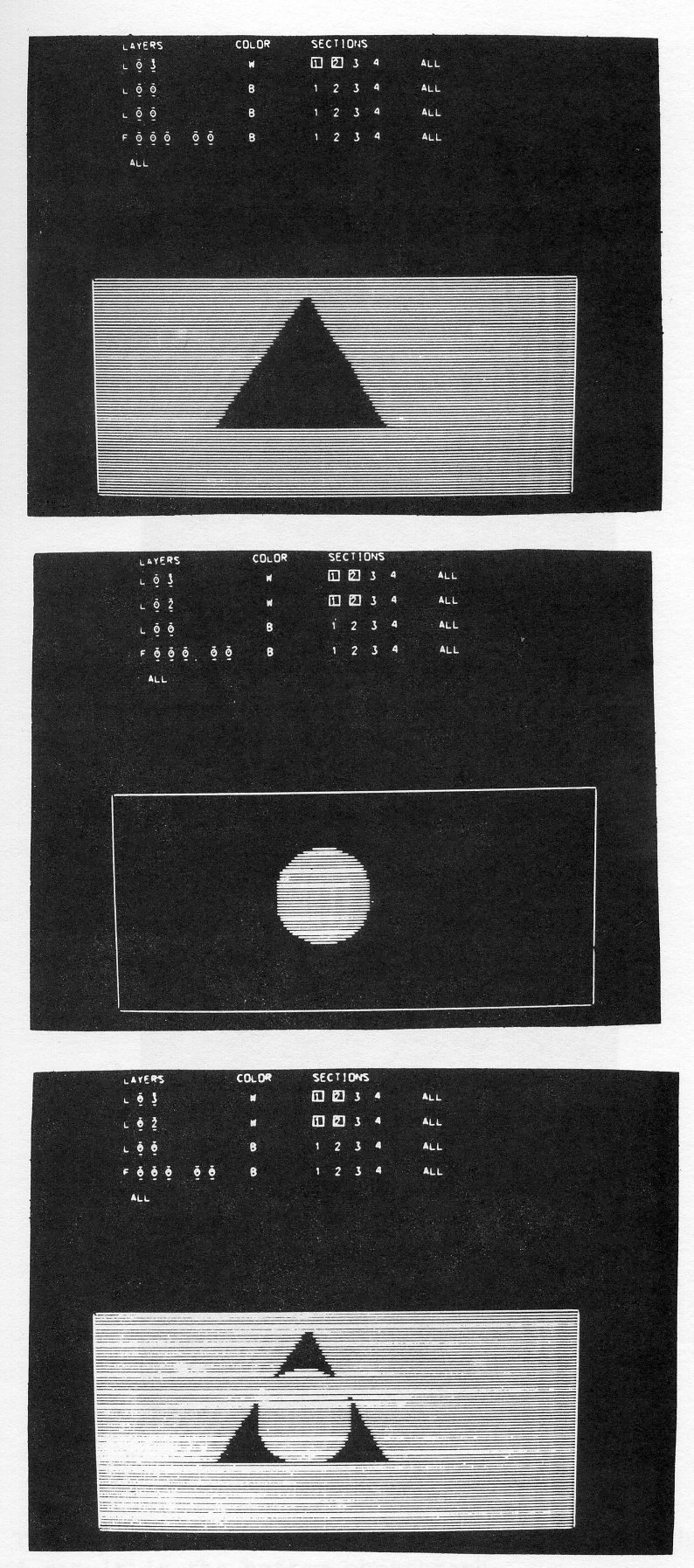

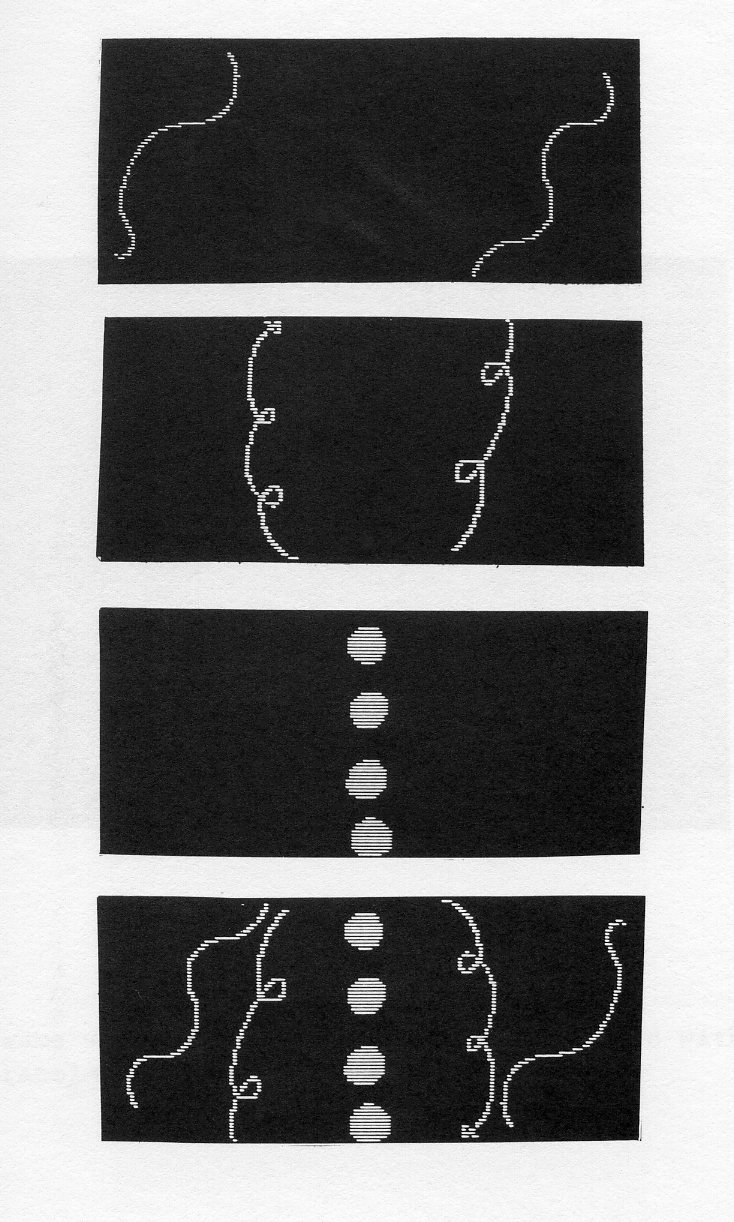

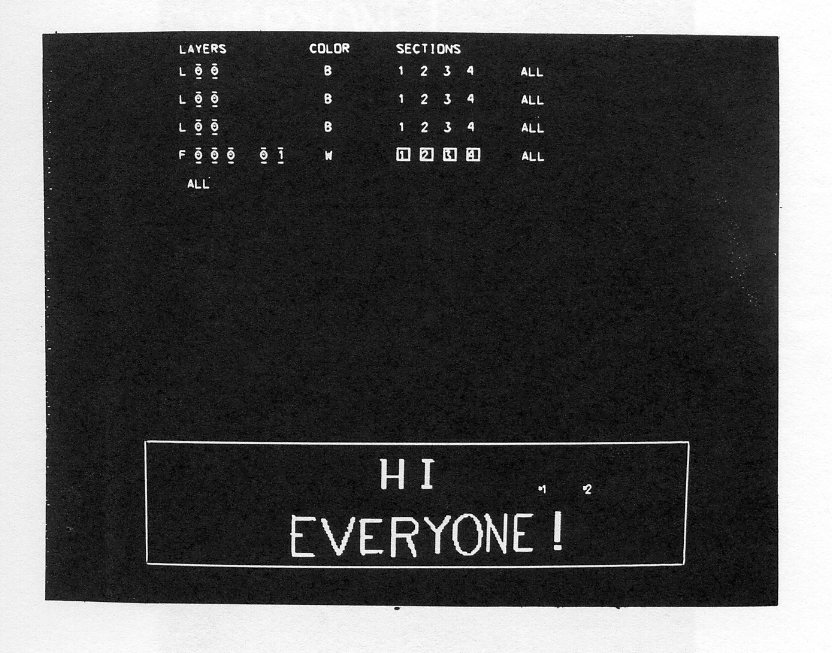

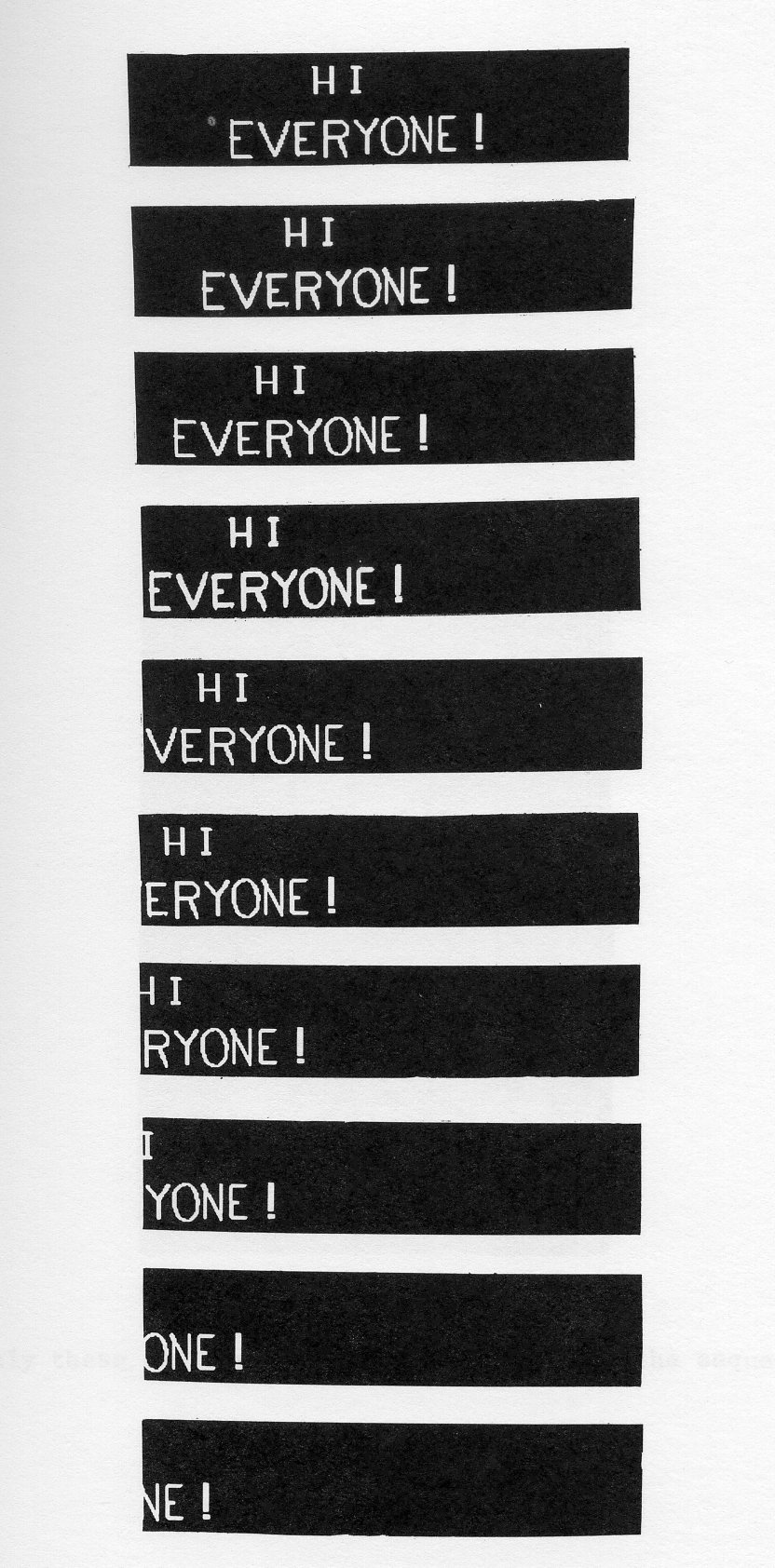

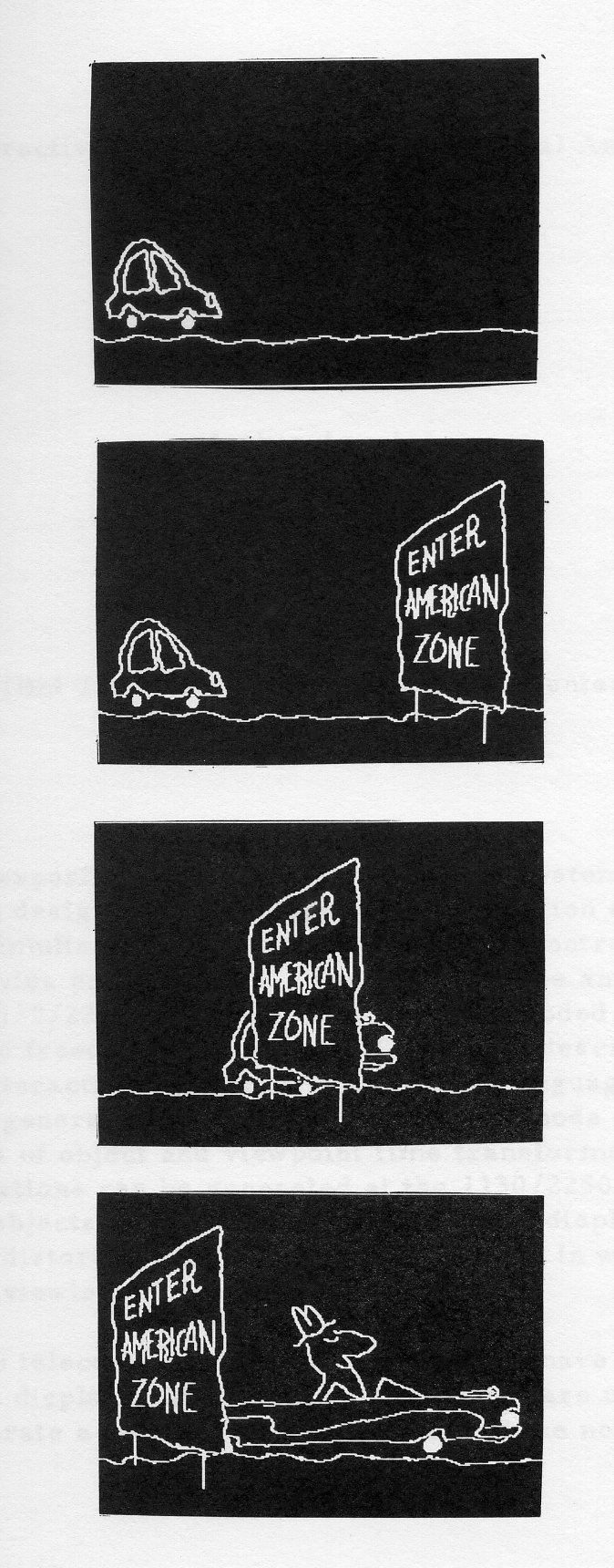

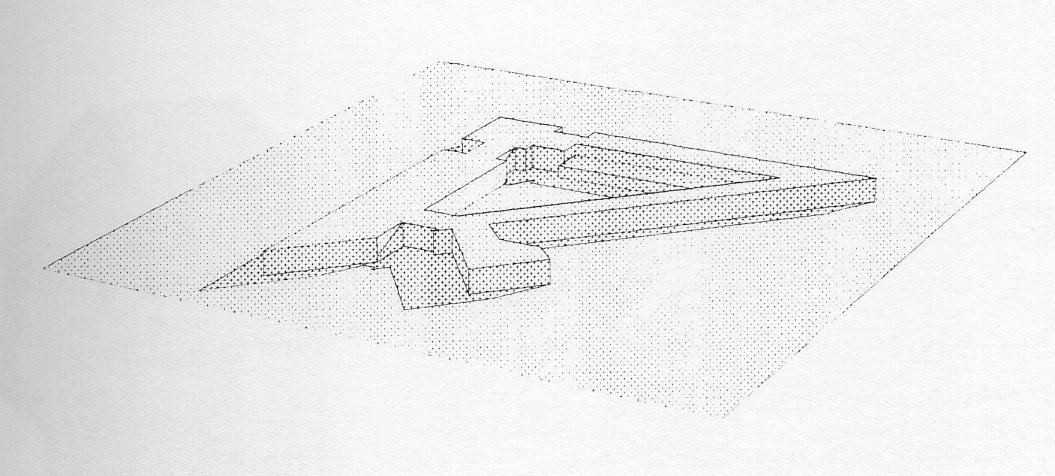

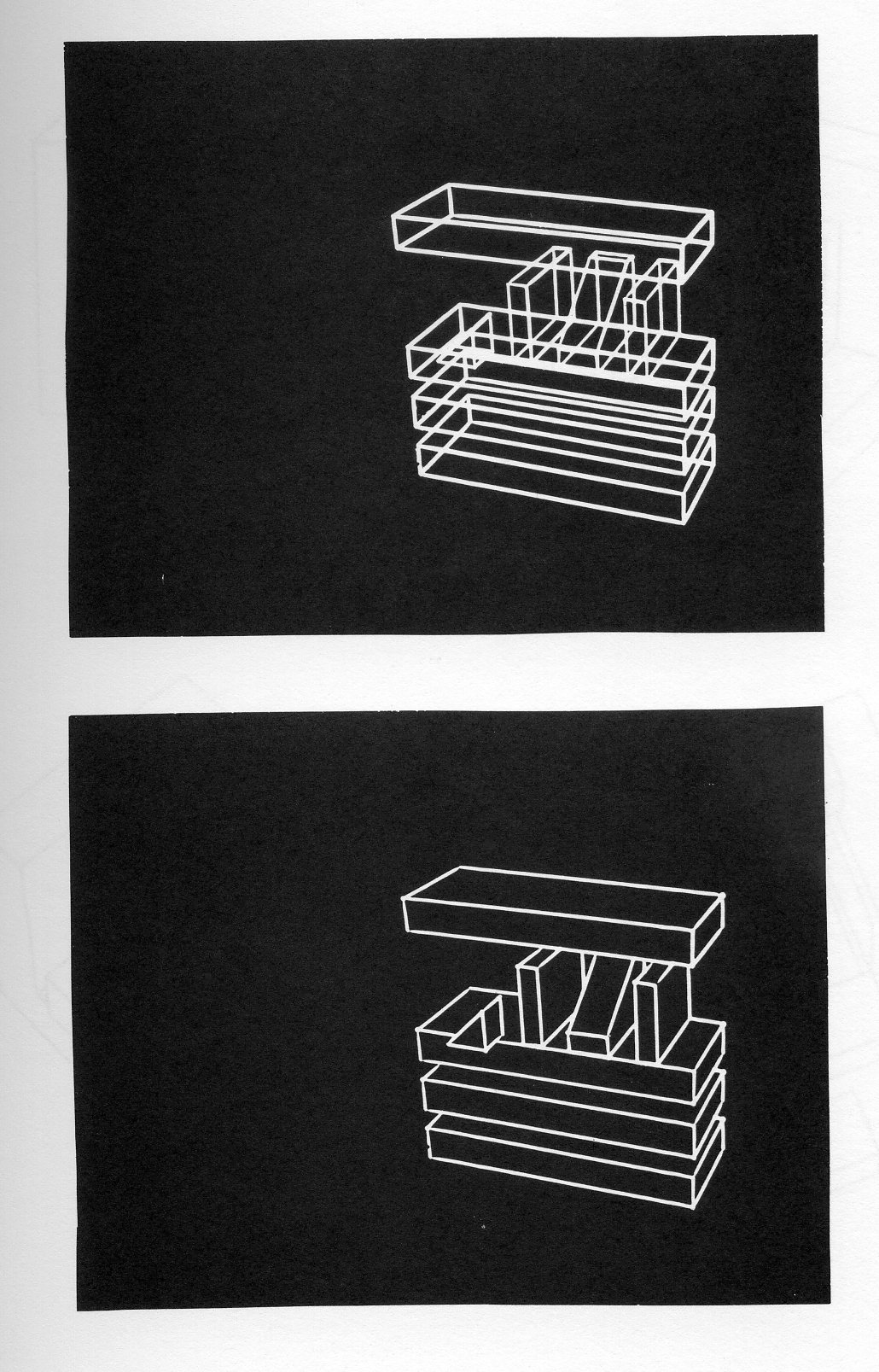

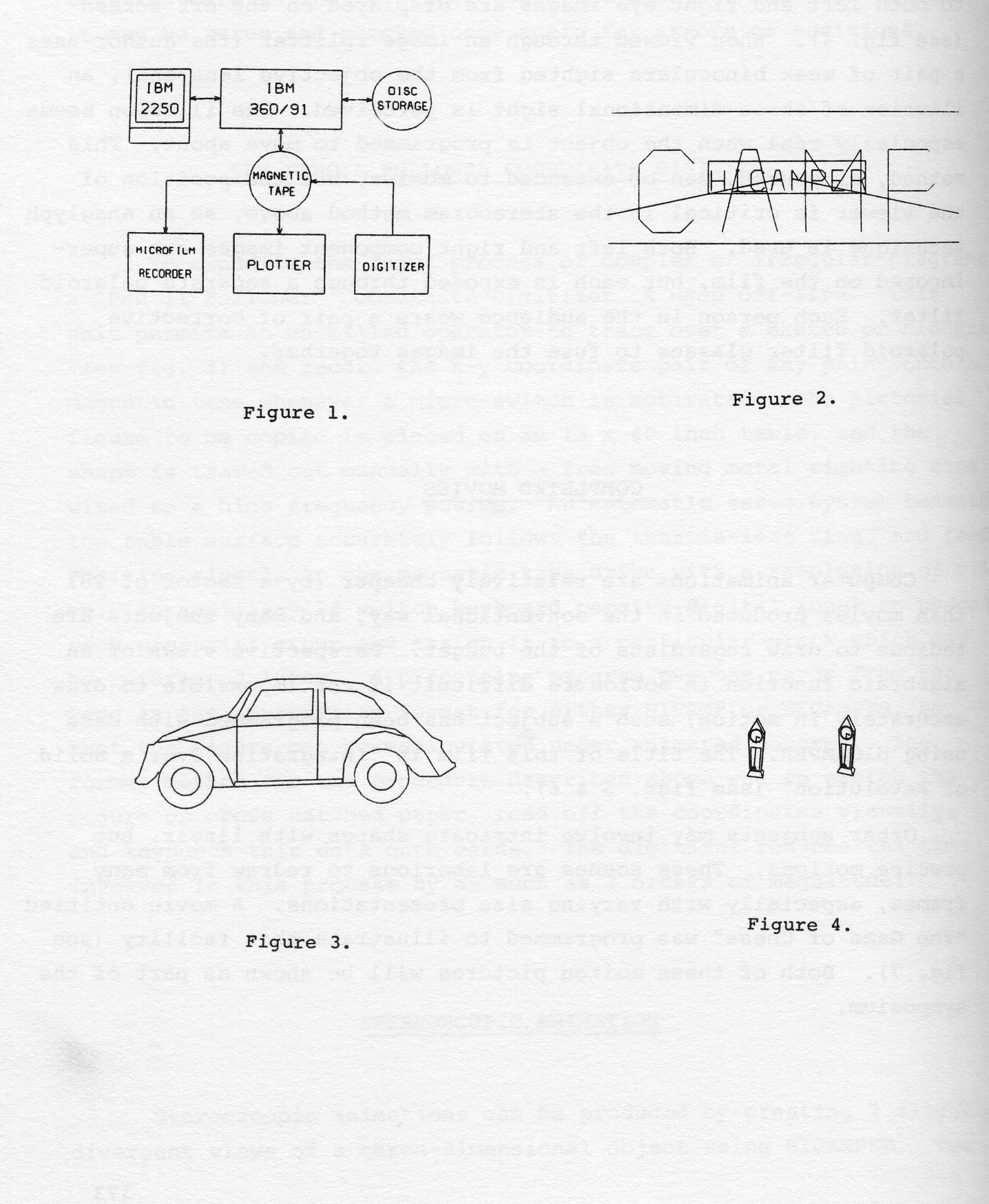

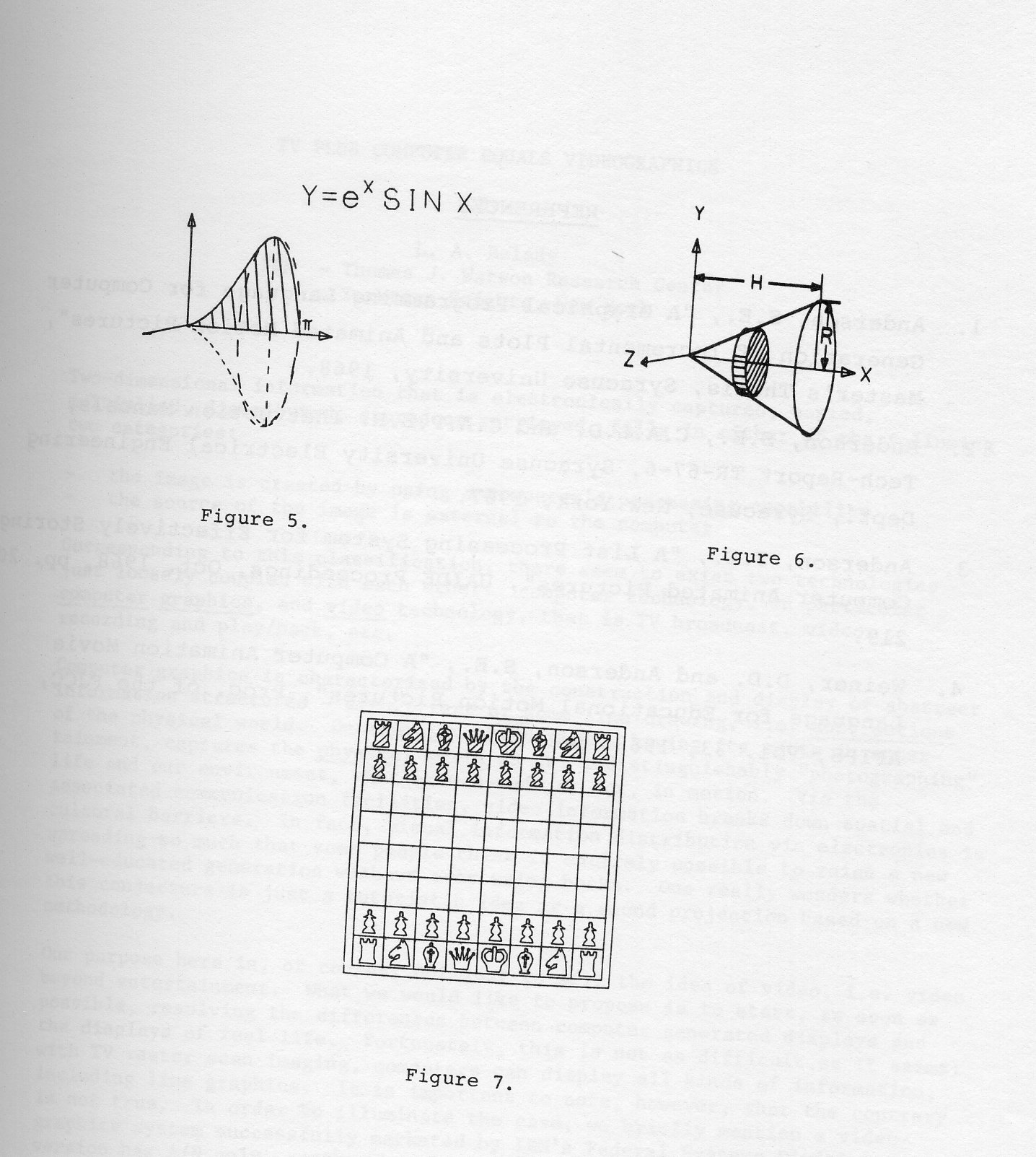

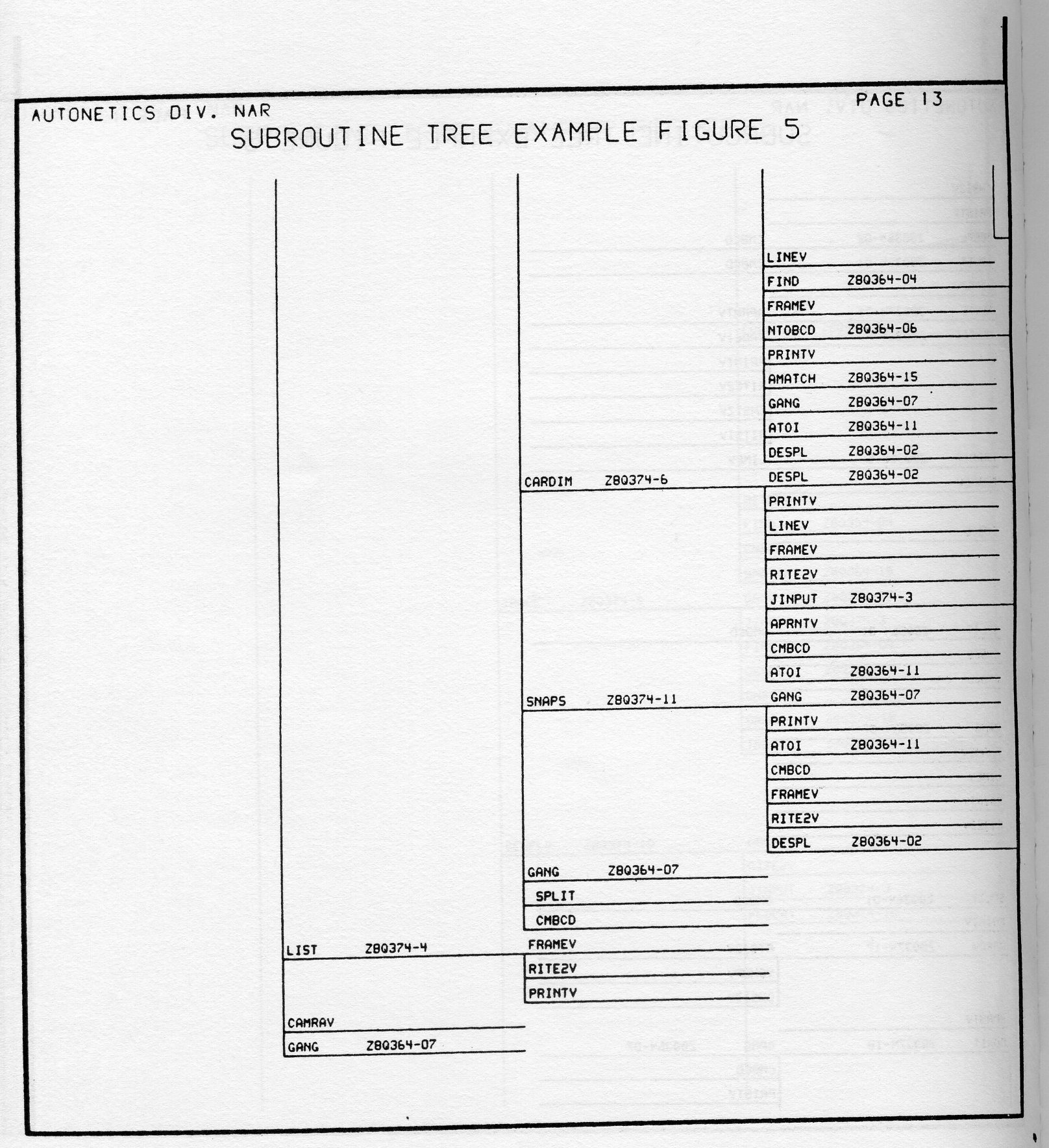

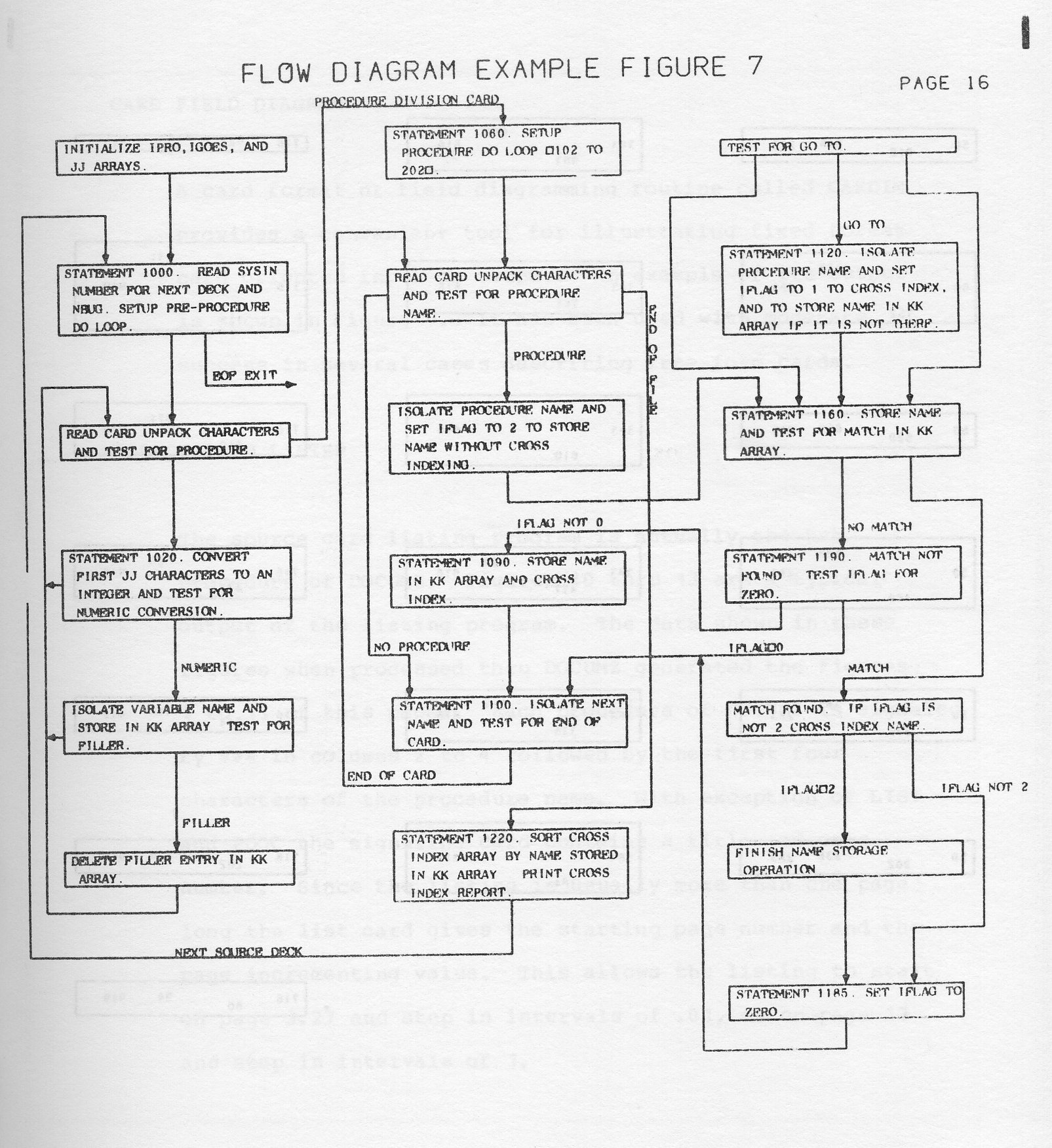

However complex, the variety of uses all reduce at some level to a flow plan that involves the basic elements shown on the left side of figure 1. The loop construction in a computer program allows small changes in variables to be made from frame to frame so that the display characteristics which are a function of those variables are exhibited in a motion whose smoothness depends upon how small one is willing to make the changes in the variables used to construct the display. The mechanics of implementing such a flow vary with the software language, the computer and the display device used. The implementation capability for generating animated footage can be viewed two ways. On the one hand it is simply a technique available to a producer to be used when appropriate. On the other hand, computer animation can be the guts of a biweekly production run to repeatedly extract weakly related cross sections of data from a large data base or bases. In the latter view, you have strong candidates for display in a form different from a fixed graph or bar chart.
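The loop construction described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the flow of figure 1 itself: the function names are hypothetical, and the "display" is reduced to the endpoint of a rotating unit line so that the example stays self-contained.

```python
import math


def frame_parameters(start, stop, n_frames):
    """Yield one parameter value per frame, stepping evenly from start to stop."""
    if n_frames < 2:
        raise ValueError("need at least two frames")
    step = (stop - start) / (n_frames - 1)
    for i in range(n_frames):
        yield start + i * step


def build_display(angle):
    """Hypothetical display: endpoint of a unit-length line rotated by `angle` radians."""
    return (math.cos(angle), math.sin(angle))


def render(n_frames):
    """Build every frame of a quarter-turn; more frames means smaller steps."""
    return [build_display(a) for a in frame_parameters(0.0, math.pi / 2, n_frames)]


def largest_jump(frames):
    """Largest distance the endpoint moves between two consecutive frames."""
    return max(math.dist(p, q) for p, q in zip(frames, frames[1:]))


coarse = render(4)    # few frames: large change per frame, jerky motion
smooth = render(24)   # many frames: small change per frame, smoother motion
print(largest_jump(coarse), largest_jump(smooth))
```

The same quarter-turn is covered in both runs; only the size of the per-frame change differs, which is the trade-off between smoothness and the number of frames that must be generated.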

A general classification of computer animated business display possibilities would have to include

The variety of fixed graphs possible is well characterized and described elsewhere in these proceedings. Here we will show from the above classes some non-standard display uses made possible by computer animation.

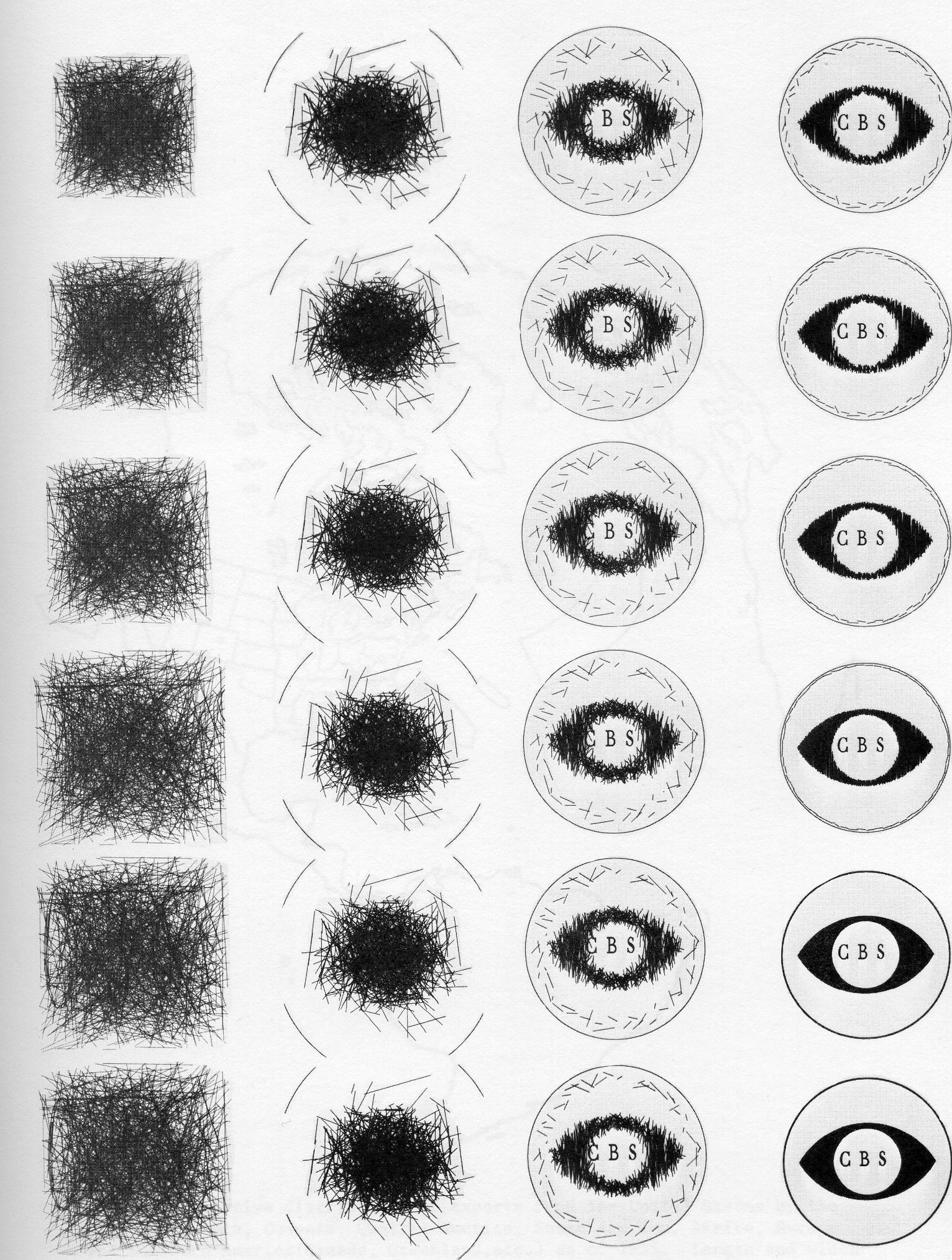

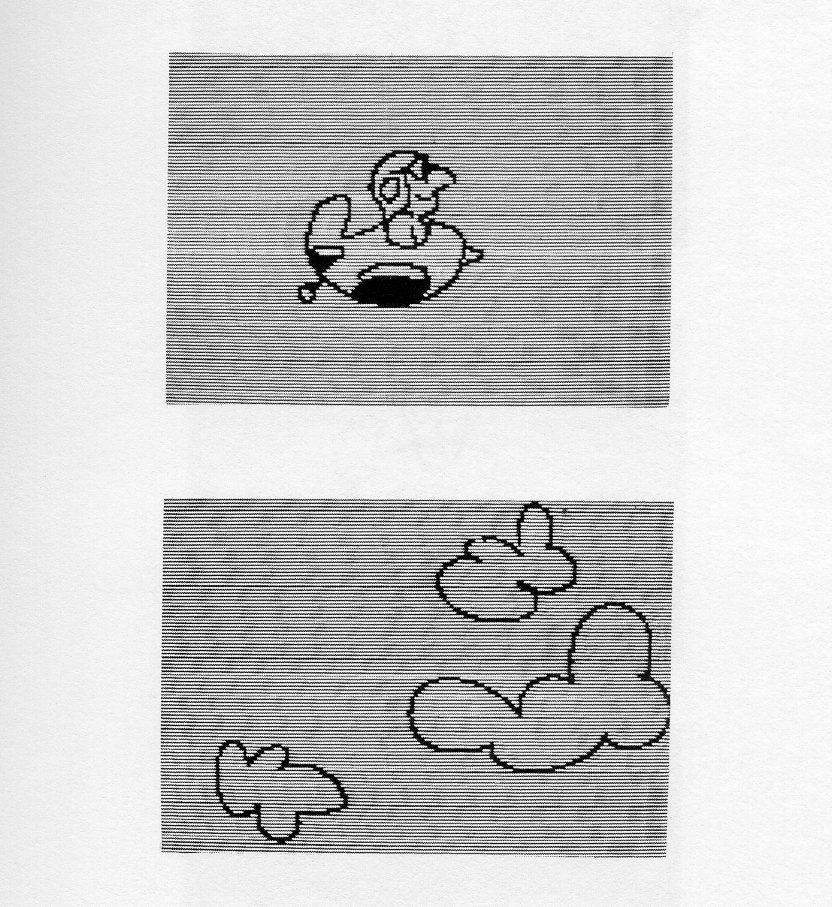

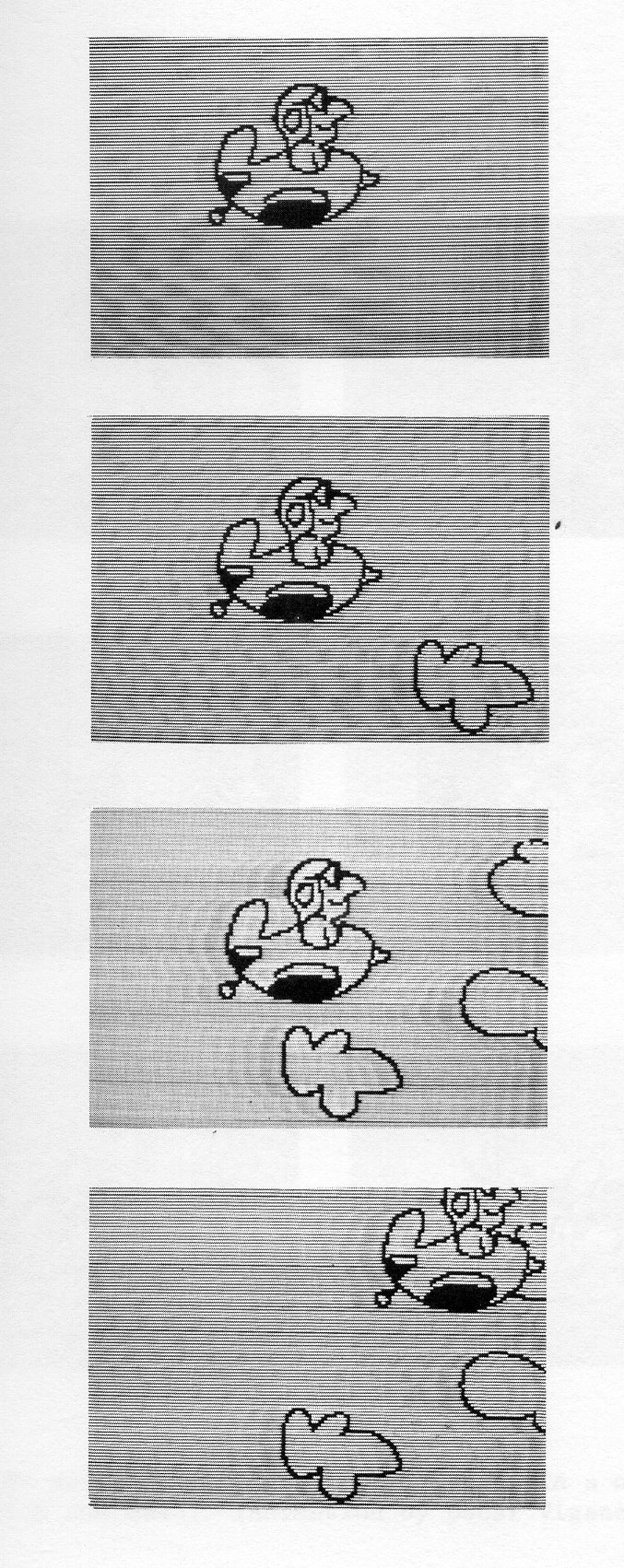

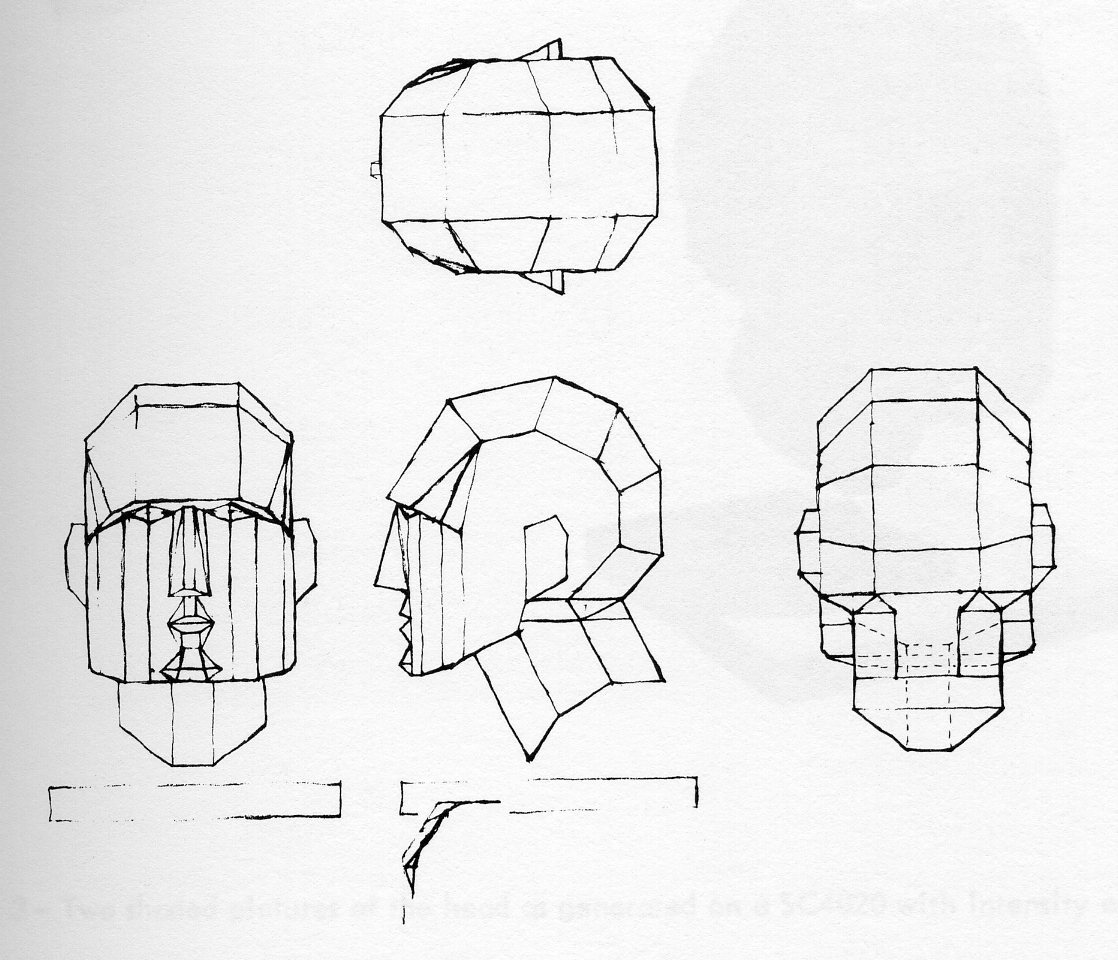

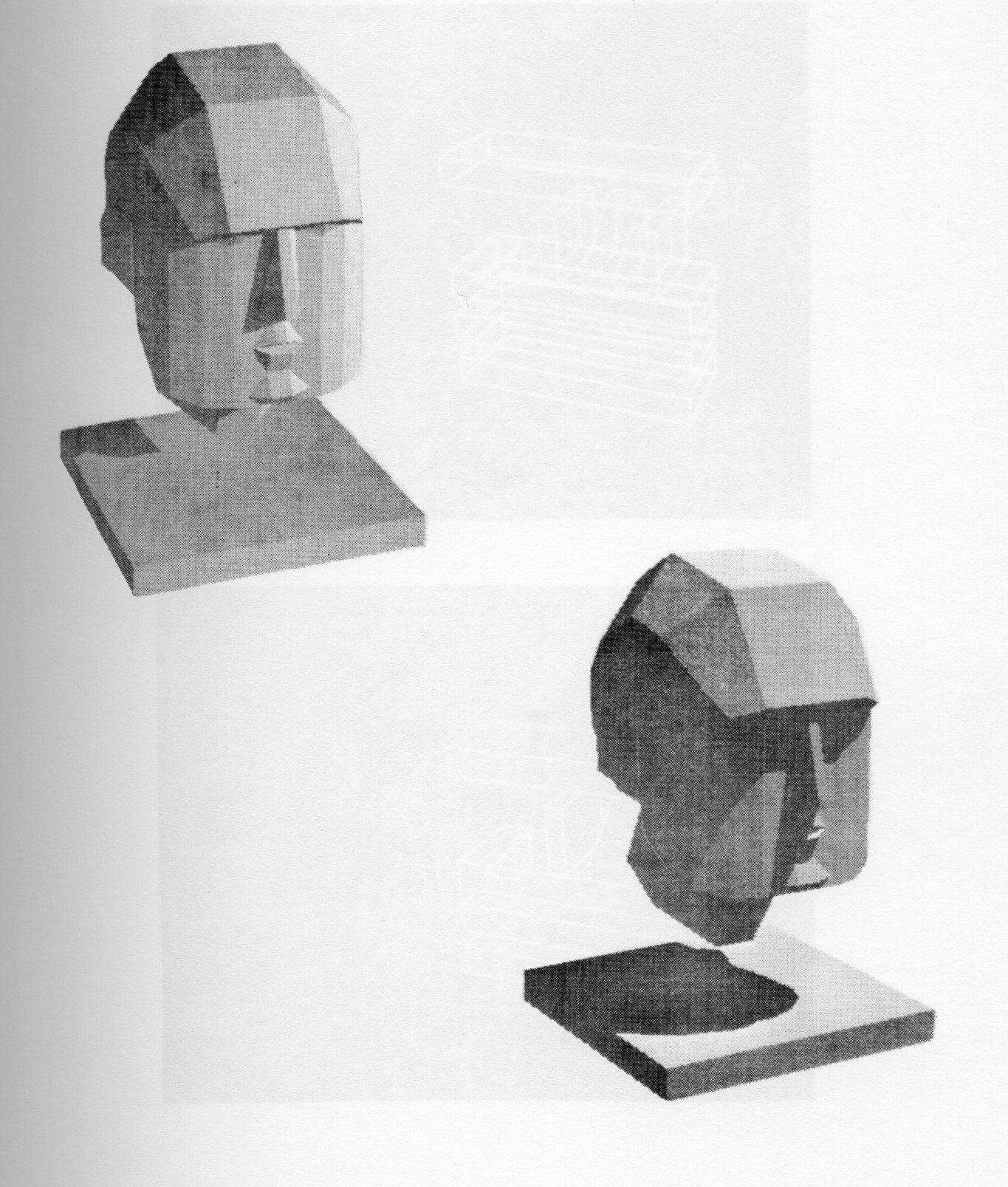

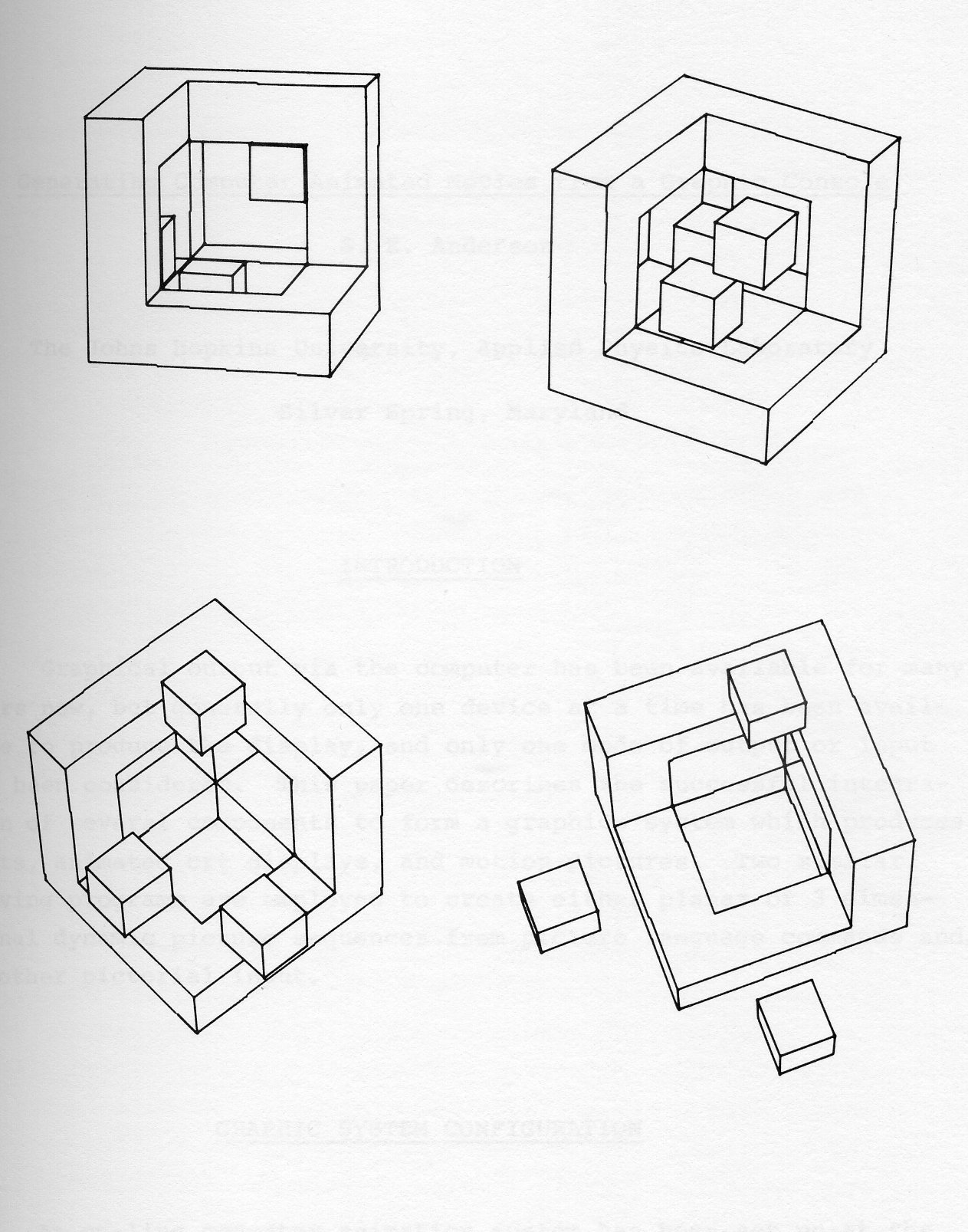

Figure 2 contains several frames of a logo construction from a computer generated sequence of the CBS eye. One can contemplate imaginative, attention getting metamorphoses of product shapes which might otherwise be considered out of the question.

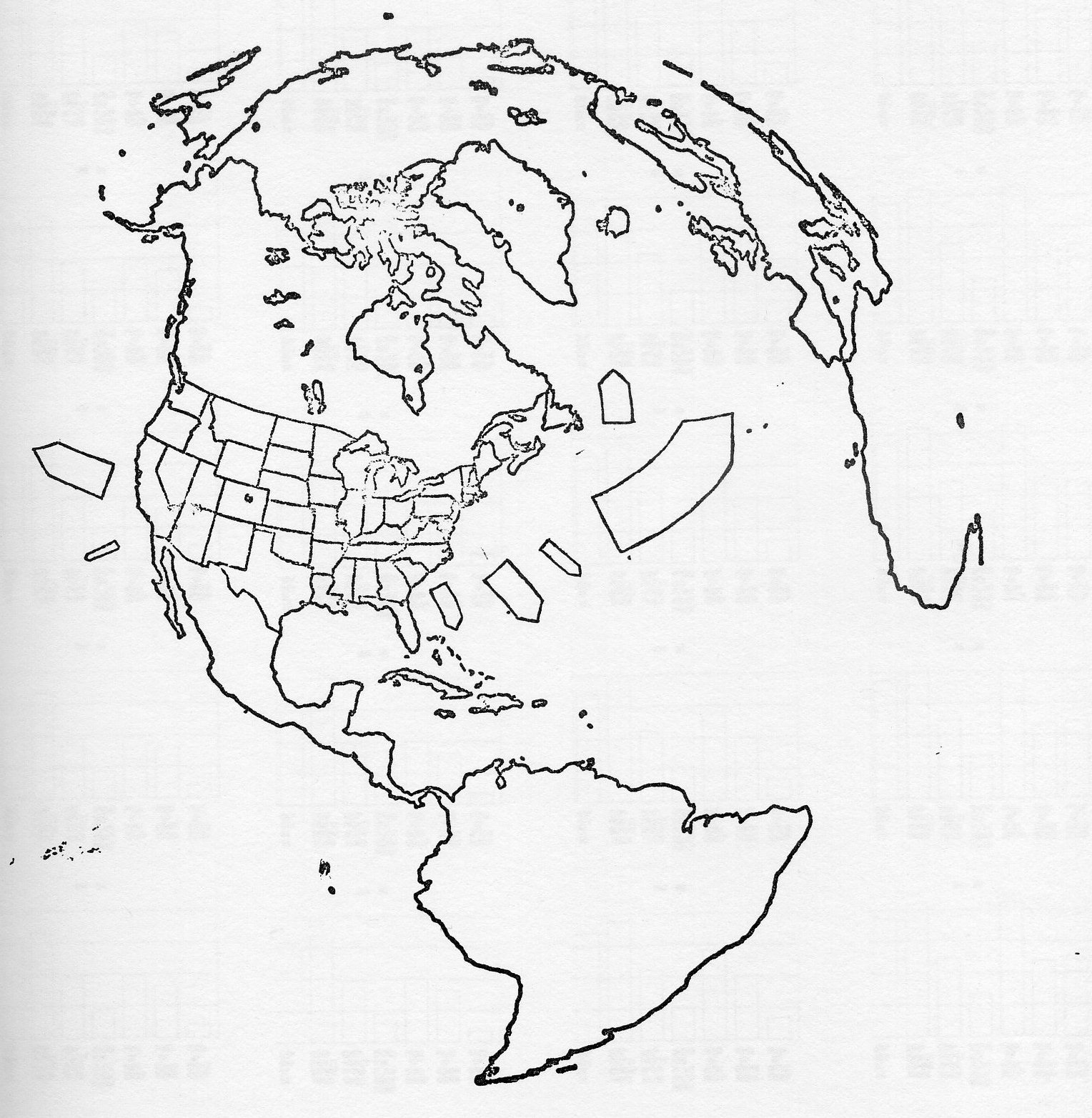

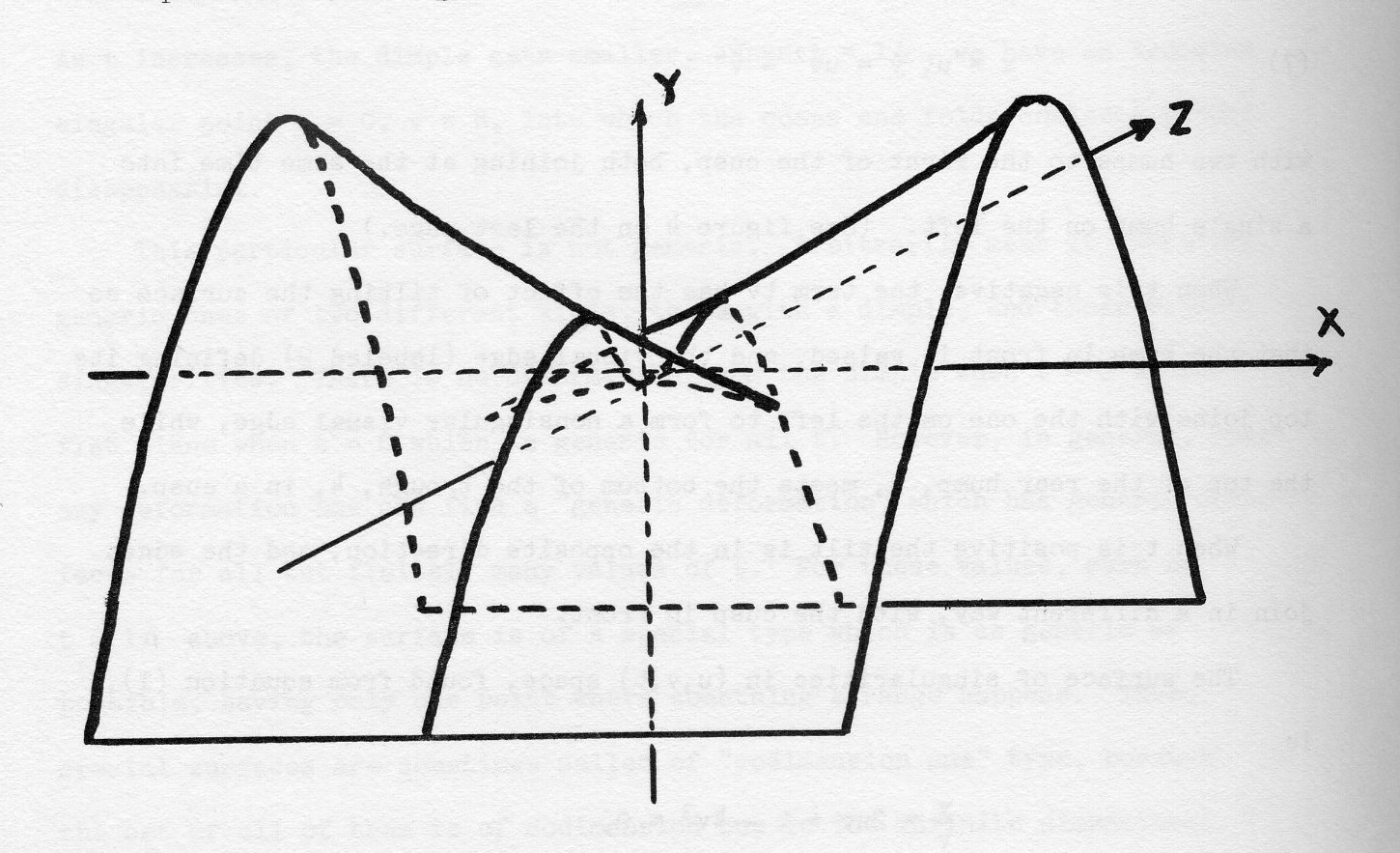

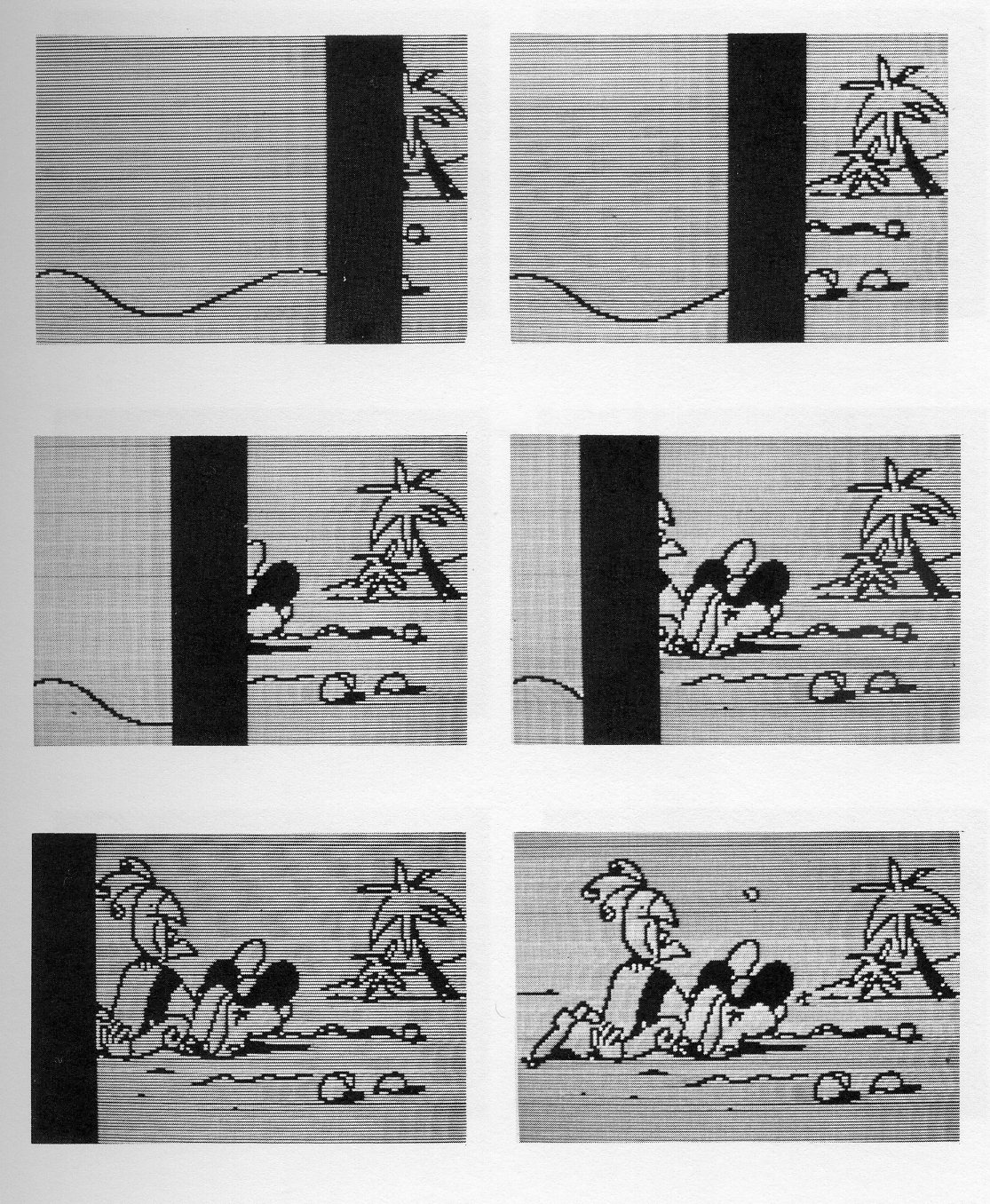

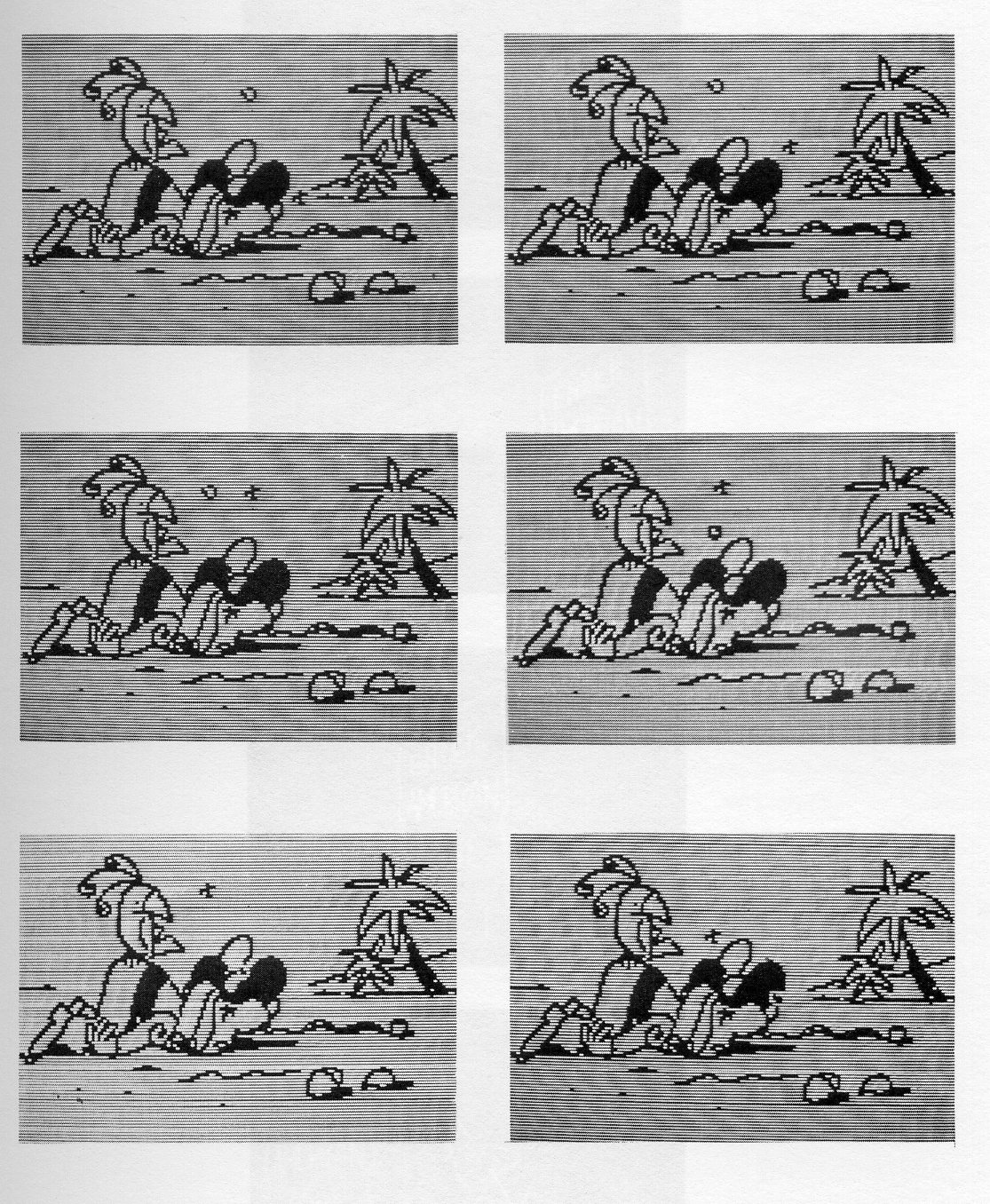

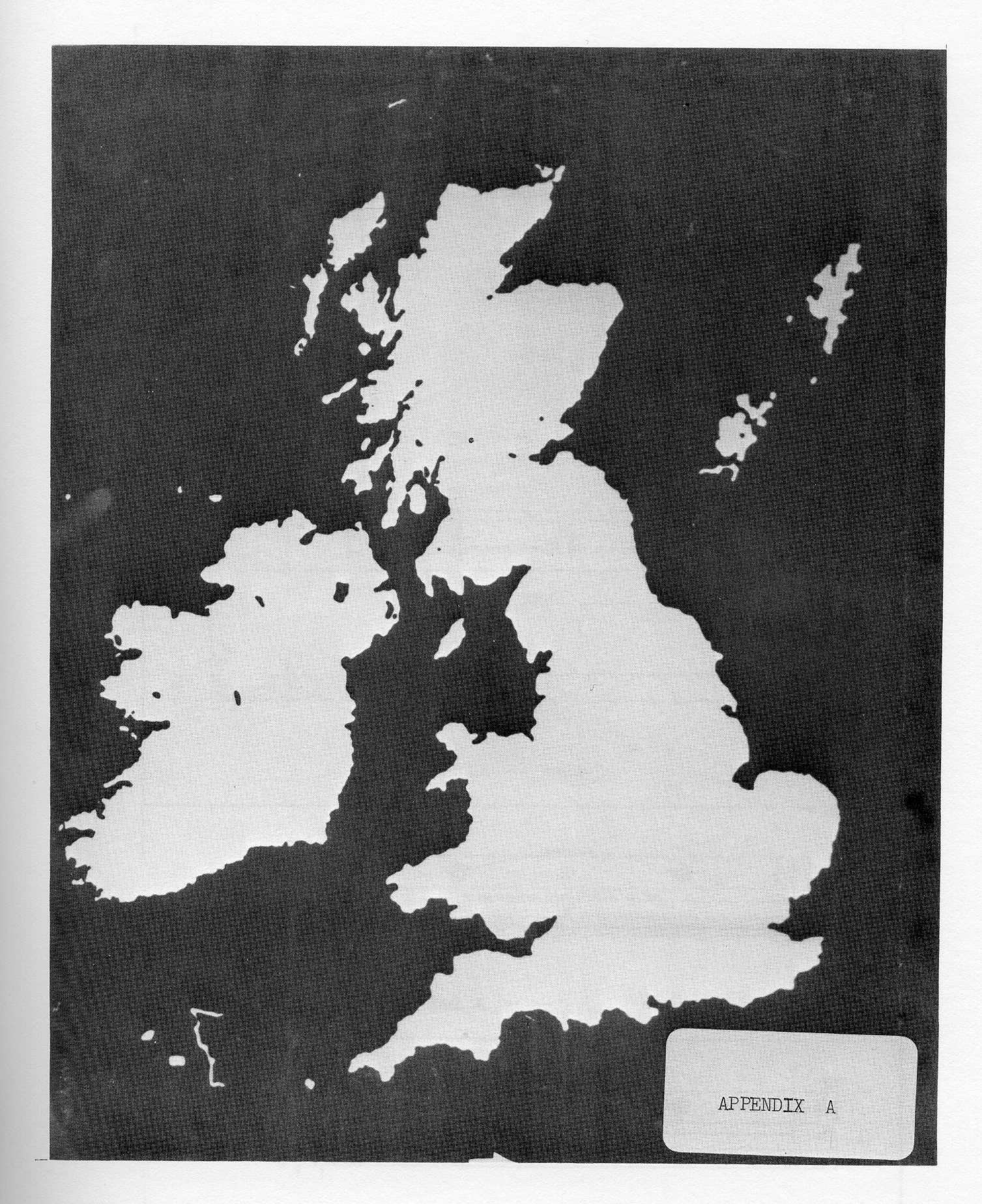

In information retrieval systems using microfiche or roll film, the connection between frames is some indexing method or scheme for ordering successive frames; with the movie, one looks for changes as a single parameter (not necessarily time) or many parameters change from frame to frame. The information sought is usually not specific values but rather trends and relationships. Frequently apparent randomness is observed in local movement but overall order is detected when the total environment of the motion is observed. Topographical displays allow visual comparison of company trends (say sales) with growth patterns or related business trends to determine where geographical deficiencies exist or can be anticipated in a company's efforts to catch part of a market. The view can be local or global as shown in Figure 3.

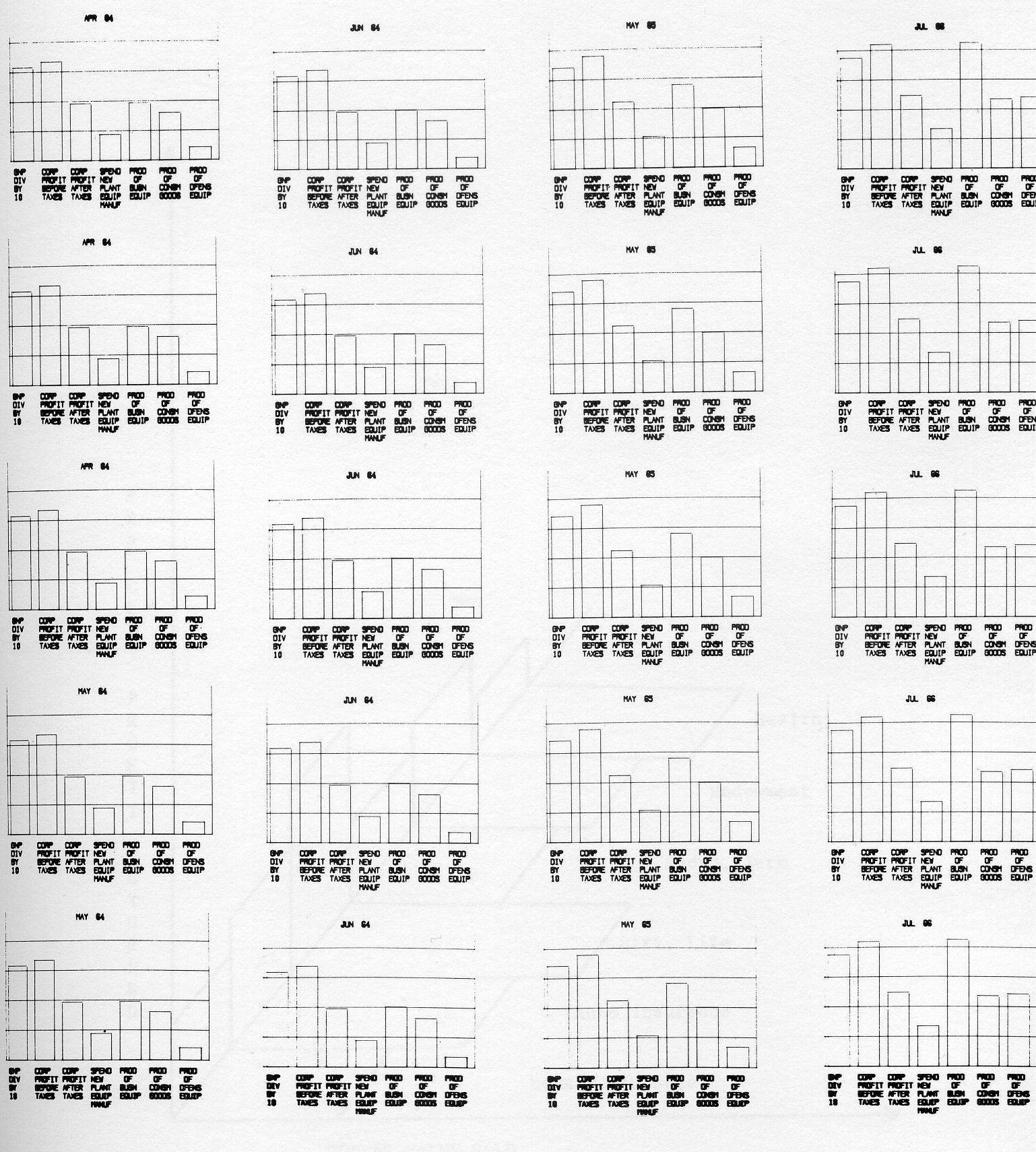

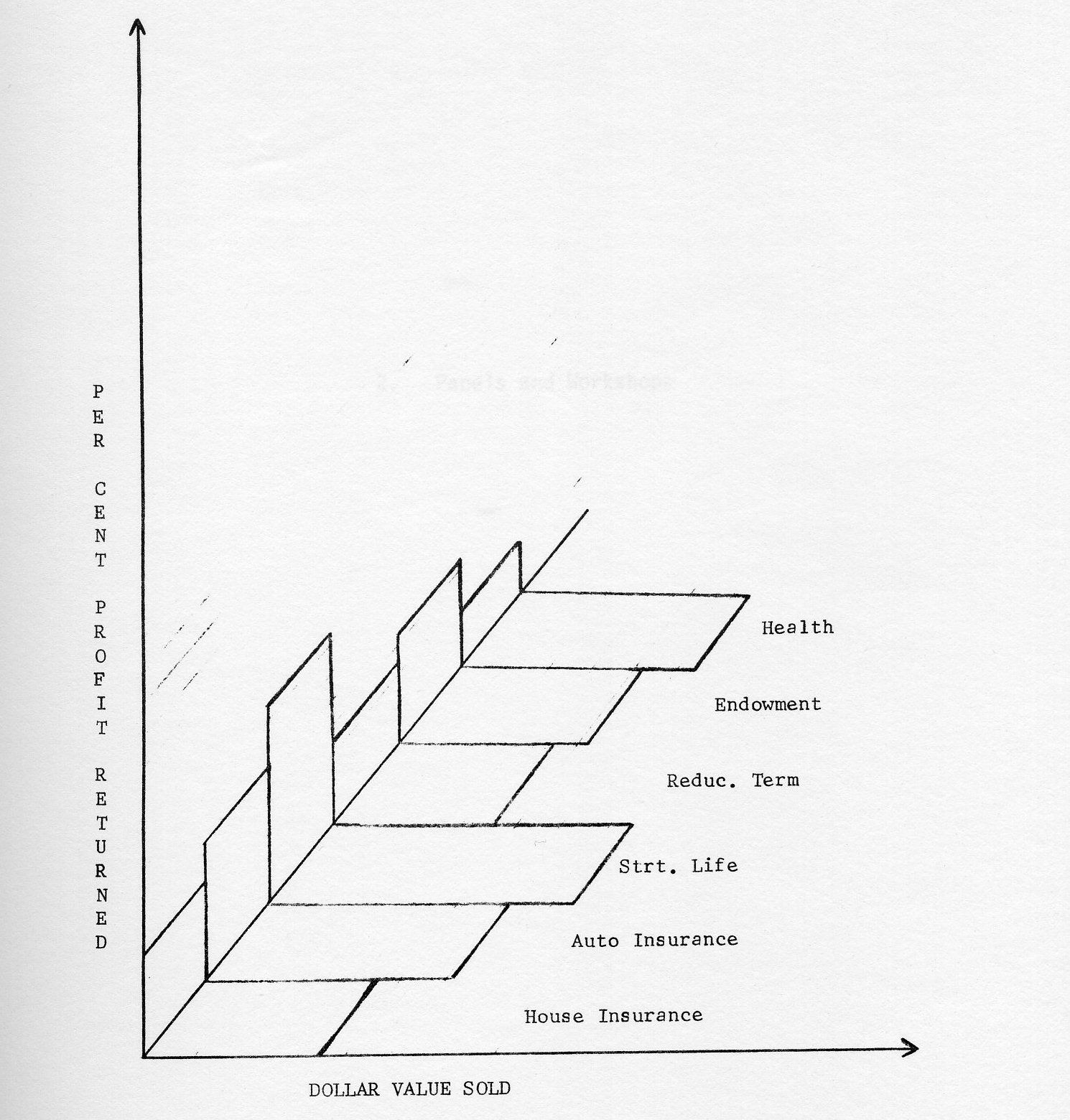

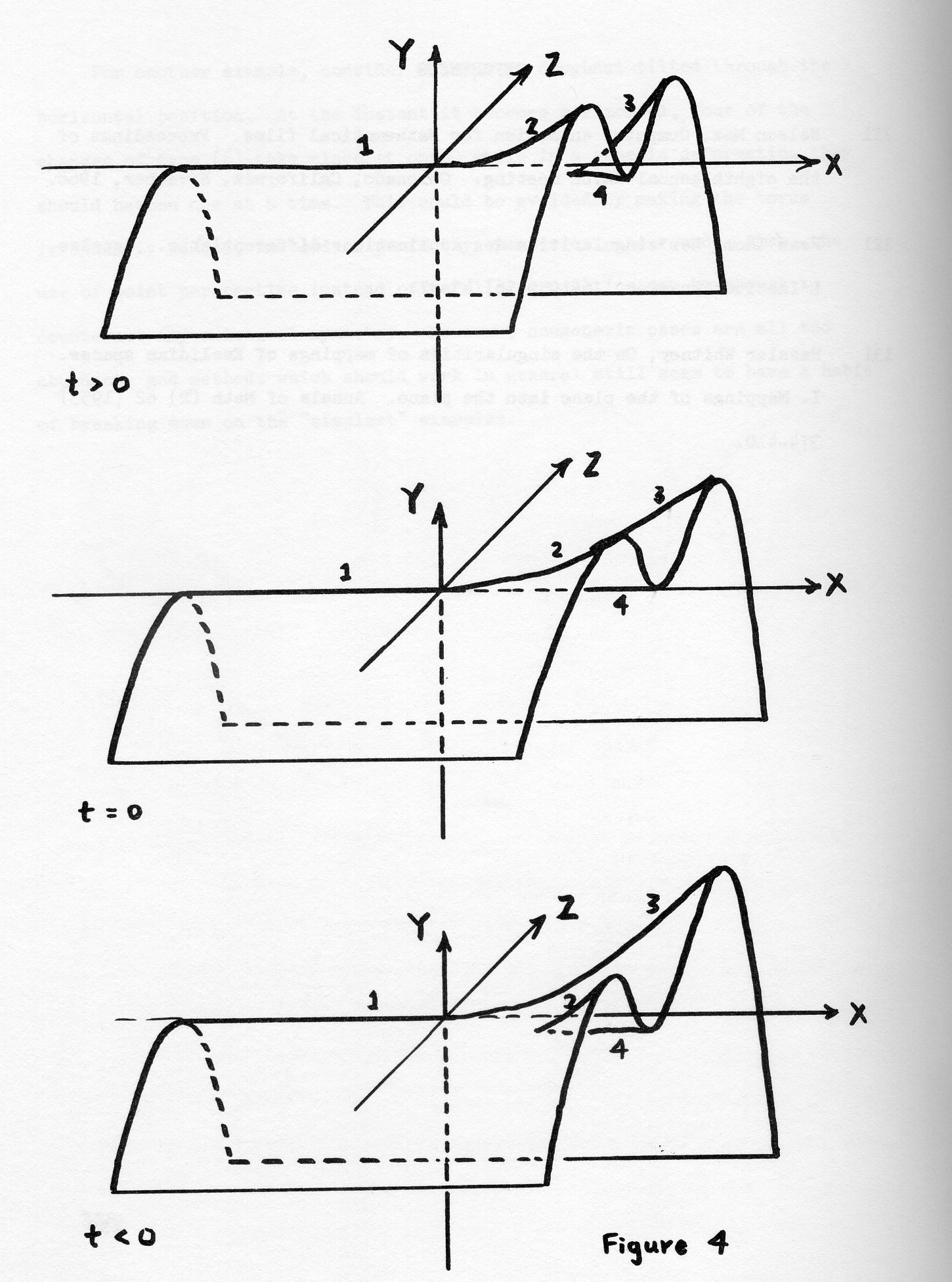

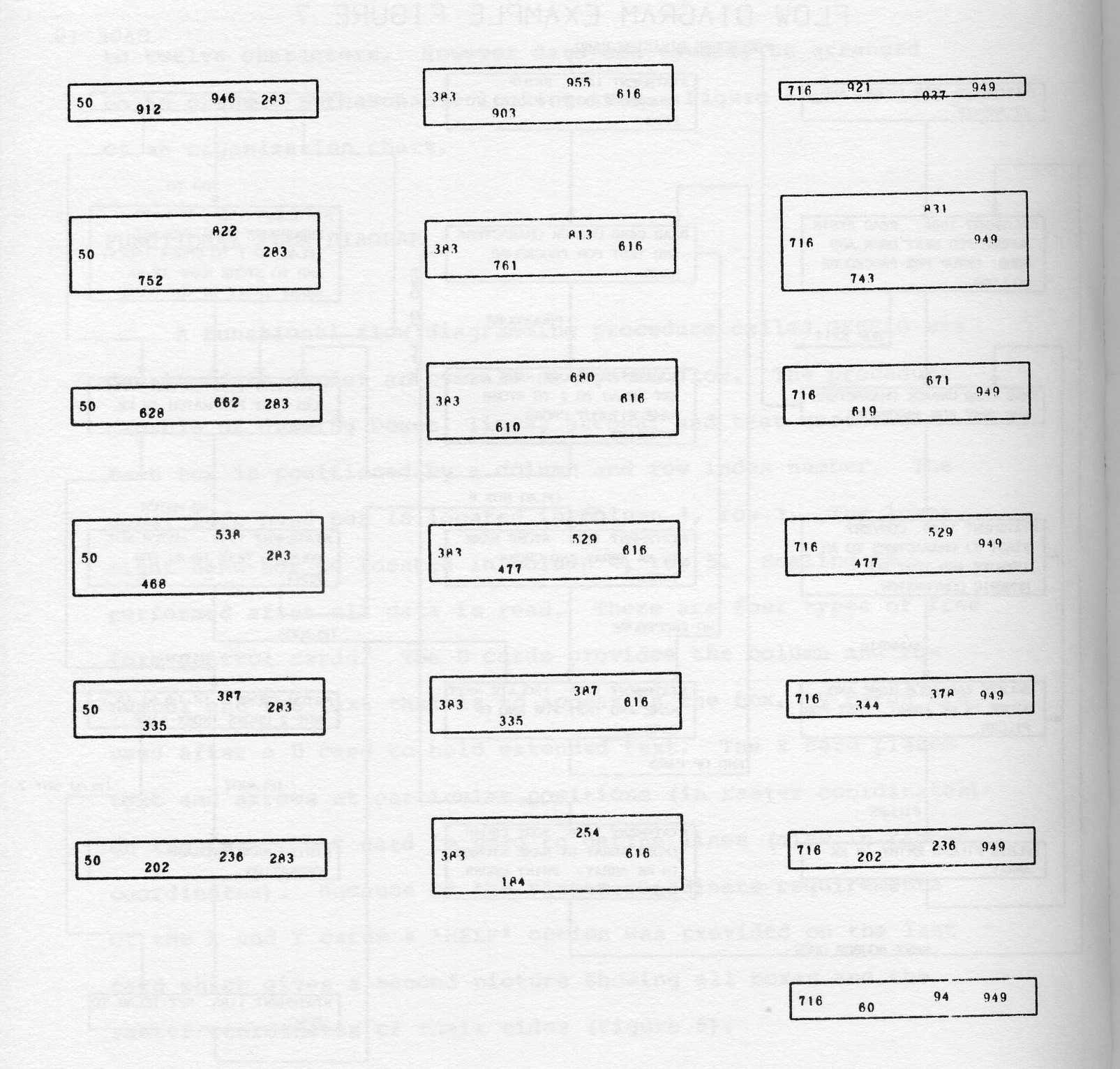

Even the bar chart can be made dynamic. Figure 4 shows several frames from a sequence depicting changes in the GNP over a five year period. Several corporate profit and production quantities for the same time period are displayed. Bar heights are computed to change smoothly between quarterly reported values. The motion of the bars over time can be superimposed with individual company performance records to show how the company is leading or lagging industry wide averages or perhaps not matching them at all. The same information displayed on a geographical basis would be of even greater interest to a management team.
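One way bar heights might be made to change smoothly between quarterly values is straight-line interpolation across the frames of each quarter. The sketch below assumes linear interpolation, which the text does not specify, and uses made-up sample values rather than the GNP figures of Figure 4. The function name is hypothetical.

```python
def interpolate_bar_heights(quarterly_values, frames_per_quarter):
    """Return one bar height per frame, moving linearly between quarterly values."""
    if not quarterly_values:
        raise ValueError("need at least one quarterly value")
    if frames_per_quarter < 1:
        raise ValueError("frames_per_quarter must be at least 1")
    heights = []
    # Walk each pair of consecutive quarters and fill in the frames between them.
    for current, following in zip(quarterly_values, quarterly_values[1:]):
        for k in range(frames_per_quarter):
            t = k / frames_per_quarter  # fraction of the way to the next quarter
            heights.append(current + t * (following - current))
    heights.append(quarterly_values[-1])  # finish exactly on the last reported value
    return heights


# Illustrative values only; these are not taken from the source.
sample = [100.0, 104.0, 102.0]
frames = interpolate_bar_heights(sample, 4)
print(frames)
```

Each reported quarterly value is reproduced exactly on its own frame, and the frames in between carry the bar from one value to the next in equal steps. A smoother curve (for example, one with continuous slope at the quarter boundaries) could be substituted without changing the surrounding loop.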

The simple bar chart animation of Figure 4 could be extended to any of several bar chart types, pie charts, statistical maps and charts drawn in projection. Figure 5 is an attempt to show how a frame from such a chart might be designed to allow comparison of disparate quantities.

The cost of generating business animation films depends on a) whether the output represents a new program or merely an increment of effort beyond a currently made computer run, b) the amount, if any, of post computer production work required (say packaging in loops to send to salesmen across the country), c) the general utility of the program for repeated use.

In the end, pursuit of business animation has to be dictated on the basis of an evaluation of the worth of the information displayed versus its cost.

DatagraphiX has announced in the past year a collection of software packages to support its Micromation printers called BEST, Business Equipment Software Techniques. This talk covered the major packages in BEST as far as the purpose, capabilities, limitations, and basic design of each. The individual packages covered included MPS (Micromation Printer System), DOW (DatagraphiX Output Writer), DART (DatagraphiX Automatic Retrieval Techniques), and VIP (Verification of InPut). Each of these packages is better suited to solving a certain class of problem within a particular computer environment. The appropriate types of problems and computer environments were pointed out for each.

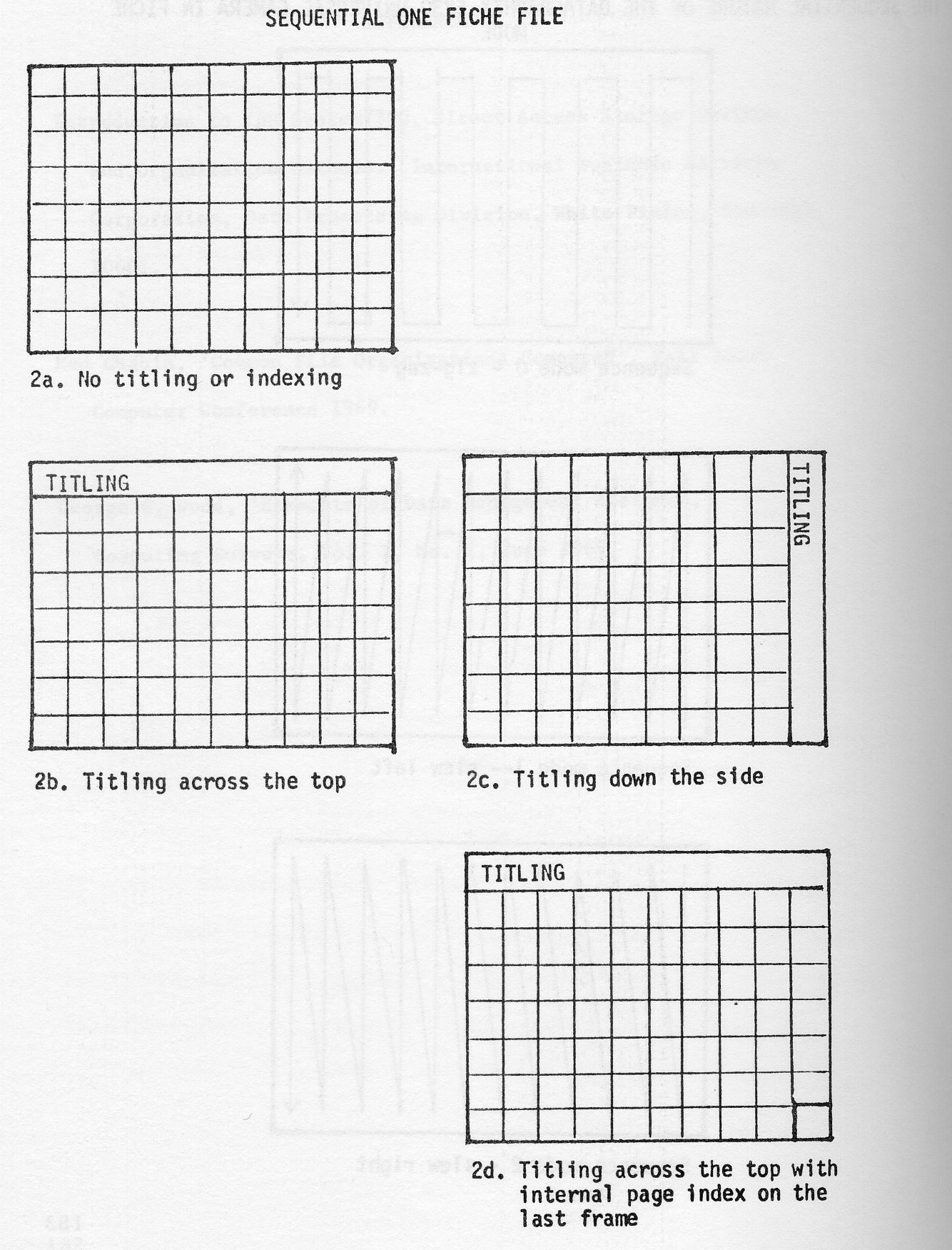

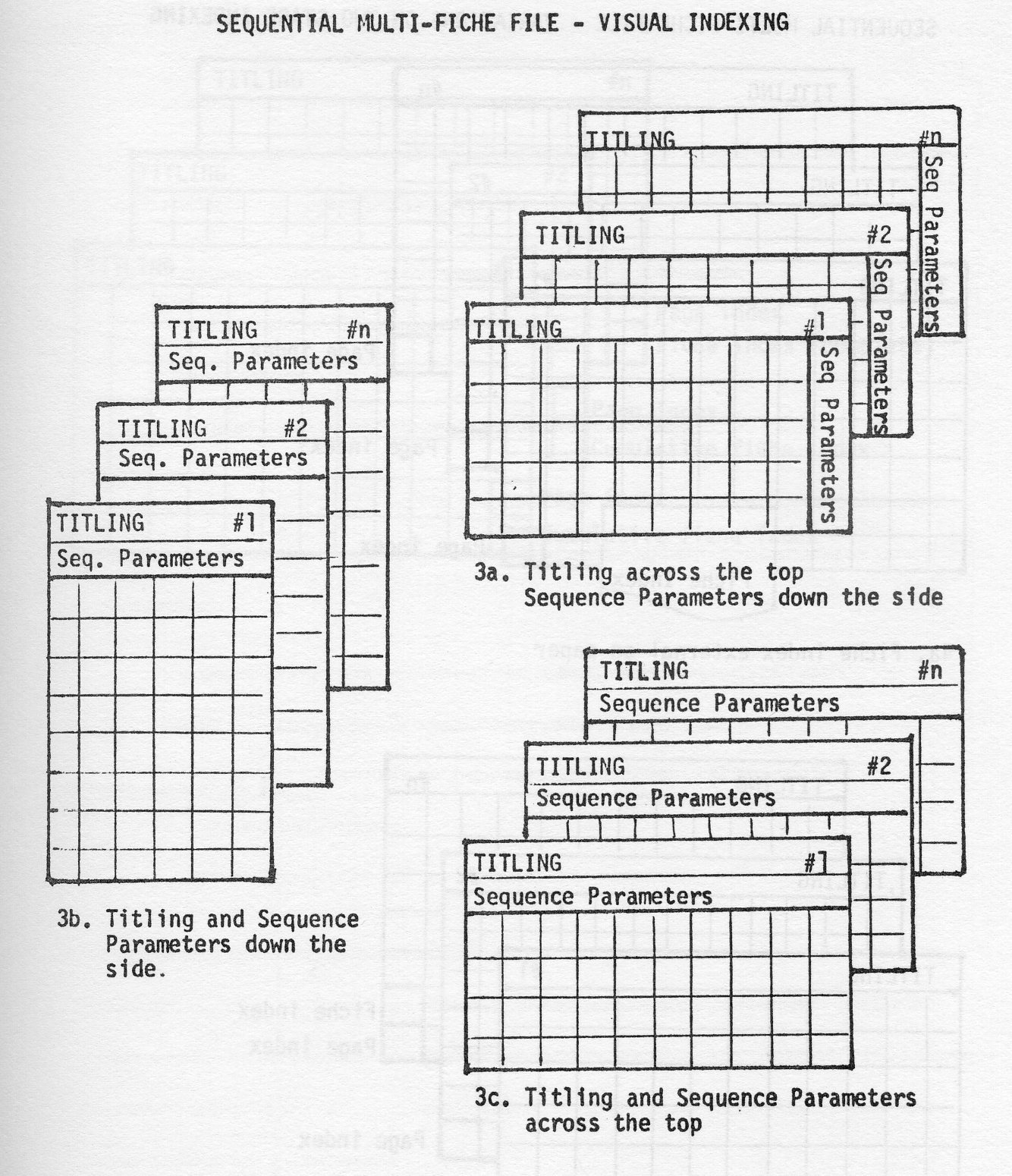

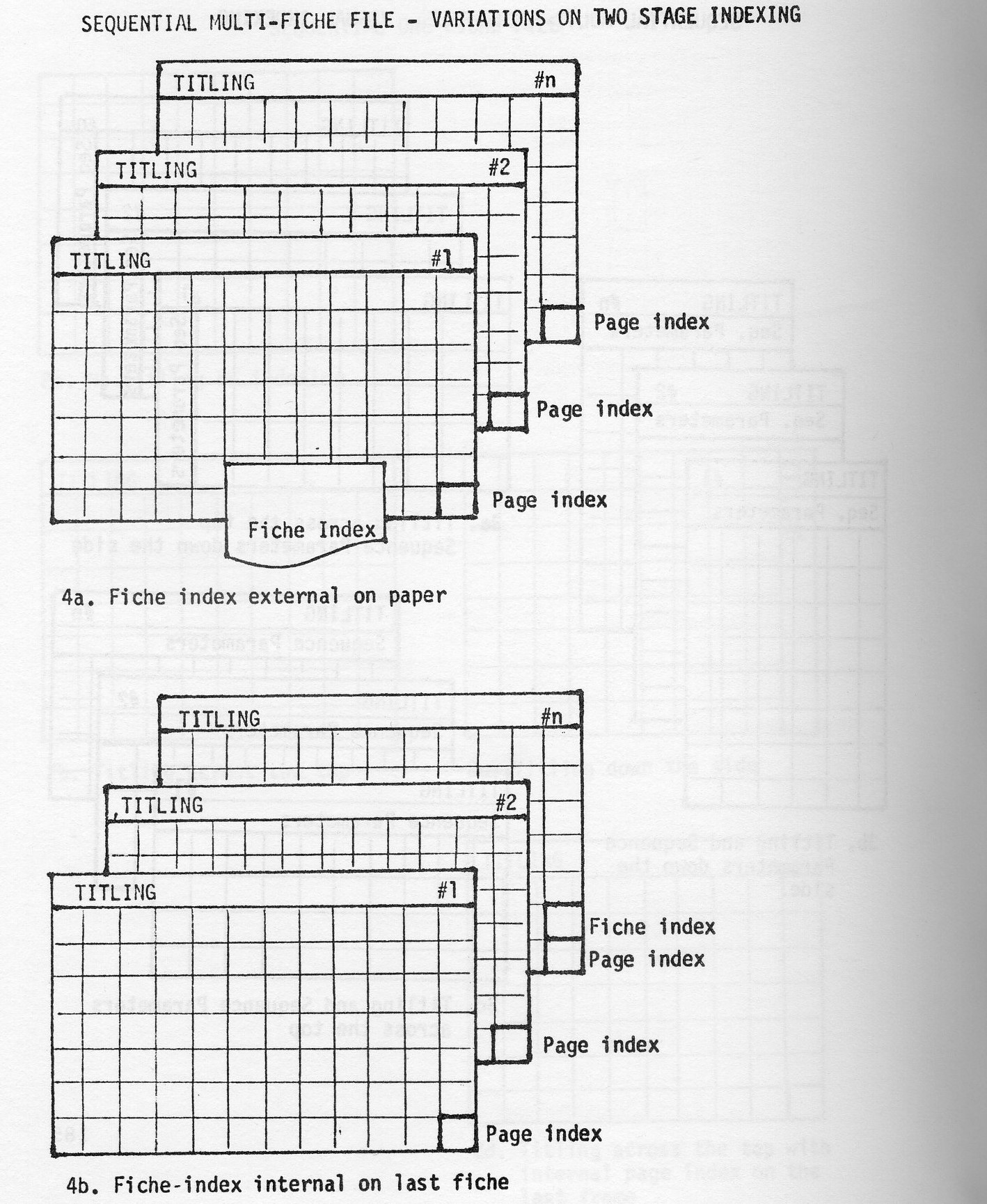

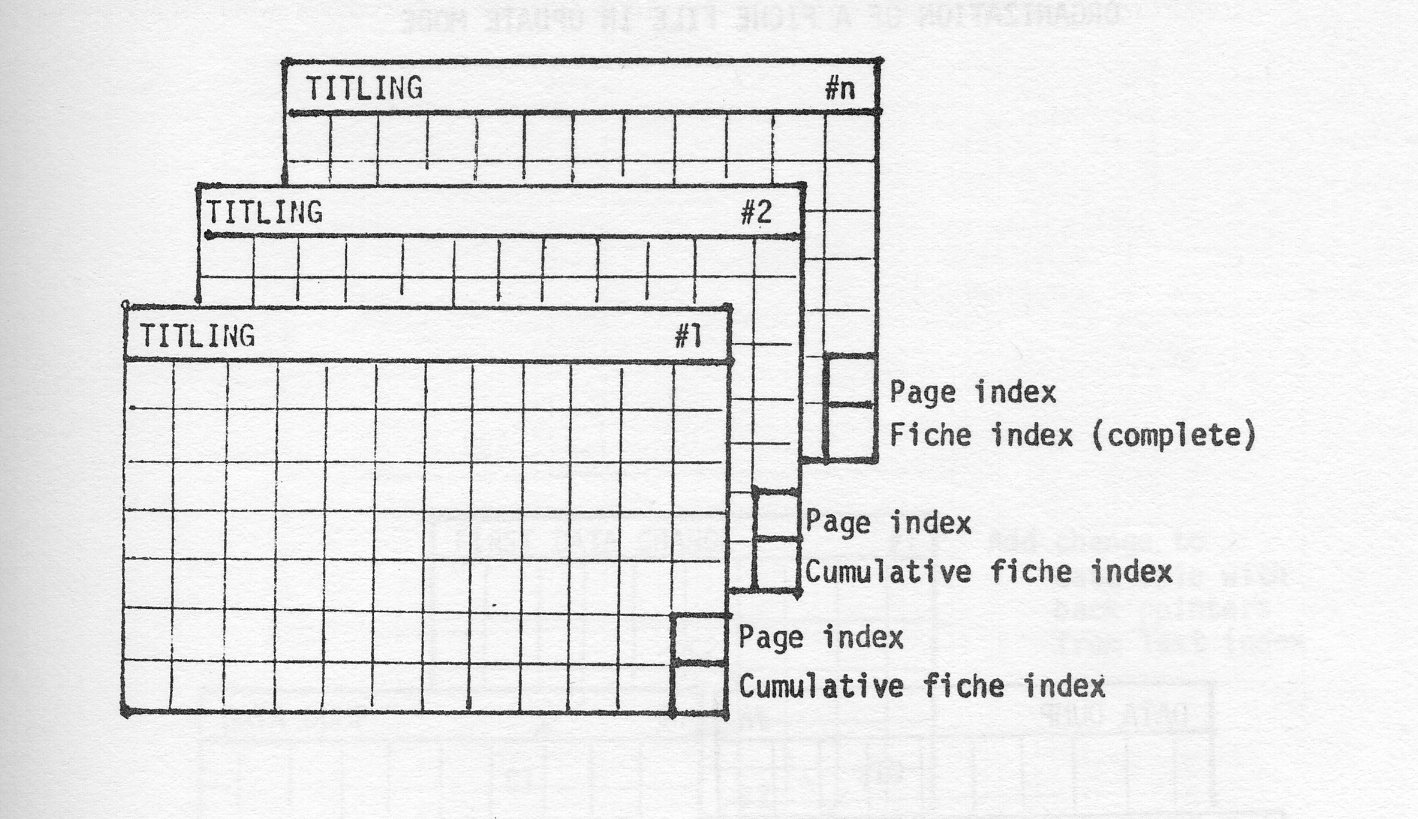

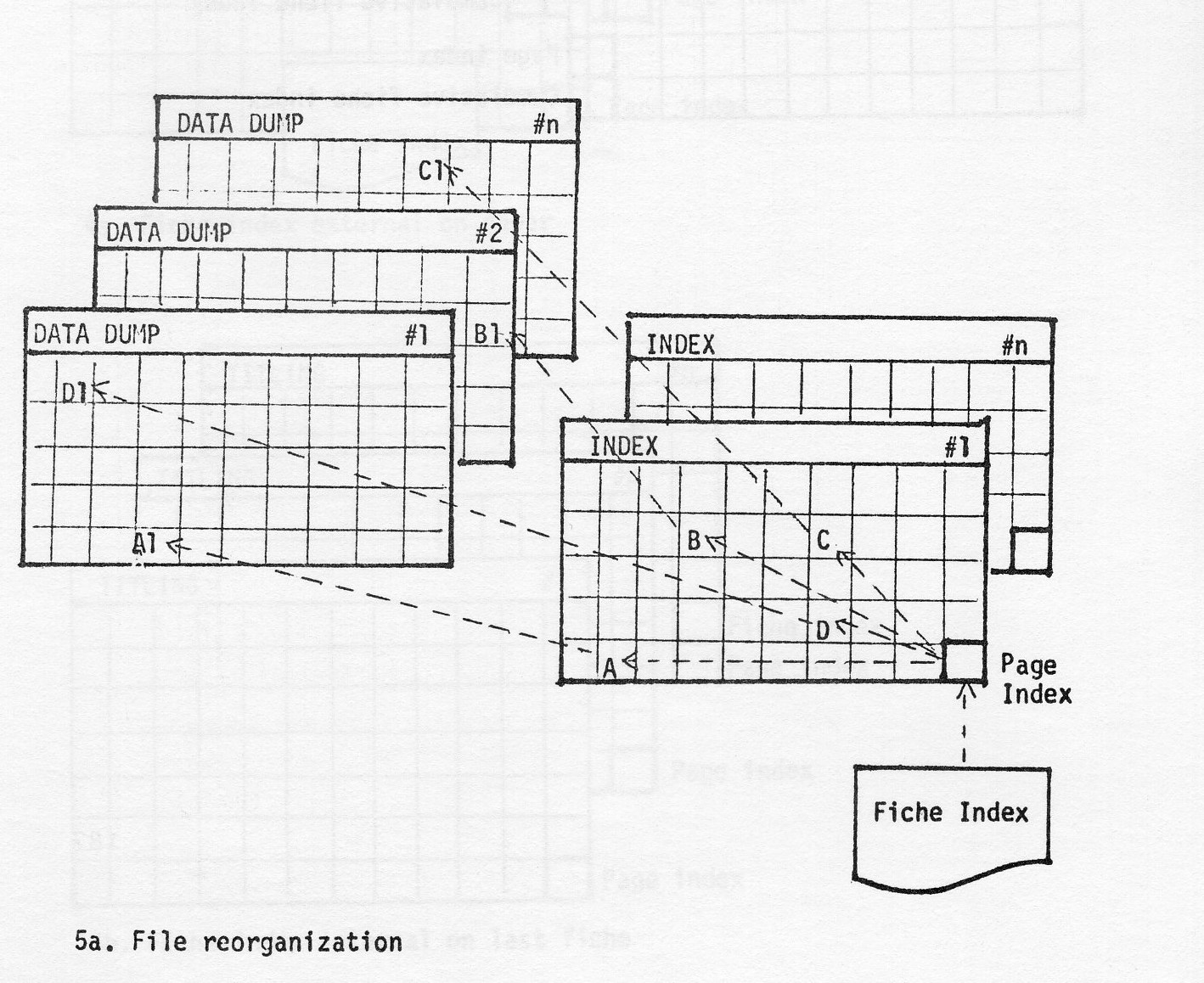

Also discussed at some length were the various general approaches to programming for a Micromation printer. These include use of LPS (Line Printer Simulation) versus standard mode data; use of direct generation of Micromation format from the problem program versus post processing; and use of an offline recorder versus online. The advantages of the Sysout Writer approach used in DOW were especially noted. The problems inherent in fiche management regarding generation of hand readable titles and fiche indexes were reviewed and approaches to solving these problems were discussed.

The session is divided into three segments:

Throughout the evolution of DatagraphiX microfilm recorders, the customer has indicated the needs of the market, primarily through UAIDE. The 4020, the first DatagraphiX commercial microfilm recorder, although developed for scientific work, has a straight printing throughput capability of 7,000 lines a minute, ten times faster than the state-of-the-art impact printers. Through application seminars of UAIDE, DatagraphiX (then Data Products Division of Stromberg-Carlson) realized that a market existed for a faster alphanumeric microfilm recorder at a much lower price than the 4020. This information gave birth to the 4400 - DatagraphiX's first total alphanumeric business recorder.

Social Security required some additional features on their 4400 such as a Polaroid Camera, faster transfer rates, tape backspace, and write check, so DatagraphiX built the 4410 to fulfill their needs. Another machine, the 4411 with other special features was configured for the Census Bureau about the same time.

These two requests highlighted the fact that a single machine would not take care of the business spectrum's total needs. The DatagraphiX 4360 and DatagraphiX 4440 resulted.

In the past year, several new features have been announced and installed on these two business recorders:

The above six new options have all been tested, installed, and are working satisfactorily. Some design bugs were found, as is the case with any new computer equipment. Mr. Reynolds of Bank of Wachovia was instrumental in helping us uncover a 360 LPS skip immediate space problem. We had designed it as skip immediate print.

Two additional features, not options, were also introduced this year as a result of customer demands:

Recently DatagraphiX has made various special engineering modifications for customers. Some of these may have appeal to the general business COM market.

Response to these features will help mold the future of DatagraphiX's present product line and possibly the next generation of Micromation.

One of the tasks of the Business Systems Committee is to provide a medium for the mutual exchange of information concerning business applications. The main objective of this workshop is to determine the problems of micromation systems documentation, and to update the purposes and objectives of current efforts to develop a business system manual within UAIDE.

During the past year, the Business Systems Committee has attempted to accumulate documentation to be used in the proposed manual. A first attempt was made on September 18, 1969 by the Michigan Chapter of UAIDE. At the last board meeting, each of the UAIDE board members was questioned to determine the purpose and objective of a business systems manual. No one could agree on just what the intent of the manual was.

The proposed business systems manual was considered in terms of what role this type of documentation must play. What type of audience are we considering?

(The audience was asked to comment on the role of a business systems manual based on various audience levels.)

After the audience levels were determined, the chairman handed out the objective setting worksheet, and the content sheet of the business systems manual proposed by the Michigan Chapter.

Accumulate and maintain a Business Systems Committee (BSC) Manual. This manual will have five sections, each section being a consolidation of documentation submitted by UAIDE members. The sections and their content follow:

Name: ________________ Company: ________________

The following items are considered to be important in terms of providing information to the interested reader. Circle those topics which you think should be included. Draw a line through the items which should not be included and add new topics to the bottom of the list.

Name: ________________ Company: ________________

The following items are considered to be important in terms of providing information to the Systems Analyst. Circle those items which you think should be included. Draw a line through those items which should not appear and add new items to the bottom of the list.

Name: ________________ Company: ________________

The following items are considered to be important in terms of providing information to the programmer. Circle those items which you think should be included. Draw a line through those items which should not appear and add new items to the bottom of the list.

Name: ________________ Company: ________________

The following items are considered to be important in terms of providing information to the COM equipment operator. Circle those items which you think should be included. Draw a line through those items which should not appear and add new items to the bottom of the list.

The chairman stated that this panel would be a continuation of the workshop on Business System Documentation held on Tuesday, October 20, 1970. The purpose of the panel discussion was to uncover those topics which need to be added to the system manual.

The panelists were asked to discuss:

Tom Doran - Chairman "Tink" Henry Wayne Hilton Anzelo Zanis

R. Peoples - Chairman J. Voltiner R. Quinn E. Zamula M. Bickerton J. Landermilk W. Kidd

R. Conti - Chairman D. Gust M. Woods A. Smith

A. Collard - Chairman R. Reynolds III H. Brown N. Stable H. Wallech B. Walton

The following items are considered to be important in terms of providing information to the interested reader.

CONSIDERATIONS IN DESIGNING A MICROFILM SYSTEM

CONSIDERATIONS FOR PROGRAMMING A MICROFILM SYSTEM

The first seven items listed below are your original suggestions relative to providing information to the COM equipment operator. These are supplemented with the comments of the subcommittee members.

It is proposed that the Business System Committee develop, as part of its manual, some guidelines aimed at managers of entities since they determine ultimately how effective microfilm will be in their organization.

The guidelines would, in non technical terms, explain:

Panelists:

Harley Brown - Datagraphix Robert Conte - Insurance Company of North America G. Tink Henry - Oldsmobile Division of G.M. Harry Wade - Social Security Administration Angelo Zannis - Ford Motor Company

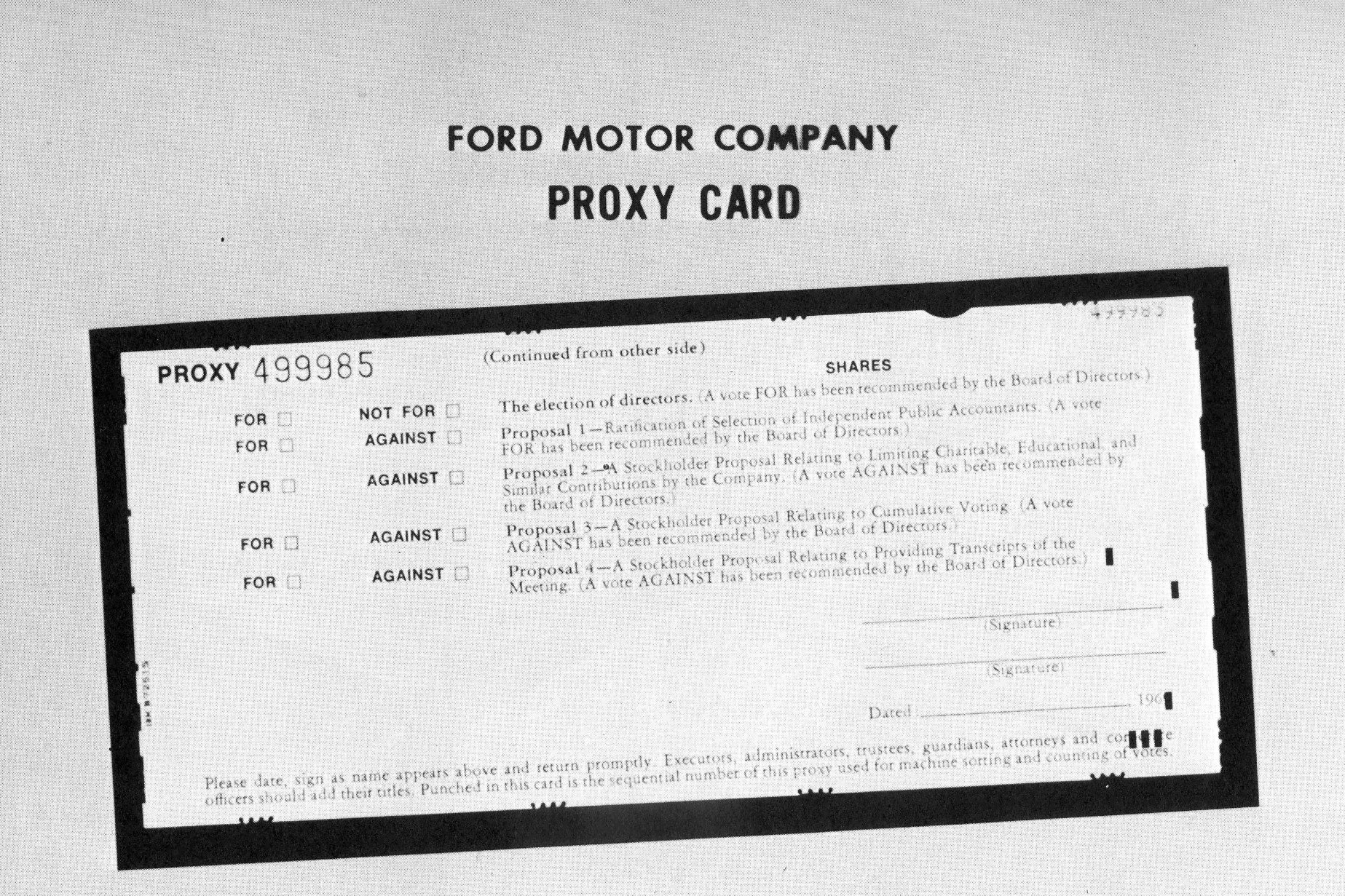

This morning I will be describing to you how we are helping the Stockholder Records Department at Ford get out from under the avalanche of paper produced by today's computers.

During the past five years, the stock market has experienced wide fluctuations in share trading volume. There have been several days in which 19 million or more shares have changed hands. More recently, the market has hit trading volumes where 8 million shares were considered to be a good day's activity. We've read or heard of the paper log jams of 1966 through 1968, resulting from the deluge of persons entering the market - and of the steps taken by the brokerage houses to expand their back room facilities to handle the volume. Brokerage houses today have been forced to curtail their expansion efforts due to a lack of activity. The paper explosion and activity fluctuations in the brokerage houses had a ripple but direct effect on the transfer and record keeping operations performed by banks and by individual companies set up to handle their own Stockholder Record Operations.

At the end of 1966, we at Ford revised our Stockholder Record Keeping System into a highly sophisticated computerized operation that, among other things, is responsive to the demands created by fluctuations in activity volume. In changing the system, we also expanded our Stockholder data base to incorporate far more comprehensive transaction information within one master file. The expanded data base enabled us to develop other sub-systems related to stockholders that had previously been handled manually or on simple EDP equipment. Our discussion today concerns itself with a description of the evolutionary (or, to use perhaps a more vivid adjective, metamorphic) change brought about in one of these sub-systems - The Proxy System.

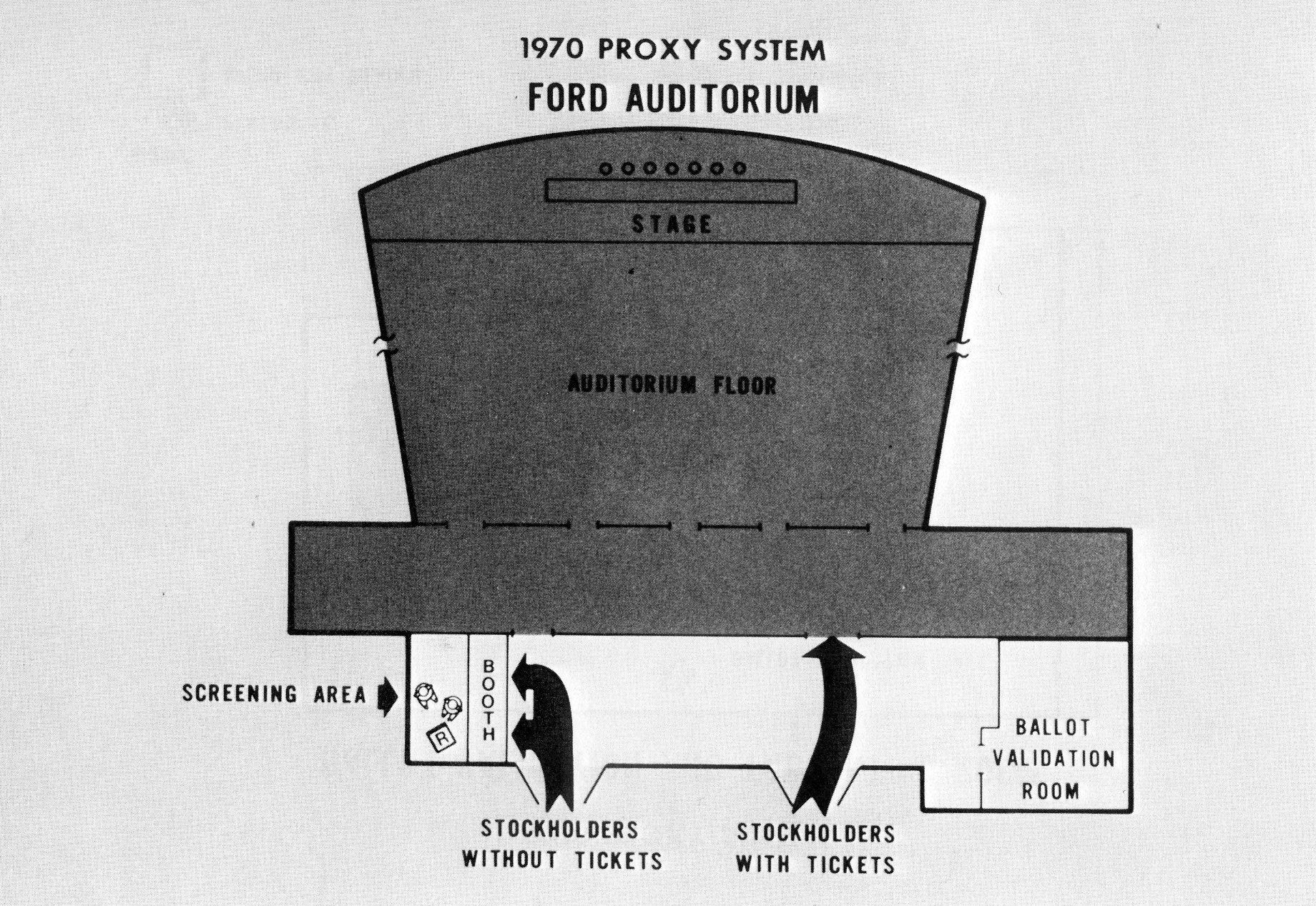

Consistent with other companies, the Ford Motor Company holds an annual stockholders meeting. Every stockholder is invited. Our meetings are held in the Ford Auditorium located in downtown Detroit - about 12 miles from our Central Corporate Office. Approximately 1,200-1,500 people attend these meetings each year. At the meeting, the events of the preceding year and forecast objectives and developments for the coming year are discussed. In addition, a portion of the meeting is set aside to enable stockholders to cast their ballots on proposals brought before them by management and individual stockholders. Stockholders have the option of mailing in their proxies prior to the meeting or casting their ballots at the meeting.

The Administrative activities performed by the Stockholder Records Department with respect to the Annual Meeting can be divided into two time phases.

The first phase includes those activities performed prior to the day of the meeting. They include:

The second phase includes those activities unique to the day of the meeting. These include:

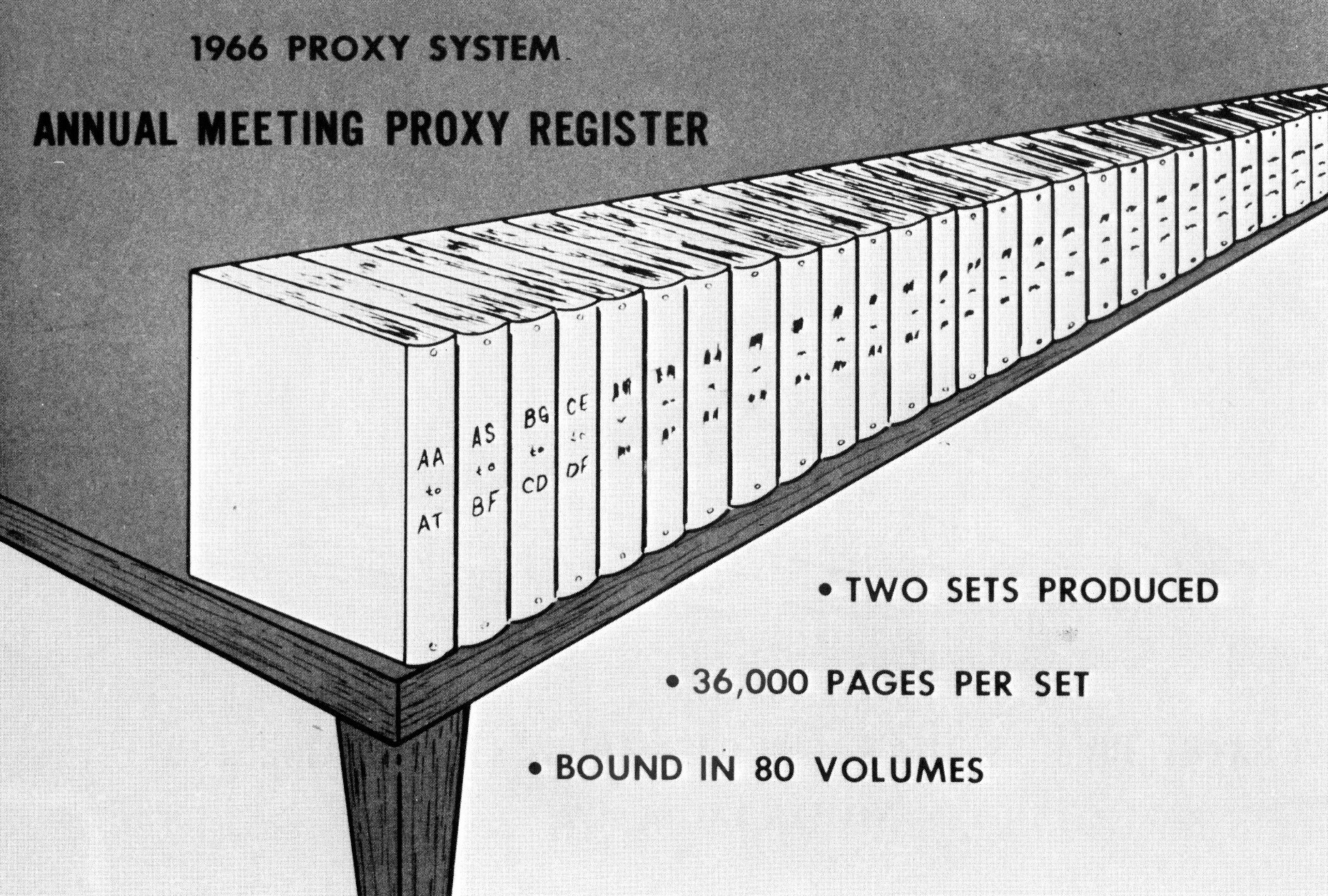

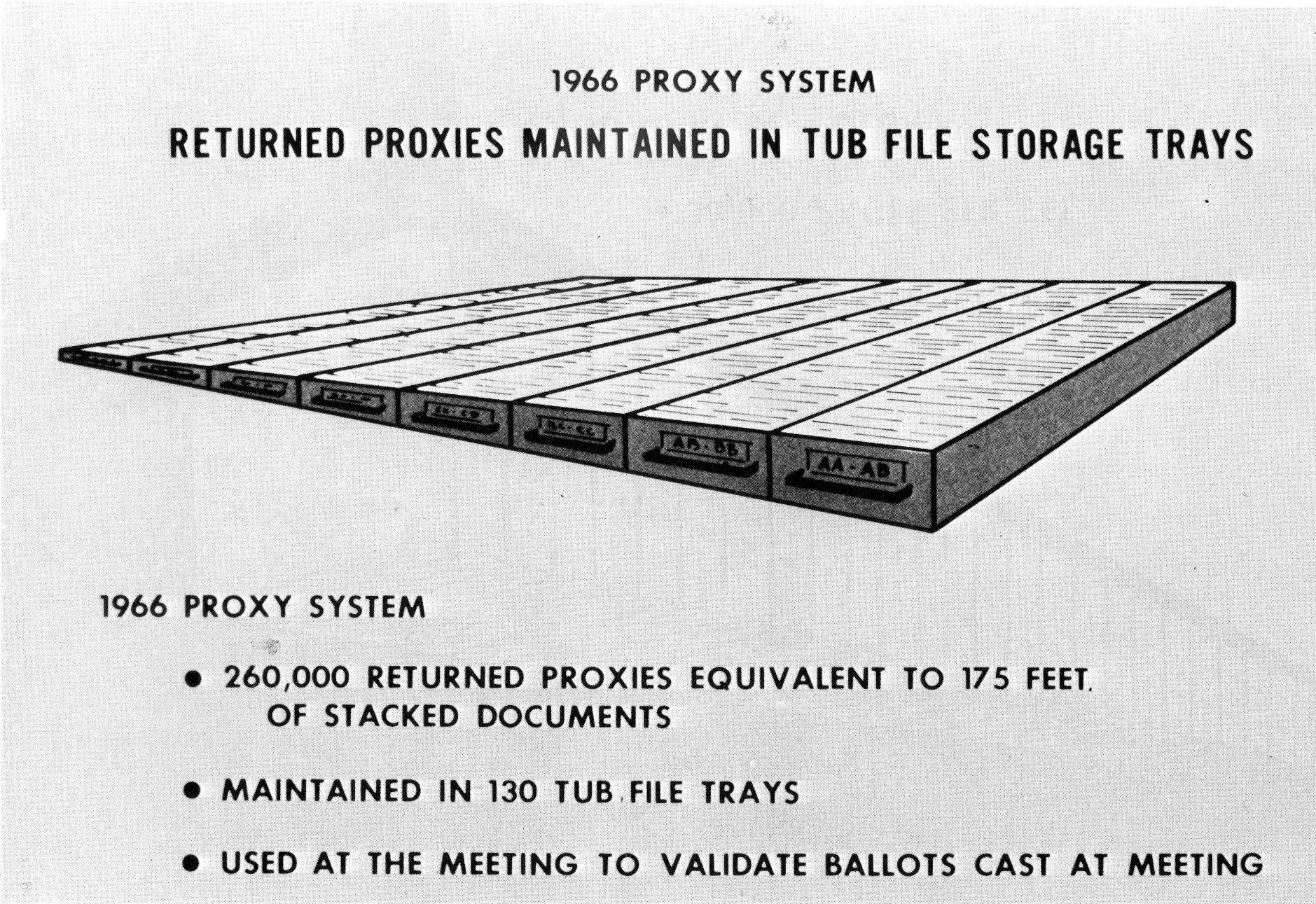

In observing the operating system used in 1966 on the day of the meeting, we noticed:

To summarise, the 1966 operation I have described was costly, cumbersome, required a substantial amount of manual effort, and the retrieval time was agonizingly slow.

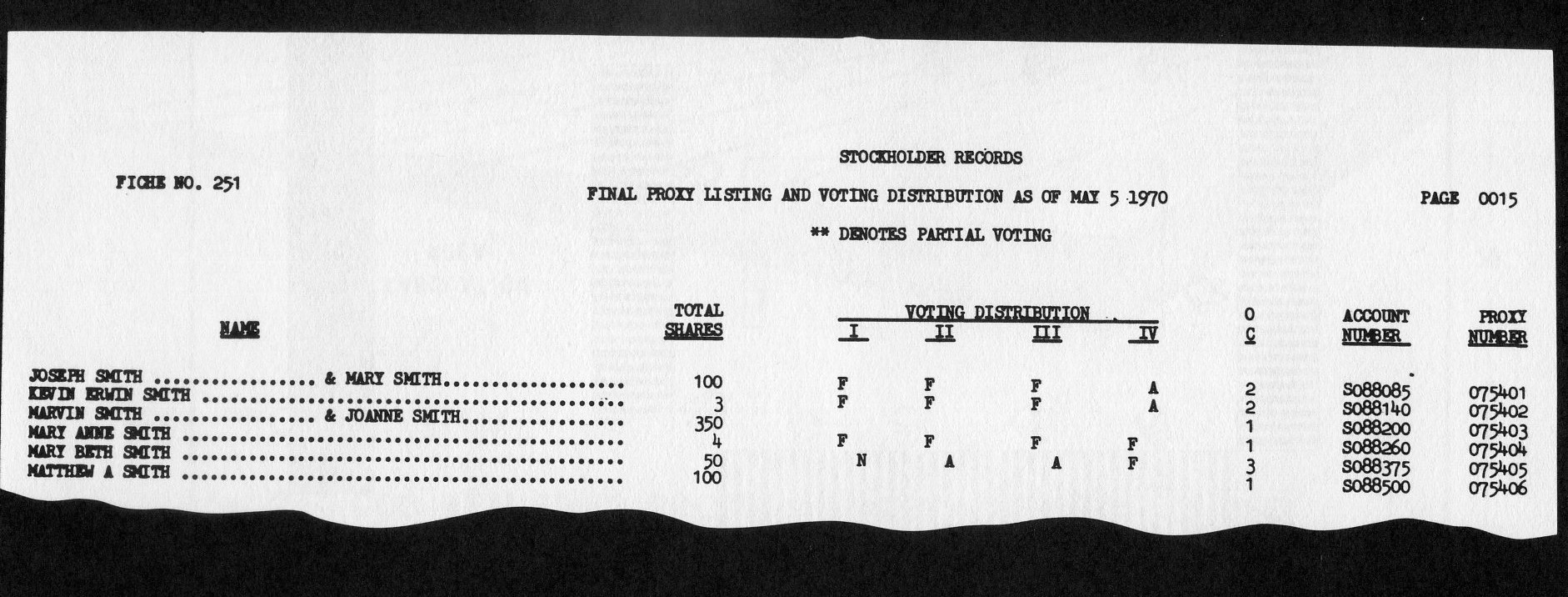

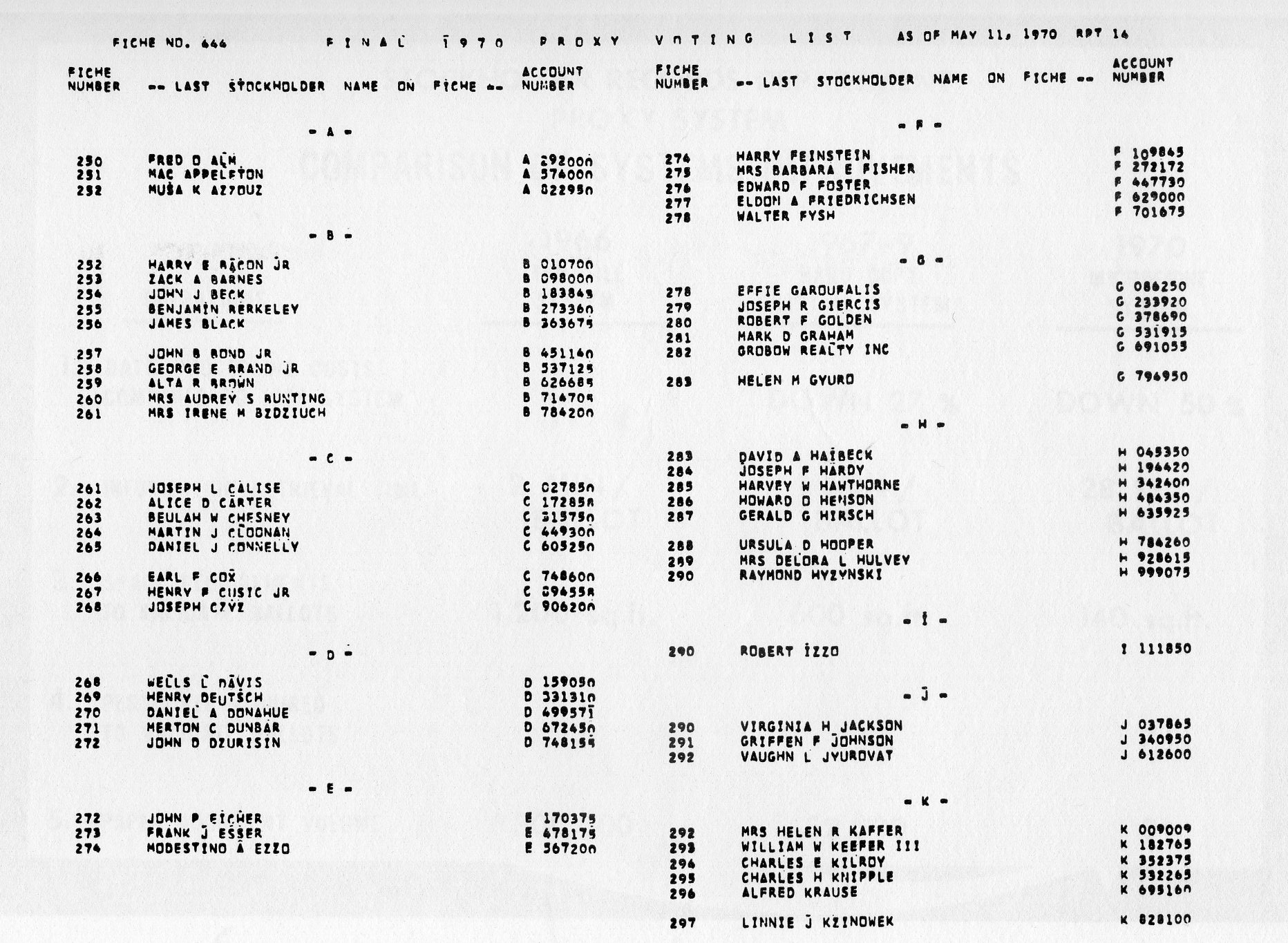

We changed that system. The system designed for the 1967 meeting eliminated the need for using the actual returned proxy cards in the validation procedure. Instead, a 16,000-page Final Voting list produced by computer was provided to replace the quarter of a million proxies. This listing indicated whether or not a proxy had been returned for each stockholder. It also showed how each proposal appearing on the proxy card had been voted (Fig. 6).

Installation of the revised system provided several benefits:

Subtle modifications to the computer programs in 1968 and 1969 improved computer process throughput time and also provided the Stockholder Records Department with more comprehensive statistical reports. Although the changes brought about by the revised paper system improved the efficiency of the operation, the large volume of printed data still made stockholder identification and ballot validation both costly and cumbersome. During the winter of 1969, we began exploring the possibility of using microfilm to replace the paper-generated Proxy Register and Proxy Voting list.

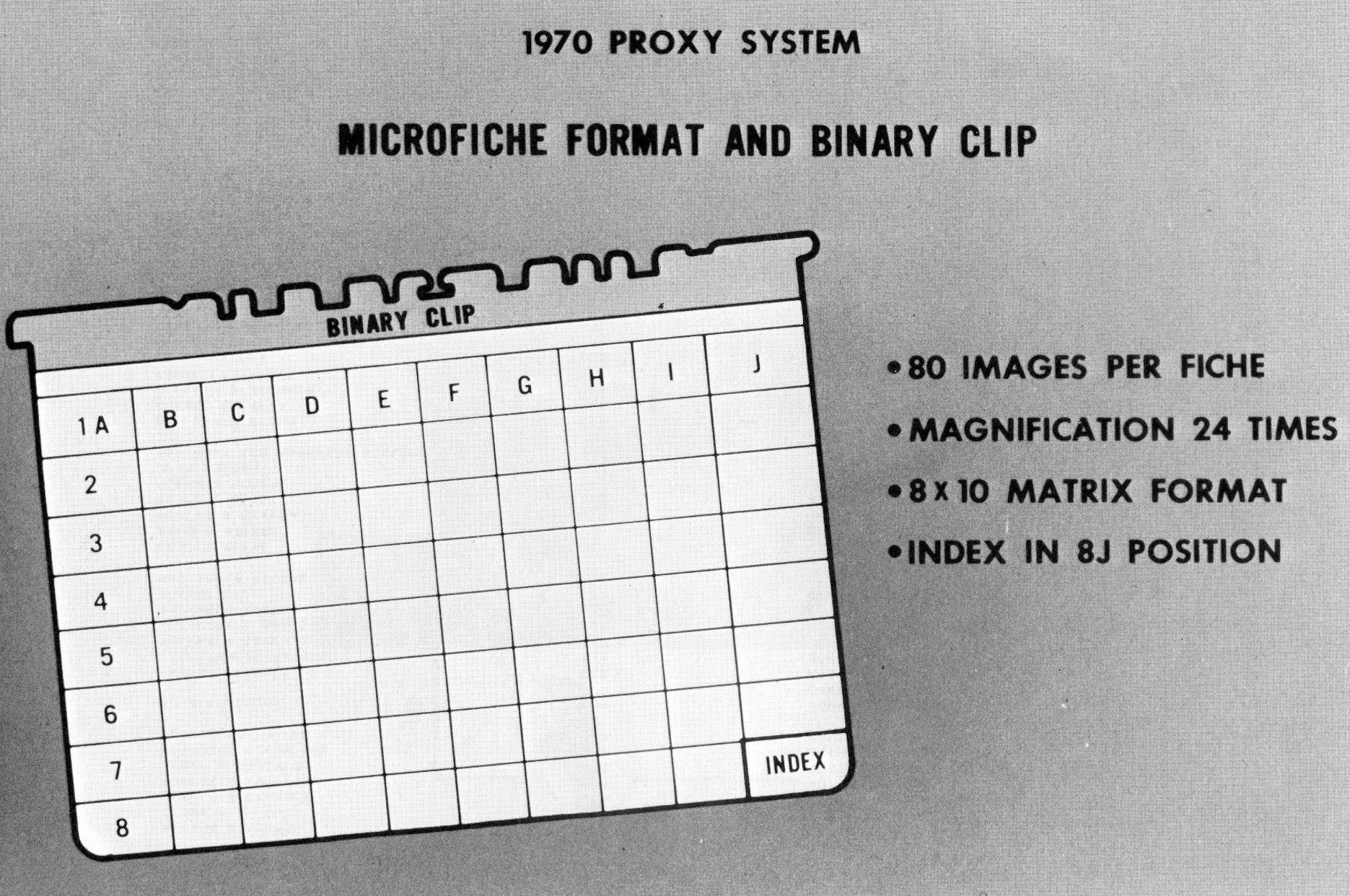

The heart of this system was, of course, the microfilm reader. Our specifications were very demanding - the reader had to be portable, easily operated, have random access capability, have absolute file security, be reasonably inexpensive and reliable. After evaluating several microform methods and readers, we decided that the system be designed around an Image Systems Microfiche CARD reader (Fig. 7). For those of you unfamiliar with this equipment, allow me to digress a few moments to describe it to you. As you can see on the slide, the device is a desk-top, self-contained microfiche reader. Up to 750 microfiche, equivalent to 60,000 11" × 14" pages, can be stored in a carousel housed within the reader. Accessing a page of data is accomplished by pressing a combination of three fiche locator keys positioned on the left-hand side of the control panel located at the front of the machine. When the keys are pressed, a selection cycle is initiated, activating the carousel until the desired fiche is located and projected on the screen. The first microfiche image brought up on the screen is normally the index page for that particular fiche. To find a specific page, one need only press the X and Y page coordinate keys on the right of the keyboard and the desired page will appear. Depending on how good one is at pushing the buttons, one can locate any page within 20-30 seconds. The fiche is located by a binary-coded metal strip attached to the top of the fiche. A total of eighty images can be placed on one fiche in an image matrix of 8 down and 10 across (Fig. 8).
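
The capacity figures quoted for the reader can be checked with a short sketch. The Python below is illustrative only: the function names are hypothetical, and the row-by-row numbering of images on a fiche is an assumption, since the talk gives only the 8-by-10 image matrix and the X and Y coordinate keys.

```python
ROWS, COLS = 8, 10                 # image matrix from the talk: 8 down, 10 across
IMAGES_PER_FICHE = ROWS * COLS     # 80 images per fiche
CAROUSEL_FICHE = 750               # fiche held by one carousel


def carousel_capacity(fiche=CAROUSEL_FICHE):
    """Total page images held by a full carousel."""
    return fiche * IMAGES_PER_FICHE


def image_position(image_number):
    """Map a 1-based image number on a fiche to a 1-based (row, column).

    Assumes images are numbered left to right, then top to bottom;
    the source does not state the numbering order.
    """
    if not 1 <= image_number <= IMAGES_PER_FICHE:
        raise ValueError("image number must be between 1 and 80")
    row, col = divmod(image_number - 1, COLS)
    return row + 1, col + 1


print(carousel_capacity())
print(image_position(11))
```

Under these assumptions a full carousel works out to the 60,000 pages quoted in the talk, and each image number corresponds to exactly one pair of coordinate keys.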

In January 1970, a Stromberg DatagraphiX 4400 COM unit with a universal camera, giving us computer data microfilm microfiche capability, was installed at our central data processing center.

Having selected the reader and having in-house capability to generate COM microfiche, we undertook with Stockholder Records to put in a system that would produce the 36,000-page Proxy Register and the 16,000-page Final Voting List on microfiche instead of hard copy. It was obvious that the use of microfilm would lower data processing costs, increase file security, and reduce retrieval time for stockholder identification and ballot validation.

As a by-product of the rapid retrieval feature of the system, we were in a position to make a positive identification of every person wishing to enter the meeting without a ticket. This rapid retrieval feature appealed to our management who were concerned about having unauthorized people attending the meeting. Quite frankly, we thought we might experience demonstrations just as other major companies were experiencing during their 1970 annual stockholder meetings. Approval to install the system was given about three weeks before we had to mail out the proxies.

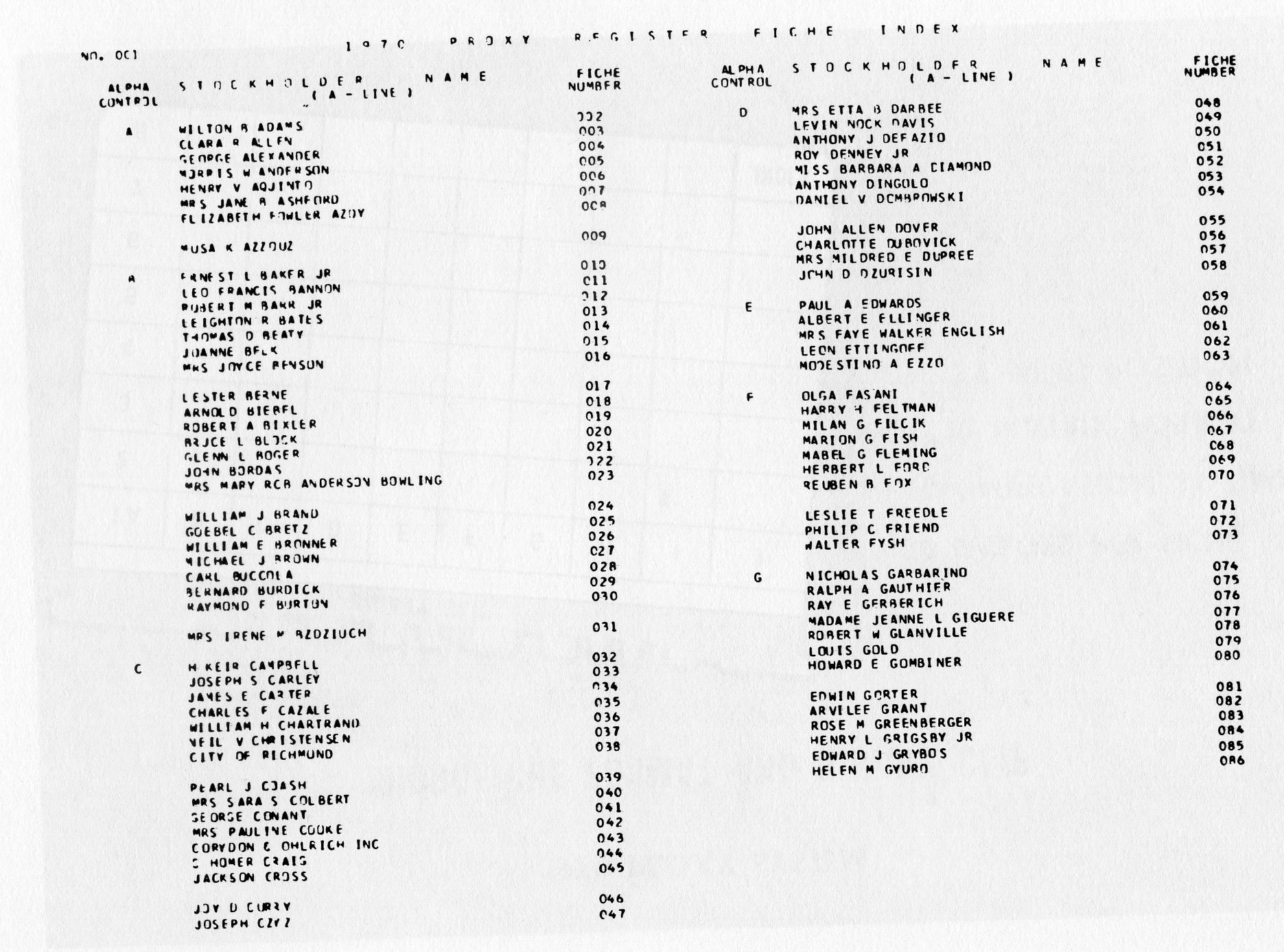

Our first step in designing the system was to develop a set of indexes to be used in retrieving individual stockholder proxy data from the 400,000 proxy records contained on the file. The logic used to develop the indexes for both the Register and the Final Voting list was identical. Since all of the fiche were housed in the reader and fiche titling could not be used to select a particular fiche, a master fiche index had to be developed. To do this, we captured the name of the last stockholder appearing on each image of the Proxy Register and the image coordinate in core as we formatted the report on magnetic tape. When the 79th name, representing the 79th image, was accumulated, a page index was written for that particular fiche. At the same time, the last name contained on the fiche was placed in another work-in-storage area together with its corresponding fiche number. When the last data fiche was formatted, a master fiche index was produced (Fig. 9). The fiche master indexes for both the Proxy Register and Final Voting List were also produced on hard copy.
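
The two-level index described above can be sketched in a few lines of Python. This is a minimal illustration, not the program Ford ran: the function names, the in-memory data layout, and the use of binary search for look-up are all assumptions. Only the 79 data images per fiche and the "last name on each image / last name on each fiche" logic come from the talk.

```python
from bisect import bisect_left

DATA_IMAGES_PER_FICHE = 79  # per the talk; the remaining image carries the page index


def build_indexes(pages):
    """Build the master fiche index and the per-fiche page indexes.

    pages: list of report pages in alphabetical order, each a sorted
    list of stockholder names (a hypothetical in-memory stand-in for
    the formatted report tape).
    """
    master_index = []   # (last name on fiche, fiche number)
    page_indexes = {}   # fiche number -> last name on each data image
    for start in range(0, len(pages), DATA_IMAGES_PER_FICHE):
        fiche_number = start // DATA_IMAGES_PER_FICHE + 1
        chunk = pages[start:start + DATA_IMAGES_PER_FICHE]
        last_names = [page[-1] for page in chunk]
        page_indexes[fiche_number] = last_names
        master_index.append((last_names[-1], fiche_number))
    return master_index, page_indexes


def locate(name, master_index, page_indexes):
    """Return (fiche number, data image number) where name would appear."""
    keys = [last for last, _ in master_index]
    i = bisect_left(keys, name)      # first fiche whose last name >= name
    if i == len(keys):
        return None                  # name sorts after the final entry
    fiche_number = master_index[i][1]
    image = bisect_left(page_indexes[fiche_number], name) + 1
    return fiche_number, image


# Hypothetical data: 200 pages of two names each.
pages = [[f"N{p:04d}A", f"N{p:04d}B"] for p in range(200)]
master, per_fiche = build_indexes(pages)
print(locate("N0100A", master, per_fiche))
```

The look-up mirrors the two-step procedure used at the meeting: the master index gives the fiche number, and the page index on that fiche gives the image.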

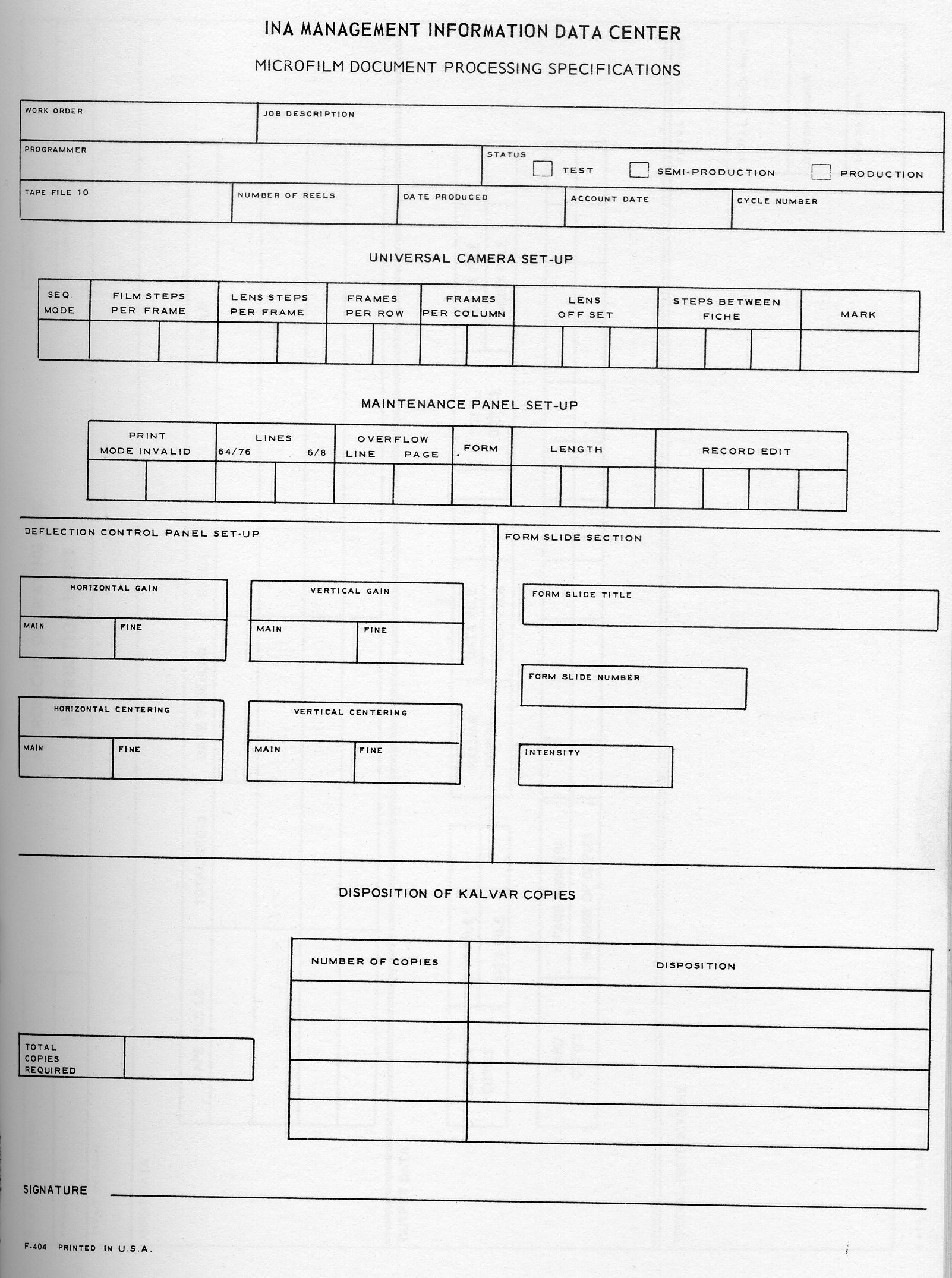

Most new systems experience a certain amount of launching problems. The Proxy System was no exception. The systems design and program development phases were accomplished without incident - and ahead of schedule. Our problems lay with the new COM device and the limited amount of processing equipment we had within the Company to manufacture microfiche copies. We found, for example, that the universal camera could not hold image registration properly; the microfiche cut mark, located at the bottom of each fiche, was so large it obliterated some of the data; film skewing was common; and the images were not sharp. Since we were dealing with confidential data, film processing had to be handled by our Microfilm Services Department. The equipment to process 105mm COM output in-house was extremely limited. Although there was no difficulty in developing the silver originals, the work of cutting the fiche, producing the diazo copies, and attaching the binary clips to the fiche was a painstaking manual operation. One by one, the hardware problems were overcome and the methods of producing microfiche copies were improved. On the day that the Proxies were mailed, we produced the Proxy Register on microfiche and loaded the fiche into the reader - The System was Launched.

There was about a five-week span between the mailing of the proxies and the Annual Stockholder meeting. This gave us enough time to have the clerical people get accustomed to the reader, evaluate the idea of having the master index on fiche instead of hard copy, and develop the program for producing the Final Voting List on film. Two days before the meeting, we processed the last of our returned proxies through the system and generated the Final Voting List on microfiche.

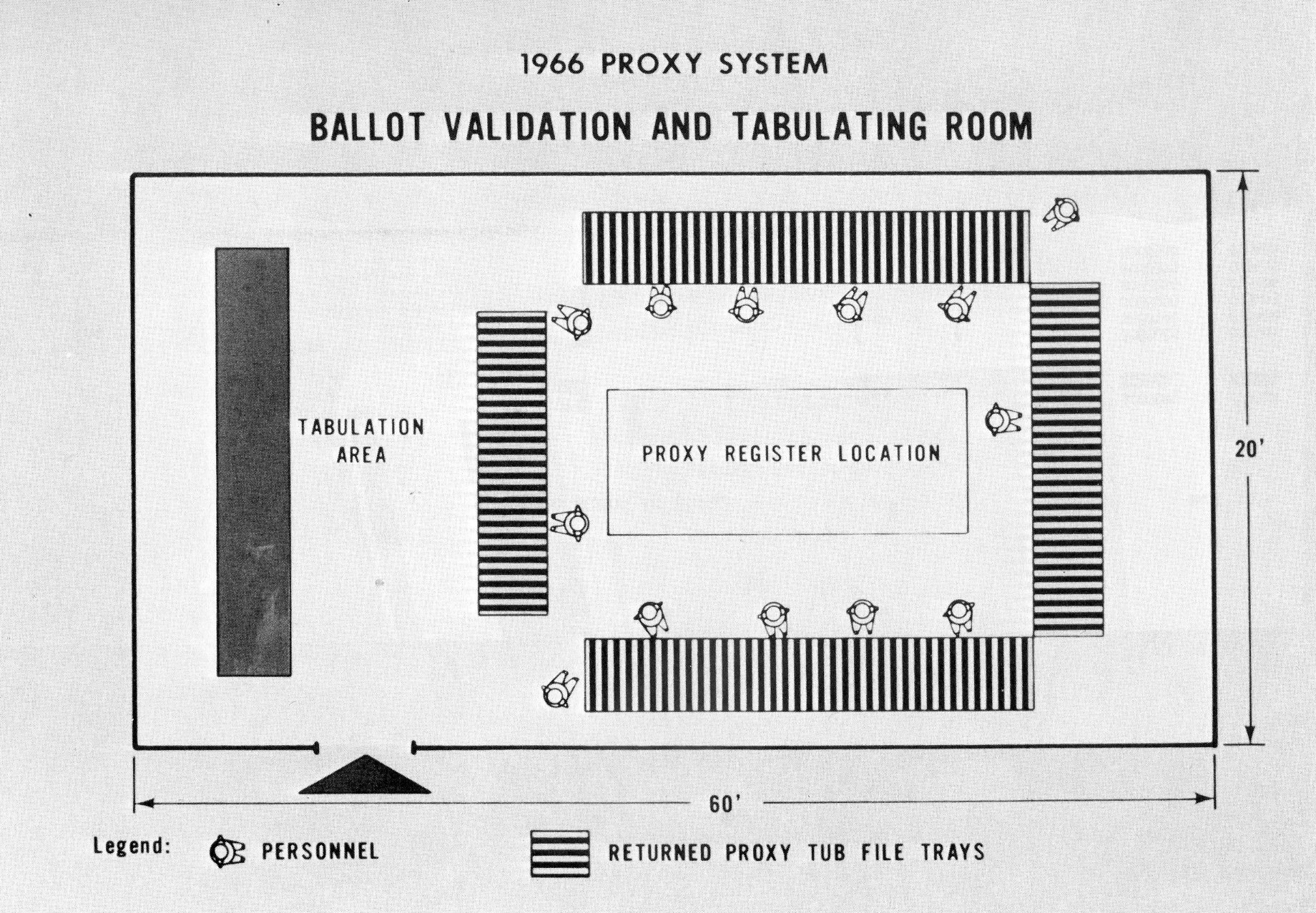

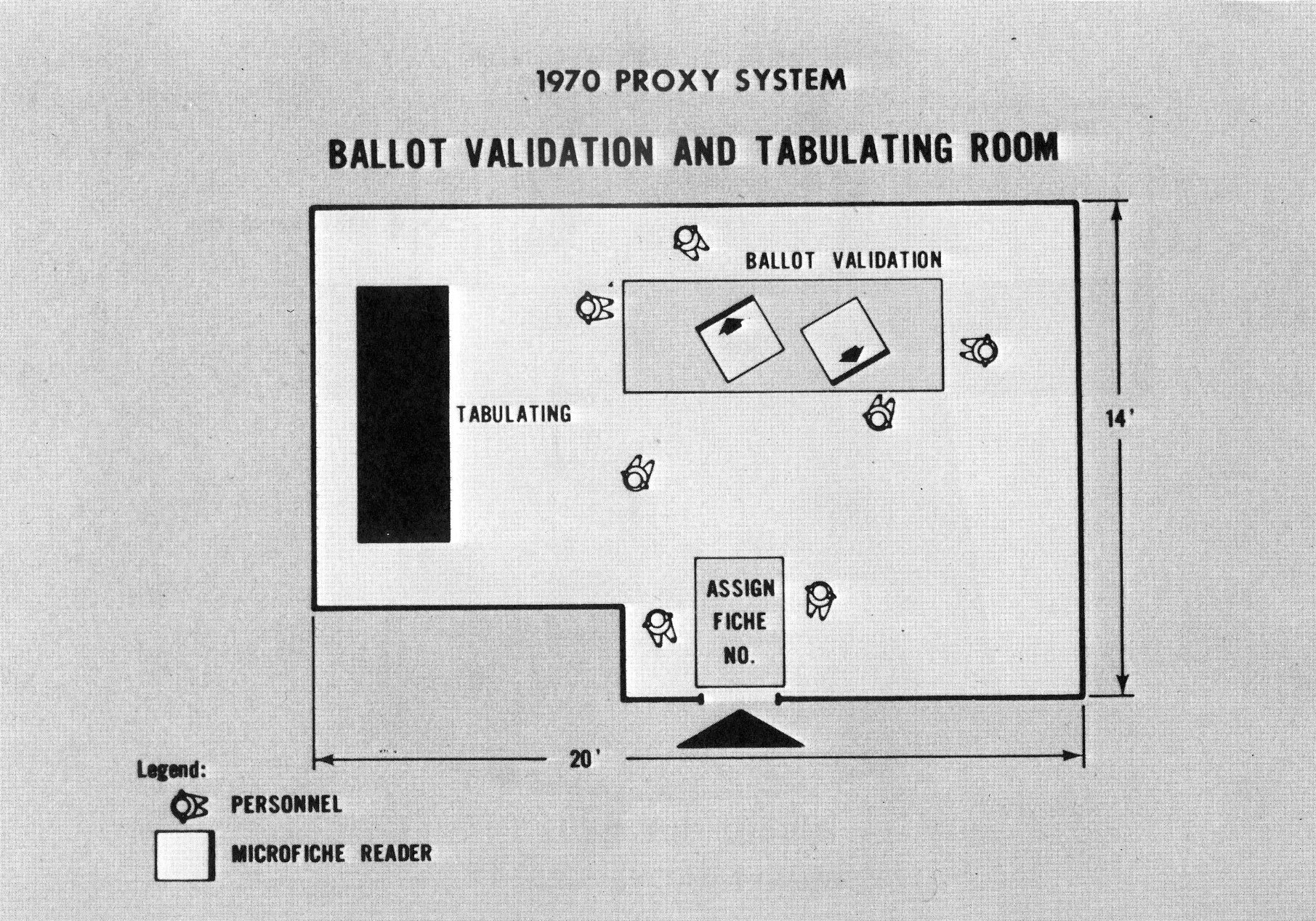

Since the Annual Meeting was to be held in the morning, we shipped our readers and tabulating equipment to the auditorium the day before the meeting. We placed one reader containing the Proxy Register on a cart behind a row of tables in the outer lobby of the auditorium. This reader would serve to screen those persons wishing to attend the meeting who came without their invitations. The other readers, together with the tabulating equipment, were located in a 14 × 20 foot room adjacent to the outer lobby. These readers contained both the Proxy Register and the Final Voting List (Fig. 10).

At 9.00 a.m. the doors of the auditorium were opened to admit the stockholders. Signs were posted to direct those persons having their invitations directly into the meeting. Persons without invitations were ushered to the registration area. There they were asked to fill out a registration form with their name and address. As each form was completed, it was passed to the people handling the reader. Two people were stationed by the reader. One member of the two-girl team would write the appropriate fiche number for the name appearing on the registration form from a hard copy of the Proxy Register fiche index and hand it to the other member of the team for look-up. When the person was found on the Proxy Register, he was admitted to the meeting. Approximately 100 persons were screened by the system in an elapsed time of about 35 minutes. The entire operation never experienced a queueing problem.

When the time came for the stockholders to cast their ballots, instead of having to negotiate the long maze of corridors and stairs as in previous years, they merely walked out of the auditorium and to the tabulating room adjacent to the outer lobby. There, they handed the ballots to one of two people stationed at the door (Fig. 11).

These people assigned the proper fiche number on the ballot from the hard copy of the Final Voting List Fiche Index (Fig. 12). Once numbered, the ballots were then passed back to a team of validation clerks who performed the look-up and marked the appropriate information on the ballots. Total elapsed time to validate each ballot - 28 seconds. Under the old system, ballot look-up took two minutes. The entire operation was accomplished in under eleven minutes using a total complement of seven persons.

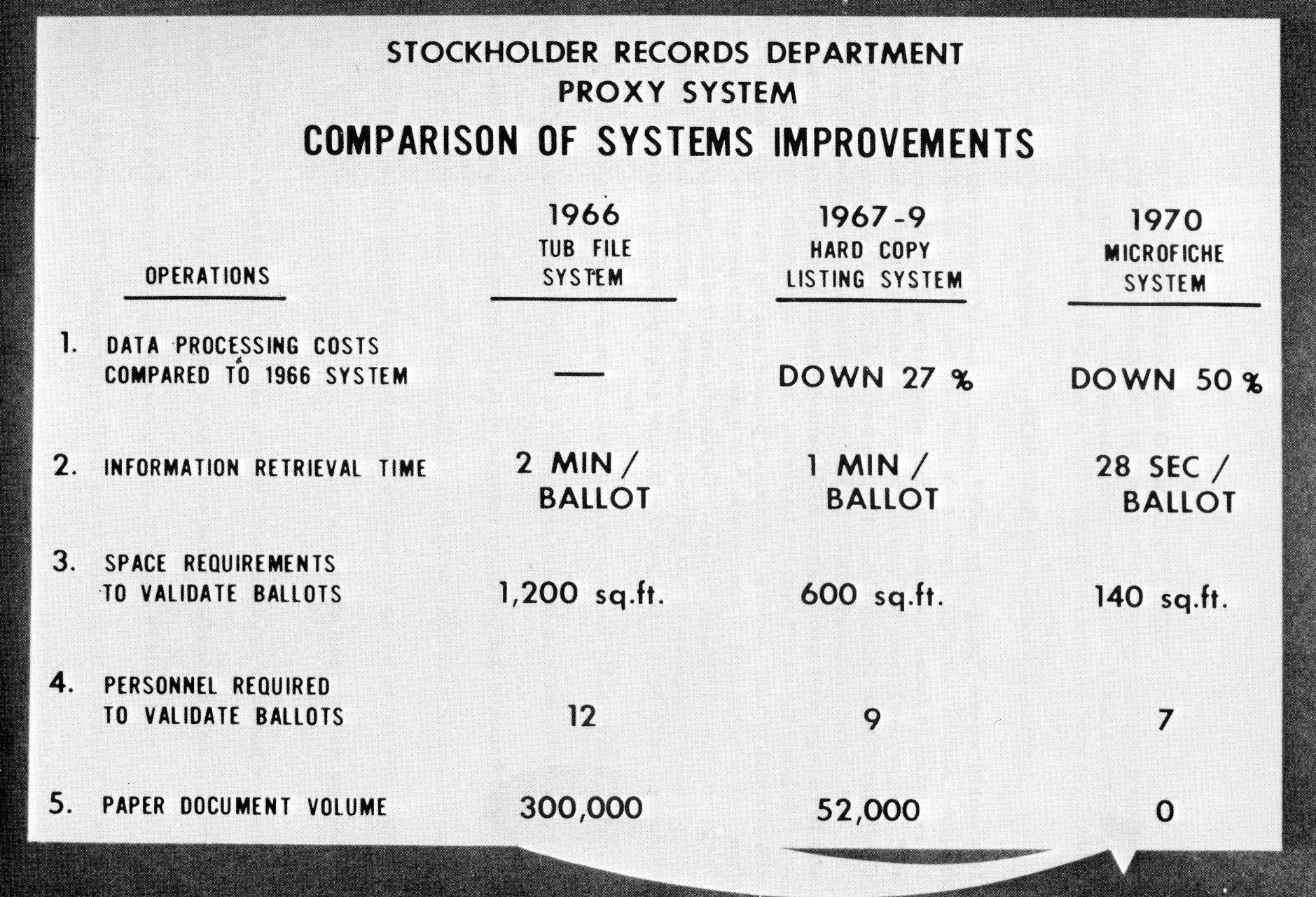

I've just described to you three different systems, all designed to accomplish the same end result. Fig. 13 shows a comparison of the three systems and illustrates how technological improvements made in microfilm and microfilm retrieval techniques have enabled us in this application to lower data processing costs by 50%; decrease information retrieval time to 28 seconds from 2 minutes, or about 77%; compress space requirements to 140 square feet from 1,200 square feet, or 88%; reduce clerical personnel by 5 persons, or 41%; and cut the need for paper by 100%.
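
The percentages quoted in this comparison follow from a simple reduction formula, sketched below in Python. The function name is hypothetical, and the earlier staff count of 12 is an inference from the seven persons used in 1970 plus the stated reduction of 5; the talk does not give it directly.

```python
def percent_reduction(before, after):
    """Reduction from before to after, as a percentage of before."""
    return (before - after) / before * 100


retrieval = percent_reduction(120, 28)    # seconds: 2 minutes down to 28 seconds
space = percent_reduction(1200, 140)      # square feet
staff = percent_reduction(12, 7)          # 12 is inferred: 7 persons now + 5 fewer

for label, value in (("retrieval", retrieval), ("space", space), ("staff", staff)):
    print(f"{label}: {value:.1f}%")
```

The retrieval and space results round to the quoted 77% and 88%. The staff figure comes out between 41% and 42%, so the quoted 41% appears to have been truncated rather than rounded.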

In summary, this application is but one of several microfilm applications installed at Ford - and is but one example of how microfilm, as a dynamic tool, can be used in today's modern and demanding business environment.

In a world where bangs per buck count, most corporate managers cannot be expected to focus disproportionate amounts of their limited resources on Computer Output Microfilm. Yet, to switch a company from paper to film and to change well established EDP behavior patterns requires a massive educational effort. Users, computer people and top managers need to be sold on the advantages of film. Certainly microfilm equipment manufacturers have tried, but the lack of sales penetration proves that these efforts, individually or as a group, have not been overly successful. Much more must be done in the form of generic public relations programs before film will become the common vehicle of information exchange. Unless a massive public relations program is launched soon, users will turn to cathode ray tubes as an answer to the paper implosion, since CRT's are receiving widespread attention.

In the few hundred organizations where devoted advocates have adopted COM, users praise its benefits. But even there only a few select applications are on film. Thus, unless many more top managers and scores of users learn about and demand microfilm from EDP departments, COM will not achieve the success it deserves.

Maybe no single COM manufacturer is financially strong enough to mount the required effort and maybe ways have to be found to combine forces. One thing is clear - no user company, regardless of size, can do it alone.

Union Carbide Corporation can be cited as a case in point. In this company an intensive educational program has been underway for over two years. Now, nearly thirty different printouts are on film. This endeavor might be considered highly successful when measured against those companies which have not invested the equivalent time and effort, but it is a drop in the bucket compared to the volumes of paper still pouring out of computer rooms.

Computerized microfilm devices have not caused much of a ripple in the business world and there is little indication that the picture will dramatically change in the future. It's a sad fact of life that the COM industry has not succeeded in making COM sexy, exciting and worthwhile. And, unless something dramatic is done about it, printers will continue to spew out tons of paper and people will get increasingly more steamed up about on-line systems and cathode ray tubes.

Let's face it, COM leaves EDP people cold, and the rest of the world really doesn't know too much about it. EDP people see microfilm as an old technology containing many pitfalls and problems which they neither understand nor see the necessity to learn about.

As they see it, COM offers little glory or personal rewards. In fact, the opposite may be true. Evidence seems to indicate that most men who were responsible for the introduction of microfilm in their company find themselves behind the eight ball. They become specialists and their personal growth in their company levels off. The reason for this seems to be that they are held responsible for the creation of one additional set of headaches by a management that already has inherited many more than it can handle.

Top managers must become convinced that COM will bring about real and tangible improvements and users must become sold on the potentials of film. Obviously, this type of sales effort is exceedingly costly but it seems necessary if COM is to gain wide acceptance. It becomes even more costly when it is recognized that a continued company by company liaison is required. It must be continuous since no manager stays in the same slot for very long and yesterday's manager cannot help - in fact his association with COM may hurt - today's effort.

If COM manufacturers want to reverse this trend they must find ways to tune in top managers as well as users. Today, most marketing efforts seem to be concentrated on the EDP personnel and this type of marketing approach alone will not work. This is not to say that EDP people ought to be ignored, but the emphasis must be on two levels.

So, the one-shot sales efforts can actually hurt a COM sale. Yet this is what is frequently done. A manufacturer or service bureau will spend a concentrated amount of time trying to line up a contract. After a while, successful or not, he will disappear only to be followed by others who will follow the same pattern.

One thing is certain: unless ways are found to maintain the interest level for COM in an organization, this technique will not gain the hoped-for acceptance; and if a single organization cannot afford to stay with a potential or real client for too long, then other ways should be found to accomplish this. Presentations at conventions and workshop sessions are a beginning, but they will never replace the personal contacts at high levels or the hand holding at the user level which seems to be required to introduce COM into a company and to assure its continued acceptance.

Having drawn this rather glum picture, let me cite an example of how one company took the initiative to switch some of its computer output to microfilm.

Ironically, the first application and the one that set the pace for the future acceptance of microfilming in Union Carbide Corporation did not involve computer output. Some customers and stockholders complained to our top management about the way we handled phone inquiries about our products, personnel, and services. As a result of these complaints, a task force was established to find a solution.

Soon thereafter information centers were established in New York, Chicago, San Francisco, and Houston. At each of these centers microfiche CARD Image Systems were installed containing identical information on a variety of subjects. These units stopped all complaints, since anyone desiring information can normally receive accurate answers within ten seconds. Now when you call the Union Carbide Information Center, a well trained young lady will direct you to the proper salesman, head of a department, or committee, give you the name of a product, or tell you where you can buy it.

Success of this action oriented application caused top management to initiate an effort aimed at discovering other opportunities for filming. A two man team was formed to identify other areas for improvement. This task force produced a report which quantified the film potential, estimated the cost saving potential, and outlined a course of action. This report was approved and one man was assigned to initiate the use of COM. To launch the program, a slide presentation was prepared for prospective users, who manipulated large amounts of computer produced paper and thus could realize the largest benefits through COM. In addition, each of these applications was typical of a number of others throughout the Corporation.

This effort is now complete and nearly 1/8 of the total COM potential initially identified has been realized. The point I am trying to make is that this success would not have happened without the moral and financial support of top management at the start of this program. For example, the first few slide presentations were preceded by discussions at top levels which obviously helped. Additionally, a special budget was established which absorbed most of the initial costs for the development of computer programs, the computer processing time, the filming of the dozens of conversions, as well as the purchase of a number of microfilm readers.

The presentation was put together by combining visuals of pertinent in-house facts with slides obtained from some of the manufacturers. It was shown to many potential users, their managers, and EDP personnel.

At each of the initial sessions we stated that Union Carbide Corporation's top management had approved this presentation, that management recognizes that the active use of microfilm offers tangible opportunities, and that the purpose of the presentation was to show what advantages can be achieved through the use of sophisticated film-based retrieval techniques.

The Corporation has utilized microfilm for over 20 years as an archival medium as well as for the storage and retrieval of engineering drawings, but the active use of film - where information is required to perform work - is new to us. Essentially, we explained some of the reasons why microfilm for active use is better than paper, showed the different microfilm formats and their specific benefits, cited some of the retrieval techniques, mentioned some of the applications already on film and some others which ought to be considered. A summary of the presentation follows:

Before the turn of the century entries were made manually into ledgers. As business became more complex, forms were devised which made the recording as well as storage and retrieval of information somewhat easier. With the advent of computers, information could be manipulated quickly and made available quite readily.

However, as the demand for information increased, it soon became apparent that high speed printers were the bottlenecks which inhibited the ready access to the wealth of data available in the computer. They were not only slow, their product was bulky, heavy, hard to read and costly.

Some of the problems with paper are that:

A relatively new technology overcomes the drawbacks of the printer by producing computer output on film instead of paper. Now printing is done with light instead of ink, one page at a time instead of line by line, and it is approximately 10 times faster. These machines take computer output either from magnetic tape or directly from the central processor and expose it on cathode ray tubes which are linked with high speed cameras.

Special forms can be created simply by projecting a transparency onto the film as the data is generated which makes it possible to use special forms for every computer output at practically no cost at all. In addition, there is no limit to the number of clear and legible film copies which can be made.

These computer output microfilm devices can be utilized to make charts and graphs, in black and white or in color. They can create three dimensional effects and animation. Some can even produce letters in upper and lower case, in regular, italics and bold and in a large variety of fonts.

The animation capability can be used to create inexpensive movies, display simulations of queuing problems as might be found at airports or warehouse loading docks, or to show changes in density patterns of populations or markets. Some people have even created prize winning movie cartoons.

Thus COM offers not only a great deal of flexibility in the display of information, it does it faster and cheaper than line printers. On the average, these machines use approximately 1/8th of computer time, 1/10th of print time, and the film costs 1/8th that of paper.

In addition, microfilm saves up to 90% on space and mailing. Also, images on film can be retrieved 50% faster than paper and in most cases require almost no time to file. (In fact, film can be said to be an inviolate file.)

Costs are also very favorable when microfilm viewers are compared with time-sharing systems, as shown in the chart below:

| MICROFILM VIEWERS | Typical Monthly Rental ($) | Time to Display Full Page (8000 characters) (secs) |
|---|---|---|
| HF Image Card System | 160.00 | 4-6 |
| Stromberg DatagraphiX 1700 (Automatic Magazine) | 42.00 | 4-15 |
| Kodak PVM (Manual Roll) | 21.00 | 8-20 |
| Micro Design COM 200 (Fiche Reader) | 8.50 | 20-30 |
| COMPUTER TERMINALS | In all cases plus transmission cost and time. | |
| Sanders 720 (Video) | 468.00 | 0.2 |
| IBM 2265 Video (Plus Control) | 478.00 | 3.1 |
| IBM Selectric | 130.00 | 533.0 |
| Teletype KSR 33 | 90.00 | 800.0 |
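
The display times listed for the computer terminals imply an effective character rate, since each figure is the time to show a full 8000-character page. The short Python sketch below works that out from the chart; the function and variable names are hypothetical, and transmission cost and time, which the chart notes are extra, are ignored.

```python
PAGE_CHARS = 8000  # full page, per the chart heading

# Seconds to display a full page, copied from the chart.
display_secs = {
    "Sanders 720 (Video)": 0.2,
    "IBM 2265 Video (Plus Control)": 3.1,
    "IBM Selectric": 533.0,
    "Teletype KSR 33": 800.0,
}


def chars_per_second(seconds):
    """Effective display rate for one full page."""
    return PAGE_CHARS / seconds


rates = {name: chars_per_second(secs) for name, secs in display_secs.items()}
for name, rate in rates.items():
    print(f"{name}: about {rate:,.0f} characters per second")
```

The Teletype figure works out to 10 characters per second, which matches the nominal speed of that class of machine, and the Selectric to about 15. At those rates a printing terminal takes roughly nine to thirteen minutes per page, against seconds for any of the microfilm viewers.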

There are many reasons why micro-photography is gaining acceptance in Union Carbide. Users have adopted microfilm for the following reasons:

| PAST | PRESENT |
|---|---|
| Many people searched for identical information available only at one location. | Microfilm is disseminated to them, eliminating the waiting time heretofore required to obtain the information. |
| Bulky heavy earmarked reports were used. | Compact microfilm is being used. |
| Work is performed at one location while the bulk of the information is at a different place. | Information is available at the people's desk. |
| Large volumes were mailed overseas. | Microfiche can be mailed by air mail. |
| Bulky reports were disseminated widely. | Microfilm is disseminated at a much lower cost. |
| Quick access of information was required by management. | Microfilm in automated readers makes information available in seconds. |
| Fifth to sixth hard to read copies were used. | Regardless of the number of copies, each is as clearly legible as the original. |
| Information had to be frequently updated. | Microfilm makes it faster, easier and cheaper to update information. |
| Information was crammed into over-stuffed file cabinets which had to be retrieved frequently. | Microfilm makes it possible to retain the information in a desk drawer. |

The feasibility study indicated that many applications for microfilm exist throughout Union Carbide such as: Accounting, Accounts Payable, Accounts Receivable, Adjustment Sections, Advertising, Banking, Business and Technical Libraries, Corporate Secretary, Credit, Distribution, Engineering, Finance, In-Process Control Locations, Insurance, Inventory Centers, Maintenance, Manufacturing, Market Analysis, Medical Departments, Order Processing, Payroll, Personnel, Public Relations, Purchasing, Realty, Research and Development, Sales Analysis, Stock Transactions, Systems and Computer Work Areas, Traffic, Travel and Work Scheduling.

The applications selected for conversion reoccurred throughout the Corporation and shared the following conditions:

The conditions described above prevailed in the Adjustment Departments of all of our various businesses. The largest of these was operated by Consumer Products and we, therefore, started our COM program by assisting this user with his conversion. Here approximately 80,000 additional invoices and adjustments were added monthly to the files and frequent retrievals were made. Larger and larger file space and more and more clerks were required to keep up with our growth, and misfiling began to hurt our business as the requests for documents increased.

These documents are produced on 16 mm film from magnetic tape. At the same time a number of indexes are created which contain sufficient detail to answer a great many questions, alleviating the need to go any further.

Basically two types of indexes are created. Each week, as approximately 20,000 new documents are added to the file, an index describing the content of each roll of film and a cumulative year-to-date index are created.

When a copy of an invoice or adjustment memo is required, Image Control or Page Search machines facilitate retrieval. Normally, an inquiry is answered in less than one minute. The Adjuster makes his own look-ups. Clerks are no longer required to file, search, and re-file. No documents can be misfiled, lost, or rendered unreadable. Storage has ceased to be a problem.

Today, most of our Adjustment Departments utilize film instead of paper. The systems employed by Linde, Chemicals and Plastics, as well as Carbon Products are very similar to the one described for Consumer Products. This makes it possible for the Corporate Credit Department to receive a copy of every roll of film produced by the divisions, eliminating one additional time loss and expense. They frequently send a copy of an invoice to the customer. Before, they had to contact the division; now they make their own copy.

Another set of reports used throughout the Corporation are reports concerning the market, customer, product and sales penetration. These analyses are usually arranged geographically and widely disseminated. Most of our businesses utilize microfiche to distribute the information to their regions.

Users of these reports prefer fiche over paper. They find that fiche are cheaper, can be mailed, stored, and handled more easily, and are cleaner to handle. The one drawback in this set of applications is the unavailability of inexpensive and light portable readers for use by salesmen. But when these readers become available, our salesmen will be freed from the stacks of paper, reports, and catalogues they must handle.

Payroll references can be cited as another example. For instance, the Corporation's central payroll register shows all pertinent information with regard to each employee's pay record, such as the employee's name, number, gross, net, tax deductions, etc.

This Payroll Department is responsible for paying 22,000 people in the New York area. Before the introduction of microfilm, the payroll register was a monthly computer printout consisting of approximately 5,000 pages which had to be broken into sub-sets before it could be handled. Even when broken down, the size of the report precluded desk-side accessibility. When a girl received a telephone or written question, she had to scurry to a cabinet to find and carry back to her desk a book of more than 400 11" × 17" computer printout pages.

After she found the answer she had to retrace her steps to the cabinet. Because of the size of these books, it was possible to keep only 2 months' reports easily accessible to payroll clerks. All other reports were removed to a secondary storage area, and reference to them was physically time consuming. In addition to the inconvenience of each look-up, either in the nearby cabinet or in the secondary storage area, there was significant time loss.

Now the payroll department gets a complete set of microfiche for each of four teams of four girls. Each team has its own reader. Any payroll clerk can answer questions quickly and without trouble by simply pulling the appropriate fiche card from a file next to the reader and inserting it. Each file situated in front of the reader contains twelve complete payroll registers; thus even questions relating to earlier pay periods can be dealt with while the caller is still on the phone.

Union Carbide does its own stock transferring and all the records pertaining to a stock transfer activity are maintained on microfiche. Here again bulky reports were eliminated, costs were reduced and far faster retrieval resulted.

Corporate Accounting uses microfiche for all of its research. Such reports as Paid Files, Vendor Code Lists, Trial Balances, Invoice Registers, and Customer References help them make their operation more efficient.

A secondary benefit of the use of microfiche has been the low cost distribution of copies of the Corporation's monthly Trial Balance to Divisional Accounting Departments. Timely access to this source of information has helped improve their operation.

Generally speaking, we have standardized on two film formats: 16 mm and microfiche. All our 16 mm films have opaque marks (image control or page search) below the image area which make possible fast retrieval through the use of keyboards. We use Eastman Kodak and 3M Retrieval Stations.

16 mm film is selected where large files are centrally maintained and where prints have to be created for use outside the company. In all other cases we utilize microfiche consisting of 84 pages or data frames for fast retrieval. The computer produces an index for all of our COM output.

In the 16 mm film applications an index is provided summarizing the contents of a particular roll. Also, a cumulative year-to-date index tape is maintained and reproduced on film periodically. For microfiche we show an index on the 84th page of each fiche. There is also an eye-legible area on the fiche which gives the name and date of the report, category of the report, key name or number of the first and last image areas and a sequential fiche number. The eye-legible area is color coded with a different color for each month of the year. This color coding has been standardized throughout the Corporation.
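The fiche indexing scheme described above can be sketched as a two-step lookup: the eye-legible header names the first and last key on each fiche, so the right fiche is the one whose key range covers the request, and the index on the 84th page then gives the frame. This is only an illustrative sketch. The class, the function names, and the sample keys are hypothetical and do not come from the paper; only the 84-frame layout and the position of the index frame are taken from the text.

```python
from dataclasses import dataclass, field
from typing import Optional

FRAMES_PER_FICHE = 84   # from the text: 84 pages (data frames) per fiche
INDEX_FRAME = 84        # from the text: the index is on the 84th page


@dataclass
class Fiche:
    """Hypothetical model of one fiche and its eye-legible header."""
    report_name: str
    sequence: int        # sequential fiche number shown in the header
    first_key: str       # key name or number of the first image area
    last_key: str        # key name or number of the last image area
    index: dict = field(default_factory=dict)  # key -> frame number


def find_fiche(fiches, key) -> Optional[Fiche]:
    """Return the fiche whose header key range covers `key`, if any."""
    for fiche in fiches:
        if fiche.first_key <= key <= fiche.last_key:
            return fiche
    return None


def find_frame(fiches, key):
    """Return (fiche sequence number, frame number) for `key`, or None."""
    fiche = find_fiche(fiches, key)
    if fiche is None or key not in fiche.index:
        return None
    return fiche.sequence, fiche.index[key]
```

A clerk performs the same two steps by eye: read the header to pick the fiche, then read the index frame to find the page.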

Microfilm may offer compactness, availability, control, accessibility, security, low cost, ease of reproduction, high quality, and speed, but what really matters is the enthusiastic responses users have to film.

Girls like to use film because they do not have to lug 12 pound books anymore. Their managers like it because the information flow doesn't get bogged down for days because a document has been misplaced. They also prefer film since any clerk can address a whole fiche file instead of only a segment. People who acquire information like it because they can get the data immediately instead of having to wait for 15 minutes or even 15 days. In fact, in most instances, users can get the information themselves.

Over and over again, people who use film state that they prefer it over paper. One analyst recently said: "It's just a matter of finding the right image and recording the information that is on it." Responses such as this obviously are exceedingly important, since they make it possible to refer potential users to those who have adopted film with assurance that they will have nothing but unhesitating accolades for their system.

Union Carbide Corporation today uses well over thirty different COM outputs which are produced regularly, and the users are well satisfied with the results. But I am convinced that very few of these printouts would have been converted without the approval of top management. I am equally certain that a change in attitude, or even a loss of interest, by top management would immediately cause a slowdown in the acceptance of COM by new users.

Thus it is imperative that microfilm equipment manufacturers as well as service organizations seek ways to keep top management informed and interested in this medium.

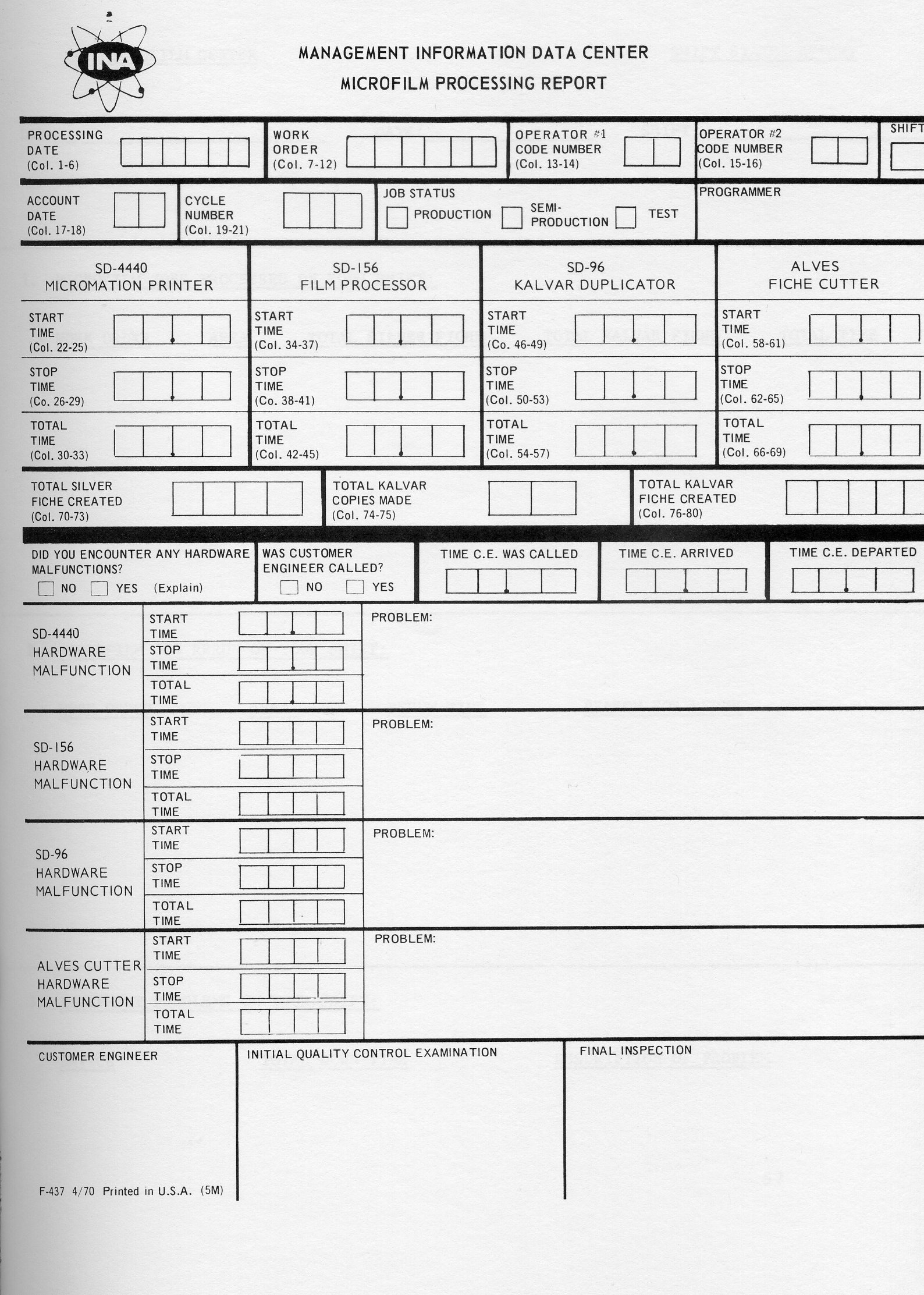

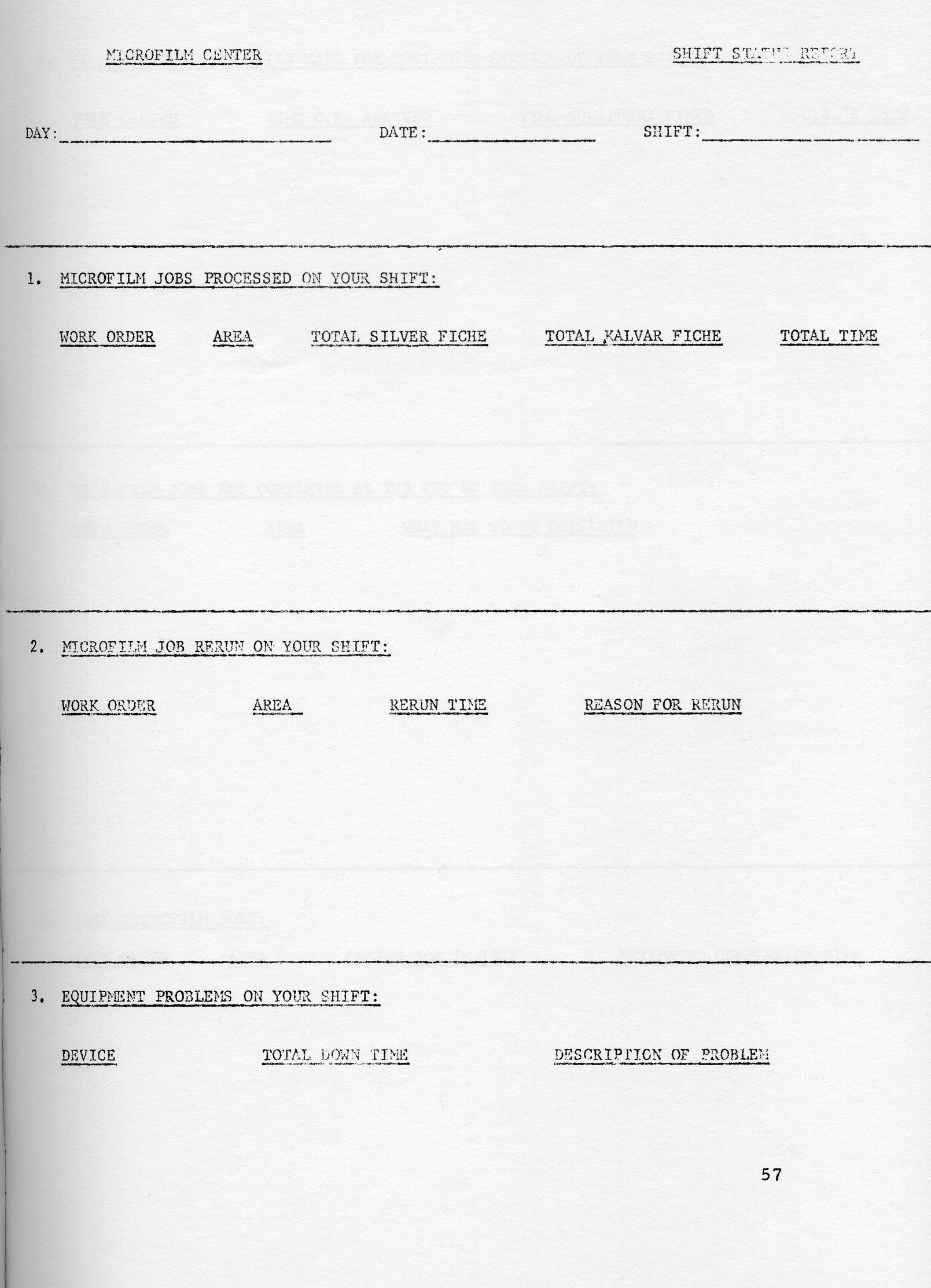

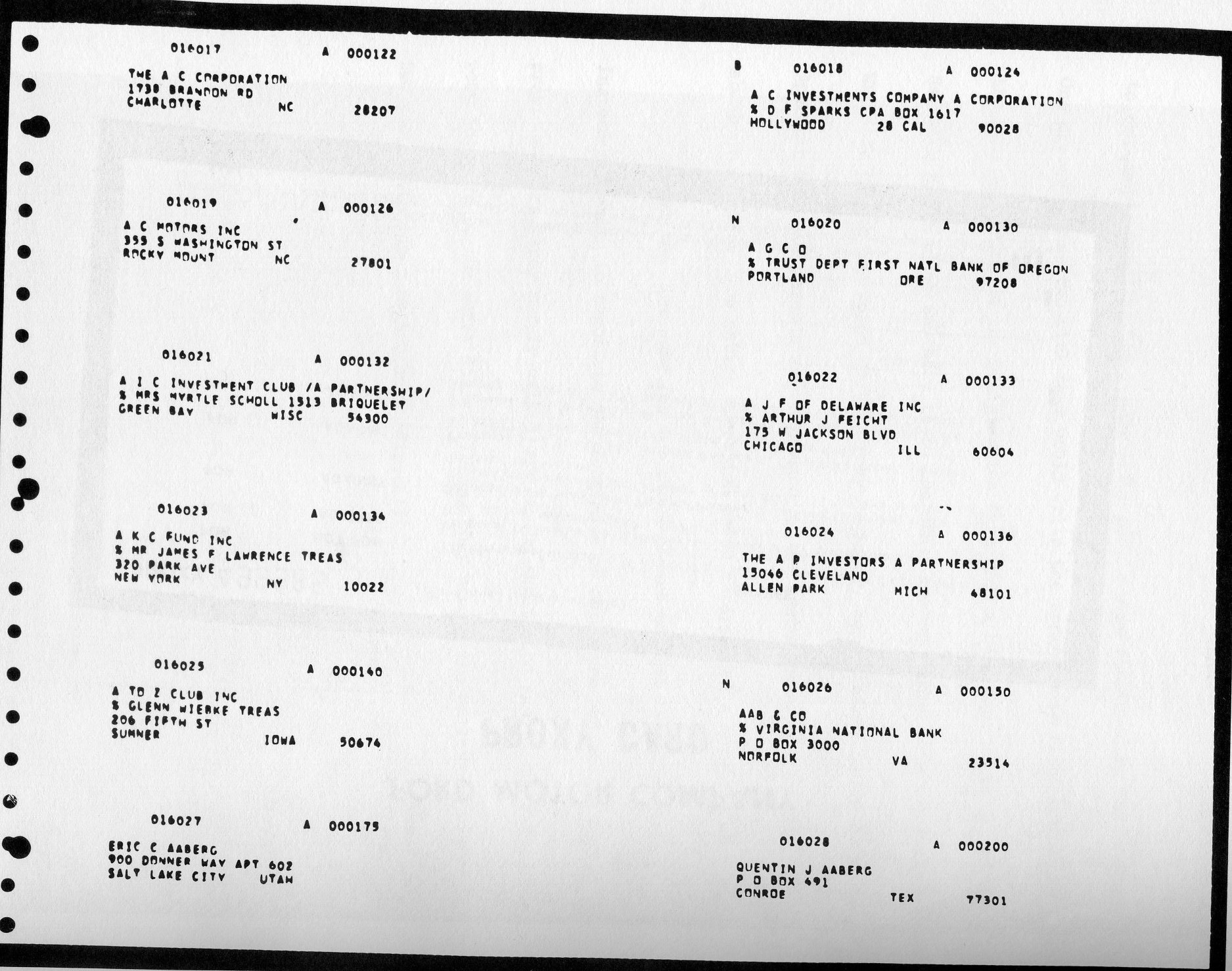

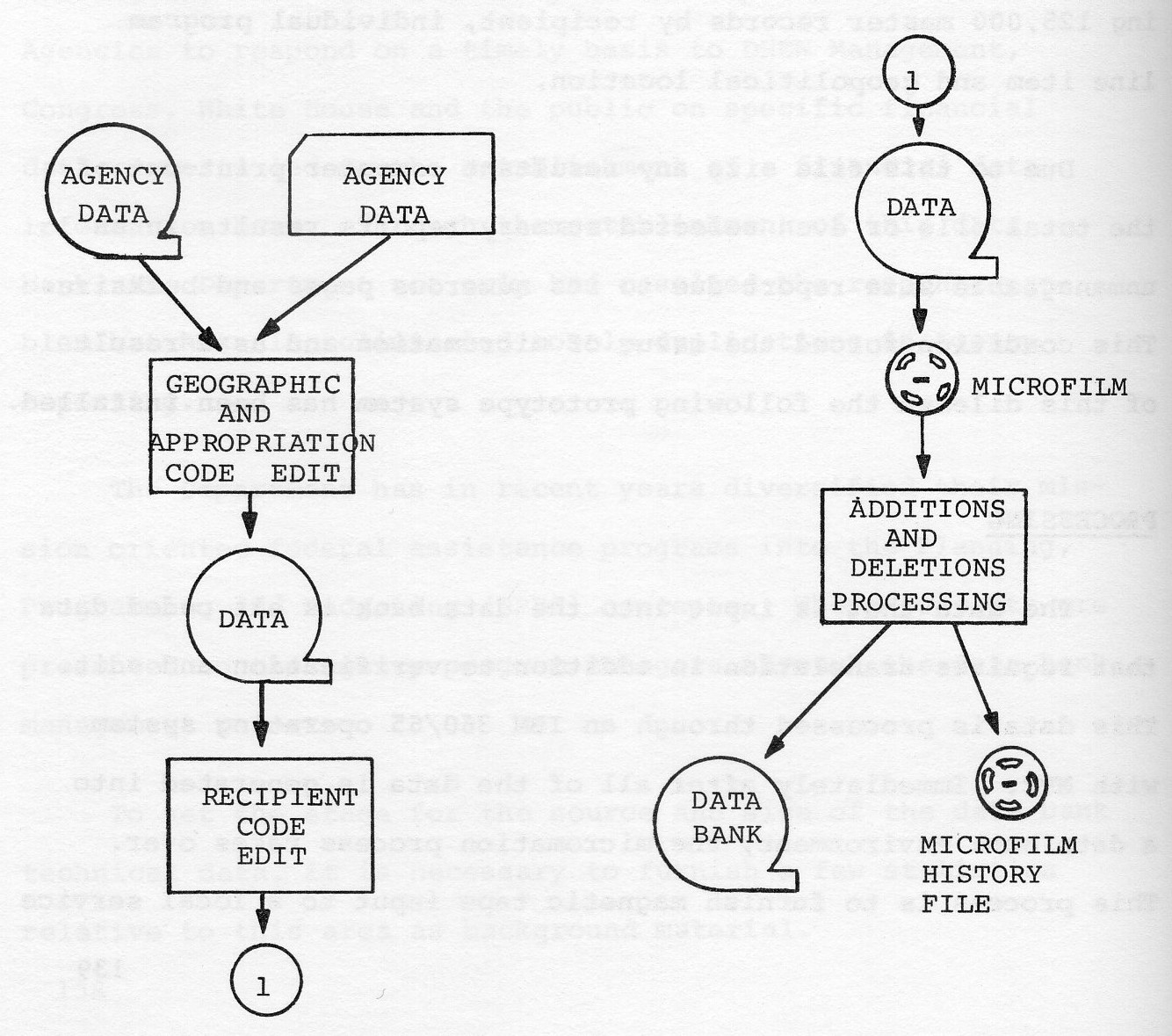

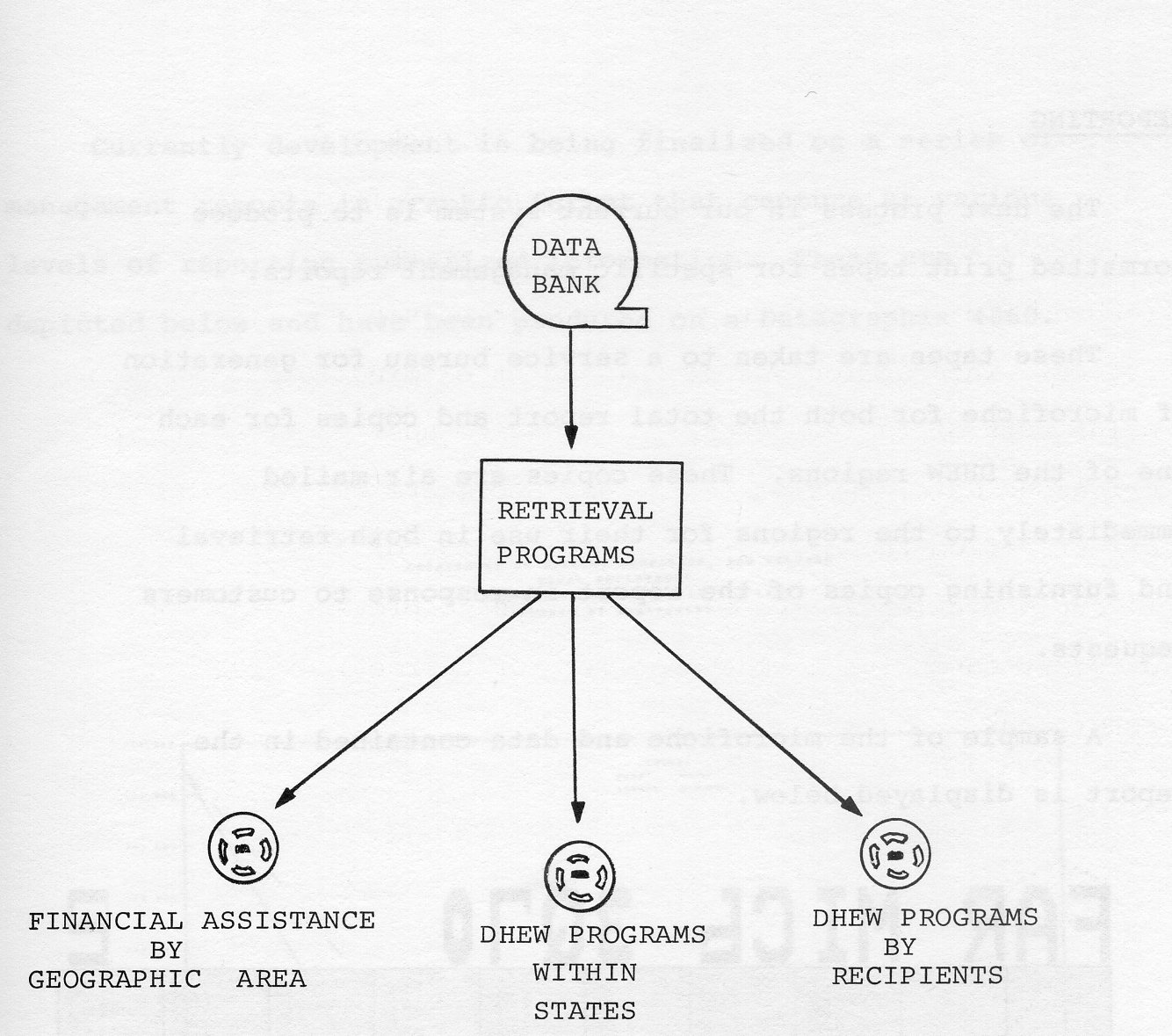

This paper discusses the replacement of a card file and roll film records containing sketchy beneficiary and Medicare data maintained in the 825 local Social Security Administration offices, with a microfiche system which provides more comprehensive and current data used to answer questions asked by beneficiaries. The 105mm microfiche are prepared on the D-4440 micromation system at a 25 to 1 reduction. Duplicate fiche are prepared on the Kalvar Model 96 duplicator. The Kalvar fiche, in sets alphabetically by names of beneficiaries within each state, are updated biannually. In the field, the fiche are filed in Acme Visible files and referenced on Realist and/or Bell and Howell reader-printers. The project has been operating successfully for 14 months. The primary results are better public service, cost reduction in the elimination of the card and roll film records, and a decrease in the use of the Administration's telecommunications system.

The Social Security Administration is currently providing cash benefits to 28 million beneficiaries. It also provides Medicare coverage to 20 million people 65 years of age or over. As a result, local social security offices are visited frequently by beneficiaries, who ask some 30 million questions a year relating to Medicare claim numbers, etc.

Although the local offices have kept card files containing major events pertaining to beneficiaries, they have not been able to provide immediate answers to a large number of questions. In many cases, the questions were sent to the headquarters in Baltimore via a telecommunications network. Beneficiaries at times had to wait 3 to 5 days for answers which were taken from magnetic tape files. Requests for Medicare numbers were answered at the local office from roll microfilm. Because of the deterioration of public service, the local offices finally requested that they be given more complete and up-to-date data from the master tape files kept in Baltimore. NOTE: (SSA would prefer to have a real-time system; however, it may not be feasible for another 7 to 10 years.)

Serious thought was given to providing additional beneficiary data from magnetic tapes on 16mm roll film. However, microfiche was favored and selected because the look-up time was shorter, less file space would be required (no need for film boxes and file cabinets), and fiche was more easily handled. SSA had experience with micromation equipment producing 16mm roll film since 1958. However, we had no experience with the use of 105mm roll film.

The fiche we decided on to carry frames of beneficiary data transferred from master magnetic tapes is 4" × 6" in size. Using the DatagraphiX 4440 and the Universal camera (25 to 1 reduction), we are printing 100 characters to a data line and 76 lines to a frame, and placing 73 frames on a fiche (72 frames of data and 1 frame of indexing information). One column of the fiche is used to print a programmed eye-visible header. A cut mark is recorded on each fiche to activate the automatic fiche cutter in a later operation. Although we are using the 25 to 1 reduction feature on the D-4440, we have not ruled out possible future use of the 42 to 1 feature.
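The layout figures above fix the nominal character capacity of one fiche. The short sketch below works that out from the numbers in the text (100 characters per line, 76 lines per frame, 73 frames of which 1 is the index). The function and constant names are illustrative, and the result is a nominal maximum, assuming every data line is fully used.

```python
CHARS_PER_LINE = 100     # from the text
LINES_PER_FRAME = 76     # from the text
FRAMES_PER_FICHE = 73    # from the text: 72 data frames + 1 index frame
INDEX_FRAMES = 1


def fiche_capacity(chars_per_line=CHARS_PER_LINE,
                   lines_per_frame=LINES_PER_FRAME,
                   frames_per_fiche=FRAMES_PER_FICHE,
                   index_frames=INDEX_FRAMES):
    """Return (data frames, characters per frame, data characters per fiche)."""
    data_frames = frames_per_fiche - index_frames
    chars_per_frame = chars_per_line * lines_per_frame
    return data_frames, chars_per_frame, data_frames * chars_per_frame


data_frames, chars_per_frame, chars_per_fiche = fiche_capacity()
print(data_frames, chars_per_frame, chars_per_fiche)  # 72 7600 547200
```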

An average frame of data contains nine summarized beneficiary records, and a fiche contains an average of 600 records. In addition, the Medicare claim numbers and name information are now carried on the microfiche, eliminating the need for roll film in the field.

The master file is divided generally into alphabetical segments by names of beneficiaries in each state. Actually, there are 56 sets of master fiche for the entire country. Large states such as New York and California have subdivided sets. One-sixth of the total file is updated each month and written on 5,000 silver master fiche using the D-4440. The silver fiche in roll form is processed by the Eastman Kodak Company. After processing has been completed, the film is edited for obvious photographic imperfections or camera malfunctions. Edited films are next cleaned on a Lipsner-Smith ultrasonic film cleaner. The cleaned films are duplicated on Kalvar 105mm roll film using the Kalvar Model 96 film duplicator. An average month's production of duplicates totals 300,000 fiche. The fiche are placed on an Alves automatic cutter which cuts each set into a stack of 4" × 6" fiche ready for editing. Ten percent of each set is randomly edited on a Realist fiche reader as a quality control. Approximately three stacks of 600 fiche are placed in a heavy-duty envelope for flat mailing to the appropriate offices.
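Two ratios follow from the production figures above: the length of a full update cycle and the average number of duplicates made per silver master. The sketch below derives both from the quoted numbers. The names are illustrative, and the duplicates-per-master figure is only an average; the paper does not say that every master is copied the same number of times.

```python
from fractions import Fraction

FRACTION_UPDATED_PER_MONTH = Fraction(1, 6)   # from the text
MASTER_FICHE_PER_MONTH = 5_000                # from the text
DUPLICATE_FICHE_PER_MONTH = 300_000           # from the text (average month)


def months_per_full_cycle(fraction_per_month):
    """Months needed to refresh the whole file once."""
    return 1 / fraction_per_month


def average_duplicates_per_master(duplicates, masters):
    """Average number of duplicate fiche produced per silver master."""
    return duplicates / masters


cycle = months_per_full_cycle(FRACTION_UPDATED_PER_MONTH)
copies = average_duplicates_per_master(DUPLICATE_FICHE_PER_MONTH,
                                       MASTER_FICHE_PER_MONTH)
print(cycle, copies)  # 6 60.0
```

A six-month cycle means each set reaches the field twice a year.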

A single fiche duplicating operation was added for the purpose of making one Kalvar copy fiche from the silver film in roll form. This, we found, was the least expensive way of reprinting one bad fiche out of a set. For this purpose, we use a Kalfile printer for timed exposure and a Kalvar Model M-160 for developing. A set of 105mm rewinds was added to the operation to create a unitized system.

In the local offices, the sets of fiche are filed in vertical fan-fold Acme Visible files. Realist readers and Bell and Howell reader-printers with 21X lenses are used for referencing fiche. An estimated 20 million prints are made from fiche yearly.

The project has satisfactorily met all of the anticipated requirements. Public service has been improved, and some savings have resulted from the elimination of the card file formerly used for reference. There have been minor problems with almost every machine in the system, particularly in the early months of operations, such as curled fiche copies, missing cut marks, blurred copy fiche, silver processing flaws, and overprints on the D-4440. Time, experience, manufacturers' cooperation, and ingenuity have helped to provide improvements which have made this project one of SSA's most successful film operations.

Before I talk about COM at Eastern Airlines, let me give you some background information about the company. Eastern is the second largest airline in the free world. Our fleet numbers more than 150 planes comprising six different types of aircraft. We maintain repair facilities in more than 300 separate locations throughout Eastern's system. Each facility must have maintenance manuals describing precisely how to remove, repair, and replace every nut, bolt, cam, switch, wire, pulley, gauge, relay, valve, and rivet for each type of aircraft that we fly.

We produce about one million pages of revisions to these maintenance manuals per month. Prior to 1956, one revision page in four was never inserted in a manual. It ended up lost in the mail, on someone's desk, or accidentally discarded. This meant that each year Eastern's maintenance manuals were three million pages in arrears. The Federal Aviation Administration periodically audits these maintenance manuals for completeness and accuracy. Eastern was continually being cited by the FAA for these discrepancies.

Our analysts determined that a microfilming installation would be justified by the elimination of the labor required to insert new pages into the maintenance manuals, to say nothing of the reduced materials, distribution and storage costs. The simplest way to handle this problem was to maintain one master manual. Each month this manual would be filmed and new copies of the complete book distributed to the maintenance locations in which viewers have been installed. Eastern initially began with two Bell and Howell Rotary Cameras which were subsequently replaced by a Kodak Planetary unit. The manuals are on 16mm roll film at a 24-to-1 reduction ratio, negative polarity. Actually, all of Eastern's microfilm is negative polarity. We find negative images easier on the eyes. Furthermore, negative images screen out most impurities in the film and lens of the viewer.
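The three-million-page backlog quoted for the maintenance manuals follows directly from the monthly revision volume and the share of pages that were never inserted. The sketch below reproduces that arithmetic. The names are illustrative, and the calculation assumes the one-in-four loss rate applied evenly across all twelve months.

```python
from fractions import Fraction

REVISION_PAGES_PER_MONTH = 1_000_000   # from the text (approximate)
MISSED_FRACTION = Fraction(1, 4)       # from the text: one page in four


def annual_backlog(pages_per_month=REVISION_PAGES_PER_MONTH,
                   missed_fraction=MISSED_FRACTION,
                   months=12):
    """Revision pages issued in a year that never reach a manual."""
    return int(pages_per_month * months * missed_fraction)


print(annual_backlog())  # 3000000
```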

Meanwhile our reservations group determined that our reservations agents needed quick and easy access to a wealth of information related to air travel. For example, it might be necessary for a reservations agent in Woodbridge, New Jersey, to explain in detail to a caller how to get to the Atlanta airport from downtown Atlanta, even though the agent had never been there herself. In addition, she needed fast access to information on hotel rates, rental cars, limousine service, ticket office hours, calculation of fares, and other matters related to air travel. After several false starts with other companies, the Houston-Fearless Company developed a unit specifically for Eastern. This unit contains microfiche in a revolving carousel and places more than 72 thousand pages of information at the agent's fingertips. Maximum access time for any one page is less than four seconds. Eastern now employs about 1,800 of these units.

With this exposure to microfilm, COM was a natural for Eastern from the introduction of the concept. However, as at many other companies in a tight money situation, coming up with a hard-dollar justification for equipment was difficult. The COM concept was too new and, at the time Eastern began considering it, it was relatively untried for commercial applications.