I came to Harwell in the summer of 1948 at the invitation of Dr. Klaus Fuchs. During the war I had been a member of a small group headed by Hartree, hidden away in a basement in the University of Manchester and working on a variety of mathematical problems connected with war-time activities. Our main tool for numerical work was the mechanical differential analyser, a fearsome and massive piece of mechanical engineering looking very much like a large-scale Meccano model - Hartree had in fact built a small prototype in Meccano before getting the full-scale machine. It was the most powerful calculating engine in Britain, probably in Europe, at the time - and an almost exact copy was built for Cambridge shortly after the Manchester machine came into use. It was, of course, an analogue, not a digital machine (although I don't think the name digital machine had been invented then). Using it was what one can fairly call man's work - you put on a boiler suit to change the set-up from one problem to another. One half of the Manchester machine is in the Computer Gallery in the Science Museum in South Kensington; I appear there in the accompanying photograph.

We - meaning Hartree's group - had done what at the time was rated a large-scale calculation for Fuchs and Peierls, concerned with the atomic bomb project. Fuchs went to Harwell when it was set up in 1946 to form and head the Theoretical Physics Division, and about a year later asked if I would join his Division to take charge of the computing section he was building. Thus I arrived on the site in mid-1948 - 31 years ago.

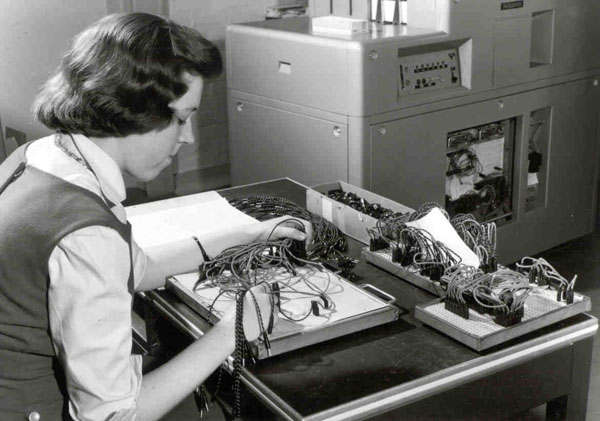

Computing then was a completely different world from what it is now. There were no computers as such apart from tremendous adventures going on in a small number of institutions in Britain and America, amongst which the universities of Cambridge and Manchester were outstanding. The computing at Harwell, as elsewhere, was done with mechanical desk machines, either hand or electrically operated. When I joined, the group consisted of about eight young people, mostly girls. Harwell was then just beginning its period of rapid build-up; the BEPO reactor had started up that summer and other large projects were under way. Fuchs had formed his computing group solely to serve Theoretical Physics Division but several things soon became very clear:

It may seem strange today that an enterprise so large and so technologically and scientifically sophisticated should have been planned without a central computing service, but remember, this was 30 years ago and the power and all-pervading nature of computation could not possibly have been realised at the time. Anyhow I told Fuchs of my views and he agreed; we then went to Sir John Cockcroft, the Director, with the proposal that the Theoretical Physics computing group should be developed into a station-wide service and he agreed; and that was the start of the Harwell computing organisation.

This was before the U.K.A.E.A. had been created, when Harwell was still part of the Ministry of Supply. The administration in London - who remembers the Adelphi? - classed desk machines with typewriters and other office equipment and controlled the supply rigidly. I made pilgrimages to the O & M people in the Adelphi to argue for almost every new machine we needed. I have a clear and pleasant memory of the occasion when, one morning, I convinced the head of that section - a very hearty Australian called MacPherson - that we really did need half-a-dozen new electric machines; and of celebrating the achievement by taking myself to lunch at Simpson's, more or less next door - and spending almost ten shillings on this blow-out. Official subsistence was probably about 3s 6d. at the time.

There is scope for a tremendous amount of technique in hand computing and with good techniques and good organisation a surprising amount can be achieved. But the limitations are very great indeed and put a premium on carrying the mathematical development of a problem as far as possible before turning to numerical methods. This is especially true of what was the main field of work at Harwell at the time, nuclear reactor theory, where one is dealing with partial differential equations or, worse, integro-differential equations. Direct numerical attack was quite out of the question then and a whole battery of analytical weapons had been developed to make it possible to get anywhere at all with the limited computational resources available.

An excellent and comprehensive account of the body of mathematical methods developed for the attack on these problems is contained in Nuclear Reactor Theory: Proceedings of the 11th Symposium in Applied Mathematics of the American Mathematical Society, April 1959, published by the A.M.S., 1961.

This, of course, applied everywhere, not just to Harwell. The late Boris Davison was an outstanding classical mathematician and a great expert here. He wrote, jointly with John Sykes (who is now with Oxford University Press), the definitive book on the subject, Neutron Transport Theory (Oxford University Press, 1957), a tour de force of applied complex-variable theory. Boris knew his way around the complex plane better than anyone else I have ever met. The methods almost always led to some form of series expansion which was evaluated numerically term-by-term; doubtless many will remember the names P3 or P5 method, the suffix giving the order of the spherical harmonic to which the expansion was taken. Sheer algebraic complexity usually set the limit to this. Reactors being more or less cylindrical, Bessel functions turned up in most calculations and we made great use of published tables. The best tables by far were those published by the British Association; but we were still suffering severely from war-time restrictions and just could not buy the number of copies we needed. Entirely illegally, I got photographic copies made (no Xerox machines then). It's an interesting comment on the times, when one recalls that Harwell had pretty well unlimited funds.

Everyone who runs a computing service knows that when someone comes with a numerical problem the first thing to do is to find out if this is really the problem he wants solved, or whether it is, in fact, some transformation, which may or may not be useful, of the original problem. We had any number of experiences of scientists doing a lot of mathematics on a problem before bringing it to us, when a straight numerical attack from the beginning was far more effective. One case I remember is being asked to evaluate a singularly horrible mess of series, polynomials and quadratures which would have taken days of work; asking where it all came from I was shown a fairly simple non-linear ordinary differential equation and one of the girls got the result which was wanted by direct numerical integration in half a day.

Somewhere around 1952 we were asked by Dr. T.E. Cranshaw if we could help with what one would now call a data collection problem. He was studying cosmic ray showers and had an array of detectors on the Culham airfield; he needed to know, over a long period of time, which detectors had fired when, and to do a good deal of fairly simple arithmetic on the observations. This seemed a good application for standard punched-card accounting machinery and we went with the problem to the relevant manufacturers, British Tabulating Machine Co. (B.T.M.) and to I.B.M., the latter having just set up in a small way in Britain following the dissolution of the link between the two companies. Ironically, I.B.M. were not interested but B.T.M. were; they were enthusiastic and gave us a lot of help. We finished up with an 80-column card punch into which the signal cables from the detectors came and which punched a card showing the pattern of firings, and the time, whenever any one or a combination fired.

The reason for relating this is that the undertaking gave us elementary but valuable experience of punched-card machinery. Not long afterwards news of the Monte Carlo method of tackling neutron transport problems began to come over from America. Essentially a simulation method, in which one followed the life-histories of individual neutrons, this side-stepped the formal mathematical difficulties of the classical methods but required very large amounts of relatively simple computation if results of acceptable precision were to be obtained. Hand computing was quite inadequate but the method was well adapted to punched card machinery which was available as standard, commercially-produced equipment. Further, there was a good deal of experience of the use of this machinery for scientific computation, in the National Physical Laboratory especially. We set up a Punched Card Machine section around 1953 and lured James Hailstone (and his wife Elizabeth) from N.P.L. to run it.

We did a lot of Monte Carlo work with this machinery. K.W. Morton (now Professor of Applied Mathematics at Reading) had joined the group not long before and did a great deal to improve the statistical techniques and so to reduce the amount of computation needed in a problem. We did much general computation also, and here the Hailstones, who had a true flair for exploiting the capabilities of these machines, were invaluable. The manufacturers produced increasingly powerful and sophisticated machines capable of doing, automatically, increasingly complicated calculations, and we finally installed two of what I think can fairly be called the culmination of the punched card machines, the BTM 555. This isn't the place to give a long account of what I still think was a remarkable machine; so let me just say that whilst it was programmed by setting up a plug board, it had a magnetic drum store, allowed the repetition of program steps (DO loops?) and, in effect, the incorporation of sub-routines and could be made to do remarkable tricks. It was, in fact, not far off a computer. James Hailstone exploited this machine to the full; he wrote a short book, which B.T.M. published, describing the machine from the point of view of one concerned with scientific computation and giving half-a-dozen examples of applications: one was Monte Carlo, another a tricky combinatorial problem which arose in nuclear structure theory. I still have a copy.

I think it worth recording that we used the 555 for what must have been one of the earliest examples of automatic data processing. Reactor Physics Division had a time-of-flight neutron spectrometer in the BEPO hangar for measurement of cross-sections. It was a simple but tedious calculation to go from the numbers they recorded in the experiments to the cross-sections they wanted, and they were doing this by hand and getting swamped. We automated the process, but not without difficulty. The basic calculation was simple enough; the real problem was to find out exactly what calibration and other corrections had to be applied to the raw observations, how these varied from day to day (as they did) and what other folk-lore came into the process. It was quite a salutary experience for both sides at the time.

The punched-card machine installation was in operation and doing good work from about 1953 to about 1957, but meanwhile the development of the true digital electronic computer was gathering momentum. Wilkes at Cambridge was building EDSAC-1, to be followed by EDSAC-2; Williams and Kilburn at Manchester were building the machine which the Ferranti Mark 1 was based on, to be followed by Mark 1*; and at N.P.L. a group which included Turing and Wilkinson was building ACE, or more correctly Pilot ACE, on which the English Electric DEUCE was based. I went to what must have been the very first programming course in Britain, at Cambridge in 1950, and shortly afterwards some of the members of the computing group went to courses at Manchester. I and some of my colleagues went regularly to the seminars which Wilkes arranged on alternate Thursday afternoons in the Cambridge laboratory, as did members of the Electronics Division, notably E.H. Cooke-Yarborough, R.C.M. Barnes and D.J.H. Thomas. When petrol became more readily available, Cooke-Yarborough drove us there and back in his red Allard - a great experience. I've often told people that for several years the entire British computer population could and did meet together in the Cambridge lecture room.

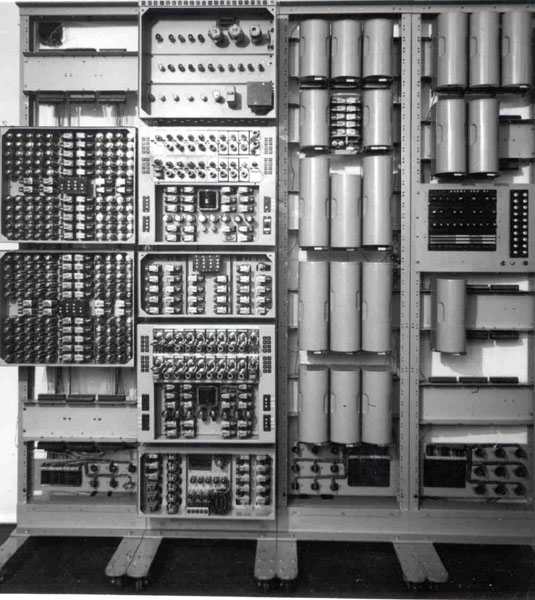

It was becoming clear that the computer was going to happen and to be important, though I doubt if anyone foresaw just how important. There are, by the way, plenty of stories about estimates made at the time - for example that five or six machines at most would meet all foreseeable needs in Britain. These stories are true - I was at one quite high-level meeting at which such an estimate was agreed. No-one should laugh; who, only a few years ago, would have predicted the market for hand electronic calculators? However, as a step on the way Electronics Division offered to design and build for us an automatic calculator in which the switching was done by relays (as had been done in a classic series of machines built by Stibitz in Bell Labs.) but in which the decimal arithmetic and memory were electronic, using about 800 scale-of-ten Dekatron tubes. We got this in 1951 and housed it in the old control tower on the south-east corner of the airfield. It was, of course, slow, not much faster than hand calculation on single operations, but fully automatic, extremely reliable and utterly relentless.

One day E.B. Fossey, an excellent hand-computer (still with what used to be called the Atlas Laboratory), settled down beside the machine with his desk machine and attempted a race. He kept level for about half an hour working flat out, but had to retire, exhausted; the machine just ploughed on.

It took little power and could be left unattended for long periods; I think the record was over one Christmas-New Year holiday when it was all by itself, with miles of input data on punched tape to keep it happy, for at least ten days and was still ticking away when we came back. It was perhaps only just a computer, but granted that, it was certainly one of the earliest in serious and regular use in the country. There is an account in Bowden's Faster Than Thought. The subsequent history of this machine is interesting. We used it up to about 1958 and then, rather than scrap it, offered it as a prize to the educational institute which could give us the best reason for having it. This was the idea of J.M. Hammersley of Oxford and we conducted the operation in collaboration with the Oxford Extra-Mural Department. It went to Wolverhampton Polytechnic who used it for teaching and for real work for at least the next 15 years - an astonishing record; it is now in a museum in Birmingham, and I'm told it can still be made to work.

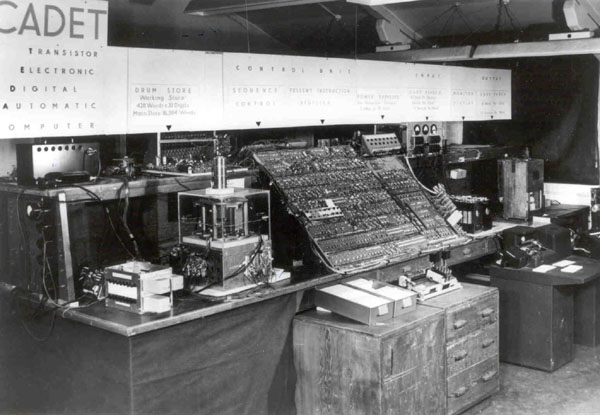

The first computers, and those in service right up to the early 1960's, were valve machines. Transistors began to appear in the early 1950's and Electronics Division immediately began to take an interest in their possibilities. In 1953 Sir John Cockcroft encouraged them to design and build a machine for us, using transistors throughout. The resulting machine was called CADET (Transistor Electronic Digital Automatic Computer - backwards). It went into regular service in an experimental form in August 1956. However, CADET used point-contact transistors, which were the only ones available when the project started. By 1958 these had been made obsolete by the development of junction transistors, so the machine was never re-built in a fully engineered form. It continued in regular use for about four years.

The first half of the 1950's saw the foundations being laid for the computer industry and also for methods for direct numerical solution of field problems, particularly the equations of neutron transport and diffusion. The second is, of course, not independent of the first; these methods lead to very heavy computation and there is no great incentive to carry their development very far if there is no possibility of actually using them. The greatly improved understanding of what was going on in these numerical processes led to the development of effective and efficient computer programs for studying, for example, criticality conditions in reactor assemblies. A.W.R.E. Aldermaston had installed a Ferranti Mark 1* in 1954. We were able to get time on it, mostly to run a reactor-criticality program written by A. Hassitt of T.P. Division. A single run could take from 10 to 30 minutes and the reactor design people always wanted a lot of runs so as to explore some parameter space. We got most of our time at night, between about 10 p.m. and 6 a.m. the following morning. There was no such thing as multi-programming - you had the machine to yourself and you drove it from the console - so you were really working all the time. I, and most other members of the computing group, spent a lot of our nights in the Aldermaston machine room. Driving back at dawn over the Berkshire countryside was often quite delightful. By 1955 it was obvious that we needed a powerful machine of our own at Harwell - powerful must be understood as relating to what was considered powerful at the time. Neither of the machines which were available then, the Mark 1* and the English Electric DEUCE, seemed suitable. In 1956 Ferranti announced their Mercury, one of the first machines to have built-in floating-point arithmetic, which seemed very suitable. Mercury, like Mark I, was essentially a Manchester University design.
As well as the novelty of floating-point it had a core store; it's wryly amusing to recall that Ferranti's first announcements of the machine spoke of the giant immediate-access store - 1024 words (of 40 bits each). We ordered one in 1956, not without some opposition from, curiously enough, parts of the scientific population; not everyone was convinced that a computer was really a necessity. The actual process of getting the machine ordered is a delight to recall. I had convinced my Division Head - Brian Flowers (now Lord Flowers) - that this was what we needed and he told me to go ahead. I wrote a one-page letter to Tom LeCren, who was then Secretary of Harwell, setting out the case and saying it would cost £80,000. He asked me to explain a few points and substantiate a few statements, accepted what I said and sent off the order. We got the machine in 1958 and installed it in decidedly slummy conditions in Building 328. Ferranti made about twenty of these machines, quite a number of them going to nuclear-energy centres; ours was, I think, number 4; there was already one at Saclay when we got ours, and later ones went to Risley and Winfrith and also to CERN.

All early computer users wrote their programs in machine code so programming was something of a black art. R.A. Brooker in Manchester (now Professor and pro-Vice Chancellor at Essex) devised an Autocode for the Mark 1 and Mark 1*, which was a simple, easy-to-learn and easy-to-use high level language - not very high, to be sure, and very slow in execution, but a great improvement on machine code if you had only a fairly small program to write. We introduced this to Harwell and it caught on. When Ferranti embarked on building Mercury - based, as I said, on the next Manchester design - Brooker decided to write a new Autocode which, because of the higher basic speed and better facilities offered by Mercury, would be much more powerful and flexible and much faster. He spent a lot of time with us at Harwell discussing what should go into the new system, so that Harwell certainly had a significant influence on what he produced. The system, Mercury Autocode, proved a great success. Although simple when compared with modern high-level languages it provided an admirable range of facilities and was very easy to learn. With various enhancements it had a remarkably long life; a compiler for the final version, called CHLF because it was produced by a collaboration between CERN, Harwell, London (University) and Farnborough (RAE), was written for Atlas and was still in use in the early 1970's.

Things began to move very fast in the computer world in the second half of the 1950's. Technology, particularly that of producing core stores, improved greatly and several American companies began to produce bigger and more powerful machines than Mercury; above all, I.B.M. entered the field in earnest with the 704. This succeeded their first large-scale machine, the 701, of which they had produced eighteen between 1952 and 1954. It had built-in floating point, like Mercury, but was altogether on a larger scale and, in particular, could have what was then a scarcely believable size of core store, 32 Kwords. It is only fair to add that it cost a very great deal more than Mercury. A.W.R.E. installed one of these machines in 1957 and we used it for the increasingly large amount of work which was too big for Mercury. Tony Hassitt had rewritten his reactor program for Mercury and the new version, much faster and more powerful, was much used. But the spread of these bigger machines in America had led to the development of bigger and more powerful programs, and of linked suites of programs, which the Harwell reactor engineers and physicists wanted to use. These programs were produced in the big American nuclear laboratories such as Argonne, Knolls (General Electric) and Bettis (Westinghouse); also much important work was done at Los Alamos, including, for example, the production by Bengt Carlson of the simple but ingenious Sn method for direct numerical integration of the neutron transport equation. I think it was about this time that the writers began to give names, mostly acronyms, to their products. The 704, like Mercury, was a valve machine. Towards the end of the decade I.B.M. produced a transistor (and enhanced) version, the 7090, which was probably the first large-scale machine using transistor circuitry. A.W.R.E. replaced their 704 by the faster 7090 in 1960.
As early as 1959 I and several of my colleagues were feeling concerned at the way computer production was going. We could see that at least in the scientific and technological field (and especially the reactor field) there was a need for ever more powerful machines. American industry was already clearly in the lead and I.B.M. was embarking on the Stretch machine which at the time seemed to be aiming almost at the ultimate in computers. Talks between myself, Bill Morton, Ted Cooke-Yarborough and John Corner (in charge of computing and theoretical work at A.W.R.E.) led to the view that something should be done to get an advanced, big machine project going in Britain. Corner and I wrote to Sir John Cockcroft; he was most sympathetic to the idea and wrote to all the leading people in the country concerned with computers inviting them to come to Harwell to discuss the needs, problems and possibilities. There was a ready response and we had two very constructive meetings in which, in addition to A.E.A. people, Wilkes, Williams, Kilburn, Strachey, Halsbury (then Managing Director of N.R.D.C.) and others took part. Everyone agreed that there was a need for an advanced machine project to be supported in Britain. The final decision was that this should be based on the new machine then being designed at Manchester by Kilburn and his colleagues, a machine which satisfied the criteria of computing power, storage capacity and general flexibility and sophistication at which we had arrived. Whilst there were great and important differences between the two concepts, the aim was a machine in the same class as I.B.M.'s Stretch. A.W.R.E., incidentally, installed a Stretch in 1962.

Merely to shower blessings on an R & D project was a long way from being enough. What was important was that the Manchester design should become a properly engineered machine, manufactured by the computer industry and sold as an industrial product. In 1960 Ferranti, who had a long association with Manchester University, indicated that they would engineer and market the new design if they were guaranteed one order. They proposed to call it Atlas - after Mark I they had given all their machines mythological names: Mercury, Pegasus, Orion ... Sir John Cockcroft, who all along had taken the view that this was a project which the A.E.A. might well support, gave me the job of building up the case. I spent most of 1960 going round the A.E.A. Establishments (not A.W.R.E., understandably, although I had many talks with Corner) trying to assess the future demand for computing; Cooke-Yarborough helped a lot. The Authority accepted the case for buying an Atlas, but because of the cost, nearly £3M, they had to have the approval of the Treasury and of the Minister for Science, Lord Hailsham. After much discussion, in which Sir William (now Lord) Penney took an important part, Sir John Cockcroft having now left Harwell to become the first Master of Churchill College, Cambridge, the following proposal was put to the Treasury and to the Minister:

The authorisation which came out of the Minister's office, however, was:

The last statement reflected the fact that N.I.R.N.S. had been set up with the right to provide services without charge to universities, whilst neither the A.E.A. nor Government bodies had this right.

N.I.R.N.S., with Lord Bridges as Chairman, then took over the project and agreed to build a new laboratory, the Atlas Computer Laboratory, on the Chilton site. The computer was ordered in the summer of 1961 and Ferranti gave a splendid lunch-party at the Savoy in September to celebrate this. I recall saying to Tom Kilburn as we left that I should never have expected computing to run to such high life. I was given the job of Director of the new laboratory and transferred from the A.E.A. to N.I.R.N.S. in December to get this new enterprise going. My thirteen years with Harwell had been immensely exciting and enjoyable and so were the next fourteen with Atlas. But that is another story.