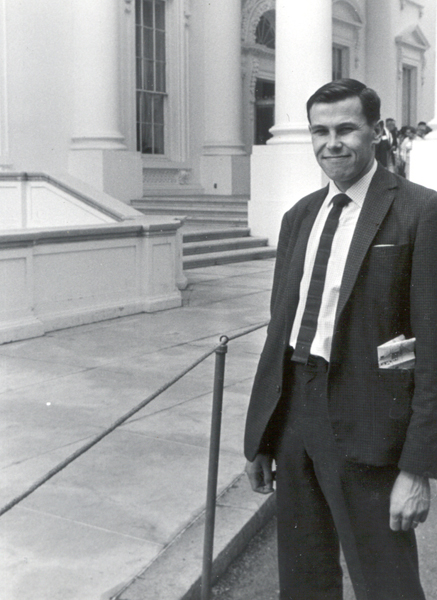

Between 16th May and 13th June 1965 Bob Churchhouse (RFC), Bart Fossey (EBF) and Bob Hopgood (FRAH) visited the United States. The primary reason for the visit was to attend the IFIP Conference held in New York from 23rd to 30th May; the secondary reason was to visit a number of computing laboratories and computer manufacturers.

We flew to Boston on Sunday 16th May and visited M.I.T. on 17th and 18th.

Bob and Jan Conrod had returned to MITRE by now; they met us at the airport and we spent the Sunday afternoon seeing the sights of Boston.

On the evening of the 18th we went to New York and on the 19th RFC and FRAH visited IBM Poughkeepsie. On this day EBF should have visited Brookhaven but an important phone message was not delivered to him and consequently this visit was missed.

* On the subject of non-delivered messages see the report of the visit to Poughkeepsie.

On 20th we all went to Bell Labs, Murray Hill, New Jersey and EBF stayed there the next day to give two lectures on the Atlas Supervisor. RFC and FRAH meanwhile went on to Washington and visited the National Bureau of Standards. EBF joined them in Washington for the weekend.

We returned to New York on the afternoon of Sunday 23rd May and stayed there until 31st May attending the IFIP Conference. On 31st May RFC and FRAH went to Pittsburgh whilst EBF went to Oakridge, Tennessee. Although 31st May (Memorial Day) is a public holiday in the U.S.A., RFC and FRAH were able to visit Carnegie Institute of Technology at Pittsburgh. On 1st June they spent some more time at Carnegie and then flew on to Minneapolis where they spent 2nd June visiting C.D.C. Meanwhile EBF was visiting Oakridge National Laboratory before flying on to San Francisco on 2nd June. On 3rd June EBF visited the Berkeley Bubble Chamber Group whilst RFC and FRAH flew to San Francisco from Minneapolis. On 4th June RFC visited Professor Lehmer at Berkeley and the others spent the day at the University of Berkeley Computing Laboratory.

We spent the weekend of 5th/6th June in San Francisco and travelled to Palo Alto on the evening of Sunday 6th. We visited Stanford University Computation Center on 7th and on the 8th RFC and FRAH spent half the day with the C.D.C. Programming Group at Palo Alto and EBF went to Los Angeles and visited the Western Data Processing Center. The other two joined him in Los Angeles on the evening of the 8th. On the 9th we visited the RAND Corporation, Santa Monica and on the 10th the California Institute of Technology. Finally on 11th June we visited C.D.C. Systems Programming Group, Los Angeles. On 12th June we flew to New York and then on to London.

We spent the morning of 17th May with Professor Corbato. He talked to us about the history of Project MAC and gave us a demonstration of its use.

Originally there were four or five major computers at MIT and a lot of smaller ones. The largest was the 709 at the Computation Centre. Corbato and a few others tried a time-sharing system*, using originally only 5K of core (it now takes more than 32K).

*There is a clear distinction drawn in the U.S.A. between time-sharing (as in MAC) and multi-programming (as in ATLAS).

The first real working system came with the introduction of the 1301 disk by IBM (Nov. 1961). The computer used for the time-sharing project was replaced by a 7090 in January 1962 but it was effectively not in action until May. The full Project MAC didn't really get started until the Spring of 1963. By Summer 1963 the Computation Centre's machine with 10-15 consoles was in use. It was realised that Project MAC would require its own machine rather than use half the time of the Computation Centre (the other half being used in the conventional manner). Consequently an identical copy of the Centre's 7094 was ordered, it arrived about the end of 1963 and was fully operational within a week. The machine has a 36M word disc.

The present arrangement is that the MAC 7094 operates on the time-sharing system all the time and the Centre's 7094 operates time-sharing for half the time. The Centre has to serve everyone whereas MAC has a list of authorised users (at present about 300).

There are facilities for up to 120 consoles provided in all. Most of these are around the campus but some are at considerable distances, e.g. Lincoln Labs., one at Bell Labs. (~ 300 miles away); John McCarthy at Stanford University (Palo Alto, California) has a TWX line and there is a very crude Telex which has been used by a number of people abroad, including Wilkes at Cambridge. Not more than 30 consoles can be active on the MAC machine at any one time and not more than 24 on the Centre machine.

The primary reason for embarking on Project MAC was to try to overcome the frustration which most users felt with the conventional system caused by slow turn-round even for very short development runs. (An article by Wilkes which we have seen since we came back puts this very well. In the early days of computers one programmer sat at the machine's console and used the machine at his own speed to debug his program. Since the machine was idle most of the time this was extremely wasteful. With the arrival of 704, 7090's etc. the system of closed-shop operations was introduced with all work going through in a queue. This led to much better use of the machine but also meant that programmers frequently could do nothing for several hours awaiting the result of a run of perhaps a few seconds. Project MAC is an attempt to get the good points of these two extremes. The programmer can use the machine during long periods of the day. If he sat at his console all day he would only get about 2% of the machine's time although he would feel that he had 100% of the time of a machine 50 times slower.)

Corbato told us that experience now shows that people are still frustrated, though much less so than formerly, and that the vast majority feel that the Project is well worthwhile.

All the users have a job number, a codeword and a time allocation. The Supervisor checks their time allocation and they are told at the end of each session how much time they have used and how much remains. Users are also allocated a certain amount of disc storage; a total of 3/8 of the 36M-word disc is available. Each file on the disc has an activity date; theoretically any file not referred to within a week is dumped by the Supervisor onto an archive tape and a message printed for the programmer and operations staff. In practice people have developed file massagers which defeat the system. There seems to be an element of dishonesty about the people using MAC: the codeword system had to be introduced because people were using other people's job numbers and names! The codeword is typed in but printing is suppressed so that it never appears on any document.
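The activity-date rule can be sketched as follows. This is a minimal illustration, not the MAC Supervisor's actual logic: the names `DiscFile`, `sweep` and `massage` are hypothetical, and "not referred to within a week" is assumed to mean an activity date more than seven days old.

```python
from dataclasses import dataclass
from datetime import date, timedelta

ARCHIVE_AFTER = timedelta(days=7)  # assumed reading of "within a week"


@dataclass
class DiscFile:
    name: str
    owner: str
    last_activity: date  # the "activity date" kept for each file


def sweep(files, today):
    """Split files into (kept on disc, dumped to archive tape) by activity date."""
    kept, archived = [], []
    for f in files:
        if today - f.last_activity > ARCHIVE_AFTER:
            archived.append(f)
        else:
            kept.append(f)
    return kept, archived


def massage(files, today):
    """What a 'file massager' amounts to: refer to every file so none looks stale."""
    for f in files:
        f.last_activity = today
```

Running `massage` before each sweep leaves nothing for `sweep` to archive, which is how such a program defeats the rule.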

We asked what sort of work was being done by users of MAC. Among the fields of activity mentioned were: machine-aided design, development of a LISP-2 system, civil engineering development and problem-oriented languages. People do not get permission to use MAC unless their project, in some sense, will help advance the state of the art. They did not encourage people to use MAC for the development of programs which would later be turned over to operations as long-running production jobs. Our own view was that for the majority of installations, including the Atlas Laboratory, one of the main advantages of a MAC-type system would be the boost to the development of jobs, including systems programs, intended for production runs later. MAC itself is rather a special case of course, being largely concerned with research into the use of computers.

Latest developments include the provision of a new manual (it is 400 pages in all) which is kept up to date on-line; users can request sections typed out at their consoles. Another facility will allow use of common routines by different jobs; arrangements are also being made to allow use of magnetic tapes from the consoles - at present this can't be done, although the large disc makes use of tapes much less common than elsewhere. Corbato said they also plan to make the allocation of resources more graceful (he didn't elaborate on this except to say that he thought they'd introduce resource pools for groups of users).

They were now feeling dissatisfied with the 7094 (the system works but is limited) and were going to introduce their new machine, the GE636, which they had developed jointly with GE and Bell Labs. They were going to receive a GE635 in June, and use it as a 636-prototype by means of a simulator (the efficiency of the simulator is 1%). The 636 would arrive in February 1966 and Bell Labs would get their machine in March. A key feature of the 636 is its file; this allows several people to read it simultaneously but only one can write to it. The core store will be 128K at MAC, but Bell Labs will have 256K (1 µsec cycle time). Hardware can access 4 words/cycle and with 2 CPU's gives 8 words/sec accessed. There is a 100 µsec overhead for switching processors. The machine is superficially like a 7094 (it has a 36-bit word, for example) but it has been carefully designed as a multi-processor. The central processors have no direct communication with the I/O controllers; communication is done by messages in memory. The philosophy of the 636 is quite different from the CDC 6600 - although at first sight this seems surprising - the CDC 6600 is best for one user, but the 636 is designed for many.

In the IBM 360 the I/O Controllers were put into the Processor (this was a great disappointment to Corbato and his colleagues). The 360/67 however was introduced to try to remedy this and in fact it now looks quite like a 636. Corbato told us that IBM keep trying to foist a 360/67 onto MIT and that MIT will have none of it because, among other things, the 360 order code is one of the world's worst. The GE 636 has a system of page address registers (in the Atlas sense) but has no fixed size for the Supervisor. As much as possible of the system will be written in PL/1. The present system on the 7094 takes 400K to 1000K in all - the central staff wrote about a quarter of this. Corbato thought that 100 lines of de-bugged code per man/month was a valid estimate for machine-code programs, or at best twice this. Apart from PL/1 they considered Fortran 4 and MAD as possible alternatives for the language of the system. There is no implementation of MAD on the 636 and although there is an implementation of Algol on the 635 MIT don't like it because it is a very literal interpretation based on the Algol 60 Committee's report and also because it would not be easy to move it to the 636; it also has a lot of faults and does not allow any form of sub-compiling. They hope to have a subset of PL/1 implemented by October. They are not planning (or caring about) any optimisation of this compiler until the 636 arrives in February 1966.

Following the arrival of the 636 there will be a 3-months overlap with the 7094.

Bell Labs have a contract with Digitec to produce a good PL/1 compiler for the 636 within a year, completely debugged and optimised in 18 months.

Questioned about the length of the average job on MAC Corbato said that it was about 4 seconds. The most popular language was MAD and they thought Fortran was crude. In the new system users will pay for time, peripherals, input/output but they will be able to include a parameter in their job descriptions limiting their liability to $N.

Corbato said they were anxious to get both consoles and printers with large character sets. The IBM1050 will be used as consoles, these have 89 printing characters (including space) as well as control keys. They are producing magnetic tapes to be printed off-line on a 1401 having a printer with a 120-character special chain. A new variant on the 360's will allow virtually a small printing press with punched cards defining characters out of several sets.

The Atlas Laboratory Sigma 2 Multi-access Project was inspired partly by this visit.

In the afternoon, discussions took place with the above. The subject was much the same as in the morning with a slightly different slant. Additional information obtained:-

Several lines of research are taking place. These were briefly described:

In the evening after the first day at MIT, Bob and Jan Conrod took us to the Hillbilly Ranch to hear the Lilly Brothers playing with Don Stover. They had arrived in Boston in 1952 and stayed until 1970. This was probably the only location for listening to Bluegrass Music in New England during that period.

The correct name is M.I.T., T.I.P. (Technical Information Project).

The head of this project is Dick Kessler. It has been added as a facility to Project MAC and has been achieved with a remarkably small staff. Kessler's view was that the library function alone couldn't justify private computer facilities; it would have to be grafted on as an adjunct to an existing public utility (i.e. MAC).

Work began about three years ago on a prototype and it was regarded as essential that any system adopted should be capable of being extended 10 or 100 times. Thus, finding 6 physicist-indexers was feasible but finding 60 or 600 was impossible; hence indexers were ruled out. The work of preparing data should not demand high intellectual skills. The system should have serious practical utility (above critical size). After much thought they decided on a system based on the contents of 23 physics journals - analysis had shown that these accounted for 75% of papers abstracted in Physics Abstracts.

It was impossible to solve 100% of the problem, Kessler said; there was a strong law of diminishing returns. They aimed to satisfy 75% of physicists' needs and to know the cost of satisfying the next 5%.

The titles, authors, journal and references are punched for about 1000-1200 articles a month. Since the references are put into the system the very powerful search strategy of a citation index can be employed.

The customers, using the MAC consoles, use a form of basic English viz:

SEARCH Phys Rev Vol 120-125

SEARCH All new (= latest issue of each of 23 journals)

FIND AUTHOR Smith

FIND TITLE containing Cryogenics

FIND microwaves BUT NOT spectroscopy

FIND papers WITH superconducting BUT NOT FROM MIT

The citation index also allows such requests as:

FIND all papers REFERRING to paper X

FIND all papers SIMILAR to paper X

(i.e. find all papers whose lists of references have a correlation coefficient above some threshold with the references of paper X).
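The two citation-index requests can be sketched as follows. This is only an illustration: the report does not say which correlation coefficient T.I.P. used, so the cosine overlap of two reference lists stands in for it here, and the function names, the paper identifiers and the 0.5 threshold are all hypothetical.

```python
from math import sqrt


def similarity(refs_a, refs_b):
    """Cosine overlap of two reference lists (stand-in for the unspecified coefficient)."""
    a, b = set(refs_a), set(refs_b)
    if not a or not b:
        return 0.0
    return len(a & b) / sqrt(len(a) * len(b))


def find_referring(papers, target):
    """FIND all papers REFERRING to paper X."""
    return sorted(pid for pid, refs in papers.items() if target in refs)


def find_similar(papers, target, threshold=0.5):
    """FIND all papers SIMILAR to paper X: reference lists that overlap strongly."""
    target_refs = papers[target]
    return sorted(
        pid
        for pid, refs in papers.items()
        if pid != target and similarity(refs, target_refs) >= threshold
    )


# Made-up data: each paper maps to the list of papers it cites.
papers = {
    "X": ["r1", "r2", "r3", "r4"],
    "Y": ["r1", "r2", "r3", "r9"],
    "Z": ["r7", "r8"],
    "W": ["X", "r1"],
}
```

With this data `find_referring(papers, "X")` picks out W, which cites X, while `find_similar(papers, "X")` picks out Y, which shares three of its four references with X.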

At present there are about 35,000 articles (~ 3000 tracks of 512 words on the disc). Of the three years that have elapsed since the start of the project one year was spent in studying the broad decisions. The project leans very heavily on MAC.

Apart from Kessler the staff on the project consists of 2 students, 3 keypunchers and 1 secretary.

Kessler said that 2 keypunchers can keep up with the current journals. Coverage of the back-log is spotty; for Phys. Rev. they go back 15 years but for most other journals they go back only a few years. Several interesting historical facts have come to light in the course of this work, e.g.

Each article put into the system requires about 12 cards - fairly loosely packed (e.g. each author gets a separate card).

They have built up a cross-reference matrix showing how many papers in journal A refer to papers in journal B. By means of this they can decide which journal they should next introduce into the scheme.
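A cross-reference matrix of this kind, and one way of using it, might look like the sketch below. The selection rule is an assumption (the report does not say how the decision is made): it picks the journal outside the scheme that is cited most often by journals already in it. The function names are hypothetical.

```python
from collections import Counter


def cross_reference_matrix(citations):
    """Count references from journal A to journal B; keys are (A, B) pairs."""
    return Counter(citations)


def next_journal(matrix, covered):
    """Pick the uncovered journal most often cited by the covered journals."""
    inbound = Counter()
    for (src, dst), count in matrix.items():
        if src in covered and dst not in covered:
            inbound[dst] += count
    return inbound.most_common(1)[0][0] if inbound else None
```

For example, if papers in a covered journal A cite journal B five times and journal C three times, `next_journal` returns B.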

The extensions to the system envisaged are as follows:-

A paper on this project was published in Physics Today in March 1965.

Programming was mainly done in FAP. Recoding for the GE636 has not yet started.

The Atlas Laboratory Information Retrieval Project was based on this visit.

We spent the afternoon of 18th May with Dick Steinberg who is Operations Chief on the Computation Centre 7094 Mark I.

The machine is similar to the MAC machine but has a different drum and disc. It is essentially a service machine for the MIT faculty, staff and students + about 50 other colleges, at present free of charge.

There is no help on programming but some consulting service on languages, systems and general help is provided. Only one course (late in the Summer) is given, and this is mainly for new research assistants.

There is a small systems maintenance group (at most 7 people, now only 5). They don't collaborate much with IBM because although they use the Fortran Monitor System they have changed it greatly to allow time-sharing.

Fortran II is still the most-used language at the Centre. They haven't encouraged Fortran IV because they don't like IBSYS. They encourage MAD strongly - it's a fast compiler and the bulk of the work at the Centre is compiling.

The typical job is rather less than 2 minutes: about 60% of the time is spent in compiling Fortran programs.

Running of the machine(s) (they have some 1401's as well as the '94) is carried out on 4 shifts by 3 or 4 men per evening or night shift. The 3 or 4 men act as follows:

Of these (2) is most likely to be the Shift Supervisor.

On a day shift they have 5 working operators + a Supervisor (who prepares the schedules for the other shifts) + 1 more to look after various admin. problems including stocks of cards and pay.

The punch room service provided is very small - 3 people in all. Most people punch their work themselves, particularly programs, but the operators are usually asked to punch data. All punching is verified and there are no rules about using ink or special forms. Punching speeds are reckoned to be 500 cards/hour. The keypunch operators are given special training.

Turn-round at this time of the year is 3-4 days; express runs might be returned in about 12 hours. An express run has to satisfy certain conditions: 1 minute computing (at most), not more than 500 lines of output, no private magnetic tapes. Programs are batched on the 1401. There are three 1401's in all in the Centre; one is tied up for about 8 hours a day on the Calcomp Plotter.
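The express-run conditions amount to a simple test on each job. A minimal sketch, with a hypothetical `Job` record whose field names are not taken from the Centre's system:

```python
from dataclasses import dataclass


@dataclass
class Job:
    compute_seconds: float
    output_lines: int
    uses_private_tapes: bool


def qualifies_for_express(job):
    """At most 1 minute computing, at most 500 lines of output, no private tapes."""
    return (
        job.compute_seconds <= 60
        and job.output_lines <= 500
        and not job.uses_private_tapes
    )
```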

Apart from the discs (initially 18M words, now 36M) they have 500 reels of magnetic tape as back-up for the disc, and about 1000 other reels for general use.

Before time-sharing 3 printers were used pretty solidly (they have 4). Now one or two are always idle. An interesting empirical rule they have found is

1 × 7094 requires 2½ × 1401

As already stated they use the Centre machine for time-sharing as part of Project MAC for about half its time (in fact 10 hours/day in the week and 14 hours/day at weekends).

In addition to the 2 × 7094s and 3 × 1401s there are about 30 other machines on the campus (including a 7044, 1800, 3 × 1620, 2 × 1401) and some smaller machines: LGP-30, PDP-1, etc.

The visit had a very unsatisfactory beginning. We (RFC and FRAH) had been told to go to IBM's new headquarters at 201 East 42nd Street, New York at 8.15a.m. from where transport would be provided to Poughkeepsie (which is about 75 miles North of New York). Unfortunately a telephone message to the hotel informing us of a change of venue was not delivered, despite the fact that we asked twice if any messages had been received. Consequently we missed the transport and by the time alternative arrangements had been made it was 10.30. We reached Poughkeepsie, driven in a Ford Mustang by Dick Larkin, and went to the main plant after lunch.

The visit was thus cut to about 1½ hours but arrangements for showing us round had been laid on and we think we saw all the essentials. We were taken through the 360/40-50 assembly line from beginning to end. Near the beginning we saw the manufacture of core stores: the graphite is first made into small rings of approximately the correct size, these are just made of loose particles and are not compressed at this stage - a puff of air disintegrates them. The rings go through a series of ovens and emerge as hard-baked single cores of internal diameter .019 inches, external diameter .030 inches. Each core is tested for its magnetic properties by having a needle inserted through it, cores which fail fall into a reject bin. Sets of 4096 cores are then collected onto a plate, with a ridged edge. The plate has 4096 holes, each large enough to hold a core, in a 64 × 64 mesh. The plate is gently vibrated and after a short time most of the 4096 holes contain a core; a girl then places into holes the few loose cores that remain. Next the 4096 cores are lifted out of the holes, automatically and simultaneously, and 64 horizontal and 64 vertical wires are run through their centres. Any diagonal (sensing) wires required are then put through by hand. The 64 × 64 core store plane is put onto a frame and the complete frame is tested for its reading and writing properties. The plane is then put into a stack with, say, 35 other planes and the whole stack is tested. If it passes it is passed on to form part of a computer memory.

In the same room there were several machines which were wiring up chassis for the 360 series. These are quite large machines; they read their wiring information from a large deck (several thousand) of cards in an adjacent card-reader. These machines place one wire on a chassis in seven seconds.

When the chassis are completed they join the core stacks in the main assembly area and the computers are put together. When complete the computers undergo a series of logical and physical checks the most taxing being the final one in which the machine is placed in a special room. The temperature in the room is 90° F but the tape decks are placed in ice. The machine is expected to function correctly for several hours.

On the assembly line we saw about 100 computers of the 360/40 and 360/50 types being put together. The production schedule is apparently very tight: over 1,000 machines are to be produced this year and over 10,000 by the end of 1966. One production target had just been met, on schedule.

We saw the new I.B.M. hyper-tape units in action. The hypertapes themselves are physically smaller than the usual 729-type reels. The hypertapes are provided in rectangular-shaped enclosed capsules which contain not only the reel of hypertape but also the take-up reel. The whole cartridge is loaded into the deck en bloc and the cartridge can only be opened by a key in the tape deck itself. Thus the cartridge is completely protected from dust whilst it is off the tape deck. When the cartridge is loaded onto the deck the key unlocks it and the reel of tape and take-up-reel slide out of the capsule into position for reading/writing. On being disengaged the reels are automatically loaded back into the capsule, the cartridge is locked and unloaded from the deck. The deck is considerably smaller (in height, not width) than a 729 deck and the amount of tape in the vacuum chambers is only about half of that on the 729. When the tape is reading, writing or unwinding the section of tape in the vacuum chambers remains of constant length - thus there is no moving up and down of these sections to be seen.

Odd fact, gleaned from Dick Larkin, IBM's turnover in 1964 was $3,319,000,000.

In all: a very impressive production line and a very worthwhile visit.

Bell Labs have three installations in New Jersey: Murray Hill, Holmdel and Whippany. The three of us visited Murray Hill on the 20th May and EBF gave two lectures on the Atlas Supervisor at Murray Hill and Holmdel on 21st May.

Most of our information was obtained from Dr. Holbrook. There are four 7094's at the three installations. A typical set-up is the one at Murray Hill:

Some tape units are switchable from the 7094 to the 1460s. (A 1460 is an upgraded 1401, being about twice as fast.) The two Holmdel machines are similar to the Murray Hill machine but Whippany have a 7044 in place of the 1460s.

On the conventional work-load (i.e. not including the on-line work to M.I.T.) Holbrook said that there is a very heavy batch-processing load which will remain even when time-sharing on the GE 636 is introduced (the 636 is due in May 1966). In March three machines at the three installations did 999 hours of useful work.

Holbrook gave us some very interesting statistics on the conventional work-load:

On turn-round he also had some interesting comments:

On growth of the installation: they began (on a large-scale) in 1958 with a 704. The rate of growth of work was initially a factor of 2.0 per year until 1961 and since then has been 1.5 per year. They started charging for machine time in January 1961. The 7094 was converted to a 7094-II about the end of 1964. The conversion took 10 days and the machine had a lot of down-time in the weeks following the conversion. Since January 1965 it has been very reliable. Theoretically the period from 7.00a.m. to 9.00a.m. is used for scheduled maintenance but this is not always necessary. For example in April there were 27 hours unscheduled maintenance and 29 hours scheduled. If there is a machine fault and the machine is not working within 4 hours a call for an expert is made to Poughkeepsie (125 miles away). On relative speeds of the 704, 7090 and 7094 Holbrook gave the following factors, relative to the 704:

They also reckon that the 7094-II = 1000 × 1620.

Bell use their own monitor, not F.M.S. Bell needed a monitor in 1958 and at that time the only one in existence was one by General Motors. By December 1959 it seemed unlikely that F.M.S would be available by July 1960 so Bell wrote a 704 monitor for the 7090 - this gave a poor performance compared to the 704 (a factor of 2.5 down), because of inefficient buffering, but they gradually improved the system. At Whippany IBSYS is used quite a lot. The IB loader requires 12 seconds or so and F.M.S. has a 25-30 seconds inter-job time.

In the three installations there are over 1,000 programmers. Bell Labs employ about 15,000 people of whom 4,500 are at Murray Hill.

There are high speed data links joining the three locations. Although something of a luxury they use speech channels working at 90KC. Rental of these lines costs them (part of Bell Telephone system!) $20/mile/month; to others it would be $45/mile/month. The work for Holmdel (which was only founded in January 1962) was done for 7 months at Murray Hill. Bell consider that this saved them (in some unexplained way) $400,000. The second machine at Holmdel was only installed in April this year.

Time is sold to customers at the rate of $670/hour for the 7094 and $85/hour for the 1460. The charge is worked out by the monitor program which reads the 7094 clock and which also estimates 1460 time from the amount of input/output.

The early tape units from IBM were too severe on the tapes so IBM modified the heads. The present decks (729-VI) are OK, they work at 90 KC. With the introduction of discs, tape usage went down significantly. An interesting comment was that no savings were observed in Fortran compilation times when discs were introduced.

On a typical day shift:

On most days at Murray Hill an accelerated service begins at 3.30p.m. For this service there are three input slots on the reception counter:

For (a) the turn-round is about 20 minutes. The system clearly relies on the honesty of users but it works very well. For (a) and (b) a program must satisfy certain conditions:

To help in all this batched input tapes are kept down to 1 or 2 jobs.

They have a SC4020 (General Dynamics) and find it extremely reliable.

On the data-preparation side, the keypunchers are high-school girls. They teach them some Fortran but they are trained to punch what is written; however they ring around anything they feel may be wrong and they telephone the programmer.

Each job is accompanied by a plastic card which has a series of holes punched in it. When the job is complete the plastic card is put into a slot in the back of a telephone and the programmer's number is automatically called, on answering he receives a message that his work is complete.

Bell will get a GE 636 (see also the report on the visit to M.I.T.). This will be at Murray Hill and will comprise:

In explanation of (f); apart from the drum, Bell will have to lose 2 of something before being out of business. This philosophy brings the Computing Lab. into line with the rest of Bell Systems Equipments.

FRAH and EBF spent some time talking to Doug McIlroy who gave them some information on the PL/1 compilers envisaged for the GE 636. A compiler is being written at Bell using the TMG system. This is a Compiler Compiler with Backus Type Transition Tables. The compiler will not be very efficient and its main purpose is to get something available as soon as possible. A final optimised compiler is being written by DIGITEC with their Compiler Building Language. There are about 12 people working on the project under Jim Dunlop and Don Moore. Amongst other things it will do optimisation of arithmetic statements over basic blocks.

One interesting fact which emerged was the different approach to the implementation of variable length strings. The IBM compilers allocate fixed amounts of store for the variable length strings (as defined by the maximum length) whereas the Bell system will have a chained area of store allowing more flexibility. This will, however, almost certainly cause some incompatibility between the two implementations of the language.
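The difference between the two storage strategies can be sketched as follows. Both classes are hypothetical illustrations rather than either compiler's actual layout, and the block size of 4 characters is an arbitrary choice.

```python
class FixedString:
    """Reserve the declared maximum length up front, whether used or not."""

    def __init__(self, max_len):
        self.max_len = max_len
        self.cells = [" "] * max_len
        self.length = 0

    def assign(self, text):
        if len(text) > self.max_len:
            raise ValueError("string exceeds declared maximum length")
        self.cells[: len(text)] = list(text)
        self.length = len(text)

    def value(self):
        return "".join(self.cells[: self.length])


BLOCK = 4  # characters per chained block (hypothetical size)


class ChainedString:
    """Hold the string as a chain of small blocks, allocated only as needed."""

    def __init__(self):
        self.head = None  # each block is a pair (chars, next_block)

    def assign(self, text):
        self.head = None
        # Build the chain back to front so each block points at its successor.
        for start in reversed(range(0, len(text), BLOCK)):
            self.head = (text[start : start + BLOCK], self.head)

    def blocks(self):
        count, node = 0, self.head
        while node is not None:
            count, node = count + 1, node[1]
        return count

    def value(self):
        parts, node = [], self.head
        while node is not None:
            parts.append(node[0])
            node = node[1]
        return "".join(parts)
```

The fixed scheme always occupies `max_len` cells and rejects anything longer; the chained scheme occupies only as many blocks as the current value needs, at the cost of following links on every access.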

Meanwhile RFC talked to R.F. (Dick) Hamming. Hamming is building up information on program libraries in computer installations. He is very critical of nearly all of them and would appreciate any information on good libraries that we might come across.

All of us later went to a demonstration of the use of the BEFLIX language for generating animated films (Ken Knowlton).

This visit was mainly of interest to RFC, who wanted to meet Morris Newman, whose work on coefficients of functions related to the partition function is in the same area as that of Dr. Atkin of the Atlas Lab. FRAH visited N.B.S. but spent most of the time with the computer personnel. EBF stayed on at Bell Labs to give his two lectures on the Atlas Supervisor and flew on to Washington in the evening.

Morris Newman is head of the Applied Mathematics Division at N.B.S.; his main interests are nevertheless clearly in number theory and allied topics. Newman's work is linked with that of J. Lehner and RFC asked Newman if Lehner worked at N.B.S. It turns out that Lehner is a Professor of Maths at nearby College Park (University of Maryland) but he works for N.B.S. one day a week.

There are two computers at N.B.S. The major one is a 7094-II; the other is called PILOT. Newman does most of his work on PILOT; time on this machine is free whereas 7094 time must be paid for. According to Newman, PILOT is about as fast as the 7094 but has a much smaller memory (2K or 3K of 28 or 56-bit words). There is a lot of time available on PILOT and Newman finds that this makes up for its deficiencies.

Newman was extremely interested in the work on Number Theory being done at the Atlas Laboratory and RFC gave him a fairly full account of it. He then told RFC about some of his own work which can be summarised in the form of problems.

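Let $A = (a_{ij})$ be an $n \times n$ matrix. Form

$$\operatorname{Per}(A) = \sum_{\sigma} \prod_{i=1}^{n} a_{i\sigma(i)},$$

the sum being taken over all permutations $\sigma$ of $1, 2, \ldots, n$. This is the permanent of $A$.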

$\operatorname{Per}(A)$ has very few properties; it is important in combinatorial analysis. Suppose that $A$ is doubly-stochastic, i.e. $a_{ij} \ge 0$ and every row and every column of $A$ sums to 1.

Then: $\operatorname{Per}(A) \le 1$ (trivially, since it is bounded by the product of the row-sums)

Also: there exists a constant $C_n$ such that

$$0 < C_n \le \operatorname{Per}(A) \qquad (\text{in fact } C_n < 1/n^n)$$

The problem is to find

$$\min \operatorname{Per}(A)$$

There is a conjecture (due to Van der Waerden) that the minimum occurs for $A = J_n$ where $J_n$ is the matrix having all its elements $= 1/n$. In this case

$$\operatorname{Per}(J_n) = n!/n^n$$

This is true if n = 3 and is probably true if n = 4 (assuming some work of Gleason's is correct).

If we assume $A$ is positive semi-definite then M. Marcus and Newman have proved that

$$\operatorname{Per}(A) \ge n!/n^n$$

and the minimum occurs for $A = J_n$.

For n = 6, thousands of random doubly-stochastic permanents have been generated and analysed on the N.B.S. computer and no counterexample to Van der Waerden's conjecture has been found.
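An experiment of this kind can be sketched in a few lines. It is only an illustration under stated assumptions: the report does not say how the N.B.S. program generated its random matrices, so the sampler here (a convex combination of random permutation matrices) is a choice made for the example, and all function names are hypothetical. The permanent is computed directly from its definition as a sum over permutations, which is practical only for small n.

```python
import random
from fractions import Fraction
from itertools import permutations
from math import factorial, prod


def permanent(a):
    """Per(A) from the definition: sum over permutations of products of entries."""
    n = len(a)
    return sum(prod(a[i][s[i]] for i in range(n)) for s in permutations(range(n)))


def j_matrix(n):
    """The matrix J_n with every element equal to 1/n (exact rationals)."""
    return [[Fraction(1, n)] * n for _ in range(n)]


def random_doubly_stochastic(n, terms=10, rng=random):
    """Assumed sampler: a random convex combination of permutation matrices."""
    weights = [rng.random() for _ in range(terms)]
    total = sum(weights)
    a = [[0.0] * n for _ in range(n)]
    for w in weights:
        perm = list(range(n))
        rng.shuffle(perm)
        for i in range(n):
            a[i][perm[i]] += w / total
    return a


def smallest_random_permanent(n, trials, seed=0):
    """Smallest permanent seen over a number of random doubly-stochastic matrices."""
    rng = random.Random(seed)
    return min(permanent(random_doubly_stochastic(n, rng=rng)) for _ in range(trials))
```

For n = 6 the conjectured minimum is 6!/6^6, roughly 0.0154, so the value returned by `smallest_random_permanent(6, 1000)` can be compared against `factorial(6) / 6**6`.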

Let A be an n × n matrix having all its elements 0 or 1. Then 0 ≤ Per(A) ≤ n!. Let K be a fixed integer. Find the matrix of smallest order having K as its permanent. Let F(K) denote the smallest order of a matrix A such that Per(A) = K; then Newman can prove that

log F(K) ~ log log K

Related to the above: for fixed n what values of Per(A) can actually occur?
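For very small n both questions can be settled by exhaustive enumeration. The sketch below is an illustration only (the function names are hypothetical and the method is plain brute force, not anything attributed to Newman): it lists the permanents of all 0-1 matrices of order n and finds the smallest order at which a given K occurs, searching up to an assumed limit `max_n`.

```python
import itertools


def permanent01(rows):
    """Permanent of a 0-1 matrix: the number of permutations hitting only ones."""
    n = len(rows)
    return sum(
        all(rows[i][sigma[i]] for i in range(n))
        for sigma in itertools.permutations(range(n))
    )


def permanent_values(n):
    """Set of permanents taken by all 2^(n*n) matrices of order n with entries 0 or 1."""
    values = set()
    for bits in itertools.product((0, 1), repeat=n * n):
        rows = [bits[i * n:(i + 1) * n] for i in range(n)]
        values.add(permanent01(rows))
    return values


def smallest_order(k, max_n=3):
    """Smallest n <= max_n for which some 0-1 matrix of order n has permanent k, else None."""
    for n in range(1, max_n + 1):
        if k in permanent_values(n):
            return n
    return None
```

For n = 3 the enumeration gives the values 0, 1, 2, 3, 4 and 6; the value 5 does not occur, since any matrix with at least one zero entry has permanent at most 4. The search space grows as 2^(n²), so this approach is hopeless beyond n = 4 or 5.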

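Let $x_1, x_2, \ldots, x_n$ be a sequence of positive numbers. Let

$$S_n = \sum_{i=1}^{n} \frac{x_i}{x_{i+1} + x_{i+2}}, \qquad x_{n+1} = x_1,\ x_{n+2} = x_2$$

Then there was a conjecture that

$$S_n \ge n/2$$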

This is known to be true for n ≤ 8 and false if n = 14, and also false for n ≥ n0 (n0 sufficiently large). Newman thinks it may be true for n ≤ 12, and false at n = 13. He bases this belief on the fact that a certain matrix of second partial derivatives associated with Sn is positive semi-definite for n ≤ 12.

Can we find a counter-example for n = 13?

Rankin has proved that

$S_n > C\,n$ for all n (with a constant C > 0.3).

What about Min Sn / n? It has been proved that this exists.
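The failure at n = 14 is easy to check by machine. The sketch below assumes that $S_n$ is the cyclic sum of $x_i/(x_{i+1} + x_{i+2})$ with indices taken mod n, and uses a 14-term sequence quoted in the later literature as a counterexample. That sequence contains zeros, so strictly it shows the failure for non-negative numbers with positive denominators; a small perturbation makes all terms positive. Exact rational arithmetic is used because the sum falls short of 7 by only a few parts in a million.

```python
from fractions import Fraction


def cyclic_sum(xs):
    """S_n = sum of x_i / (x_{i+1} + x_{i+2}), indices taken cyclically."""
    n = len(xs)
    return sum(
        Fraction(xs[i], xs[(i + 1) % n] + xs[(i + 2) % n]) for i in range(n)
    )


# Sequence quoted in later literature (not taken from the report itself).
COUNTEREXAMPLE_14 = (0, 42, 2, 42, 4, 41, 5, 39, 4, 38, 2, 38, 0, 40)

if __name__ == "__main__":
    s = cyclic_sum(COUNTEREXAMPLE_14)
    print(float(s), s < Fraction(14, 2))
```

With all terms equal the sum is exactly n/2, so the conjecture asserted that the constant sequence is a minimiser.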

Given a pair of distinct primes (p, q) show that

This has not been proved or disproved. It is believed that it may be true even when p, q are only relatively prime. This problem arose in some work on simple groups. The result is trivial for q = 2 but even for q = 3 it has not been proved.

There were some other problems as well but RFC's notes on one are incomplete and the others are probably not of general interest.

Newman was very anxious for Dr. Atkin to write-up his work on (mod 11) etc. and would be delighted if he could spend a year in the Washington area or at least visit N.B.S.

The three of us spent the weekend in Washington before travelling back to New York.

A full account of the I.F.I.P. Conference would require a report in itself; since the Proceedings of the Conference have already been published in part and will be published in full, we refer those interested to these Proceedings.

In New York, the three of us shared a suite in a hotel with Mike Powell from Harwell for $28 a night ($7 each), which even in those days was a bargain. It was a US Government rate that the Embassy had obtained for us. We managed to do a bit of sightseeing during the week.

RFC and FRAH flew to Pittsburgh from New York on the morning of Monday, 31st May. This was Memorial Day, a public holiday in the U.S.A. but fortunately there were quite a lot of people at work at C.I.T. We spent most of the day and part of 1st June with David Cooper, who used to run the Programming Group at ULICS and now holds a post (Assistant Professor) at C.I.T.

Cooper is largely responsible for giving a computer science course at C.I.T. Cooper's own lectures are based on reprints of papers by Brooker, Floyd and McCarthy; the general lines of the course were laid down by the Director of the Computing Laboratory, A. J. Perlis (who is also a Professor of Mathematics), and are described in an article by him in Comm. of the A.C.M., Vol. 7, Number 4, April 1964 (210-211). The course is rather unusual in content and is really very advanced in its approach. As Perlis himself wrote (op. cit.): The major themes covered are: (1) Structure of algorithms; (2) Structure of languages; (3) Structure of machines; (4) Structure of programs and (5) Structure of data. The course so described is officially titled: S-205 - Elementary Theory of Computation. This is open to all interested and usually about 100 students take it. The programming language is Algol. Note that no numerical analysis is included in this course.

The machine is a G-21 (originally built by Bendix, now taken over by CDC). There are 2 central processors with a total core store of about 67K (6 µsec). The backing store is a 48 million character disc. Most operations in the central processor take about 20 microseconds. They also have a 7040 but this is mainly for the physicists.

The G-21 has about 20-30 teletype consoles which share 8 channels into and out of the machine (soon to be increased to 16 channels). Cooper was just about to get his own console in his office. A few people already had consoles at home. They also have display tubes and are going to install some light pens. The machine will be replaced by a 360/67 in due course.

For the S-205 course mentioned above the G-21 does a certain amount of automatic marking of the students' exercises, comparing the answers with a list stored on the disc.

More advanced courses than S-205 are provided but students can only attend these if their marks are sufficiently good. These courses are S-206, S-207 and S-208; S-206 (for example) includes tuition in IPL-V and LISP. Courses in numerical analysis are given by the Mathematics Department (Perlis himself used to do them) but Cooper didn't say much about them since they are not the responsibility of the Computer Centre.

They now have a more advanced course: Systems in Computer Sciences, which leads to a Ph.D. Originally this course came under Newell but not any longer, although he still runs it to a large extent. The Ph.D. is really awarded in some other field (e.g. maths, psychology, engineering, etc.) with the Computer Sciences course tacked on as an important subsidiary.

On the financial side the Computer Lab. seems to be very well endowed. ARPA (Advanced Research Projects Agency) gave several million dollars to Carnegie (not just the Computer Lab) last year and now the Mellon Foundation has given $5M specifically to the Lab for Information Processing ($2M for joint maths-computer lab building, $1M for IBM 360/67, rest for research). The money was obtained mainly on the strength of Perlis and Newell's reputations.

Cooper's own work is aimed at being able to prove algorithms/programs equivalent - a form of theorem proving (Perlis in fact regards this as the only useful form of theorem proving, although few would agree with this view). Cooper's work follows along the lines set by McCarthy (Comm. ACM, Vol. 3, page 184; also published in the Proceedings of the IFIP Conference in Munich, 1962).

Among the other people we met were Feldman, who has a compiler generator and who gave an account of his work to FRAH, and Renato Itturiaga, who spent some time with RFC.

The work by Feldman is written up in his thesis Formal Semantics for Computer Oriented Languages. The Compiler Compiler accepts the Syntax in the form of Floyd Productions (Floyd 'A Descriptive Language for Symbol Manipulation,' Journal of the ACM October 1961) instead of the more normal Backus Normal form. The semantics are represented in a similar way to Brooker's Compiler Compiler with a large number of housekeeping and code producing routines supplied by the system. The work is interesting because of the use of Floyd Productions and also because of the Extended Algol Compiler written using the system.

The main additions to the Carnegie Algol Compiler are in the regions of formula manipulation (A Preliminary Sketch of Formula Algol by A. J. Perlis, R. Itturiaga, T. P. Standish), list processing and limited string processing capabilities.

Renato Itturiaga, who is at present completing his Ph.D., has been doing some interesting work on using a computer to deduce the next term of a sequence and to print out the general term. The program to do this is in IPL-V. He showed us examples where the program successfully found the general term of sequences such as

Another program (in Formula Algol: see reference above) allows him to find limits of functions

and A = infinity is allowed. Cases such as 0/0 are dealt with by the program using de L'Hospital's rule.

A number of other visitors were at C.I.T. on 31st May and we had dinner with some of them. These included Dorodnicyn of Moscow, whose main interest seemed to be partial differential equations, Glushkov of Kiev who was interested in artificial intelligence and theorem proving and Professor Ercoli of Rome who seemed to be mainly interested in programming languages.

Perlis showed us an interesting gadget during our visit. This was a typewriter device with a black box attached into which a telephone could be fitted - the earpiece and mouthpiece fitting into two cup-shaped holders. By dialling a number connected to the computer one would be automatically on-line and could use the machine exactly as in MAC. This gadget was the first of its kind at Carnegie (it had been made there); it weighed about 100 pounds in all. Two days later, at C.D.C., we saw a similar and considerably lighter device (the CDC 6060).

EBF having left us to visit Oakridge, we (RFC and FRAH) flew from Pittsburgh to Minneapolis on 1st June. The journey was memorable in that it took several hours, partly because we landed at Cleveland, Detroit and Milwaukee, but principally because we arrived in Minneapolis just in time to be caught in one of the worst storms in memory. The rain began within minutes of our arrival and during the next 24 hours several inches of rain fell, most of it within an hour of the start. Within minutes the roads were like raging torrents and in places trees seemed to have been washed out of the ground.

We spent most of 2nd June with John Lacey and his colleagues at the main CDC production plant outside Minneapolis. Lacey, who was an S.S.O. at GCHQ in 1961, is now second in command of this plant, which employs about 2,000 people. After a discussion on the administrative structure of CDC we had a talk on the 6600, illustrated by slides and examples. The interesting sections of the administrative charts of CDC's activities are given below:

The Computer Group is organised into the following Divisions:

A diagram of the organisation of the Computer Division is given below. Of the other divisions it is perhaps worth remarking that the Chippewa Labs., which are in Wisconsin, are run by Seymour Cray (Chief Designer and a Vice-President of CDC) who has a total staff of 34 people with him. The first machine of each new series goes to the Chippewa Group. The System Sciences Division is at Los Angeles, their responsibility is to produce all special software and hardware (if necessary); in particular they are producing the software for the 6000 series machines.

The structure of the Computer Division is:

Zemlin, Williams and Miller are at Palo Alto, Downing (who used to be at Oakridge) is in Minneapolis. We spoke to Downing and he told us that among other topics the people in his group were working on: Communications Science (including Time-sharing), Management Science, Physical Science and a variety of other topics including Game-Playing.

On the 6600 we learned little new. An example of programming the 6600 in machine code was worked out; it was quite instructive, although clearly designed to take advantage of the multiple registers of the machine. The example is given below (times are given in minor cycles; one minor cycle = 0.1 microseconds).

Example: A = (B × C) + (D × E)

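| Step | Issue at time | Start execution | Complete instruction | Result available |
|---|---|---|---|---|
| B to A1 | 0 | 0 | 3 | 8 |
| C to A2 | 1 | 1 | 4 | 9 |
| X0 = X1 × X2 | 2 | 9 | | 19 |
| D to A3 | 3 | 3 | 6 | 11 |
| E to A4 | 4 | 4 | 7 | 12 |
| X6 = X3 × X4 | 5 | 12 | | 22 |
| X7 = X0 + X6 | 6 | 22 | | 26 |
| A7 to A | 7 | 26 | 29 | 39 |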

Thus the total time is 39 minor cycles, or 3.9 microseconds. An instruction has to be delayed when it requires the result of a previous instruction.
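The timings in the example can be reproduced by a very simple model. The sketch below is an illustration, not a description of the real 6600 control logic: it assumes one instruction is issued per minor cycle, that an instruction starts as soon as it has been issued and all its operands are available, and that functional-unit conflicts can be ignored. The latencies (8 minor cycles for a fetch, 10 for a multiply, 4 for an add, 13 for a store) are inferred from the example itself, taking the last figure quoted for each step as the time its result becomes available; the pairing of A1 with X1, A2 with X2 and so on is likewise an assumption read off the example.

```python
# (label, kind, destination, source operands); names follow the worked example.
PROGRAM = [
    ("B to A1", "load", "X1", []),
    ("C to A2", "load", "X2", []),
    ("X0 = X1 * X2", "mul", "X0", ["X1", "X2"]),
    ("D to A3", "load", "X3", []),
    ("E to A4", "load", "X4", []),
    ("X6 = X3 * X4", "mul", "X6", ["X3", "X4"]),
    ("X7 = X0 + X6", "add", "X7", ["X0", "X6"]),
    ("A7 to A", "store", "A", ["X7"]),
]

# Minor cycles from start of execution until the result is available
# (inferred from the example, not taken from CDC documentation).
LATENCY = {"load": 8, "mul": 10, "add": 4, "store": 13}


def schedule(program, latency=LATENCY):
    """Return (label, issue, start, result_available) for each instruction."""
    ready = {}  # register or store name -> minor cycle at which its value is available
    rows = []
    for issue, (label, kind, dest, sources) in enumerate(program):
        # Start when issued, or later if an operand is still being produced.
        start = max([issue] + [ready[s] for s in sources])
        available = start + latency[kind]
        ready[dest] = available
        rows.append((label, issue, start, available))
    return rows


if __name__ == "__main__":
    for row in schedule(PROGRAM):
        print("%-14s issue %2d  start %2d  available %2d" % row)
```

Under these assumptions the model gives start times 0, 1, 9, 3, 4, 12, 22, 26 and a final time of 39 minor cycles, in agreement with the example. The two fetches for the second product are issued while the first product is still waiting for its operands, which is the point of the illustration.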

We saw the 6600 and 3000 series assembly-lines. There were working machines of each type on view. There was a very nice card punch working at 300 c.p.m. attached to the 6600.

The production lines are nothing like so automated as those at IBM, Poughkeepsie. Much more work is done manually - there seems to be a good supply of people available in Minneapolis to do low-grade wiring etc. The back-wiring of the 6600 looked a terrible mess; on the other hand the machine seemed to work satisfactorily.

We also saw the CDC 6060, a small typewriter console intended for using a machine via a telephone connection. In principle it was similar to the gadget we'd seen at Carnegie, i.e. it had a black-box which includes a kind of telephone-holding cup and the user merely dialled the number of the computer and began to type. The 6060 however had some extra keys on the typewriter which gave access to various library functions (square root, determinant, eigenvalue, log, cos, sin etc). This device was developed by the Los Angeles people (System Sciences Division).

There were some illuminating discussions about CDC managerial policy. They are proud of the fact that their over-riding policy is

Decentralisation with profit/loss responsibility pushed to the lowest practicable level.

(This is borne out in practice as is apparent in any dealings with CDC. The UK representatives for example seem to be empowered to cut their prices when bidding against rivals without consulting Minneapolis).

It also appears that around 1st June each year there is an edict to all Divisions to cut staff by 5-10% within the next three months: pruning off the dead-wood.

We were amazed to learn that CDC were unaware of the existence of the ICT 1900 series until April this year. When we told them that we found this hard to credit since (a) the 1900 series was announced publicly in September 1964 and (b) the existence of the 1900 series must be affecting their sales in the U.K., they replied that they had decided not to worry about sales in the U.K. because (1) there was a strong home computer industry, (2) there was now a 15% surcharge on imports and (3) the Government were apparently pushing a buy-British policy. It sounded sensible enough but we had the feeling of a rather sour grapes attitude behind the facade.

Whilst EBF and FRAH were otherwise employed, RFC visited Professor D. H. Lehmer at Berkeley (San Francisco), part of the University of California. They spent about 1½ hours in Lehmer's office and were then joined by Mrs Lehmer and Professor K. Mahler, now of Canberra, who taught RFC Number Theory as an undergraduate at Manchester. The rest of the day was spent in sightseeing around San Francisco Bay, lunching at Fisherman's Wharf and talking Number Theory as they went.

Lehmer was extremely interested in all our work on Number Theory at the Atlas Lab. Needless to say he had anticipated some of it, e.g. RFC's work on irrationals, which includes tests for a relationship between (3) and (5); this he had tried by hand in 1928. Of course he hadn't been able to carry out more than a few of the thousands of trials done by Atlas in one minute; his technique was identical, using the continued fraction expansions of the ratios of expressions such as

He also told RFC that G. Neubauer had run a 7094 program to test a sharper form of Mertens' Hypothesis, viz. von Sterneck's hypothesis that if

$$M(x) = \sum_{n \le x} \mu(n)$$

where μ is the Möbius function, then

$$|M(x)| < \tfrac{1}{2}\, x^{1/2}$$

(Mertens' Hypothesis is that $|M(x)| \le x^{1/2}$). By means of a (forgotten?) formula of von Sterneck's, i.e. if n > 210,

where and λ runs through all integers which are not divisible by any of 2, 3 or 5.

Neubauer was able to compute values of M(n) for small regions of n around $8 \times 10^9$ although full calculations only had to be done up to about $8 \times 10^8$. At several points near $7.77 \times 10^9$ he found values of M(n) which disprove von Sterneck's Hypothesis, e.g.

$$M(777 \times 10^7) = 49{,}122 > 0.55 \times (777 \times 10^7)^{1/2}$$

This work of Neubauer's was published in Numerische Mathematik in 1963 and Lehmer subsequently sent RFC a copy of the paper.

In view of this work it seemed best to stop the running of Mertens on Atlas until we'd had time to study the von Sterneck formula to see if any use could be made of it.

The work by Oliver Atkin on coefficients of modular forms etc. was quite new to Lehmer as also was RFC's work on the binary partition function. Mahler, as we already knew, had done some work on the binary partition function and was able to supply some further useful references to work of de Bruijn of Eindhoven. All this work however concerns the asymptotic formula for b(n) and the properties (mod $2^k$) had not been noticed before.

On his own work, Lehmer told RFC of a very recent discovery he had made on continued fractions of cubic irrationals. Two Russians (Faddeev and Delone) wrote a book on 'cubic irrationals' and computed some for roots of $x^3 = ax + b$ with |a|, |b| ≤ 9. They found nothing surprising. Lehmer extended the range of values of a, b and almost immediately discovered that for

$$x^3 = 8x + 10$$

the continued fraction for the real root has elements which exceed 160,000,000. This is astonishing. There is a theorem, due to Khinchin, which says that the elements of the continued fractions of almost all irrationals are distributed so that about 41% of them equal 1, about 17% equal 2, and so on, so that only a minute percentage will have elements > $10^6$ in the first few hundred places. Lehmer evaluated about 200 elements of each continued fraction (he obviously has very good multi-length arithmetic routines).

The discriminant of the above cubic is -4 × 163. Lehmer remembered that -163 is the most negative discriminant of a quadratic form having class number 1. Now the next most negative discriminant associated with class number 1 is -67; he therefore looked for a cubic of discriminant -4 × 67 and, having found one, expanded its root as a continued fraction. To his delight he found a similar phenomenon, i.e. occasional very large elements. He doesn't understand the connection as yet but it is extremely interesting.
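The expansion for the cubic x³ = 8x + 10 is easy to repeat with exact integer arithmetic. The sketch below is not Lehmer's program (which was in 7094 machine code with multi-length routines); it assumes the classical polynomial-transformation method: find the integer part a of the root, substitute x = a + 1/y, and repeat on the resulting polynomial in y. It relies on the polynomial having exactly one real root, which is irrational and greater than 1, as is the case here. Function names are illustrative.

```python
def evaluate(coeffs, x):
    """Horner evaluation; coefficients are listed from the highest power down."""
    value = 0
    for c in coeffs:
        value = value * x + c
    return value


def shift(coeffs, a):
    """Coefficients of p(x + a), obtained by repeated synthetic division."""
    c = list(coeffs)
    n = len(c)
    for i in range(1, n):
        for j in range(1, n - i + 1):
            c[j] += a * c[j - 1]
    return c


def floor_of_root(coeffs):
    """Integer part of the unique real root (assumed positive) of a polynomial
    with positive leading coefficient, so that p < 0 to the left of the root."""
    hi = 1
    while evaluate(coeffs, hi) < 0:  # double until the root is passed
        hi *= 2
    lo = hi // 2
    while hi - lo > 1:               # then bisect on integers
        mid = (lo + hi) // 2
        if evaluate(coeffs, mid) < 0:
            lo = mid
        else:
            hi = mid
    return lo


def continued_fraction(coeffs, count):
    """First `count` elements of the continued fraction of the real root."""
    terms = []
    p = list(coeffs)
    for _ in range(count):
        a = floor_of_root(p)
        terms.append(a)
        q = shift(p, a)                 # the root of q lies in (0, 1)
        p = [-c for c in reversed(q)]   # y^n q(1/y), sign changed so leading coeff > 0
    return terms


LEHMER_CUBIC = [1, 0, -8, -10]  # x^3 - 8x - 10

if __name__ == "__main__":
    print(continued_fraction(LEHMER_CUBIC, 40))
```

The first elements come out as 3, 3, 7, 4, 2, 30, and very large elements appear within the first few dozen places. Python's unbounded integers take the place of the multi-length routines; the coefficients grow steadily, so a few hundred elements are practical but not many thousands.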

Lehmer apparently does a lot of programming himself and writes in 7094 machine code. He gets machine time by operating the machine himself in the early hours of the morning when nobody else wants it.

We spent the weekend sightseeing in San Francisco.

We spent most of the day with Gene Golub, Assistant Professor in the Computing Centre. Stanford is an unusual University: it was founded by one of California's rich men ('robber barons' was the phrase Golub used) in memory of his son, around 1880. Originally a relatively poor University it is now very rich, mainly because of the value of its land which its charter allows it to rent but not sell.

There are three computers in the Computing Centre: a 7090, a Burroughs 5500 and a PDP-1. Programming of the 5500 is almost entirely in Algol - the machine was designed around Algol; machine code is apparently very awkward to use. There seemed to be more interest in the 5500 and PDP-1 than in the 7090, which had an almost deserted atmosphere, although it is a fine installation and works extremely well. Work on the PDP-1 struck us as being mainly playing games and not of much interest.

We saw J. McCarthy, of LISP fame, but only for a few minutes. He has a console with a line to Project MAC (3000 miles away). We also met George Forsythe and had lunch with him; Golub, like Forsythe, is mainly interested in Numerical Analysis.

The most rewarding session of the day was the discussion FRAH and EBF had with N. Wirth. FRAH's account is given below:

The work of Niklaus Wirth is interesting in two main areas. The first is in the area of language design. He has now produced two papers (A Generalization of Algol, Communications of the ACM, September 1963; EULER: A Generalization of Algol and its Formal Definition, Stanford 1965) which describe rather wide and fundamental changes to Algol which may well be incorporated into Algol Y. One of the most interesting features of Euler is the storage declaration, which is independent of type. The variable type is defined dynamically. The second area of interest is in implementation. The Syntax of Euler is defined in such a way that it is a rigorous Precedence Grammar of an extended type defined by Wirth, with syntactic phrases being closely tied to the semantic meaning of the phrase. This allows fast compilation. Here again the work of Floyd is apparent.

We spent some time with William Miller, who had fairly recently come to Stanford from Argonne. He is interested in languages for control of equipment (e.g. SLAC = Stanford Linear Accelerator).

Stanford hope to get a $10^{12}$ bit photon file from IBM in the Fall of 1967. One of these is apparently working at San Jose and there are prospects of files several orders of magnitude greater.

Following the visit to Stanford University, EBF left early the next day to visit the Western Data Processing Centre, Los Angeles. RFC and FRAH, having learned of the existence of a group of C.D.C. programmers in Palo Alto, arranged to spend a few hours with them before leaving for Los Angeles.

RFC spent some time with Bill Olle (T.W. Olle) who is developing C.D.C.'s information language package, INFOL (information oriented language). Basically INFOL will handle lists of descriptors nested to any depth. It is designed for the C.D.C. 3400 and 3600 machines. It permits a user to deal with variable-length information and contains data-editing and retrieval routines. Since the visit Olle has issued an official C.D.C. publication on INFOL:

3400, 3600 Computer Systems, INFOL. General information manual.

A copy is available in the Atlas Lab., so I will not describe the system further here.

FRAH meanwhile spent his time with Dick Bielsker, one of the advanced programming group. At C.D.C. the advanced programming group are working on a very powerful and sophisticated Compiler Compiler system. This is divided into four main parts:

It is hoped that each section will be easily changed without altering any of the others. The Syntax Tables will, it is hoped, be sufficiently flexible to allow several different types of language to be analysed although probably the first stage will be based on BNF. The Object Code Specifications are intended to contain information about the number of B-lines, accumulators and speed of operation of instructions. The Semantics will be defined in an internal language which will, it is hoped, be capable of being optimised dependent on the Object Machine characteristics. The first use of the system will be in producing the PL/I compiler for the C.D.C. 6600.

The visit to C.D.C. Palo Alto was well worthwhile and we were sorry we had so little time to spend there.

We spent the day with Mario Juncosa of the Computer Science Department and some of his colleagues. Juncosa gave us an indication of the main lines of work associated with computers carried out in Rand. These included:

We subsequently discussed JOSS (Johnniac Open Shop System) with Cliff Shaw and also Information Retrieval with Levien and Maron.

The JOSS System is well described in two Rand Publications which we have in the Atlas Lab., viz:

JOSS: Experience with an experimental computing service for users at remote typewriter consoles. By J. C. Shaw, Rand Corp., May 1965

JOSS: Examples of the use of an experimental on-line computing service. By J. C. Shaw, Rand Corp., April 1965

These are quite short and very nicely written. Interested people should read them. The JOSS System is based on the Johnniac, a valve machine of 1954 vintage. Johnniac has only 4K of core and 12K of drums. In power Shaw estimated it to be worth about half of a 704 (say about 1/30 of an Atlas). There are 10 typewriter consoles, 8 of which can be active at any one time. For further details see the reports cited above.

Levien and Maron gave us an account of their work on information retrieval. This work has been in progress for 1-2 years and is really concerned with a sub-part of I.R., viz. fact retrieval. The typical question that they would like to answer is: Who is doing what and where is he doing it?

For sources of data they use scientific publications and some non-scientific - including newspapers. The language used is a "relational information language", e.g. statements are of the type

aRb

meaning "a is in relation R to b", for example

"J. Smith is the author of Transcendental Numbers"

From the user's point of view he asks

"Who is the author of X?"

There is a possibility (not yet realised) of getting the retrieval program to deduce implicit facts from two related statements. At present the user must provide any inference rules he wishes to be used, such as:

x R1 y & y R2 z implies x R3 z
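The idea can be illustrated in a few lines. The sketch below is not the Rand system: it assumes that facts are stored as (a, R, b) triples, that a question is a triple with some positions left open, and that a user-supplied rule of the form "x R1 y & y R2 z implies x R3 z" is applied repeatedly until nothing new is deduced. The publisher name and the relation wording other than "is the author of" are made up for the example.

```python
def query(facts, a=None, r=None, b=None):
    """Return all stored triples matching the given positions (None = open)."""
    return sorted(
        (fa, fr, fb)
        for (fa, fr, fb) in facts
        if (a is None or fa == a) and (r is None or fr == r) and (b is None or fb == b)
    )


def apply_rule(facts, r1, r2, r3):
    """Close `facts` under the rule: x r1 y and y r2 z implies x r3 z."""
    derived = set(facts)
    while True:
        new = {
            (x, r3, z)
            for (x, ra, y) in derived if ra == r1
            for (y2, rb, z) in derived if rb == r2 and y2 == y
        }
        if new <= derived:  # nothing further can be deduced
            return derived
        derived |= new


FACTS = {
    ("J. Smith", "is the author of", "Transcendental Numbers"),
    ("Transcendental Numbers", "is published by", "Example Press"),  # hypothetical
}

if __name__ == "__main__":
    # "Who is the author of Transcendental Numbers?"
    print(query(FACTS, r="is the author of", b="Transcendental Numbers"))
    # Deduce an implicit fact from two related statements.
    extended = apply_rule(FACTS, "is the author of", "is published by", "publishes with")
    print(query(extended, a="J. Smith", r="publishes with"))
```

A linear scan over all triples is adequate for a demonstration; a file of the size Rand were contemplating would need the triples indexed by relation and by argument.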

No attempt has been made at Rand to take all this beyond the Research Project stage. It is based on the 7040/44 System (not Johnniac) mainly because of the associated 4½ million word disc. It is an interesting approach to fact retrieval but it is difficult to assess how successful it will be at this stage.

We spent most of the day with Ken Hebert and Charles Ray of the Computing Centre. Hebert is head of the Programming Division. The machine is a 7094 Mark I supported by a formidable array of smaller equipment:

7094 - 1301 - 7040 - 7288

The 7288 allows linking of on-line experiments in biology, seismology and physics. The computer also controls (via punched tape) an x-ray diffractometer. The largest users of data are 5 or 6 experiments in biology.

There are 4 disc modules (18 million words in all) and these have had the effect of making tape jobs quite rare. A total of about 300 jobs a day go through the system; about 85% of jobs take less than 2 minutes computing. Systems programs get special sessions on the machine and the maintenance engineers take the machine for 2 hours a day (needless to say the machine is on three shifts). Most of the programs are written in Fortran-4 or machine language.

The 7094 system is nicely organised for the users. The Share Library is held in BCD on a magnetic tape and is automatically punched out when requested. All listings of Share programs are kept available on microfilm. Punching services are provided and data or programs will be punched from any piece of paper. This seemed to be an extraordinarily generous attitude.

There are several remote consoles associated with the 7094 system; these are usually (but not always) card readers. These consoles get a very high priority; on average the output is sent back within 1½ minutes, hardly ever over 5 minutes. The group of biologists studying the nervous system have 4 remote consoles and will soon be introducing a 5th. These consoles, basically flexowriters, were made on site. We asked how reliable these flexowriters were and they said that they were quite satisfactory, partly because they had designed around them to cut down on the effects of noise.

The most astonishing fact of all is that they run the large 7094 system with only 2 operators per shift. This may be largely due to the disc having more or less supplanted the tapes and to the use of remote consoles.

Virtually all the work is for research purposes: both graduate students and faculty members. The 7094 system was introduced in December 1963; Hebert said that it wasn't yet saturated but he was sure it soon would be. Cal. Tech has a curious distribution of personnel: 600 undergraduates, 700 graduates and 400 faculty members. With such a distribution the percentage of potential machine users is very much higher than in any corresponding British University. The faculty includes some very eminent people; the theoretical physicist Feynman, and the mathematicians Marshall Hall and John Todd; in addition there are a large number of distinguished visitors. Fred Hoyle and several astronomers from Mt Palomar sat near us at lunch. The proximity of the large observatories (Mt Wilson and Mt Palomar) makes Cal. Tech a favourite computing centre for astronomers.

On the physics side there are a linear accelerator, synchrotron and bubble chamber. The physicists use the computer, but not too much as yet. A lot of their work is done at Berkeley and Brookhaven.

A Syntax Directed Compiler version of PL/1 is now running on the 7094 and will be made available on the 7040. This program was done after 2 months preliminary study by one person, mainly during a weekend. The compiler generates assembly language code for input to IBMAP. The final object code is pretty inefficient at present. Steve Crane (head Systems Programmer) thinks that the PL/1 compiler could be at least as efficient as Fortran-4 if properly written. Knuth (another Systems Programmer, also adviser on Algol to the A.C.M.) thinks that PL/1 will replace Algol, certainly in the U.S.

Apart from the 7094 complex there is a PDP-5 (in the physics lab.) and the seismologists have a Bendix G-15, which they hardly ever use.

A 360/50H is due in October 1965 and an IBM 1800 in February 1966. These machines will replace an ancient Burroughs 220. The structure of the computing group at present is

RFC spent some time with Joel Franklin. His interests lie in the fields of numerical analysis and, even more, statistics and probability including a particular interest in pseudo-random numbers. He presented RFC with several offprints of his papers. Franklin knew Hammersley and admired his work.

We found the visit to Cal. Tech particularly worthwhile. Intellectually it is a very high-powered place and the computing centre was a model of quiet efficiency. Hebert is a modest, quiet man who obviously does his job very well.

The C.D.C. System Sciences Division is a medium size group of people (~ 100) under R. E. Fagen. The structure chart is:

Clayton's group is the largest, about 60 in all. Hopper's group has 9 or 10; Dawson's is quite small, only about 5 or 6. We were not told the size of Johnson's group but it is unlikely to exceed 20.

Clayton's group has the responsibility of producing the 6600 Fortran Compiler etc. Any special routines for the 6600, 3600, 6800 etc. that are required are written in Los Angeles.

Johnson's group developed the CDC 6060 which is a typewriter/telephone device designed to permit access to a computer from any telephone. This was similar to the equipment which Perlis showed us at Carnegie on 1st June. The 6060 was considerably lighter than the one at Carnegie (about 40 lbs compared to 100 lbs) and it had a number of keys allowing calling of library routines (these appeared to be a luxury; e.g. they included automatic finding of the largest eigenvalue of a 3 x 3 matrix). For a suitably designed computer system the 6060 could provide a very cheap way of adding a large number of MAC-type consoles; one could be placed in any room which had a telephone, regardless of its physical distance from the computer. The telephone line would then only be tied up when the 6060 was in use.

RFC spent some time discussing mathematical problems with W.J. Westlake* of Reed Dawson's group. Westlake is particularly interested at present in queueing theory, mainly in relation to multiple access consoles. His work is partly theoretical (and I suspect that others have got further in this direction) and partly quite practical: his model consists of two queues of different priorities. Queue 1 gets a short run fairly often, and Queue 2 gets a somewhat longer run less often. RFC hadn't heard of this approach to the MAC-problem before. There is nothing dramatic to report at this stage; Westlake was experimenting with varying the time quanta for each queue. These quanta are of the order of a few milliseconds (~ 6 or 8). If the quantum is allowed to become very much larger (~ 50) a bottleneck soon appears. In the system Westlake was investigating it seemed that 54 milliseconds was approximately the largest tolerable value of the long-run quantum.

RFC and WJW had shared 'digs' in London in 1952/3. They had both subsequently worked at different times with Fagen, Clayton, Dawson and Hopper in Washington. Small world!

Westlake is also interested in testing random-number generators. His work is very much along the lines of MacLaren and Marsaglia of Boeing Labs., as given in their recent paper Uniform Random Number Generators, Journal A.C.M., Vol. 12, Part 1, Jan. 1965, 83-89.

Westlake is largely responsible for deciding which mathematical routines, particularly those connected with statistics, should be provided for the CO-OP (CDC Users) Library. He does very little of the actual programming himself; he provides the specification and the program is written by a member of Clayton's group.

Whilst RFC was with Westlake, EBF and FRAH talked to Neal Smith of Clayton's Group about some aspects of the 6600 software.

The original supervisor for the 6600 was the Chippewa System which was not very flexible and had a fairly crude Fortran Compiler. At present the basic CIPROS system is available and modifications and improvements are being made to this. One headache which is causing quite a lot of disagreement among users is the tape labelling system to be used. This will probably be similar to the Atlas system although operator overriding of requests may be allowed. Some users do not like this and a jump out to their own system will probably be inserted so that they may be able to take any action they like. The basic buffer size for tape transfers is 512 words. Not everyone likes this and for long records a two buffer system is being implemented using two peripheral processors. One buffer is filled while the other is being read.
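The two-buffer scheme is the familiar double-buffering technique. The sketch below only illustrates the principle and says nothing about how the 6600 software actually does it: two Python threads stand in for the two peripheral processors, and two queues (an assumption of this sketch) pass the 512-word buffers back and forth, so that one buffer can be filled while the other is being read.

```python
import queue
import threading

BUFFER_WORDS = 512  # basic buffer size for tape transfers quoted above


def double_buffered_transfer(source, buffer_words=BUFFER_WORDS):
    """Copy the words of `source` through two alternating buffers."""
    free = queue.Queue()   # buffers waiting to be filled
    full = queue.Queue()   # (buffer, word count) pairs waiting to be read
    for _ in range(2):
        free.put([None] * buffer_words)

    def fill():
        # Stands in for the processor filling buffers from the record.
        words = iter(source)
        while True:
            buf = free.get()
            count = 0
            for word in words:
                buf[count] = word
                count += 1
                if count == buffer_words:
                    break
            full.put((buf, count))
            if count < buffer_words:  # a short (or empty) buffer ends the record
                return

    filler = threading.Thread(target=fill)
    filler.start()

    received = []
    while True:
        # Stands in for the processor emptying buffers.
        buf, count = full.get()
        received.extend(buf[:count])
        free.put(buf)  # hand the buffer back for refilling
        if count < buffer_words:
            break
    filler.join()
    return received


if __name__ == "__main__":
    record = list(range(1300))  # a "long record" of 1300 words
    assert double_buffered_transfer(record) == record
```

With only one buffer the reader would have to wait for each fill to finish; with two, filling and reading overlap and the slower of the two activities sets the transfer rate.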

Fortran 66 is compatible with 3600 Fortran. It allows assembly language statements to be mixed with Fortran Statements in the same routine. At present code is generated in assembly language form and then it uses the assembler to finish the compilation. No attempt is made, at present, to bypass any of the assembler and reanalysis of identifiers and other time consuming actions take place. Later it is hoped to bypass the first stage of the assembler. At present the assembler compiles at 12000 orders per minute which forces compilation to be rather slow in the Fortran compiler. If no listing of the assembled code is asked for, it is hoped that compilation may go straight to code in the future. Code generation at present is very bad for indexed expressions. No special action is taken for constants and

A(2,3) = B(4,5,6)

requires 30 instructions at present.