Front cover shows the AVS Network Editor.

The VMS service on CCD's DEC Alpha AXP 7000 was formally introduced into production on 17 May. Articles discussing the installation and trial phases have appeared in previous issues of FLAGSHIP, where it was stated that the Alpha service would soon be extended through the addition of a further two processors. This change was successfully implemented on 25 July and the DEC 7000 (AXPRL1) machine is now running with three processors. This article describes the production service in some detail, including projected future developments.

Initially the service was provided on a single Alpha processor running OpenVMS with 512 Mbytes of main memory and 22 Gbytes of disk storage; that system has now been enhanced. The modifications consisted of firmware upgrades to the CPU and some of the device controllers so that they could run a new version of the VMS operating system. This new version (OpenVMS AXP version 1.5) supports Symmetric Multi-Processing (SMP), which is what has allowed us to add the two further processors, giving a significant boost in job throughput and general performance.

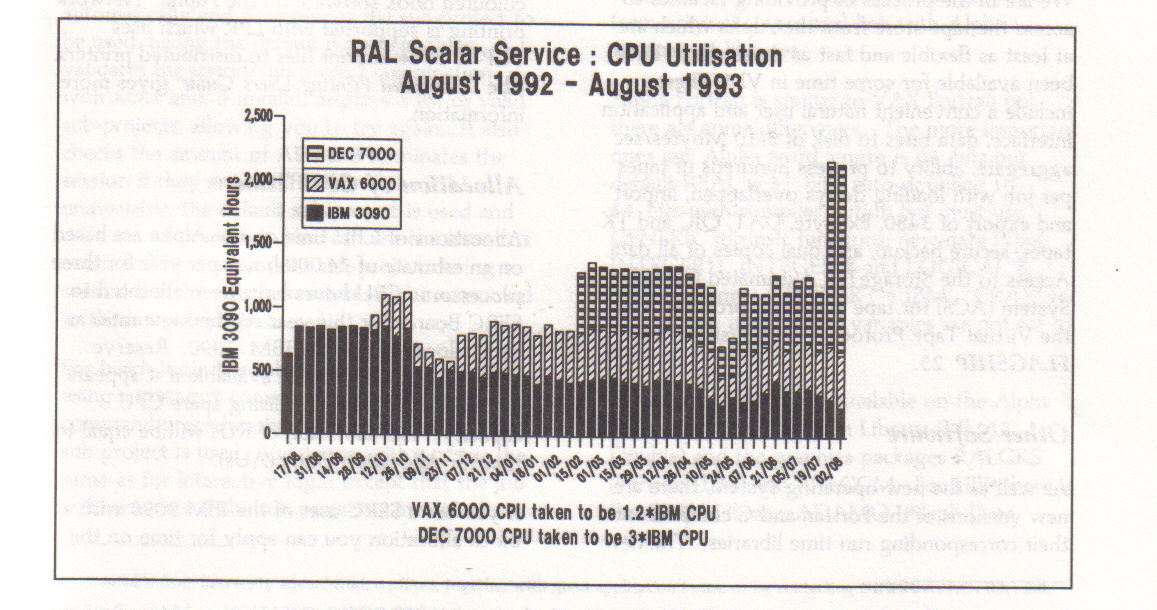

This increase in throughput is beginning to show in the accompanying chart, which plots the number of hours of processing time, in units of equivalent IBM hours, provided by the Atlas Scalar service over the last year. The chart assumes that one hour of Alpha processing time is equivalent to three hours of IBM 3090 time. This factor is a good approximation for much of the work currently being processed on the Alpha service machine, although it can be much higher for other applications.

It should be noted that the extra processors will not in themselves make any single task go faster; that will have to wait for changes such as the introduction of a parallel version of the Fortran compiler. They will, however, allow more tasks to be run concurrently.

OpenVMS AXP is based on VAX/VMS, which is a general purpose, time-sharing operating system with command level processing, user environment tailoring, text processing, symbolic debugging and run time libraries. Similarities between the two include the Digital Command Language (DCL), file structure, data formats for 32 bit INTEGER and REAL, Fortran and C compilers, batch, mail and much more. The main difference is the executable code, because the Alpha is a RISC machine. There are other differences in some data types, which are simulated rather than handled in hardware.

Some local code has been added to the batch system to make the scheduling fairer and more like that of the other central computers. Jobs are submitted to an input queue, which is checked every 60 seconds to find eligible jobs to move to the execution queue. Ordering is by sub-project or username to avoid a single user blocking the queues. Normal VMS queue display commands still apply.
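The fair ordering of batch jobs described above can be pictured with a small sketch. This is not the local code used on the Alpha; it is a minimal illustration, assuming a simple round-robin over job owners (a sub-project or username) and a hypothetical `select_eligible` function that would be called once per 60-second check.

```python
from collections import OrderedDict, deque

POLL_INTERVAL_SECONDS = 60  # from the article: the input queue is checked every 60 seconds


def select_eligible(input_queue, free_slots):
    """Pick up to free_slots jobs from input_queue, taking one job per owner
    in turn so that a single owner cannot fill the execution queue.

    input_queue is a list of (owner, job_name) tuples, oldest first.
    Returns (selected, remaining), both as lists of the same tuples.
    """
    # Group the queue positions by owner, keeping submission order per owner.
    by_owner = OrderedDict()
    for index, (owner, _name) in enumerate(input_queue):
        by_owner.setdefault(owner, deque()).append(index)

    picked = []
    # Round-robin: one job from each owner per pass until the slots run out.
    while len(picked) < free_slots and by_owner:
        for owner in list(by_owner):
            if len(picked) >= free_slots:
                break
            picked.append(by_owner[owner].popleft())
            if not by_owner[owner]:
                del by_owner[owner]

    picked_set = set(picked)
    selected = [input_queue[i] for i in picked]
    remaining = [job for i, job in enumerate(input_queue) if i not in picked_set]
    return selected, remaining
```

With three jobs queued by one owner and one by another, and two free execution slots, this sketch releases one job from each owner rather than two from the first.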

We are in the process of providing facilities to access the tape store from the Alpha which are at least as flexible and fast as those which have been available for some time in VM. These include a convenient natural user and application interface, data rates to disk of 5-10 Mbytes/sec aggregate, ability to process hundreds of tapes per job with loading delays overlapped, import and export of 3480, Exabyte, DAT, QIC and TK tapes, secure backup, and dual copies of all data. Access to the StorageTek Automated Cartridge System (ACS) for tape staging is provided via the Virtual Tape Protocol (VTP) system - see FLAGSHIP 23.

As well as the new operating system, there are new versions of the Fortran and C compilers and their corresponding run time libraries. The new compilers and libraries may in many instances produce faster code. While it is not necessary to re-compile any code to make use of the faster libraries, it is strongly recommended, where possible, that users should re-compile and re-link their code.

There are also new versions of some layered products including DECwindows Motif (X windows), a program development tool set (language-sensitive editor, code management system, etc) and VEST, a tool for migrating VAX code to Alpha code.

Other compilers and packages such as Pascal and DXML (Digital's Extended Maths Library - similar to the NAG library) are now available. At the time of writing these are being investigated and further information will be made available via NEWS and FLAGSHIP.

Network connections to the Alpha include DECnet, LAT and TCP/IP over FDDI and X.29 login over JANET. Note that there is no coloured book software on the Alpha. Network printing is supported with LPR, which uses TCP/IP to send print files to distributed printers. The Distributed Printing Users Guide [1] gives more information.

Allocations of CPU time on the Alpha are based on an estimate of 24,000 hours per year for three processors. CPU hours have been allocated to SERC Boards for this year on the same ratio as their allocations on the IBM 3090. Reserve Units (RUs) will be made available if it appears to be the best way of utilising spare CPU capacity. The allocation of RUs will be equal to that for Allocation Units (AUs).

If you are a SERC user of the IBM 3090 with a block allocation you can apply for time on the Alpha simply by giving your userid and preferred sub-project to Resource Management. If you are a SERC grant holder you can apply for up to 5 hours pump-priming time on the Alpha. You can also transfer all or part of your IBM allocation to the Alpha on the basis that 3 IBM CPU hours equal 1 Alpha CPU hour. If you are a prospective user with no current grant you should use the AL54 form [2] to apply for either a grant or pump-priming time.
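The transfer arithmetic is simple enough to sketch. The function names below are hypothetical and only illustrate the stated exchange rate of 3 IBM CPU hours to 1 Alpha CPU hour; they are not part of any Resource Management tool.

```python
IBM_HOURS_PER_ALPHA_HOUR = 3  # exchange rate quoted in the article


def ibm_to_alpha_hours(ibm_hours):
    """Convert IBM 3090 CPU hours into the equivalent Alpha CPU hours."""
    return ibm_hours / IBM_HOURS_PER_ALPHA_HOUR


def split_allocation(ibm_allocation, ibm_hours_to_transfer):
    """Move part (or all) of an IBM allocation to the Alpha.

    Returns (ibm_hours_left, alpha_hours_gained).
    """
    if not 0 <= ibm_hours_to_transfer <= ibm_allocation:
        raise ValueError("cannot transfer more than the IBM allocation")
    ibm_left = ibm_allocation - ibm_hours_to_transfer
    return ibm_left, ibm_to_alpha_hours(ibm_hours_to_transfer)
```

For example, transferring 30 hours out of a 90-hour IBM allocation would leave 60 IBM hours and give 10 Alpha hours.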

Up to 10% of the CPU time can be made available for commercial use and is charged at a rate of £75 per hour. Potential customers should contact the Head of Marketing Services.

Accounting on the Alpha makes use of CCD's central accounting system (ACCT) on the IBM 3090. VMS (and OpenVMS) normally allows only one sub-project per userid. We have written a new command, NEWACCT, to allow multiple sub-projects per userid as on the Cray and IBM.

At login, the NEWACCT command is invoked automatically and prompts for the sub-project to be used, taking the default if none is given. It validates the userid/sub-project combination with ACCT and, if invalid, displays a list of valid sub-projects, allowing you to try again. It also checks the number of AUs remaining and terminates the session if they are insufficient. If the IBM is unavailable, the default sub-project is used and AUs are not checked. You can use the NEWACCT command to change your sub-project during a session but the whole session will be accounted to the last sub-project used.

For batch login the sub-project can be specified using a NEWACCT command as the first line of the command procedure but otherwise the default sub-project is used. Validation with ACCT is the same as for interactive login except that the job will terminate if the sub-project is invalid or there are insufficient AUs and, if ACCT is not available, will keep re-trying until it is.
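The interactive and batch rules described above differ mainly in what happens when validation fails or ACCT cannot be reached. The sketch below lays the decisions side by side. It is an illustration only: `check_login`, `AcctUnavailable` and the `lookup` callback are hypothetical names, and treating a balance of zero or less as "insufficient" is an assumption, since the article does not give the threshold.

```python
import time


class AcctUnavailable(Exception):
    """Raised by the (hypothetical) lookup when ACCT on the IBM cannot be reached."""


def check_login(userid, requested, default, lookup, batch=False,
                retry_delay=60.0, sleep=time.sleep):
    """Decide what happens at login.

    lookup(userid) is assumed to return a dict mapping each valid
    sub-project to its remaining AUs, or to raise AcctUnavailable.
    Returns (outcome, detail) where outcome is one of
    "proceed", "proceed_unchecked", "reprompt" or "terminate".
    For "reprompt", detail is the list of valid sub-projects;
    otherwise it is the sub-project concerned.
    """
    chosen = requested or default
    while True:
        try:
            balances = lookup(userid)
            break
        except AcctUnavailable:
            if not batch:
                # Interactive: fall back to the default, AUs not checked.
                return ("proceed_unchecked", default)
            # Batch: keep re-trying until ACCT is available again.
            sleep(retry_delay)

    if chosen not in balances:
        if batch:
            return ("terminate", chosen)
        # Interactive: show the valid sub-projects and let the user try again.
        return ("reprompt", sorted(balances))
    if balances[chosen] <= 0:  # assumption: "insufficient" means none left
        return ("terminate", chosen)
    return ("proceed", chosen)
```

Passing `sleep` as a parameter keeps the retry loop easy to exercise without waiting; a real implementation would presumably also log each retry.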

To query usage, ACCT commands can be issued using the SYSREQ interface. A file giving weekly usage is stored on the Alpha. IBM users can query Alpha usage with the On-line Accounting Table System (OATS).

By default, you will be allocated 10 Mbytes of file-space when you register to use the Alpha. You can apply for more by contacting the Data Manager but disk space is limited at present with a total of 22 Gbytes. A further 40 Gbytes will be installed in the near future. A disk quota system, to allow category representatives themselves to manage disk space, is being developed.

A general strategy for porting software to the Alpha is to start by making an inventory of the components of your application, such as the source and languages used, external routines (eg libraries) and input data files, especially binary ones. Fortran or C should port very easily. Rebuild your code from the original source and link with the Alpha versions of libraries.

Alpha Fortran is similar to VAX Fortran but there are some differences. The more important ones are: the Alpha word length is 64 bits but default REAL is 32 bits; REAL can be auto-doubled to 64 bits with a compiler option, but the code must then use generic intrinsic functions; there are new data types INTEGER*1, INTEGER*8 and LOGICAL*8; there are extra keywords CONVERT='IBM' and CONVERT='CRAY' for binary files; uninitialised variables are not set to zero.
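To see why binary files written on the IBM need a conversion option at all, it helps to look at how a single-precision number is laid out there. The sketch below decodes the IBM hexadecimal floating-point format (sign bit, 7-bit excess-64 exponent to base 16, 24-bit fraction). It is only an illustration of the format difference, not a description of how the Fortran run time library performs the conversion, and `ibm32_to_float` is a hypothetical helper name.

```python
import struct


def ibm32_to_float(raw):
    """Decode 4 big-endian bytes holding an IBM single-precision
    hexadecimal floating-point number into a Python float."""
    (word,) = struct.unpack(">I", raw)
    sign = -1.0 if word >> 31 else 1.0
    exponent = (word >> 24) & 0x7F   # excess-64 exponent, base 16
    fraction = word & 0x00FFFFFF     # 24-bit fraction, no hidden bit
    return sign * (fraction / float(1 << 24)) * 16.0 ** (exponent - 64)
```

The bytes `42 64 00 00` decode to 100.0 in this format, whereas the same four bytes interpreted as an IEEE single-precision number would give 57.0, so reading such a file without conversion silently produces wrong values.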

Libraries and packages available on the Alpha include the NAG Fortran Library (Mk 15 Double) and the graphics packages RALGKS (version 1.38) and RALCGM. For HEP there is the CERN Library, ADAMO (Aleph Data Model), PATCHY and PAWX11. Other packages such as the Harwell Subroutine Library will be installed but we would like to know what your requirements are.

The Applications and User Support Group has VMS expertise and will answer queries on the service or its facilities and give advice on any aspect of porting software. Contact US by e-mail to US@UK.AC.RL.IB or AXPRL1::us or via Service Line (0235 44 6389).

Documentation includes VMS on-line HELP, DECwindows Bookreader, Alpha user notes and supplements, an Introduction to VMS, NEWS(CCDVMS on the IBM and Alpha News. There are two computer based training (CBT) courses which can be made available on CCD's VAX 6000. One is Introduction to VMS and the other is Introduction to the editor (EVE). There are no CBT courses available yet on the Alpha. We will be giving a series of one-day courses at the Atlas Centre for people converting from VM/CMS to VMS (see separate article for details).

We have another Alpha 7000 with a single processor which is being used for test purposes. Later this year it is expected that this will be put into production service. The two machines can then be configured as 2+2 or 3+1 CPUs and finally clustered together. Each machine can take up to 6 CPUs so a cluster of 12 CPUs is a future possibility.

As well as OpenVMS, DEC has committed to providing OSF/1 (Unix) and Windows NT operating systems on all its Alpha platforms. We will certainly be looking at OSF/1 later this year with the aim of providing a central Unix service. OSF/1 supports 64 bit data types and addressing, System V shared libraries, X.Desktop, Motif, file-systems up to 32 Gbytes and physical memory up to 14 Gbytes. It is expected to be given C2 security status and will include the Distributed Computing Environment (DCE).

The Windows NT operating system is potentially very attractive and we are already making preliminary investigations. It may be used on a desk-top system in single user mode, or in a distributed environment in client/server mode where multiple users can make concurrent use of powerful server facilities.

So the future operating system on the Alpha machines could be OpenVMS, OSF/1, Windows NT or some combination of these running on different processors. We intend to provide an environment which is as flexible as possible in both hardware and software so that it can grow and change to keep abreast of all your requirements.

As Tim Pett mentions in the article on CCD Alpha services, Central Computing Department at RAL is planning to offer a central Unix service in response to user demand. Since we started planning this issue of FLAGSHIP there has been a lot of progress with this; by the time you read this we hope to be offering trial user access to such a service. This service will run on a small farm of five DEC 3000 model 400 Alpha workstations running the OSF/1 operating system as implemented by Digital. The machines will each have 2 Gigabytes of disk and 64 Megabytes of memory and be interconnected by FDDI. They will share access to a larger amount of disk space and will have access to tapes in the StorageTek ACS.

I hope that by the issue of the next FLAGSHIP there will be a full production service in place. I am publicising the service now so that you can plan your work over the next year. Anyone who would like access to the trial service should contact User Support (us@UK.AC.RL.IB).

The Supercomputing Management Committee has approved the purchase of a 256 Mword (2 Gbyte) Solid State Device (SSD) for the Atlas Cray Y-MP8I/8128. The SSD can be used in several different ways to enhance the performance and versatility of the Y-MP.
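As a quick check of the two quoted sizes, assuming the Cray's 64-bit (8-byte) word and binary prefixes (1 Gbyte = 1024 Mbyte):

$$256\ \text{Mword} \times 8\ \frac{\text{byte}}{\text{word}} = 2048\ \text{Mbyte} = 2\ \text{Gbyte}$$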

Cray's flexible file system enables the user to control the I/O buffers in an optimised way to follow a known pattern of access.

The SSD should arrive around October 1993; the details of its use will be discussed at the next Atlas Cray User Meeting (see next article).

AVS has developed in many ways since the last article about it in FLAGSHIP: RELEASE 5.0 has arrived, LICENSING arrangements have changed and DOCUMENTATION has been updated. Usage of AVS has grown (with several UK universities subscribing to the CHEST deal) and the Atlas Video Facility is now processing AVS files. This article discusses all these developments and also provides a reminder about the AVS Repository.

AVS (the Advanced Visualization System) is a system that allows users to visualize data; it consists of a very large number of modules (to which users can add others) and a visual editor for constructing networks for processing the data. It is therefore highly suitable for applications where you do not know in advance the best way of looking at (visualizing) the data. AVS runs on many Unix systems, using either X Windows or the host graphics system for the final display. It is also being made available on DEC VMS systems (VAX VMS and OpenVMS).

AVS was chosen after an evaluation by the Advisory Group on Computer Graphics (AGOCG) and a CHEST deal covering AVS for the UK academic community was organized late in 1992.

SERC has now received AVS release 5.0 for the following platforms:

Appropriate media for each platform should already have been sent to each SERC location for them to install AVS release 5.0 on their machines.

AVS Inc. are in the process of producing a release of AVS for all DEC platforms (VAX VMS, OpenVMS, Ultrix and OSF/1) but these are not yet publicly available.

AVS release 5.0 contains many new modules and enhancements to existing modules. The new modules are largely for Image Processing, Volume Rendering and UCD (Unstructured Cell Data) handling. The Image Processing modules contain a significant portion of the SunVision Image Processing Library. Enhanced modules include the Geometry Viewer, the Image Viewer, and UCD functions. Bugs in release 4 have also been fixed.

Full details of the changes are contained in the AVS 5 Update Manual, available from Manchester Computing Centre (see below).

List of Contacts for Network Licences

| Community | Contact | E-mail | Phone |
|---|---|---|---|
| RAL Informatics | Janet Haswell | jh@inf.rl | RAL ext 5806 |
| Daresbury | Royd Whittington | R.Whittington@dl | DL ext 3226 |
| ROE | Malcolm Stewart | jms@starlink.roe | ROE ext 8375 |
| Rest of SERC | Roy Platon | rtp@ib.rl | RAL ext 5764 |

List of AVS Manuals available from MCC

| Code | Name | Price (£) |
|---|---|---|
| CST 900 | Full set of manuals | 56.25 |
| CST 907 | AVS Developer's Guide | 6.00 |
| CST 908 | AVS Module Reference | 8.25 |
| CST 909 | AVS Tutorial Guide | 2.50 |
| CST 910 | AVS Applications Guide | 2.50 |
| CST 911 | AVS Chemistry User's Guide | 3.75 |
| CST 912 | AVS User's Guide | 7.00 |
| CST 913 | Animating AVS Data Visualizations | 2.50 |
| CST 916 | AVS 5 Update Manual | 2.50 |

Plus platform specific Installation/Release notes: Vistra (CST 901), HP 9000/7xx (CST 902), Stardent 1000/2000 (CST 903), IBM RISC System/6000 (CST 904), SGI 4D/xxx (CST 905), SUN SPARCstations (CST 906), DG AViiON (CST 915), all of which are £2.50 apart from CST 904 which is £3.75, and a manual specific to the Cray (CST 914, £2.50).

AVS is an extensible system which allows the addition of both standard and user-written modules into the visualization pipeline. A public repository of modules is maintained by the International AVS Center, North Carolina and is mirrored by the Manchester Computing Centre. In May 1993 the repository contained more than 600 modules. These are available by anonymous FTP from avs.ncsc.org and ftp.mcc.ac.uk respectively. There is no point in copying all the repository modules to a RAL file system, but if you find particular modules which you think are generally useful, we will be pleased to make them available on multi-user machines such as the Cray, rather than having duplicate copies. Please contact Roy Platon via rtp@ib.rl.ac.uk.

Since the initial distribution of AVS to SERC sites, there have been some changes in the way AVS is licensed to users. The changes make it much simpler for users of most Unix systems. This section describes the new arrangements for SERC users; University users should contact their local Computer Centre for information about their systems.

When AVS was first installed on SERC sites, individual licences were issued for each machine that wanted to use it. This created quite an administrative burden (over 200 machines had to be registered and each required an individual key). This was seen to be undesirable and so rather than using these so-called node-locked licences, SERC has switched to network licence managers wherever possible.

A number of machines still use node-locked licences, including the RAL Cray Y-MP, Stardent and various beta-test VAX VMS systems.

If you want to use AVS, contact the person in the first table on page 12 who supports your community and you will be told how to access your local licence manager. This manager runs permanently and grants permission to users as they start up AVS as long as the predefined limit (of simultaneous users) would not be exceeded.
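The behaviour of a network licence manager of this kind can be pictured as a counter with a ceiling. The sketch below is not the AVS licence manager or its interface; `LicenceManager` and its methods are hypothetical, and releasing a licence when a session ends is an assumption that the article does not spell out.

```python
class LicenceManager:
    """Grant licences to sessions up to a predefined number of simultaneous users."""

    def __init__(self, max_users):
        if max_users < 1:
            raise ValueError("max_users must be at least 1")
        self.max_users = max_users
        self._active = set()

    def request(self, session_id):
        """Return True if the session may start AVS, False if the limit is reached."""
        if session_id in self._active:
            return True  # this session already holds a licence
        if len(self._active) >= self.max_users:
            return False
        self._active.add(session_id)
        return True

    def release(self, session_id):
        """Give the licence back when the session ends (assumed behaviour)."""
        self._active.discard(session_id)

    @property
    def in_use(self):
        return len(self._active)
```

With a limit of two, a third simultaneous request is refused until one of the first two sessions releases its licence.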

The SERC subscription to CHEST for AVS includes the licensing of the AVS Animator sub-system. This contains a number of facilities that simplify the production of quite complicated scripts, orchestrating the movement of the virtual camera and other parameters. It also contains facilities that make it easy to produce files for the Atlas Video Facility.

The facility runs AVS release 5 and AVS Animator, and has developed improved versions of the modules in the AVS Repository (see above) that convert from AVS frame sequence files to Abekas video format. It is thus a simple task to take an AVS frame sequence file and make a video from it.

Users of AVS who want to use the Atlas Video Facility should still contact Chris Osland (RAL ext 6565, cdo@ib.rl.ac.uk) but all they now have to do is use the Write Frame Sequence module in the Animation menu to produce a ".seq" file, and transfer that to the Atlas Video Facility.

AVS is extensively documented. One complete set of documentation is provided for each platform with the initial media distribution. With AVS release 5 new manuals are available from Manchester Computing Centre (MCC). The new manuals, their codes and prices, are given in the second table on page 12.

Manuals should be ordered directly from the following address; all payments should be to "University of Manchester".

Documentation Distribution

AGOCG's Visualization Coordinator (Steve Larkin) has been responsible for several courses on AVS and you will find an invitation to an Introductory AVS Course on page 19.

The world-wide interest in AVS is reflected in a newsgroup (comp.graphics.avs) and an anonymous FTP site for the repository of contributed modules. AGOCG's Visualization Coordinator, Steve Larkin at MCC, is organizing AVS introductory courses.

Staff at SERC sites should contact Roy Platon (rtp@ib.rl.ac.uk) or their local expert (see above) for further information on the distribution of AVS; University users should contact their local Computer Centre. Steve Larkin (s.Larkin@mcc.ac.uk) is the person to contact for further information on courses.