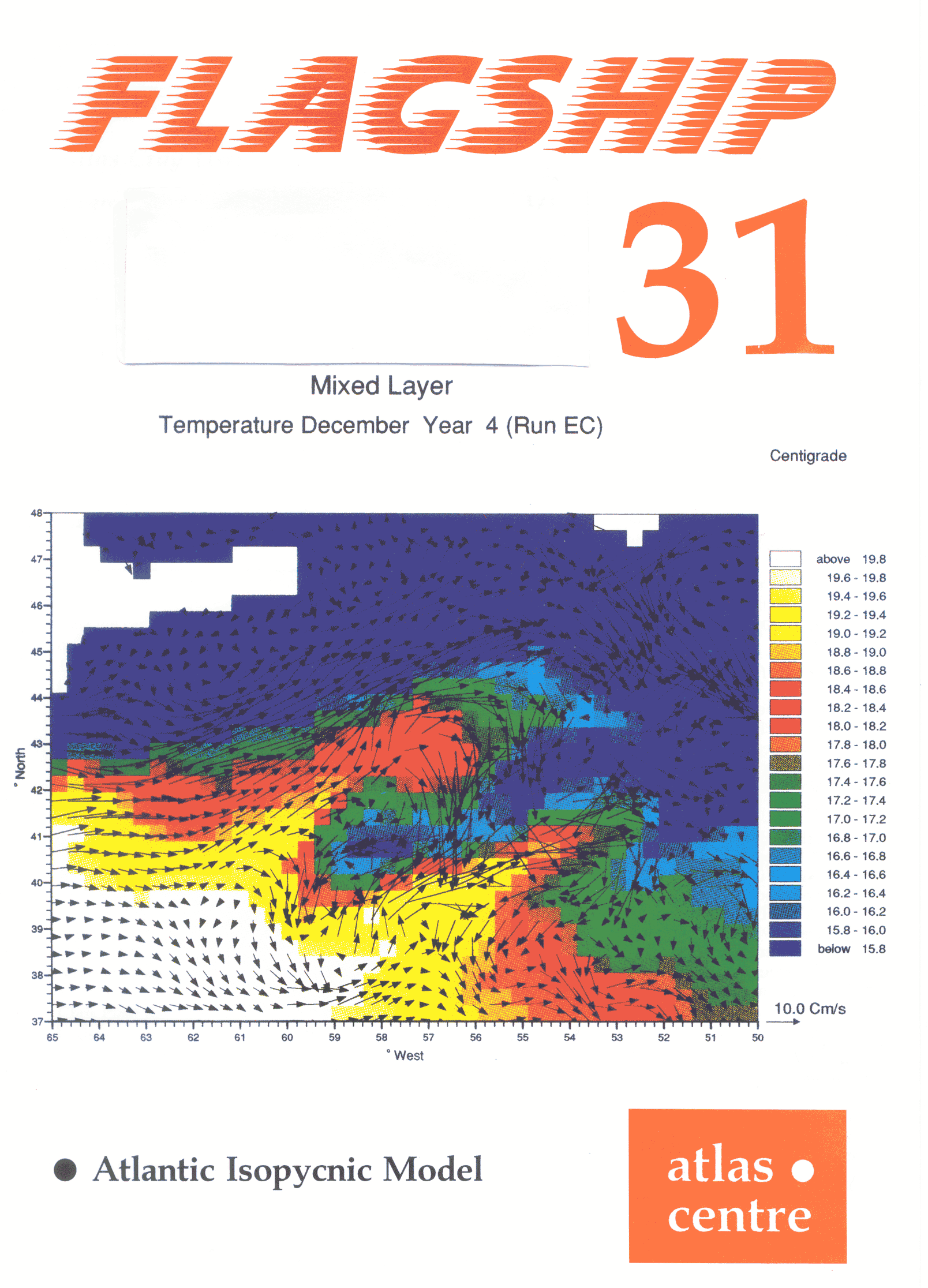

Front cover shows the Atlantic Isopycnic Model (AIM). It reveals the sea-surface temperature and current structure in one of the eddy-resolving runs just south of Nova Scotia.

In his progress report, Roger Evans began by pointing out that the last six months had seen an extremely reliable Cray service, with the only major change being the installation and commissioning of the 256 Mword Solid-state Storage Device (SSD). The Cray Y-MP8 hardware had been exceptionally reliable; the only problem had been associated with powering up the disk drives after the air conditioning shutdown. On the software side, the very few problems had been associated with "outside" events, such as preventive maintenance periods and the installation of the SSD. The moral was clearly that if the Y-MP is left quietly alone it is incredibly reliable!

Since the introduction of the SSD, work through the machine had increased by 5-10% and the machine capacity, as defined to the SERC management committees, had been increased to 15000 hours per quarter. Job turnaround had inevitably worsened as the average loading on the machine had increased; users' views on the acceptability of the turnaround, and other matters raised during the morning presentations, were sought during the Round Table Discussion later in the meeting.

The "User Clinic" for tuning and improving code performance, held last November, had been fully subscribed and one user had seen a twenty times improvement in code performance. Users' views on the format of future clinics were invited.

Chris Plant described important improvements in the Cray service for users of tape data. In the past, users wanting access to data on tapes had been subject to the bottleneck of a small number of real tape drives. The situation is exacerbated by Cray's NQS batch job management software which reserves a tape drive for a whole job, even though the drive may only be used briefly at the start or end of the job.

Virtual Tape Protocol (VTP) has been developed at the Atlas Centre as a means of providing tape support to workstation users, but can also be used to access "virtual tapes" across any computer network supporting TCP/IP protocols. A virtual tape may or may not correspond to a real tape. While a virtual tape is "mounted" (open), the data actually resides on disks, allowing many concurrent accesses, and the system simulates the "tape" interface to the application. Off-line data is loaded onto the disks before use from a variety of manual and automatic tape devices, including IBM 3494 and STK 4400 tape robots.

VTP uses the tape command to access a virtual tape. The data may be accessed either through UNIX pipes or through Fortran or C input/output routines. Since VTP does not access tapes directly there is no restriction on the number of concurrent jobs and VTP may even be used interactively.

Chris described some examples of use of the tape command and asked how soon the older tpmnt command for real tapes could be withdrawn from general use.

In the summer of 1993, the National Audit Office made routine investigations into some of RAL's services, including the Cray Supercomputing service. John Gordon presented some of those findings to the User Meeting for discussion (see FLAGSHIP 30). The NAO report was generally favourable, but included some criticisms which had a common theme of poor information flow to some users. John reiterated the Atlas Centre's use of news files and FLAGSHIP to advertise changes, training courses and other events, and reminded users that there is a formal escalation of complaints if people feel they are not getting a satisfactory service. The escalation route is formally:

but users are, in general, free to contact any of the above who they feel to be appropriate. Cray on-line documentation was also criticised. We should point out that the X windows utility xman provides a more friendly interface to the UNIX man pages than does the character based man command. Cray provides a character-based documentation browser called docview which is useful for keyword searches through many of the Cray manuals. On-line documentation for application packages is harder for us to implement since few vendors supply us with machine readable manuals. The environment variable $DOC on all Atlas Unix services points to our documentation directories: as well as locally-written documentation you will find pointers to example jobs for most of our supported application packages. Looking to the future, UNICOS 8.0 will feature a much improved interface to Cray documentation and we will continue to enhance locally-written documentation.

The concept of a "front end" service to Cray supercomputers dates back to the 1970s when Crays had limited operating system support, no networking, and little disk space for user filestore. The requirement for front end facilities needs to be reconsidered, given that we now have a fully functional Unix operating system and a large file store managed by Cray's data migration software.

Roger Evans described the support costs of the three front end machines provided at the Atlas Centre: the VM/CMS front end on the IBM 3090 has a significant manpower cost because Cray's VM/RQS software has proved very unreliable; the VAX/VMS front end incurs little manpower cost and modest maintenance charges; the RS6000 Unix front end consumes small amounts of money and modest manpower resources. Most of the Cray batch workload is submitted from interactive UNICOS sessions and relatively little from any of the front end machines.

Given that there are now few universities with their own VM/CMS systems, there seems little justification for the ongoing support costs of the Cray front end from VM/CMS, so the Atlas Centre wish to terminate this route to the Cray at the end of December 1994. The VAX/VMS service can continue as long as its costs remain low. Users were asked what features they would find valuable in a Unix front end, to ensure that the most appropriate service could be provided.

Yanli Jia from the NERC James Rennell Centre at Southampton described some of the aspects of the AIM project on the Atlas Y-MP8. This project is described in a separate article in this issue.

Brian Davies gave a short presentation on news from recent meetings of the SMC. The main news was the purchase of the new Cray T3D and the decision to site it at Edinburgh. A bid to house the machine had been submitted jointly by RAL and Daresbury. This had not been successful and the Laboratories were due to be debriefed on the site selection, but this had not happened yet. The other main activity at SMC was the launching of the High Performance Computing Initiative which was concerned with the provision of support for parallel computing. A call for bids to become user consortia had just been issued by the SMC secretariat and this would be followed by a call for bids to become support centres.

The SMC as such had now ceased to exist. It was expected that a new committee would be formed under the aegis of the EPSRC in the new Research Council structure but its terms of reference and membership were not yet known. The issue of charging, addressed by two studies by Coopers and Lybrand, had not been finally resolved and, following the demise of the ABRC, had been left for consideration by the Office of Science and Technology.

Finally, the SMC had agreed that a Town Meeting on high performance computing would be held in July.

The National Supercomputing Facilities Information Coordinator, Alison Wall, introduced herself to the meeting and described her new role. See her article in FLAGSHIP 30 for details.

A general discussion revealed that most users were very happy with the Atlas Cray service in terms of reliability and job turnaround, but there remained specific concerns over the network reliability. Users were concerned that the Y-MP8 might become overloaded to the point where job turnaround became poor, and asked that representations be made to the appropriate management bodies in the new structures.

Since many users were now used to having powerful workstations available locally, it was felt that an increase in the memory limit for interactive jobs to around 10 Mwords would make the Cray appear even more like an ultra-powerful workstation.

The Atlas Centre should continue to publicise the ways in which the SSD could be used to advantage, and should continue its other efforts to help users improve code performance. In particular, the next User Meeting will be followed by a User Clinic, and publicity will be given to the fact that users are always welcome to visit the Atlas Centre for a few days for detailed consultation on code tuning.

In reply to Chris's question about virtual tapes, users were happy that tpmnt be withdrawn over the summer, certainly before the next Cray User Meeting.

No strong views on front end machine policy were expressed by those present, but there were a few groups who would be inconvenienced by removal of the VM/RQS front end software. The general view was that we should go ahead with withdrawal of VM/RQS at the end of 1994, provided these users were given help on alternative routes to the Cray. Users can continue to use VM/CMS for file storage and editing and submit jobs to the Cray using FTP until VM/CMS is eventually withdrawn at some time in the future. It was pointed out that VM was also used for accounting queries and RAL should provide alternatives to this on the same time-scale.

The NAO report had shown the need for easier and more complete communications with users by e-mail; we would continue to press users for information to make the e-mail distribution list more complete. A suggestion that users should have their IDs disabled until they supplied a valid e-mail address was thought to be not necessary - yet!

Your comments on any of the issues raised will be most welcome. The next User Meeting will be held at the Royal Institution in London to see if a change of venue attracts a different or larger group of participants.

The Atlantic Ocean is important because of its influence on the world's climate. Warm near-surface waters flow northwards and release their heat to the atmosphere in the winter time at high northern latitudes. This results in sinking and the production of deep cold return flows to the south. The whole process has been dubbed the Atlantic Conveyor Belt and is responsible for a large heat exchange to the atmosphere. It is therefore important to understand the processes which contribute to the heat transport and circulation in the Atlantic.

Isopycnic-coordinate models, consisting of a set of layers of constant density but varying thickness, are now emerging as a useful tool for the investigation of these ocean processes. These models are in contrast to the more usual gridpoint models which have a set of levels at fixed positions in the vertical at which the model variables are known, and which have been in use for many years. Isopycnic models may possess certain advantages over the gridpoint models, but so far most ocean modelling studies and climate prediction models have been based around the gridpoint models.

It is therefore important to intercompare these two model types to assess their relative merits. With this in mind, the James Rennell Centre for Ocean Circulation has implemented the Miami isopycnic model to describe the Atlantic Ocean from about 15°S to 80°N. A 30-year integration has been carried out at a resolution of about 1° horizontally (latitude-longitude), and this run has been analysed in detail. As a collaborative exercise, the Hadley Centre for Climate Prediction and Research (part of the UK Meteorological Office) has carried out a parallel integration with a gridpoint model, and significant differences are now emerging. In particular, the overall amount of heat carried northwards differs by more than 30% between the two models, and there are significant differences in the outflows of deep water masses across the Greenland-Iceland-Faeroes rise, and in the path of the North Atlantic Current. These discrepancies are likely to cause large differences in climate models which may be integrated for long periods, but it is still too early to say precisely what causes the differences, although further study is in progress.

Further analysis of output from the 1° resolution isopycnic model has revealed significant insights into the interdecadal variability of the ventilated portion of the North Atlantic subtropical gyre (i.e. the top 800 m or so of the water column between 10-40°N). In particular, we have been able to explain changes reported to have occurred in recent decades in the real world (such as cooling on constant depth levels but warming on constant density surfaces) as having been caused by the drawdown (subduction) of cooler water masses from the mixed layer. In addition the analysis of various tracers confirms that water masses in the upper ocean are indeed formed principally by the subduction of water masses from the mixed layer rather than by vertical mixing and diffusion, and heat budget studies in the Labrador Sea area have revealed the relative importance of oceanic advection as compared with air-sea transfers. Finally, further sensitivity studies are showing that historical changes in the composition of the water masses in the Sargasso Sea are likely to have resulted from cold-air outbreaks from the North American continent in severe winters.

The model is now also being run at a higher horizontal resolution, about 1/3°, which is sufficient to allow eddies to form. Eddies are the weather systems of the ocean, and usually occur on scales of 20-200 km or thereabouts. As such, they are too small to be adequately described by typical climate models, which for reasons of computer size and speed, usually have a grid spacing of 1° or 2° horizontally (1° latitude equals 111 km). Nevertheless, it may be that these eddies contribute significantly to the basin-averaged northward heat transport and also to the interactions and transfers between the ocean surface and its interior. It is therefore necessary to perform model integrations containing eddies so that these effects can be studied and, if found to be important, so that parameterisations can be developed for inclusion in the climate models. Several initial tests with the Miami code have now been undertaken and work has so far concentrated on varying the available diffusion parameters to obtain a realistic eddy field. Although further tests are still required, it has already been possible to obtain realistic eddies. For example, as in the real world, cyclonic (anti-clockwise) cold-core eddies have been observed south of the Gulf Stream, with corresponding anticyclonic warm-core features to the north. One example is shown in the figure on the cover which reveals the sea-surface temperature and current structure in one of the eddy-resolving runs just south of Nova Scotia (the cold-core eddy occurs at 58°W, 41°N, the warm eddy at 57°W, 43°N). The net effect of these eddies must be to increase the northward heat transport, at least locally across the Gulf Stream, since cold water from north of the current is being drawn southwards, to form the cold-core feature, and conversely for the warm feature. 
Collaboration is now beginning with similar modelling groups in Germany and France to intercompare three different model types at eddy resolution, in order to assess their relative merits.
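The resolution argument above can be checked with a little arithmetic. The following is a minimal sketch, assuming a regular latitude-longitude grid on a spherical Earth and using the 111 km per degree figure quoted in the text; the function name `grid_spacing_km` is illustrative and not part of the Miami code.

```python
import math

KM_PER_DEG_LAT = 111.0  # figure quoted in the article


def grid_spacing_km(resolution_deg, latitude_deg):
    """Return (north-south, east-west) grid spacing in km.

    Assumes a regular lat-lon grid on a sphere, so the east-west
    spacing shrinks with the cosine of latitude.
    """
    north_south = resolution_deg * KM_PER_DEG_LAT
    east_west = north_south * math.cos(math.radians(latitude_deg))
    return north_south, east_west


# Compare the two model resolutions at the latitude of the cold-core eddy.
for resolution in (1.0, 1.0 / 3.0):
    ns, ew = grid_spacing_km(resolution, 41.0)
    print(f"{resolution:.2f} deg: {ns:.0f} km N-S, {ew:.0f} km E-W at 41N")
```

At 1/3° the spacing comes out at a few tens of kilometres, comparable to the lower end of the 20-200 km eddy range quoted above, whereas a 1° grid spans most of a typical eddy with a single cell.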

The 1° resolution version of the isopycnic model has been run on 8-processor CRAY Y-MP supercomputers at both the Hadley Centre and at the Rutherford Appleton Laboratory (RAL), whilst the eddy-resolving model has been implemented solely at RAL. The former model typically runs on a single processor with a grid size of 126x104 points and 20 layers in the vertical, in which configuration it takes about 9 Mwords of core memory and 6 CPU hours for a single year of integration. The eddy-resolving model, however, has a grid size of 376x310 points (again with 20 layers in the vertical) and takes about 85 Mwords of core memory, nearly filling the machine. This means that this model is usually auto-tasked across all 8 processors, and speed-ups (compared with running on a single processor) of 5.5 - 6.0 times are typically obtained, giving a total speed of about 500-600 Mflops. The eddy-resolving model takes about 180 (single processor) CPU hours to complete a year of simulation. Full data dumps from the 1° model are usually obtained every month of simulation time, and require about 3 Mbytes of storage, whereas dumps from the eddy-resolving model are obtained every 12 days and require about 25 Mbytes each. To date, the 1° model has been run for a total of about 100 years, and the eddy resolving model for about 10 years.
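The resource figures above can be put side by side with a short calculation. This is a rough sketch using only the numbers quoted in the text; the helper names are illustrative, and the elapsed-time estimate assumes that the 180 CPU hours are single-processor work and that the quoted speed-up applies on a dedicated machine.

```python
# Figures quoted in the article; helper names are illustrative only.
PROCESSORS = 8


def grid_points(nx, ny, layers):
    """Total number of grid cells in the model domain."""
    return nx * ny * layers


def wall_clock_hours(cpu_hours, speedup):
    """Elapsed time if single-processor work is sped up by `speedup`."""
    return cpu_hours / speedup


coarse_points = grid_points(126, 104, 20)  # 1 degree model
eddy_points = grid_points(376, 310, 20)    # eddy-resolving model

point_ratio = eddy_points / coarse_points
memory_ratio = 85 / 9   # Mwords, as quoted
cpu_ratio = 180 / 6     # CPU hours per model year, as quoted

for speedup in (5.5, 6.0):
    hours = wall_clock_hours(180, speedup)
    efficiency = speedup / PROCESSORS
    print(f"speed-up {speedup}: {hours:.1f} h per model year, "
          f"parallel efficiency {efficiency:.0%}")

print(f"grid points: {coarse_points} vs {eddy_points} (x{point_ratio:.1f})")
print(f"memory x{memory_ratio:.1f}, CPU time x{cpu_ratio:.0f}")
```

Memory grows roughly in line with the number of grid points, while CPU time per model year grows considerably faster. A shorter time step at the finer resolution is one plausible contributor, although the article does not say so.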

Further details of the model and some of the results outlined above are contained in the two papers below, copies of which can be obtained from the author.

New, A.L., R.Bleck, Y.Jia, R.Marsh, M.Huddleston and S.Barnard, 1994. An isopycnic model study of the North Atlantic. Part 1: Model experiment and water mass formation. Submitted to Journal of Physical Oceanography.

New, A.L. and R.Bleck, 1994. An isopycnic model study of the North Atlantic. Part 2: Interdecadal variability of the subtropical gyre. Submitted to Journal of Physical Oceanography.